Update on GPU Optimizations in Galaxy S 4

by Anand Lal Shimpi & Brian Klug on July 31, 2013 10:11 AM EST

Posted in: Smartphones, Samsung, Android, Mobile, Benchmarks

Yesterday we posted our analysis of the Exynos 5 Octa's behavior in certain benchmarks on international versions of the Galaxy S 4, behavior first discovered by Beyond3D user @Andreif7. Samsung addressed the issue on their blog earlier today:

Under ordinary conditions, the GALAXY S4 has been designed to allow a maximum GPU frequency of 533MHz. However, the maximum GPU frequency is lowered to 480MHz for certain gaming apps that may cause an overload, when they are used for a prolonged period of time in full-screen mode. Meanwhile, a maximum GPU frequency of 533MHz is applicable for running apps that are usually used in full-screen mode, such as the S Browser, Gallery, Camera, Video Player, and certain benchmarking apps, which also demand substantial performance.

The maximum GPU frequencies for the GALAXY S4 have been varied to provide optimal user experience for our customers, and were not intended to improve certain benchmark results.

Samsung Electronics remains committed to providing our customers with the best possible user experience.

The blog seems to confirm our findings, that the 533MHz GPU frequency is available for certain benchmarks ("a maximum GPU frequency of 533MHz is applicable for running apps that are usually used in full-screen mode, such as the S Browser, Gallery, Camera, Video Player, and certain benchmarking apps"). The full screen statement doesn't make a ton of sense (both GLBenchmark 2.5.1 and 2.7.0 are full screen apps, but with different GPU behavior). Samsung claims however that a number of its first party apps (S Browser, Gallery, Camera and Video Player) can also run the GPU at up to 532MHz, which actually explains something else we saw while digging around.

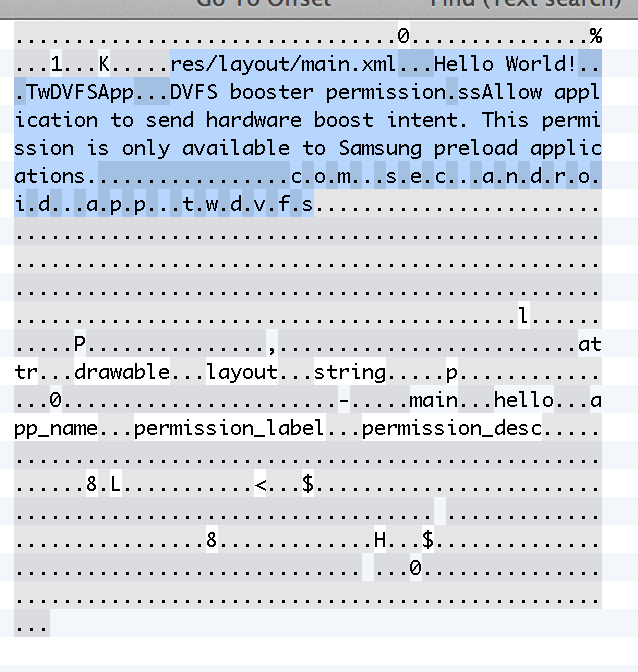

Looking at resources.arc inside TwDVFSApp.apk we find the following reference to pre-loaded Samsung apps:

This is what we originally assumed was happening with GLBenchmark 2.5.1: that it was broadcasting a boost intent to Samsung. Kishonti told us that wasn't the case when we asked.

As we mentioned in our original piece, there's a flag that's set whenever this boost mode is activated: /sys/class/thermal/thermal_zone0/boost_mode. None of the first party apps get that flag set, only the specific benchmarks we pointed out in the original article. Of those first party apps, S Browser, Gallery and Video Player all top out at a GPU frequency of 266MHz (which makes sense, as none of these apps is particularly GPU intensive). I tried running WebGL content in S Browser to confirm (I ran Aquarium with 500 fish), and also edited some photos in Gallery. In both cases 266MHz was the max observed GPU frequency.
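For anyone who wants to watch the flag themselves, here is a minimal sketch of reading it. The path is the one quoted above. That the node holds a plain integer is my assumption, and `read_boost_mode` is a hypothetical helper name rather than anything from Samsung's software.

```python
from pathlib import Path

# Path quoted in the article; it only exists on affected firmware.
BOOST_MODE_PATH = Path("/sys/class/thermal/thermal_zone0/boost_mode")


def read_boost_mode(path=BOOST_MODE_PATH):
    """Return the flag as an int, or None if the node is missing or unparsable.

    Assumption: the node contains a plain integer such as 0 or 1.
    """
    try:
        text = Path(path).read_text().strip()
    except OSError:
        # Node absent or not readable (for example, on other devices).
        return None
    try:
        return int(text)
    except ValueError:
        return None
```

Calling `read_boost_mode()` from a shell on the device while a benchmark or a first party app is in the foreground would be one way to repeat the comparison described above.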

| Max Observed GPU Frequency | S Browser | Gallery | Video Player | Camera | Modern Combat 4 | AnTuTu |
|---|---|---|---|---|---|---|
| Samsung GT-I9500 | 266MHz | 266MHz | 266MHz | 532MHz | 480MHz | 532MHz |

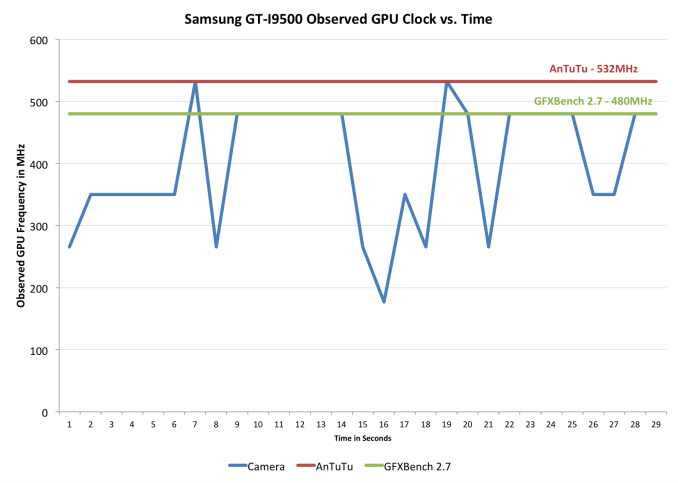

The camera app on the other hand is a unique example. Here we see short blips up to 532MHz if you play around with the live filters aggressively (I just quickly tapped between enabling all of the filters, the rugged/oil pastel/fish eye filters seemed to be the more likely to trigger a 532MHz excursion). I never saw 532MHz for more than a second though. The chart below plots GPU frequency vs. sampling time to illustrate what I saw there:

What appears to be happening here is the boost routine grants access to raised thermal limits, which makes it possible to sustain the 532MHz GPU frequency for the duration of certain benchmarks. Since the camera app doesn't get the boost mode flag, it doesn't seem to sustain 532MHz - only 480MHz.
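The difference between a brief excursion and a sustained clock can be made concrete with a small sketch. The traces and the 0.5 second sampling interval below are made-up illustrative values, not our measurements, and `longest_run_seconds` is a hypothetical helper.

```python
from itertools import groupby


def longest_run_seconds(samples_mhz, target_mhz, interval_s):
    """Longest continuous time spent at target_mhz, given evenly spaced samples."""
    longest = 0
    # groupby collapses consecutive equal samples into runs.
    for value, run in groupby(samples_mhz):
        if value == target_mhz:
            longest = max(longest, sum(1 for _ in run))
    return longest * interval_s


# Hypothetical traces sampled every 0.5 s (illustrative only).
camera_trace = [266, 480, 532, 480, 480, 532, 480, 266]
benchmark_trace = [532] * 8

camera_peak = max(camera_trace)
camera_sustained = longest_run_seconds(camera_trace, 532, 0.5)
benchmark_sustained = longest_run_seconds(benchmark_trace, 532, 0.5)
```

Both traces share the same 532MHz peak, which is why a max observed frequency alone can't separate the two cases. The length of the longest run at that frequency can.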

Otherwise the Samsung response is consistent with our findings and we're generally in agreement. Games aren't given access to the 532MHz GPU frequency, while certain benchmarks are. Samsung's pre-loaded apps can send boost intent to the DVFS (dynamic voltage and frequency scaling) controller, but they don't appear to be given the same thermal boost ability as we see in the benchmarks. Their reasoning for not giving games access to the higher frequency makes sense as well. Higher frequencies typically require higher voltage to reach, and power scales quadratically with voltage so that's typically not the best way of increasing performance - especially at the limits of one's frequency/voltage curve. In short, you wouldn't want to for thermal (and battery life) reasons. The debate is ultimately about what happens within those specified benchmarks.

Note that we're ultimately talking about an optimization that would increase GPU performance in certain benchmarks by around 10%. It doesn't sound like a whole lot, but in a very thermally limited scenario it's likely the best you can do.
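Both the roughly 10% figure and the voltage argument can be checked with back-of-envelope arithmetic. The sketch below assumes the usual CMOS approximation that dynamic power is proportional to C * V^2 * f. The two voltages are hypothetical placeholders, not values from Samsung or from our measurements.

```python
def dynamic_power_ratio(f_low_mhz, f_high_mhz, v_low, v_high):
    """Ratio of dynamic power at the high operating point to the low one.

    Uses the approximation P ~ C * V^2 * f; the capacitance C cancels out.
    """
    return (v_high / v_low) ** 2 * (f_high_mhz / f_low_mhz)


# Frequencies from the article: 480MHz for games, 532MHz for boosted benchmarks.
freq_uplift = 532 / 480

# Hypothetical voltages, purely for illustration.
V_AT_480 = 1.00
V_AT_532 = 1.10

power_ratio = dynamic_power_ratio(480, 532, V_AT_480, V_AT_532)
```

With these placeholder voltages, an 11% clock increase costs roughly a third more dynamic power. That is the kind of trade you would accept for a short benchmark run but not for a long gaming session.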

I stand by the right solution here being to either allow the end user to toggle this boost mode on/off (for all apps) or to remove the optimization entirely. I suspect the reason why Samsung wouldn't want to do the former is because you honestly don't want to run in a less thermally constrained mode for extended periods of time in a phone. Long term I'm guessing we'll either see the optimization removed or we'll see access to view current GPU clock obscured. I really hope it's not the latter as we as an industry need more insight into what's going on underneath the hood of our mobile devices, not less. Furthermore, I think this also highlights a real issue with the way DVFS management is done presently in the mobile space. Software is absolutely the wrong place to handle DVFS, it needs to be done in hardware.

Since our post yesterday we've started finding others who exhibit the same CPU frequency behavior that we reported on the SGS4. This isn't really the big part of the discovery since the CPU frequencies offered are available to all apps (not just specific benchmarks). We'll be posting our findings there in the near future.

38 Comments

jleach1 - Wednesday, August 7, 2013

You're being extremely naive. The vast majority of companies HAVE done this AT SOME POINT IN TIME, most of the time until they were caught. Key word: caught. Companies to this day design their hardware around benchmarks, or they might even attempt to release their own benchmarking software.

I'd bet my sister that most are doing this in far more subtle and far more undetectable ways.

Kirus93x - Thursday, September 19, 2013

So you're saying that because their device is programmed not to use its GPU to its full potential when it's not needed, that's a bad thing? Are you that dense? It's not to make their device look better, it's to save the user battery life.

Krysto - Wednesday, July 31, 2013

Isn't what Samsung is doing pretty much what Intel is doing with the Turbo-Boost activated in benchmarks, too?

BillyONeal - Wednesday, July 31, 2013

No, turbo does not favor anyone. It just allows the CPU to use unused thermal headroom.

Dman23 - Wednesday, July 31, 2013

"Under ordinary conditions, the GALAXY S4 has been designed to allow a maximum GPU frequency of 533MHz." This is absolute bollocks. CLEARLY, after these two postings / findings by Anand and Brian, they are cherry-picking which apps get access to this higher "boost-mode". This might as well be called "cheat-mode" because it is not something all apps can use if the thermal and battery requirements are there, and it seems to target mostly benchmarking apps (i.e. GLBenchmark, Quadrant, and AnTuTu) and superfluous apps such as their camera app, gallery app, and video player, mostly because most people wouldn't test to see if there were any shenanigans going on in these stock apps.

Kudos to AnandTech for finding these shady engineering tactics and exposing them to the general public. I don't care which company has done this, if a company is engineering ways to cheat / boost their results on benchmark tests, they need to be ridiculed and shamed regardless of how prevalent it is. And if this company is cherry-picking only certain apps to have access to this type of higher GPU frequency-mode, then they should be even more ridiculed and shamed.

BC2009 - Thursday, August 1, 2013

Don't forget that the switching from the A7 cores to the A15 cores is also not instantaneous. The normal thing would be for the increased load from a benchmark app to eventually push the device to its power-hungry A15 cores. However, Samsung locked the device into using those CPU cores from the get-go and refused to let it drop to the A7 cores.

The big problem with ARM A15 cores is that they were designed for higher power consumption and not for mobile. The big.LITTLE config with the A7 cores is there to mitigate that and it is part of the real-world performance profile of the device. Samsung has made it so the CPU benchmarks strictly use the A15 cores, which is very misleading (in addition to over-clocking the GPU).

Kevin G - Wednesday, July 31, 2013

Turbo doesn't take input from specific applications/OS calls. Intel's CPUs do not increase their clock speeds when a specific application is run.

Thermal level, power consumption and thread load are the three metrics which determine what frequency the chip runs at with turbo.

Chloiber - Wednesday, July 31, 2013

The difference is that turbo boost can potentially be used by every application. It basically says "If you have thermal headroom, use the boost." And it is done in hardware.

Samsung on the other hand only allows that boost for benchmarks, which is bad.

And they should feel bad...

jameskatt - Thursday, August 1, 2013

Samsung is never going to admit wrongdoing. To do so loses face. They would have to admit they are cheating. They have less shame than Lance Armstrong. So Samsung is never going to admit wrongdoing.

name99 - Wednesday, July 31, 2013

I guess you missed the single MOST IMPORTANT point of the post above:

"Furthermore, I think this also highlights a real issue with the way DVFS management is done presently in the mobile space. Software is absolutely the wrong place to handle DVFS, it needs to be done in hardware."

Doing fine-grained power management scaling in HW is absolutely the correct way to do things. It is more responsive, it allows access to more information about whatever the relevant parameters are (current budget, thermals,...), it can't be borked by a broken driver, etc etc.

It will be interesting (though will we ever know?) to see how rapidly Apple goes down this path. They have the total control which allows them to decide whether they want to make the tradeoff in HW vs SW, and my guess is they will want to move to HW ASAP (gated, of course, by their current practical concerns --- getting to 64bit soon, and figuring out where the thing will be fabbed and what that implies going forward).