Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST. Posted in Storage, SSDs, Samsung, TLC, Samsung SSD 840

Performance Consistency

In our Intel SSD DC S3700 review I introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result needed some additional testing to show that. The reason we don't have consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying cleanup can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

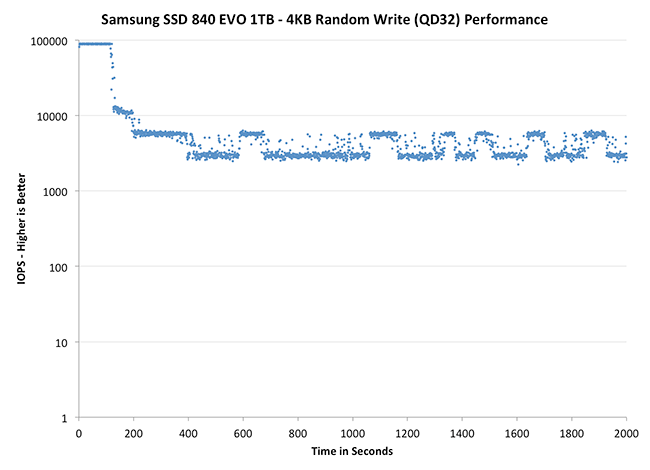

To generate the data below I took a freshly secure erased SSD and filled it with sequential data. This ensures that all user accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as we run our steady state tests, but enough to give me a good look at drive behavior once all spare area filled up.
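To make the shape of that workload concrete, here is a minimal Python sketch. It is not the tool used for this review: it assumes a scratch file stands in for the drive, it issues writes synchronously (queue depth 1 rather than 32), and the function names `sequential_fill` and `random_write_pass` are made up for illustration. `os.urandom` supplies the incompressible payloads.

```python
import os
import random

BLOCK = 4096  # 4KB transfer size, matching the test described above


def sequential_fill(path, span_bytes):
    """Fill the target with sequential data so every block holds something."""
    with open(path, "wb") as f:
        for _ in range(span_bytes // BLOCK):
            f.write(os.urandom(BLOCK))


def random_write_pass(path, span_bytes, n_writes, seed=0):
    """Issue n_writes 4KB writes at random 4KB-aligned offsets.

    Assumption: synchronous writes to a regular file, so this only
    illustrates the access pattern, not the queue depth or raw-device I/O.
    Returns the list of offsets that were written.
    """
    rng = random.Random(seed)
    n_blocks = span_bytes // BLOCK
    offsets = []
    with open(path, "r+b") as f:
        for _ in range(n_writes):
            offset = rng.randrange(n_blocks) * BLOCK
            f.seek(offset)
            f.write(os.urandom(BLOCK))  # random bytes do not compress
            offsets.append(offset)
    return offsets
```

Pointing something like this at a real device would destroy its contents, which is why the sketch sticks to a scratch file.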

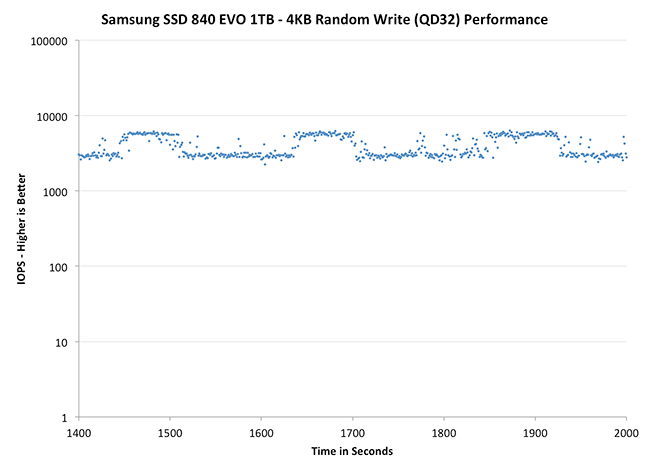

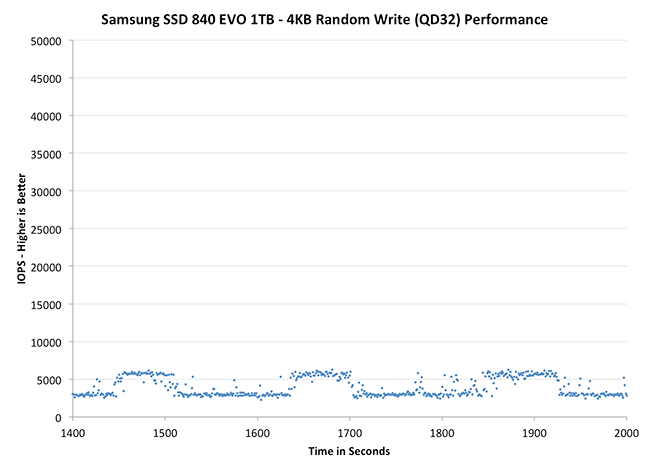

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
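For readers who want to build a similar series from their own logs, a rough sketch of the per-second bucketing follows. It assumes you have a list of I/O completion timestamps in seconds since the start of the test; the function name `iops_per_second` is hypothetical and this is not the tooling behind the graphs here.

```python
from collections import Counter


def iops_per_second(completion_times, duration_s):
    """Bucket completion timestamps into one-second bins.

    completion_times: seconds since test start, one entry per finished I/O.
    Returns a list with one IOPS value per second of the test; seconds in
    which nothing completed are reported as 0.
    """
    counts = Counter(int(t) for t in completion_times if 0 <= t < duration_s)
    return [counts.get(second, 0) for second in range(duration_s)]
```

The resulting list can be plotted against its index as a scatter plot, with a log or linear y-axis as described above.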

The high level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, I did vary the percentage of the drive that I filled/tested depending on the amount of spare area I was trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but other controllers may not behave the same way.
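The partition arithmetic can be sketched in a few lines of Python. This assumes spare area is expressed as a fraction of total NAND capacity, and the 256GiB figure in the usage note is only an illustrative input; both helper names are hypothetical.

```python
GIB = 1024 ** 3


def spare_fraction(nand_bytes, user_bytes):
    """Spare area as a fraction of total NAND (assumed definition)."""
    return (nand_bytes - user_bytes) / nand_bytes


def partition_bytes_for_spare(nand_bytes, target_spare):
    """Largest partition size that leaves target_spare of the NAND unused."""
    if not 0 <= target_spare < 1:
        raise ValueError("target_spare must be in [0, 1)")
    return int(nand_bytes * (1 - target_spare))
```

For example, `partition_bytes_for_spare(256 * GIB, 0.25)` gives a 192GiB partition, leaving a quarter of the NAND as spare area.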

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
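A simplified model shows why write amplification climbs once free blocks run out. Assume every block the controller reclaims still holds the same number of valid pages: those pages must be copied elsewhere before the block can be erased, so only the remainder is available for new host data. Real controllers are far more sophisticated; the function below is an illustrative sketch with made-up names, not a description of any drive's firmware.

```python
def write_amplification(pages_per_block, valid_pages):
    """NAND writes per host write under a uniform-validity model.

    Reclaiming one block costs valid_pages copy writes and yields
    (pages_per_block - valid_pages) pages for host data, so
    WA = pages_per_block / (pages_per_block - valid_pages).
    """
    if not 0 <= valid_pages < pages_per_block:
        raise ValueError("valid_pages must be in [0, pages_per_block)")
    return pages_per_block / (pages_per_block - valid_pages)
```

With 256 pages per block, reclaiming empty blocks gives a write amplification of 1.0, while blocks that are still three quarters valid (192 pages) give 4.0. More spare area lowers the valid fraction of reclaimed blocks, which is why it helps.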

The second set of graphs zooms in to the beginning of steady state operation for the drive (t=1400s). The third set also looks at the beginning of steady state operation but on a linear performance scale. Click the buttons below each graph to switch source data.
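If you have a per-second IOPS series of your own, isolating the steady state window is a simple slice. The sketch below assumes one sample per second indexed from the start of the test and uses the t=1400s starting point mentioned above; `steady_state_summary` is a hypothetical helper, not part of our test suite.

```python
import statistics


def steady_state_summary(iops, start_s=1400):
    """Summarize the samples from start_s onward (one sample per second)."""
    window = iops[start_s:]
    if not window:
        raise ValueError("no samples at or after start_s")
    return {
        "min": min(window),
        "max": max(window),
        "mean": statistics.fmean(window),
        "median": statistics.median(window),
    }
```

The minimum is the number to watch for consistency: two drives with the same mean can feel very different if one of them regularly dips far below it.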

[Interactive graphs, first set: IOPS vs. time over the entire test period, log scale. Drives: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default.]

Thanks to the EVO's higher default overprovisioning, you actually get better consistency out of the EVO than the 840 Pro out of the box. Granted, you can get similar behavior out of the Pro if you simply don't use all of the drive. The big comparison is against Crucial's M500, where the EVO does a bit better. SanDisk's Extreme II, however, remains the better performer from an IO consistency perspective.

[Interactive graphs, second set: IOPS vs. time at the beginning of steady state, log scale. Drives: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default.]

[Interactive graphs, third set: IOPS vs. time at the beginning of steady state, linear scale. Drives: Crucial M500 960GB, Samsung SSD 840 EVO 1TB, Samsung SSD 840 EVO 250GB, SanDisk Extreme II 480GB, Samsung SSD 840 Pro 256GB. Spare area: Default.]

Zooming in, we see very controlled and frequent garbage collection patterns on the 1TB drive, something we don't see in the 840 Pro. The 250GB drive looks a bit more like a clustered random distribution of IOs, but minimum performance is still much better than on the 840 Pro with its standard overprovisioning.

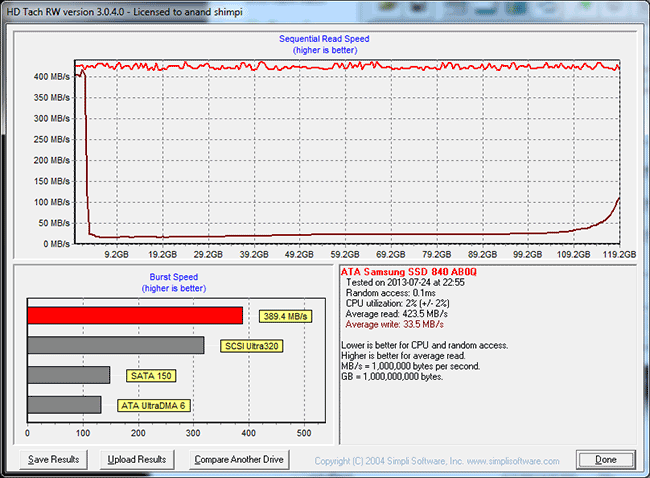

TRIM Validation

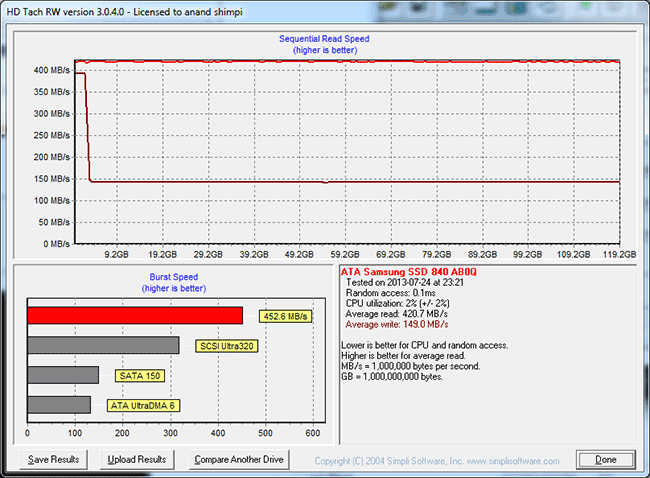

Our performance consistency test actually replaces our traditional TRIM test in terms of looking at worst case scenario performance, but I wanted to confirm that TRIM was functioning properly on the EVO so I dusted off our old test for another go. The test procedure remains unchanged: fill the drive with sequential data, run a 4KB random write test (QD32, 100% LBA range) for a period of time (30 minutes in this case) and use HDTach to visualize the impact on write performance:

Minimum performance drops down to around 30MB/s, eugh. Although the EVO can be reasonably consistent, you'll still want to leave some free space on the drive to ensure that performance always stays high (I recommend 15-25% if possible).

A single TRIM pass (quick format under Windows 7) fully restores performance as expected:

The short period of time at 400MB/s is just TurboWrite doing its thing.

137 Comments


verjic - Thursday, February 13, 2014 - link

I'm talking about the 120GB version.

verjic - Thursday, February 13, 2014 - link

Also, what are Write/Read IOMeter Bootup and Write/Read IOMeter IOMix, and what do their speeds mean? Thank you.

AhDah - Thursday, May 15, 2014 - link

The TRIM validation graph shows a tremendous performance drop after a few gigs of writes. Even after a TRIM pass, the write speed is only 150MBps. Does this mean that once the drive is 75%-85% filled up, the write speed will always be slow?

I'm tempted to get the Crucial M550 because of this downfall.

njwhite2 - Wednesday, October 15, 2014 - link

Kudos to Anand Lal Shimpi! This is one of the finest reviews I have ever read! No jargon. No unexplained acronyms. Quantitative testing of compared items instead of reviewer bias. Explanation of why the measured criteria are important to the end user! Just fabulous! I read dozens of reviews each week, so I'm surprised I had not stumbled upon Anandtech before. I'm (for sure) going to check out their smartphone reviews. Most of those on other sites are written by Apple fans or Android fans and really don't tell the potential purchaser what they need to know to make the best choice for them.

IT_Architect - Thursday, October 22, 2015 - link

I would be interested in how reliable they are. The reason I ask is that one time, when the Intel SLC technology was just under two years old and there was no MLC or TLC, I needed speed to load a database from scratch 6 times an hour during incredible traffic times. I was getting requests by users at the rate of 66 times a second per server, each of which required many reads of the database. I couldn't swap databases without breaking sessions, and mirror and unmirror did not work well. I would have to pay a ton to duplicate a redundant array in SSDs. Then I asked the data center how many of these drives they had out there. They (SoftLayer) queried and came back with 700+. Then I asked them how many they've had go bad. They queried their records and it was none, not so much as a DOA. I reasoned from that I would be just as likely to have a chassis or disk controller go bad. None of them have any moving parts, and the drives are low power. Those were enterprise drives of course because that's all there was at that time.

In 2011 I bought a Dell M6600. Dell was shipping them with the Micron SSD. I was concerned about the lifespan, and I do a lot of reading and writing with it and work constantly with virtual machines while prototyping, and VM files are huge. It calculated out to 4 years. While researching, I came across that situation where Dell had "cold feet" about OEMing them due to lifespan. Micron/Intel demonstrated to them 10x the rated lifespan, which convinced Dell. There was plenty of other trouble with consumer-level SSDs at the time, which gave the technology a bad name. The Micron/Intel was one of the very few solid citizens at the time. I went with it, although I didn't buy my M6600 with it because Dell had such a premium on them.

I had two problems with the drive, which by the way is still in service today. The first was the drive just stopped doing anything one day. I called Micron and it turned out to be a bug in the firmware. If I had two drives arrayed, it would have stopped both at the same time. I upgraded the firmware and never had that problem again. The next time, I was troubleshooting the laptop and putting the battery in and out, and the computer would no longer boot. I again called Micron. It was by design. They said disconnect the power, pull the battery, and wait one hour. I did, and it has worked perfectly since. If I had an array, it would have stopped both at the same time.

Today, the market is much more mature and the technology no longer has a bad name. A redundant array is no substitute for a backup anyway. A redundant array brings business continuity and speed. Are we just as likely or more so to have a motherboard go out? We don't have redundant motherboards without having another entire computer. Unlike power supplies and CPUs, SSDs are low-current devices. I'm considering the possibility that we may be at the point, even for consumer-level drives, where redundant arrays for SSDs are just plain silly.

Gothmoth - Sunday, January 8, 2017 - link

In real life my RAPID test showed no benefits AT ALL!! All it does is make low-level benchmarks look better.

you should test with real applications. RAPID is a useless feature.

jeyjey - Friday, June 7, 2019 - link

I have one of these drives. I need to find a small part that burned out so I can replace it and try to access the data inside. Please help.