Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST

Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

Endurance

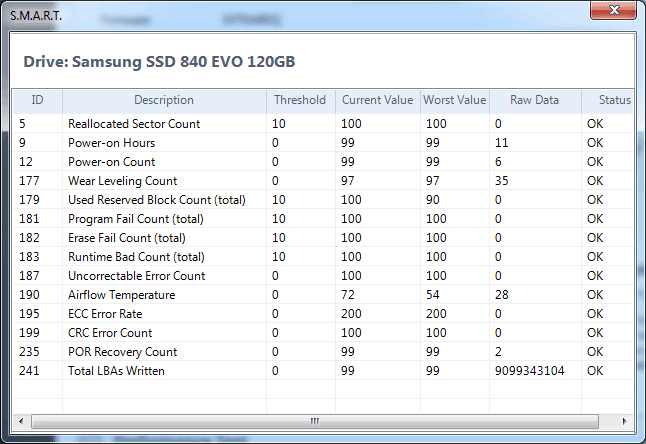

Samsung isn't quoting any specific TB written values for how long it expects the EVO to last, although the drive comes with a 3-year warranty. Samsung doesn't explicitly expose total NAND writes in its SMART details, but we do get a wear level indicator (SMART attribute 177). The wear level indicator starts at 100 and decreases linearly down to 1 from what I can tell. At 1 the drive will have exceeded all of its rated p/e cycles, but in reality the drive's total endurance can significantly exceed that value.

Kristian calculated around 1000 p/e cycles using the wear level indicator on his 840 sample last year or roughly 242TB of writes, but we've seen reports of much more than that (e.g. this XtremeSystems user who saw around 432TB of writes to a 120GB SSD 840 before it died). I used Kristian's method of mapping sequential writes to the wear level indicator to determine the rated number of p/e cycles on my 120GB EVO sample:

| Samsung SSD 840 EVO Endurance Estimation | Samsung SSD EVO 120GB |
| --- | --- |
| Total Sequential Writes | 4338.98 GiB |
| Wear Level Counter Decrease | -3 (raw value = 35) |
| Estimated Total Writes | 144632.81 GiB |
| Estimated Rated P/E Cycles | 1129 cycles |
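The extrapolation behind the table can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not Samsung's or Kristian's actual tooling: the function name and the `indicator_span` parameter are hypothetical, and it assumes the wear level indicator covers 100 points over the drive's rated life, which is what the table's figures imply. Because the inputs are rounded, the result lands within a fraction of a GiB of the table's total rather than matching it exactly.

```python
def estimate_rated_pe_cycles(seq_writes_gib, indicator_drop,
                             nand_capacity_gib, indicator_span=100):
    """Extrapolate rated P/E cycles from a small drop in the wear indicator.

    indicator_span=100 is an assumption: the indicator is treated as
    covering 100 points over the drive's rated life.
    Returns (estimated total writes in GiB, estimated rated P/E cycles).
    """
    if indicator_drop <= 0:
        raise ValueError("the indicator must have dropped by at least one point")
    writes_per_point = seq_writes_gib / indicator_drop    # GiB per indicator point
    total_writes_gib = writes_per_point * indicator_span  # GiB over the rated life
    pe_cycles = total_writes_gib / nand_capacity_gib      # full-drive write passes
    return total_writes_gib, pe_cycles


# Values from the table: 4338.98 GiB written, indicator down 3, 128 GiB of NAND.
total_gib, cycles = estimate_rated_pe_cycles(4338.98, 3, 128)
print(f"Estimated total writes: {total_gib:.2f} GiB")
print(f"Estimated rated P/E cycles: {int(cycles)}")
```

Truncating the cycle count to a whole number gives the 1129 figure used in the rest of this section.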

Using the 1129 cycle estimate (which is an improvement compared to last year's 840 sample), I put together the table below to put any fears of endurance to rest. I even upped the total NAND writes per day to 50 GiB just to be a bit more aggressive than the typically quoted 10 - 30 GiB for consumer workloads:

| Samsung SSD 840 EVO Estimated Lifespan vs. Capacity | 120GB | 250GB | 500GB | 750GB | 1TB |
| --- | --- | --- | --- | --- | --- |
| NAND Capacity | 128 GiB | 256 GiB | 512 GiB | 768 GiB | 1024 GiB |
| NAND Writes per Day | 50 GiB | 50 GiB | 50 GiB | 50 GiB | 50 GiB |
| Days per P/E Cycle | 2.56 | 5.12 | 10.24 | 15.36 | 20.48 |
| Estimated P/E Cycles | 1129 | 1129 | 1129 | 1129 | 1129 |
| Estimated Lifespan in Days | 2890 | 5780 | 11560 | 17341 | 23121 |
| Estimated Lifespan in Years | 7.91 | 15.83 | 31.67 | 47.51 | 63.34 |
| Estimated Lifespan in Years @ 100 GiB of Writes per Day | 3.95 | 7.91 | 15.83 | 23.75 | 31.67 |
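For anyone who wants to plug in their own daily write volume, the table's arithmetic can be reproduced with a short Python sketch. The function and constant names are hypothetical, and the 365-day year is an assumption that happens to be consistent with the table's figures. The table appears to truncate rather than round, so printed values may differ from it in the last digit.

```python
DAYS_PER_YEAR = 365  # assumption: consistent with the table's year figures


def lifespan_days(nand_capacity_gib, nand_writes_gib_per_day, pe_cycles):
    """Days until the rated P/E cycles are used up at a constant write rate."""
    days_per_cycle = nand_capacity_gib / nand_writes_gib_per_day
    return days_per_cycle * pe_cycles


# NAND capacities from the table, keyed by marketed drive capacity.
capacities_gib = {"120GB": 128, "250GB": 256, "500GB": 512,
                  "750GB": 768, "1TB": 1024}

for name, capacity in capacities_gib.items():
    days_50 = lifespan_days(capacity, 50, 1129)
    days_100 = lifespan_days(capacity, 100, 1129)
    print(f"{name}: {days_50:.0f} days, "
          f"{days_50 / DAYS_PER_YEAR:.2f} years at 50 GiB/day, "
          f"{days_100 / DAYS_PER_YEAR:.2f} years at 100 GiB/day")
```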

Endurance scales linearly with NAND capacity, and the worst case scenario at 50 GiB of writes per day is just under 8 years of constant write endurance. Keep in mind that this assumes a write amplification of 1; if you're doing 50 GiB of 4KB random writes you'll blow through this a lot sooner. For a client system, however, you're probably looking at something much lower than 50 GiB per day of total writes to NAND, random IO included.
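To see how much the write amplification assumption matters, here is a rough Python sketch that scales host writes by a write amplification factor before estimating lifespan. The function name is hypothetical and the factors 2 and 5 are purely illustrative, not measurements of the EVO. It again assumes 1129 p/e cycles and a 365-day year.

```python
def lifespan_years(nand_capacity_gib, host_writes_gib_per_day,
                   pe_cycles, write_amplification=1.0):
    """Estimated lifespan in years once host writes are amplified on NAND."""
    # NAND sees more data than the host sends when write amplification > 1.
    nand_writes_per_day = host_writes_gib_per_day * write_amplification
    return nand_capacity_gib * pe_cycles / nand_writes_per_day / 365


# 120GB EVO (128 GiB of NAND) at 50 GiB of host writes per day.
for wa in (1.0, 2.0, 5.0):  # illustrative factors only
    years = lifespan_years(128, 50, 1129, wa)
    print(f"write amplification {wa:.0f}x: about {years:.1f} years")
```

Lifespan falls in direct proportion to the factor, so doubling write amplification halves the estimate.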

I also threw in a line of lifespan estimates at 100 GiB of writes per day. It's only in this configuration that we see the 120GB drive drop below 4 years of endurance, again based on a conservative p/e estimate. Even with 100 GiB of NAND writes per day, once you get beyond the 250GB EVO we're back into absolutely ridiculous endurance estimates.

Keep in mind that all of this is based on 1129 p/e cycles, which is likely less than half of the practical p/e cycle limit of Samsung's 19nm TLC NAND. Go ahead and double those numbers and you're probably looking at reality. Endurance isn't a concern for client systems using the 840 EVO.

137 Comments


Grim0013 - Sunday, July 28, 2013

I wonder what, if anything, the impact of Turbo Write is on drive endurance, as in, does the SLC buffer have the effect of "shielding" the TLC from some amount of write amplification (WA)? More specifically, I was thinking that in the case of small random writes (high WA), many of them would be going to the SLC first, then when the data is transferred to the TLC, I wonder if the buffering affords the controller the opportunity to write the data in such a way as to reduce WA on the TLC?

In fact, I wonder if that is something that is done...if the controller is able to characterize certain types of files as being likely to be frequently modified then just keep them in the SLC semi-permanently. Stuff like the page file and other OS stuff that is constantly modified...I'm not very well-versed on this stuff so I'm just guessing. It just seems like taking advantage of SLC's crazy p/e endurance in addition to its speed could really help make these things bulletproof.

shodanshok - Sunday, July 28, 2013

Yea, I was thinking the same thing. After all, Sandisk already did it on the Ultra Plus and Ultra II SSDs: they have a small pseudo-SLC zone used both for greater performance and reducing WA.

shodanshok - Sunday, July 28, 2013

I am not so excited about RAPID: data integrity is a delicate thing, so I am not so happy to trust Samsung (or others) replacing the key, well-tested caching algorithm natively built into the OS.

Anyway, Windows' write caching is not so quick because the OS, by default, flushes its in-memory cache each second. Moreover, it normally issues a barrier event to flush the disk's DRAM cache. This last behavior can be disabled, but the flush of the in-memory cache cannot be changed, as far as I know.

Linux, on the other hand, uses a much more aggressive caching policy: it issues an in-memory cache (pagecache) flush every 30 seconds, and it aggressively tries to coalesce multiple writes into a single transaction. This parameter is configurable using the /proc interface. Moreover, if you have a BBU or power-tolerant disk subsystem, you can even disable the barrier instruction normally issued to the disk's DRAM cache.

Timur Born - Sunday, July 28, 2013

My Windows 8 setup uses pretty much exactly 1 GB of RAM for write caching, regardless of whether it's writing to a 5400 rpm 2.5" HD, 5400 rpm 3.5" HD or Crucial M4 256 GB SSD. That's exactly the size of the RAPID cache. The "flush its cache each second" part becomes a problem when the source and destination are on the same drive, because once Windows starts writing the disk queue starts to climb.

But even then it should mostly be a problem for spinning HDs that don't really like higher queue numbers. Even more so when you copy multiple files via Windows Explorer, which reads and writes files concurrently even on spinning HDs.

So I wonder if RAPID's only real advantage is its ability to coalesce multiple small writes into single big ones for durations longer than one second?!

Timur Born - Sunday, July 28, 2013

By the way, my personal experience is that CPU power saving features, as set up in both the default "Balanced" and the "High Performance" power profiles, have far more of an impact on SSD performance than caching stuff. I can up my M4's 4K random performance by 60% and more just by setting CPU power savings to be less aggressive (or off).

shodanshok - Monday, July 29, 2013

If I remember correctly, Windows uses at most 1/8 of total RAM size for write caching. How much RAM do you have?

Timur Born - Tuesday, July 30, 2013

8 GB, so you may be correct. Or you may be mixing it up with the 1/8 of the dirty cache that Windows flushes every second. Or both may be 1/8. ;-)

zzz777 - Monday, July 29, 2013

I'm interested in caching writes to a RAM disk and then to storage. This reminds me of the concept of a write-back cache: for almost everyone, the possibility of data corruption is so low that there's no reason not to enable it. Can this SSD RAM disk write quickly enough that home users also don't have to worry about using it? Beyond that, I'm not a normal home user: I want to see benchmarks for virtualization, and I want the quickest way to create, modify and test a VM before putting it on front-line hardware.

Wwhat - Monday, July 29, 2013

For me, I still say: I'd rather go for the Pro version.

andreaciri - Thursday, August 1, 2013

I have to decide whether to buy an 840 now, or an EVO when it becomes available, for my MacBook. Considering that RAPID technology is only supported under Windows, and that I'm more interested in read performance than write, is the 840 a good choice?