NVIDIA Demonstrates Logan SoC: < 1W Kepler, Shipping in 1H 2014, More Energy Efficient than A6X?

by Anand Lal Shimpi on July 24, 2013 9:00 AM EST

Power Consumption

There's a lot of uncertainty around whether or not Kepler is suitable for ultra low power operation, especially given that we've only seen it in PCs with relatively high TDPs (compared to tablets and smartphones). NVIDIA hoped to put those concerns to rest with a quick GLBenchmark 2.7 demo at Siggraph. The demo pitted an iPad 4 against a Logan development platform, with Logan's Kepler GPU clocked low enough to equal the performance of the iPad 4. The low clock speed does give Kepler an advantage, since it also lets the GPU run at a lower voltage, so the comparison is definitely one you'd expect NVIDIA to win.
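As a back-of-the-envelope illustration of why the lower clock helps, here is the textbook CMOS dynamic-power approximation. This is our addition, not anything NVIDIA presented; NVIDIA didn't disclose Logan's clocks or voltages. Here α is the switching activity factor, C the switched capacitance, V the supply voltage, f the clock frequency, and k (0 < k ≤ 1) a common scaling factor applied to both voltage and frequency:

```latex
P_{\text{dyn}} \approx \alpha \, C \, V^{2} f
\qquad\Longrightarrow\qquad
P_{\text{dyn}}(kV,\, kf) \approx \alpha \, C \, (kV)^{2} (kf) = k^{3} \, P_{\text{dyn}}(V,\, f)
```

For example, cutting both clock and voltage by 20% (k = 0.8) leaves about 0.8^3 ≈ 0.51 of the original dynamic power for roughly 80% of the throughput, assuming performance tracks clock speed. In practice voltage can't keep falling with frequency below some floor, and leakage power doesn't scale this way, so the cube law is an optimistic simplification.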

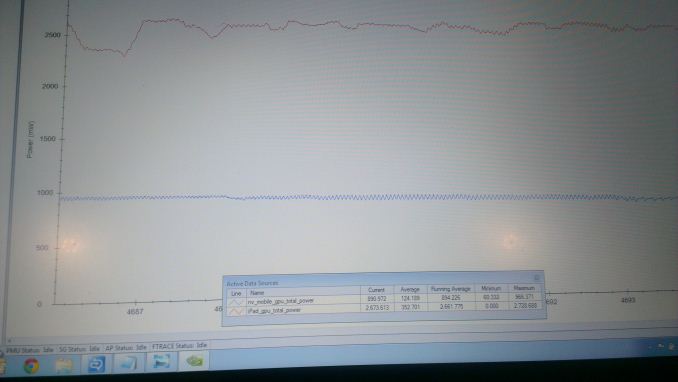

Unlike Tegra 3, Logan includes a single voltage rail that feeds just the GPU. NVIDIA instrumented this voltage rail and measured power consumption while running the offscreen 1080p T-Rex HD test in GLB2.7. Isolating GPU power alone, NVIDIA measured around 900mW for Logan's Kepler implementation running at iPad 4 performance levels (potentially as little as 1/5 of Logan's peak performance). NVIDIA also attempted to find and isolate the GPU power rail going into Apple's A6X (using an approach similar to the one we've documented previously), and came up with an average GPU power value of around 2.6W.
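Taking NVIDIA's figures at face value (with the caveat that it's unclear what else, if anything, shares Apple's GPU rail), equal performance means the perf-per-watt advantage is simply the ratio of the two power numbers. A minimal sketch of that arithmetic; the function name is hypothetical, for illustration only:

```python
# Figures quoted in the article: GPU-rail power measured by NVIDIA while both
# devices ran GLBenchmark 2.7 T-Rex HD (offscreen 1080p) at matched performance.
LOGAN_GPU_W = 0.9  # ~900 mW, Logan's Kepler clocked down to iPad 4 performance
A6X_GPU_W = 2.6    # ~2.6 W average, NVIDIA's estimate for the A6X GPU rail


def perf_per_watt_advantage(power_a_w: float, power_b_w: float) -> float:
    """Hypothetical helper: at equal performance, perf/W of A relative to B
    is just power_b / power_a."""
    if power_a_w <= 0 or power_b_w <= 0:
        raise ValueError("power figures must be positive")
    return power_b_w / power_a_w


ratio = perf_per_watt_advantage(LOGAN_GPU_W, A6X_GPU_W)
print(f"Logan perf/W vs A6X: {ratio:.2f}x")  # prints "Logan perf/W vs A6X: 2.89x"
```

In other words, if the two rails really are comparable, Logan would be doing the same work for roughly a third of the GPU power.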

I won't focus too much on the GPU power comparison as I don't know what else (if anything) Apple hangs off of its GPU power rail, but the most important takeaway here is that Kepler seems capable of scaling down to below 1W. In reality NVIDIA wouldn't ship Logan with a < 1W Kepler implementation, so we'll likely see higher performance (and power consumption) in shipping devices. If these numbers are believable, you could see roughly 2x the performance of an iPad 4 in a Logan based smartphone, and 4 - 5x the performance of an iPad 4 in a Logan tablet - in as little as 12 months from now if NVIDIA can ship this thing on time.

If NVIDIA's A6X power comparison is truly apples-to-apples, then it would be a huge testament to the power efficiency of NVIDIA's mobile Kepler architecture. Given the recent announcement of NVIDIA's willingness to license Kepler IP to any company that wants it, this demo seems very well planned.

NVIDIA did some work to make Kepler suitable for low power, but it's my understanding that the underlying architecture isn't vastly different from what we have in notebooks and desktops today. Mobile Kepler retains all of the graphics features of its bigger counterparts, although I'm guessing things like FP64 CUDA cores are gone.

Final Words

For the past couple of years we've been talking about a point in the future when it'll be possible to start playing console class games (Xbox 360/PS3) on mobile devices. We're almost there. The move to Kepler with Logan is a big deal for NVIDIA. It finally modernizes NVIDIA's ultra mobile GPU, bringing graphics API parity to everything from smartphones to high-end desktop PCs. This is a huge step for game developers looking to target multiple platforms. It's also a big deal for mobile OS vendors and device makers looking to capitalize on gaming as a way of encouraging future smartphone and tablet upgrades. As smartphone and tablet upgrade cycles slow down, pushing high-end gaming to customers will become a more attractive option for device makers.

Logan is expected to ship in the first half of 2014. With early silicon back now, I think 10 - 12 months from now is a reasonable estimate. There is the unavoidable fact that we haven't even seen Tegra 4 devices on the market yet and NVIDIA is already talking about Logan. Everything I've heard points to Tegra 4 having been slated for a number of device wins, but delays on NVIDIA's part caused it to be designed out. Other than drumming up IP licensing business, I wonder if that's another reason why we're seeing a very public demo of Logan now: to show the health of early silicon. There's also the question of process node. Logan will likely ship at 28nm next year, just before the transition to 20nm. If NVIDIA is late with Logan, we could have another Tegra 3 situation where NVIDIA is shipping on an older process technology.

Regardless of process tech however, Kepler's power story in ultra mobile seems great. I really didn't believe the GLBenchmark data when I first saw it. I showed it to Ryan Smith, our Senior GPU Editor, and even he didn't believe it. If NVIDIA is indeed able to get iPad 4 levels of graphics performance at less than 1W (and presumably much more performance in the 2.5 - 5W range) it looks like Kepler will do extremely well in mobile.
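One rough way to bound that "much more performance" is to assume performance scales linearly with GPU power from the ~900mW, iPad 4-level operating point. That linear assumption is ours and is almost certainly too generous, since higher clocks also need higher voltage, so power rises faster than performance. Treat the output as a ceiling, not an estimate; the helper name is hypothetical:

```python
# Operating point from NVIDIA's demo: ~0.9 W of GPU power for iPad 4-level
# performance in GLBenchmark 2.7 T-Rex HD.
BASE_POWER_W = 0.9
BASE_PERF = 1.0  # performance normalized to the iPad 4


def linear_perf_ceiling(power_w: float) -> float:
    """Hypothetical, optimistic ceiling: assumes performance grows linearly
    with GPU power. Real scaling is sublinear because voltage must rise
    along with clock speed."""
    return BASE_PERF * power_w / BASE_POWER_W


for budget_w in (2.5, 5.0):
    print(f"{budget_w:.1f} W -> at most ~{linear_perf_ceiling(budget_w):.1f}x iPad 4")
# prints:
# 2.5 W -> at most ~2.8x iPad 4
# 5.0 W -> at most ~5.6x iPad 4
```

The expectations earlier in the article (roughly 2x an iPad 4 in a phone, 4 - 5x in a tablet) sit below these linear ceilings, which is what you'd expect once voltage scaling is taken into account.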

Whatever NVIDIA's reasons for showing off Logan now, the result is something that I'm very excited about. A mobile SoC with NVIDIA's latest GPU architecture is exactly what we've been waiting for.

141 Comments


ollienightly - Thursday, July 25, 2013

And what do we need OpenGL 4.4 for? More wasted silicon or power? You do realize NO ONE would ever develop OpenGL 4.4 games for the Tegra 5 EVER.

djgandy - Thursday, July 25, 2013

Nvidia haven't even implemented OpenGL ES 3.0 on anything that ships or is near to shipping! And now they are going completely to the other end of the spectrum and doing a fully DX11 compliant chip!? Oh, and the reason there is no OpenGL 4.4 on other mobile GPUs is because there is no point in doing it. Why burn area on an API you cannot use?

Keep smoking that Nvidia marketing bs.

ltcommanderdata - Wednesday, July 24, 2013

The expectation is that this year is Apple's "S" refresh, which last time, with the iPad 2/iPhone 4S, brought Apple claims of 9x (iPad 2) and 7x (iPhone 4S) GPU performance increases over the previous generation, which I believe translated into ~5x real-world performance improvements. As such, a 5x theoretical GPU performance increase by nVidia in 2014 is not out of line with what Apple could be delivering later in 2013. As michael2k points out, PowerVR 6 Rogue is certainly scalable to those performance levels. Of course, we'll have to see them implemented in actual devices, within realistic power constraints, to really compare their effectiveness.

mmrezaie - Wednesday, July 24, 2013

I also think the end result will be like what we have with AMD. AMD offers very good hardware, but nvidia pushes harder at the API/driver level. So if PowerVR and Kepler become comparable, I wonder what kind of competition we will see on the driver/API/game engine front.

ltcommanderdata - Wednesday, July 24, 2013

http://gfxbench.com/compare.jsp?D1=Apple+iPhone+5&...

Apple actually writes their own drivers for their PowerVR GPUs, which seem to be very efficient considering the iPhone 5's SGX543MP3 actually achieves double the triangle throughput of the Galaxy S4's SGX544MP3, despite the Galaxy S4 having higher clock speed and memory bandwidth. So there are really two comparisons: PowerVR reference drivers (probably what most device manufacturers are using) vs Kepler, and Apple's PowerVR drivers vs Kepler. It's a safe bet Apple will continue to make gaming a focus on iOS given that it's a major part of the App Store, so they'll continue putting effort into GPU driver performance optimization. nVidia of course has a long track record with driver optimization, so we'll definitely see lots of competition in this area.

In terms of features, Apple continues to expose new features in PowerVR GPUs through OpenGL ES extensions. They've already implemented, or are going to implement in iOS 7, a number of OpenGL ES 3.0 features on existing Series 5/5XT GPUs, including sync objects, instanced rendering, and additional texture formats. The major untapped feature is multiple render target support, which should be coming since the EXT extension has now been finalized. Series6/Rogue is DX10 compliant, so I expect we'll see additional features like geometry shaders exposed through OpenGL ES 3.0 extensions. So it'll effectively be a comparison between OpenGL 3.3-class and OpenGL 4.4-class feature sets exposed through OpenGL ES 3.0 extensions.

One advantage Apple does have is that they can regularly release performance optimizations and new features in regular iOS updates which see rapid adoption throughout the userbase so developers can count on the features and use them. nVidia likely has more trouble pushing out new drivers broadly on Android. As such, it's good that nVidia is aiming high to begin with in terms of performance and features since they have less opportunity to gradually increase them over time through driver updates.

name99 - Wednesday, July 24, 2013

You also have to remember that most of Apple's customers (like most customers of all phones and tablets) don't give a damn about the most demanding games. Apple is happy to help out game developers, happy even to boast about them occasionally, but games are not central to what Apple cares about in a GPU.

What Apple DOES care about is enhancing the entire UI. This means that (especially as they get more control over their entire SoC) they're going to be doing more and more things that will be invisible if you just look at specs and traditional benchmarks. For example, it means that they will pick and choose whatever features are valuable in OpenGL 4.4 and integrate those into their devices (maybe, maybe not, exposing an API).

In this context, for example, the shared buffers of OpenGL 4.4 would be extremely valuable to Layer Manager, and they have enough control over the CPU (and I assume could negotiate enough control over the GPU) to implement what's necessary to get this working on their systems. It could be there, invisible to external programmers, but making Layer Manager operations (i.e. the entire damn UI) run 20% faster and with 20% less energy.

A second example: Apple, on both OS X and iOS, has constantly tried to push image processing ever closer to what is "theoretically" correct rather than what is computationally easy. So they would like all image processing to be done in something like Lab space, and only converted to gamma-corrected RGB at the very last stage of display. They do this on OS X; on iOS, with less CPU power, they do as much as they can in sRGB space. But if you had HW that ran the transformations from RGB (with various profiles) or YUV (again with various profiles) to Lab and back, you could expand this to usage everywhere. It's the kind of small thing (like kerning or ligatures) that many people won't notice, but it will make all images (and especially manipulated/processed images) on iOS look just that much better. But to really pull it off requires dedicated HW.

Point is: I am sure Apple will continue to throw more transistors at the GPU part of their SOC. But they won't necessarily be throwing those transistors at the same things nVidia is throwing them at. They may even seem to lag nVidia in benchmarks, but it would be foolish to map that onto assuming the devices feel slower than nVidia devices.
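For readers unfamiliar with the RGB-to-Lab conversion name99 describes, here is a minimal CPU-side sketch of the standard sRGB to CIELAB path with a D65 white point: undo the sRGB gamma curve, convert linear RGB to CIE XYZ, then apply the Lab transfer function. It only illustrates the per-pixel math such hardware would accelerate; it is not a description of how Apple actually processes images, and the function names are hypothetical:

```python
# D65 reference white in CIE XYZ (Y normalized to 1.0).
WHITE_D65 = (0.95047, 1.00000, 1.08883)

# Linear sRGB -> CIE XYZ matrix (D65); one row each for X, Y, Z.
SRGB_TO_XYZ = (
    (0.4124564, 0.3575761, 0.1804375),
    (0.2126729, 0.7151522, 0.0721750),
    (0.0193339, 0.1191920, 0.9503041),
)


def srgb_to_linear(c8: int) -> float:
    """Undo the sRGB transfer ("gamma") curve for one 8-bit channel."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def lab_f(t: float) -> float:
    """CIELAB companding: cube root, with a linear segment near zero."""
    delta = 6.0 / 29.0
    if t > delta ** 3:
        return t ** (1.0 / 3.0)
    return t / (3.0 * delta ** 2) + 4.0 / 29.0


def srgb_to_lab(r8: int, g8: int, b8: int) -> tuple[float, float, float]:
    """Convert one 8-bit sRGB pixel to CIELAB (L*, a*, b*)."""
    rgb = [srgb_to_linear(c) for c in (r8, g8, b8)]
    # Matrix-vector product: each XYZ component is a weighted sum of linear RGB.
    x, y, z = (sum(m * c for m, c in zip(row, rgb)) for row in SRGB_TO_XYZ)
    # Normalize by the reference white before companding.
    fx, fy, fz = (lab_f(v / w) for v, w in zip((x, y, z), WHITE_D65))
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


print(srgb_to_lab(255, 0, 0))  # approximately (53.24, 80.09, 67.20)
```

Every pixel needs a power function per channel, a 3x3 matrix multiply and three cube roots, and the reverse trip to display RGB costs about the same again, which is why doing it everywhere is a plausible candidate for fixed-function hardware.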

happycamperjack - Wednesday, July 24, 2013

I think your opinion about Apple's position on gaming is 4 years old. 75% of App Store revenue comes from games. The iPhone 3GS, iPhone 4S, iPhone 5, and iPads all featured GPUs (not necessarily CPUs) that were well ahead of the competition when they came out. So Apple doesn't just CARE about games, they effing love games/money! It didn't start out this way of course, but they learned through app downloads and sales that that's what their customers wanted, so that's what they are focusing on now.

lmcd - Wednesday, July 24, 2013

Apple's mentality there is accurate for Macs but not iOS devices.

happycamperjack - Wednesday, July 24, 2013

Well, I can't blame them. Most computer games are written and optimized for DirectX. With the Mac market still a small fraction of the PC market, it's hard to see developers change their DirectX stance, and thus it's hard to see Apple change its stance on computer games either. It's more likely for them to make a console based on iOS than to push for Mac games.

TheJian - Monday, August 5, 2013

The App Store doesn't make a ton of money, and neither does Google's currently. Revenue and profit are also two different things. Both stores are really just designed to push the platforms and devices. Maybe they will be huge money makers in the end as games get more evolved and rise in price, but I doubt Apple makes $1B on the store vs something like $39B from the hardware. In 2011 Piper Jaffray said they made ~$239 mil, but Apple divulges nothing, so there's no real proof of what they pay in costs to run the store or what they make after that. Apple has only claimed to run it at break-even (Oppenheimer, 2010). If the numbers are correct, they make ~$3 bil in revenue on downloads, but how much is left for profit after server costs, maintenance, bandwidth, etc.?

Apple cares about games when they start OPTIMIZING for their products. Currently they are inspiring nobody to do this. I see nobody but Nvidia doing anything to make things look better on their hardware. The fact that 75% of Apple's store revenue comes from games just says game devs own most of the revenue from the store, and that consoles will suffer because many people game elsewhere (which shows in Wii U/Vita sales). It says nothing about APPLE themselves promoting game development.

Links to proof of Apple investing in games, please. So far all I see is "you should be thankful we let you put your game on iOS" rather than "Here, please make it BETTER on iOS & A6 and we'll help you." With NV, they send help to the game dev to optimize for their hardware (literally send people to help out). Check out the Ouya comments etc. If Apple is doing this I'm not aware of it, and I have seen no articles from any dev saying "Apple was great to work with, gave tons of help to optimize for A6" etc.

AMD/NV have courted game devs forever (OK, 20 yrs). I have seen nothing from Apple, and devs didn't even jump on Mac conversions until Macs hit 10% share (which is dropping now, so I expect dev interest to drop again). Apple appears to be learning nothing. If they had made games a HUGE part of their priority 3 yrs ago, Android would never have taken off. They played the enterprise card properly, but so far they have wasted the game card. Enterprise brought down RIMM (everyone gaining Exchange), but only Google seems to be helping to court devs (with NV helping). Shield wasn't on display in main view for all at Google I/O for nothing.

Google seems to understand gaming is needed to kill Windows, take over some PC sales and kill DirectX. All of these are done with games on Android. Couple that strategy with a free OS and a free office package at some point for home users (in a decent package) and WINTEL isn't needed. Qcom/NV/Samsung will provide the SoC power and Google provides the platform to run on them. All of us win in games if we get off DirectX. Devs make more money because of easy porting, thus having more money for risk on better games since they have so many platforms to target. Any decent game should make money just due to the sheer # of devices to sell to at that point.