The ARM Diaries, Part 2: Understanding the Cortex A12

by Anand Lal Shimpi on July 17, 2013 12:30 PM EST

Posted in: CPUs, Arm, SoCs, Cortex A12

Back End Improvements

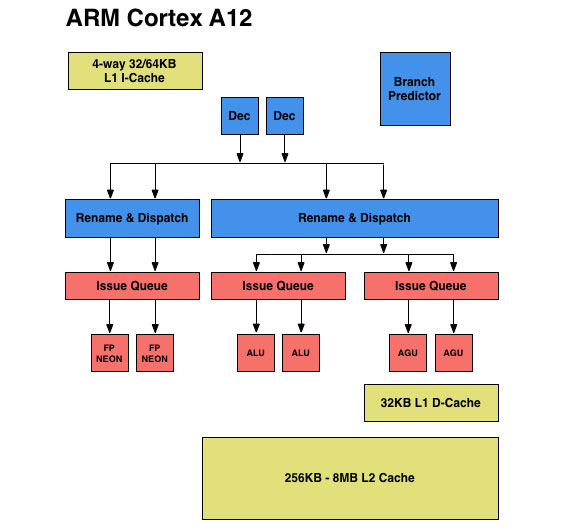

The front end of the Cortex A12 is a bit more efficient than the Cortex A9, but the bulk of the performance gains really come from improvements to the execution side of the core. Similar to the Cortex A15, ARM introduced multiple independent issue queues ahead of the functional units. It’s important to get nomenclature right here. Instructions are decoded into micro-ops; renamed instructions are dispatched into the issue queues; and micro-ops are then issued from the issue queues when their operands are available. Everything up to the issue queue is handled in order, while issuing can be handled out of order in the Cortex A12 (in most cases, more on this later).
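To make the dispatch/issue distinction concrete, here is a minimal sketch of a single issue queue. It is a toy model, not ARM's design: the `MicroOp` type, the `simulate` function, the register names, the latencies and the issue width of 2 are all assumptions chosen for illustration. Micro-ops enter the queue in program order; the only difference between the two modes is whether a younger micro-op may issue while an older one is still waiting on its operands.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class MicroOp:
    name: str
    dest: str              # renamed destination register (assumed unique per micro-op)
    srcs: Tuple[str, ...]  # source registers
    latency: int = 1       # cycles until the result is available (hypothetical values)


def simulate(ops: List[MicroOp], out_of_order: bool, width: int = 2):
    """Return (cycle, name) issue events for a toy single issue queue."""
    # Results of dispatched micro-ops are pending until their producer issues.
    # Registers that no micro-op writes are treated as ready from cycle 0.
    ready_at = {op.dest: float("inf") for op in ops}
    queue = list(ops)  # dispatch is in program order
    issued = []
    cycle = 0
    while queue:
        slots = width
        for op in list(queue):  # scan oldest to youngest
            if slots == 0:
                break
            if all(ready_at.get(src, 0) <= cycle for src in op.srcs):
                queue.remove(op)
                ready_at[op.dest] = cycle + op.latency
                issued.append((cycle, op.name))
                slots -= 1
            elif not out_of_order:
                break  # in-order issue: a stalled older micro-op blocks younger ones
        cycle += 1
    return issued


# Hypothetical four-micro-op program: "add" waits on a slow load,
# while "mul" and "sub" are independent of it.
PROGRAM = [
    MicroOp("load", dest="r1", srcs=(), latency=3),
    MicroOp("add", dest="r2", srcs=("r1",)),
    MicroOp("mul", dest="r3", srcs=("r4", "r5")),
    MicroOp("sub", dest="r6", srcs=("r3",)),
]

in_order = simulate(PROGRAM, out_of_order=False)
ooo = simulate(PROGRAM, out_of_order=True)
print(in_order)  # [(0, 'load'), (3, 'add'), (3, 'mul'), (4, 'sub')]
print(ooo)       # [(0, 'load'), (0, 'mul'), (1, 'sub'), (3, 'add')]
```

In this toy run the in-order queue leaves `mul` and `sub` stuck behind the stalled `add`, while the out-of-order queue issues them as soon as their operands are ready. Real hardware has many more constraints (queue capacity, per-pipe restrictions, memory ordering) that this sketch ignores.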

Whereas the Cortex A9 had a single issue queue ahead of all functional units, the Cortex A12 moves to three independent issue queues. The A9’s issue queue could hold 4 decoded instructions, while each issue queue in Cortex A12 is larger than that. The move to larger independent issue queues alone should help with increasing IPC.

The three issue queues are as follows: one for integer, one for FP/NEON and one for loads and stores. ARM provided bits and pieces of an architectural block diagram for the Cortex A12. I reconstructed one as best I could below. The blue blocks indicate in-order components of the design, while the pink/salmon blocks are out-of-order.

Cortex A12 retains the two integer pipelines of the Cortex A9, but adds support for integer divides (like the A7 and A15; other A-series architectures generally lacked support for hardware integer divides). The rest of the integer execution capabilities are unchanged.

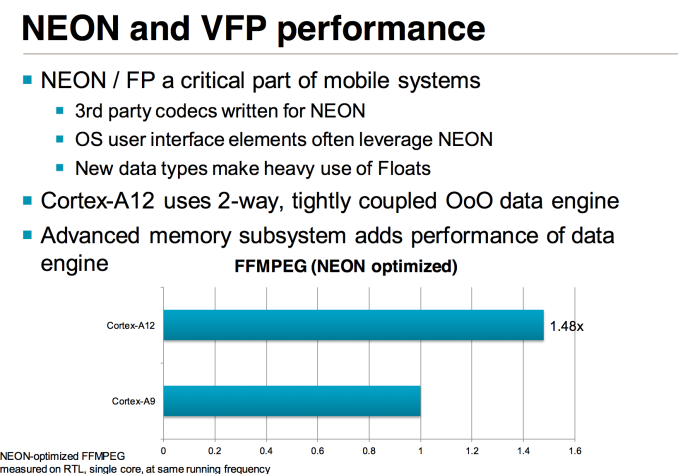

The FP/NEON units are vastly improved on the Cortex A12. When the Cortex A9 was first introduced, NEON code was rarely used, which even led NVIDIA to drop NEON support altogether in Tegra 2. Times quickly changed, and NEON code is now widely used in Android and mobile applications.

The Cortex A12 design retains separate physical register files for integer and FP operations, but the RFs are larger than in Cortex A9.

Although Cortex A9 was considered an out-of-order microarchitecture, all FP and NEON instructions were executed in-order. With Cortex A12, ARM moves to a fully out-of-order architecture, at least as far as non-memory-ops are concerned. The FP/NEON issue queue now dual-issues into two FP/NEON pipes, both of which operate fully out-of-order. The FP/NEON pipes are also more tightly coupled, allowing for quicker data movement between FP and Integer units.

The improvements to the FP/NEON side are expected to show up quite nicely in benchmarks. ARM shared performance data using an FFMPEG workload on simulated Cortex A9 and Cortex A12 designs at the same frequency with the same number of cores (1):

A 48% increase in NEON performance isn’t unexpected at all given the magnitude of improvements to this part of the execution engine.

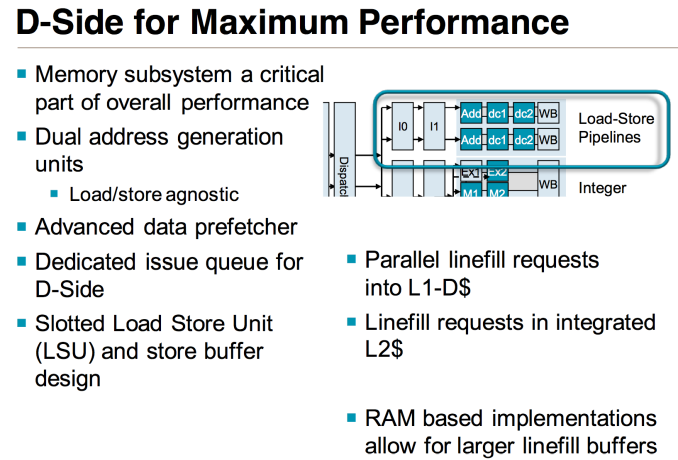

The final issue queue feeds the two load-store pipelines with two AGUs, once again a doubling from what was present in the Cortex A9 design. Each pipeline is equally capable (load/store agnostic) and mostly out-of-order (limits on what you can re-order if there are address dependencies between loads). By comparison, the load/store pipe in Cortex A9 was fully in-order.

65 Comments


Krysto - Saturday, July 20, 2013 - link

The difference is those ARM chips do take full advantage of the maximum core speed. Say you start a web page - any web page. It WILL activate the maximum clock speed - whereas the Turbo-Boost in Atom doesn't activate all the time. If we're talking about receiving notifications and such, then obviously the ARM processors won't go to 2 GHz either, but that's not really what we're talking about here, is it? We're talking about what happens when you're doing normal heavy stuff (web browsing, apps, games).

jeffkibuule - Monday, July 22, 2013 - link

That's the problem I have with performance benchmarks on cell phones. At some point thermal throttling kicks in because you're draining the battery a ton running your CPUs at full tilt. IPC improvements will be felt far more than clock speed ramping. If you ever look at CPU-Z on Android, you'll notice that a Snapdragon 600 with 4 cores clocked at 1.7GHz tries its hardest to downclock to 1 core at 384MHz. Even just scrolling up and down the monitoring screen pumps up the CPU speed to 1134MHz and turns on a second core as well. Peak performance is nice, but ideally should rarely be utilized.

Krysto - Saturday, July 20, 2013 - link

No, I meant it's a problem because Atom chips look like they are "competitive" in benchmarks, when in reality they have HALF the performance. That's what I was saying. It's a problem for US, not Intel. Intel wins by being misleading.

felixyang - Thursday, July 18, 2013 - link

Intel didn't mislead you. In SLM's review, they have a very clear description of turbo. Copied here:

"Previous Atom based mobile SoCs had a very crude version of Intel’s Turbo Boost. The CPU would expose all of its available P-states to the OS and as it became thermally limited, Intel would clamp the max P-state it would expose to the OS. Everything was OS-driven and previous designs weren’t able to capitalize on unused thermal budget elsewhere in the SoC to drive up frequency in active parts of chip. ........ this is also how a lot of the present day ARM architectures work as well. At best, they vary what operating states they expose to the OS and clamp max frequency depending on thermals."

opwernby - Thursday, July 18, 2013 - link

That's not cheating: it's what compilers are supposed to do. For example, if you write, "for (i=0; i<1000; i++);" a good optimizing compiler will analyze the loop, realize that it does nothing, resolve it to "i=1000;" and compile that. I believe the first use of this type of aggressive compiler technology was seen in Sun's C compiler for whatever version of Solaris it was that ran on the Sparc chips back in the '80s. The fact that the ARM compilers didn't do this speaks more about the expected performance of the chipset than anything else: you can build hardware to be as fast as you like, but if the compilers can't keep up, you might as well be running your code on a Commodore Pet.

opwernby - Thursday, July 18, 2013 - link

Speaking of the Sun thing: I distinctly remember that the then-current version of the Sun "pizza-box"-style workstation appeared in benchmarks to be 100 times faster than the IBM PC-RT (another RISC architecture competing with Sun's platform) even though, on paper, the PC-RT was running on faster hardware: analysis of the benchmarks' compiled code revealed that Sun's compiler had effectively edited out the loops as I described above. Result: the PC-RT died off very quickly.

FunBunny2 - Friday, July 19, 2013 - link

The PC-RT didn't last long, but the processor (in its children) lives on as the RS-6000/PPC/iSeries/Z

Wilco1 - Thursday, July 18, 2013 - link

It's certainly cheating. If you followed the whole thing, it was not just about ICC optimizing much of the benchmark away. The particular optimization was added recently to ICC - it was a lot more complex than an empty loop, and it only optimized a very specific loop by a huge factor (so specific that if you compiled all open source code it would likely only apply to the benchmark and nothing else). For some odd reason AnTuTu then secretly switched to that ICC version despite ICC not being a standard Android compiler. Finally, it turned out the settings for ARM were non-optimal, using an older GCC version with pretty much all loop optimizations disabled. Intel and ABI Research then started making false claims about how fast Atom was compared to Galaxy S4 based on the parts of AnTuTu that were broken (without actually mentioning AnTuTu).

Giving one side such a huge unfair advantage is called cheating. As a result AnTuTu will now stop using ICC.

jwcalla - Thursday, July 18, 2013 - link

This is why benchmarks have to be taken with a healthy dose of skepticism.

First, if the benchmark program isn't open source, right off the bat it's worthless. If you can't see the code, you can't trust it.

Second, if the program isn't compiled with the same compiler and the same compiler options, the results are crap. You're not getting a valid comparison of the hardware itself.

It's kind of ridiculous seeing many of the journalists out there who took this sensational headline and ran with it without even questioning its legitimacy.

Wilco1 - Wednesday, July 17, 2013 - link

The IPC comparison for integer code goes like:

Silverthorne < A7 < A9 < A9R4 < Silvermont < A12 < Bobcat < A15 < Jaguar

This is based on fair comparisons using Geekbench and so doesn't reflect what some marketing departments claim or what cheated benchmarks (i.e. AnTuTu) appear to show.