The ARM Diaries, Part 1: How ARM’s Business Model Works

by Anand Lal Shimpi on June 28, 2013 12:06 AM EST

How ARM Makes Money

AMD, Intel and NVIDIA all make money by ultimately selling someone a chip. ARM’s revenue comes entirely from IP licensing. It’s up to ARM’s licensees/partners/customers to actually build and sell the chip. ARM’s revenue structure is understandably very different from what we’re used to.

There are two amounts that every ARM licensee has to pay: an upfront license fee and a royalty. There are a number of other add-ons, such as support, but for the purposes of this discussion we’ll focus on the big two.

Everyone pays an upfront license fee and everyone pays a royalty. What varies with the type of license is the size of each.

The upfront license fee depends on the complexity of the design you’re licensing. An older ARM11 will have a lower upfront fee than a Cortex A57. The upfront fee generally ranges from $1M - $10M, although there are options lower or higher than that (I’ll get to that shortly).

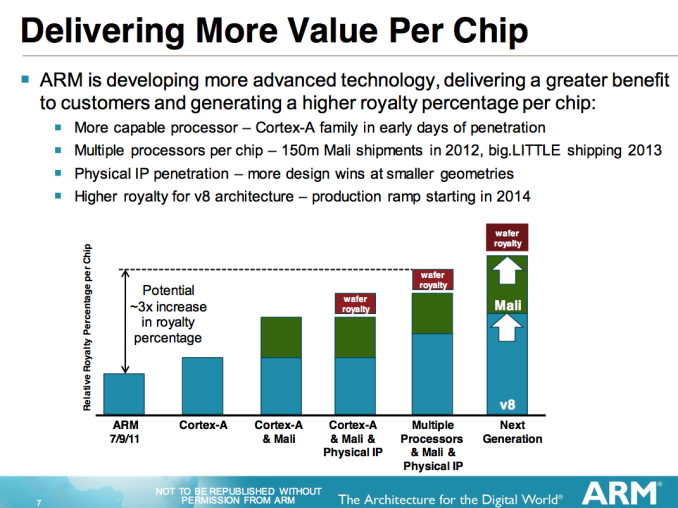

The royalty is charged on a per-chip basis. Every chip that contains ARM IP has a royalty associated with it. The royalty is typically 1 - 2% of the selling price of the chip. For chips that are sold externally, that’s an easy figure to calculate, but if a company builds a chip for its own internal use, the royalty is based on what the market price for that chip would be.
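As a minimal sketch, assuming the royalty is a flat percentage with no negotiated tiers or discounts, the per-chip calculation looks like this. The function name `per_chip_royalty` and the $20 and $25 chip prices are hypothetical, chosen only for illustration; the only rule taken from the text is that chips used internally are valued at an estimated market price instead of an actual selling price.

```python
from typing import Optional


def per_chip_royalty(rate_pct: float,
                     selling_price: Optional[float] = None,
                     market_price: Optional[float] = None) -> float:
    """Royalty owed on one chip, in the same currency as the price.

    Hypothetical helper: assumes a flat percentage royalty. Externally sold
    chips use their actual selling price; chips a company builds for its own
    use fall back to an estimated market price.
    """
    price = selling_price if selling_price is not None else market_price
    if price is None:
        raise ValueError("need a selling price or an estimated market price")
    return price * rate_pct / 100.0


# Hypothetical chips: one sold at $20, one used in-house but valued at $25.
external = per_chip_royalty(1.5, selling_price=20.0)
internal = per_chip_royalty(1.5, market_price=25.0)
print(f"external: ${external:.2f}, internal: ${internal:.2f}")
```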

Both the upfront license fee and the royalty are negotiable. There are discounts for using multiple ARM cores in a single design, and this is where things like support contracts come into play.

Buses and interfaces come for free; you really just pay for CPU and GPU licenses. ARM’s Mali GPU is typically treated as an adder and currently commands a lower royalty than ARM’s high-end CPU licenses. A rough breakdown is below:

ARM Example Royalties

| IP | Royalty (% of chip cost) |
| --- | --- |
| ARM7/9/11 | 1.0% - 1.5% |
| ARM Cortex A-series | 1.5% - 2.0% |
| ARMv8-based Cortex A-series | 2.0% and above |
| Mali GPU | 0.75% - 1.25% adder |
| Physical IP Package (POP) | 0.5% adder |
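Because the GPU and POP entries are adders, a rough royalty for a given SoC can be sketched by summing the ranges of the IP blocks it contains. This assumes the rates stack linearly and ignores the negotiated multi-core discounts mentioned above; `combined_royalty_pct` and the example SoC are hypothetical.

```python
from typing import Dict, Iterable, Optional, Tuple

# Royalty ranges from the "ARM Example Royalties" table, in percent.
# An upper bound of None means the table gives no ceiling ("2.0% and above").
ROYALTY_RANGES: Dict[str, Tuple[float, Optional[float]]] = {
    "ARM7/9/11": (1.0, 1.5),
    "Cortex A-series": (1.5, 2.0),
    "ARMv8 Cortex A-series": (2.0, None),
    "Mali GPU": (0.75, 1.25),
    "POP": (0.5, 0.5),  # paid by the foundry, but still ~0.5% per chip
}


def combined_royalty_pct(parts: Iterable[str]) -> Tuple[float, Optional[float]]:
    """Low/high royalty (percent) for a chip containing the given IP blocks.

    Hypothetical helper: assumes adders simply stack and ignores any
    negotiated discounts for multiple cores.
    """
    parts_list = list(parts)
    low = sum(ROYALTY_RANGES[p][0] for p in parts_list)
    highs = [ROYALTY_RANGES[p][1] for p in parts_list]
    if any(h is None for h in highs):
        return low, None  # at least one block has no stated ceiling
    return low, sum(h for h in highs if h is not None)


# A hypothetical SoC with a Cortex A-series CPU and a Mali GPU:
low, high = combined_royalty_pct(["Cortex A-series", "Mali GPU"])
print(f"{low}% to {high}% of the chip price")
```

For that hypothetical CPU-plus-GPU chip the sum comes to 2.25% to 3.25%; adding the POP adder would push it to 2.75% to 3.75%.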

In the case of a POP license, the royalty is actually paid by the foundry rather than the customer. The royalty is calculated per wafer, and it works out to roughly a 0.5% adder per chip sold.
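To see how a per-wafer charge becomes a per-chip percentage, spread the wafer royalty across the good dies on that wafer and compare the result with each chip's price. The wafer charge, die count, and chip price below are hypothetical, picked so the result lands on the roughly 0.5% the article cites; they are not ARM's actual terms, and `wafer_royalty_as_chip_pct` is an illustrative helper.

```python
def wafer_royalty_as_chip_pct(royalty_per_wafer: float,
                              good_dies_per_wafer: int,
                              chip_price: float) -> float:
    """Express a per-wafer royalty as a percentage of each chip's price.

    Hypothetical helper: the foundry's wafer charge is spread evenly over
    the sellable dies on that wafer.
    """
    per_chip = royalty_per_wafer / good_dies_per_wafer
    return 100.0 * per_chip / chip_price


# Hypothetical inputs: $50 per wafer, 500 good dies, $20 chips.
print(wafer_royalty_as_chip_pct(50.0, 500, 20.0))
```

Because the divisor is good dies per wafer, the effective percentage falls for smaller dies, better yields, or pricier chips, and rises in the opposite cases.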

It usually takes around 6 months to negotiate a contract with an ARM licensee. Getting from license acquisition to first revenue shipments can often take around 3 - 4 years. Designs can then ship for up to 20 years depending on the market segment.
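The gap between signing and shipping shapes when ARM actually gets paid. The sketch below models one license as a year-by-year payment stream: the fee lands at signing, nothing arrives during development, and royalties flow once chips ship. The $5M fee sits inside the $1M - $10M range quoted earlier, and 3 and 20 years are the low end of the time-to-ship estimate and the upper bound on shipping life; the $0.30 per-chip royalty, the flat 10M chips per year, and `license_cash_flows` itself are hypothetical.

```python
def license_cash_flows(upfront_fee: float, royalty_per_chip: float,
                       chips_per_year: int, years_to_first_ship: int = 3,
                       ship_years: int = 20) -> list:
    """Payments from one hypothetical license, indexed by year (0 = signing).

    Assumes a flat shipment volume every year, which real products won't have.
    """
    flows = [0.0] * (years_to_first_ship + ship_years)
    flows[0] += upfront_fee  # license fee is paid up front
    # Royalties only start once the licensee's chip ships.
    for year in range(years_to_first_ship, years_to_first_ship + ship_years):
        flows[year] += royalty_per_chip * chips_per_year
    return flows


# Hypothetical: $5M fee, $0.30 royalty per chip, 10M chips a year.
flows = license_cash_flows(5_000_000, 0.30, 10_000_000)
print(f"years with no income: {flows[1:3]}")
print(f"royalties share of total: {sum(flows[3:]) / sum(flows):.0%}")
```

With those made-up volumes, royalties account for the large majority of the license's lifetime value, even though none of it shows up for the first three years.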

Of the 320 companies that license IP from ARM, over half are currently paying a royalty; the rest are in the period between signing a license and shipping a product. ARM signs roughly 30 - 40 new licensees per year.

About 80% of the companies that sign a license end up building a chip that they can sell in the market. The remaining 20% either get acquired or fail for other reasons. Royalties make up roughly 50% of ARM’s total revenues, licensing fees just over 33%, and the remainder is split equally between software tools and technical support.
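The revenue mix above leaves two shares implicit. Treating "just over 33%" as one third (an approximation), software tools and technical support each come to roughly 8% of revenue. The same back-of-the-envelope approach applies to the licensee pipeline: at an ~80% success rate, 30 - 40 new licensees a year would eventually yield roughly 24 - 32 companies shipping chips. Both helpers below are hypothetical names for this arithmetic.

```python
def revenue_mix(royalty_share: float = 0.50,
                license_share: float = 1 / 3) -> dict:
    """Split total revenue using the rough shares quoted in the text.

    Whatever is left after royalties and license fees is divided equally
    between software tools and technical support.
    """
    remainder = 1.0 - royalty_share - license_share
    return {
        "royalties": royalty_share,
        "licensing": license_share,
        "software tools": remainder / 2,
        "technical support": remainder / 2,
    }


def expected_shipping_licensees(new_licensees: int,
                                success_rate: float = 0.8) -> float:
    """How many of a year's new licensees eventually sell a chip (~80%)."""
    return new_licensees * success_rate


for name, share in revenue_mix().items():
    print(f"{name}: {share:.1%}")
print(expected_shipping_licensees(30), expected_shipping_licensees(40))
```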

ARM's revenues are decent (and growing), but it's still a relatively small company. In 2012 ARM brought in $913.1M. Given how many ARM designs exist in the market (and the size of some of ARM's biggest customers), it almost seems like ARM should be raising its royalty rates a bit. Because of ARM's unique business model, gross margin can be north of 94%. Operating margin tends to be around 45% though.
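The two margins imply a rough cost structure. Plugging in the 2012 revenue and the rounded margins from the paragraph above (treating "north of 94%" as exactly 94%), the sketch backs out approximate profit-and-loss lines. These are derived estimates, not ARM's reported figures, and `margin_breakdown` is a hypothetical helper.

```python
def margin_breakdown(revenue: float, gross_margin: float,
                     operating_margin: float) -> dict:
    """Back out rough profit-and-loss lines from revenue and two margins."""
    gross_profit = revenue * gross_margin
    operating_profit = revenue * operating_margin
    return {
        "cost of revenue": revenue - gross_profit,
        "gross profit": gross_profit,
        "operating expenses": gross_profit - operating_profit,
        "operating profit": operating_profit,
    }


# 2012 revenue from the text, in millions of dollars, with the rounded margins.
for line, value in margin_breakdown(913.1, 0.94, 0.45).items():
    print(f"{line}: ${value:,.1f}M")
```

The result puts cost of revenue at only about $55M, while operating expenses come to about $447M, so most of ARM's costs sit below the gross-profit line rather than in cost of revenue.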

64 Comments


Arbee - Friday, June 28, 2013

AMD's clones up through the 486 were true clones of the relevant Intel parts. For the Pentium, Intel stopped allowing that and AMD started making their own designs, which at first weren't all that good.

ShieTar - Friday, June 28, 2013

Even these days, AMD is somewhat forced to at least copy the Intel instruction set. At this point, Intel definitely profits from the situation: whatever they decide to include in their CPUs, AMD needs to support soon enough in order not to fall behind.

fluxtatic - Monday, July 1, 2013

The new arch Intel made was Itanium, as they were hoping to get away from x86. We all know how well that worked out.

What put AMD behind was Intel's incredibly dirty tricks around the days the Athlon64 was stomping all over Intel - all the backroom deals with Dell and HP, among others, to keep AMD parts out of PCs. Intel would kick in significant amounts of money in both marketing dollars and straight (very illegal) kickbacks to companies that were willing to play ball.

It ended up settling a couple years ago, with Intel paying around $2 billion, as I recall, but that was far too late for the damage done to AMD.

And to Wolfpup's point - there's likely not even money changing hands between Intel and AMD these days - they've both got significant contributions to the x86 architecture that are covered by the cross-licensing agreement. However, likely a lot of the newer features Intel has developed aren't covered, and AMD has to clean-room reverse engineer them if they want to include them in their own designs.

Scannall - Friday, June 28, 2013

There were many reasons for them licensing x86 at the time. And there used to be a whole lot of licensees; AMD is just the last one standing. Other manufacturers were coming up with very good and compelling CPU architectures, and there was really no standard per se. Between contract requirements and many companies using licensed x86 IP, it became the 'standard'.

So it looks to me like it was far from a mistake. Use all the licensees to get your architecture king of the hill, then grind them into the grave. Big win for Intel.

rabidpeach - Saturday, June 29, 2013

So ARM could be early Intel:

1. Build up a cash pile through licensing
2. Buy a fab
3. Have it make your exclusive next-gen processor that isn't licensed
4. Sell it and destroy all the commodity producers

This strategy would need Intel to stay on the sidelines.

TheinsanegamerN - Sunday, June 30, 2013

Except AMD went and sold its fab.

slatanek - Friday, June 28, 2013

Great article, Anand! It's actually something I have been thinking about for a while too (ARM). But I have to admit I miss PODCASTS too! (I'm not the only one, it seems.)

Callitrax - Friday, June 28, 2013

I'm not so sure the statement "In the PC world, Intel ... ends up being the biggest part of the BoM (Bill of Materials)" is all that accurate. I know it hasn't been for me for 10+ years; the largest part of my BoM is, I think, the same as in a mobile device: the display. Anybody running multiple displays or 1200+ vertical lines (which probably describes a large fraction of the readers here) spent somewhere between $300 and $800+, which means they would need an i7 at the low end or an i7 Extreme at the high end (+PCH) for Intel's cut to exceed the display cost. And for that matter, Samsung just topped the processor in my computer (SSD).

* Okay, technically you could break the monitor BoM into payments to a few companies, but I would guess my panel still exceeds my CPU, since the display was $600 on sale.

** And the economics get fuzzed up a little, since desktop displays can last through several CPUs (mine is on its 3rd CPU and 4th graphics card), whereas mobile device displays are glued to the CPU.

mitcoes - Friday, June 28, 2013

It is possible we will see future ARM64 models where the SoC is plugged in, perhaps even with a standard connector/format, so that we could upgrade the SoC 2 or 3 times as we currently do with desktop computers. I suggested it to several brands, and I suppose they read the suggestions, so perhaps one will do it.

name99 - Friday, June 28, 2013

It makes zero sense to optimize for something that is cheap (and part of a cheap system). The days of replaceable cores are gone, just like the days of replaceable batteries. Complaining about them just reveals you as out of touch.

There is good physics behind this, not just bitchiness. Mounting which allows devices to be plugged in and out is physically larger, uses more power, and limits frequencies and so performance. There's no point in providing it in most circumstances.

Next to go (for this sort of reason) will be replaceable RAM. It's already not part of the phone/tablet/ultrabook experience, and at some point the performance limitations (as opposed to the size and power issues) will probably mean it's also removed from mainstream desktops.

Complain all you like, but physics is physics. The impedance mismatch from these sorts of connectors is a real problem.