AMD's A10-5750M Review, Part 2: The MSI GX60 Gaming Notebook

by Dustin Sklavos on June 29, 2013 12:00 PM EST

Gaming Performance

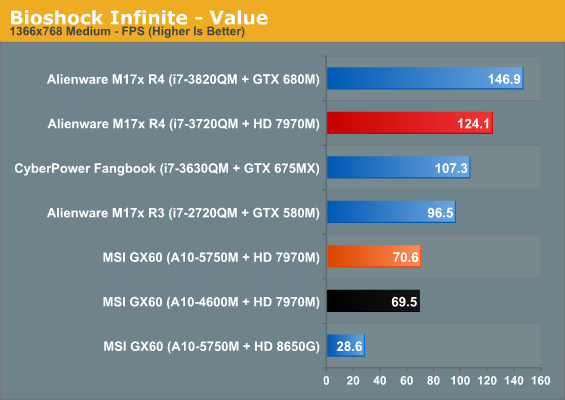

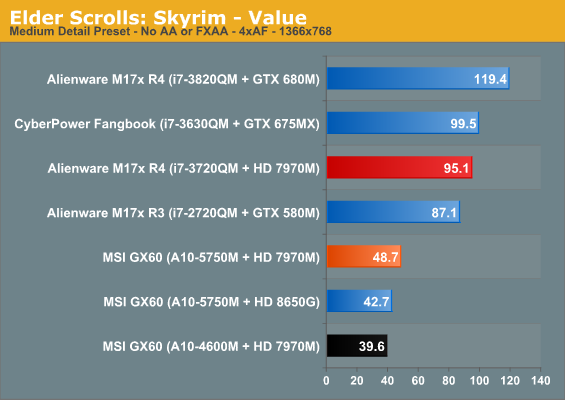

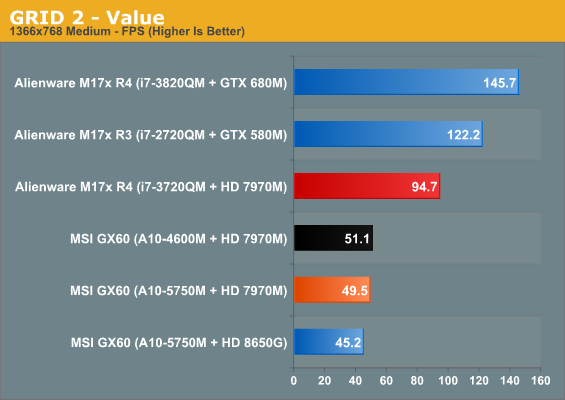

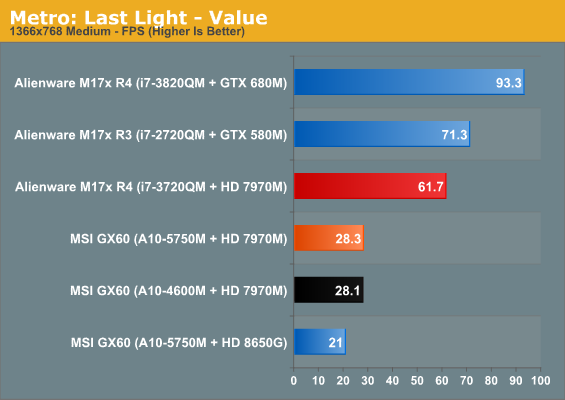

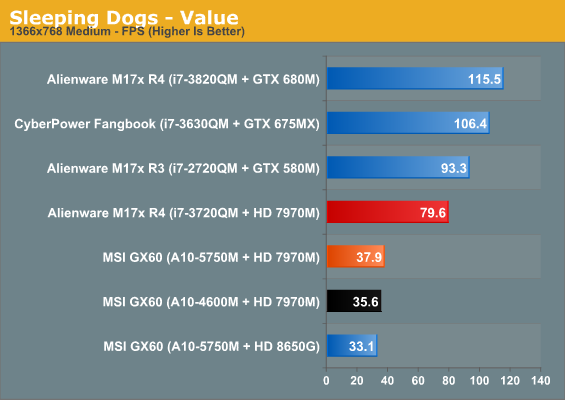

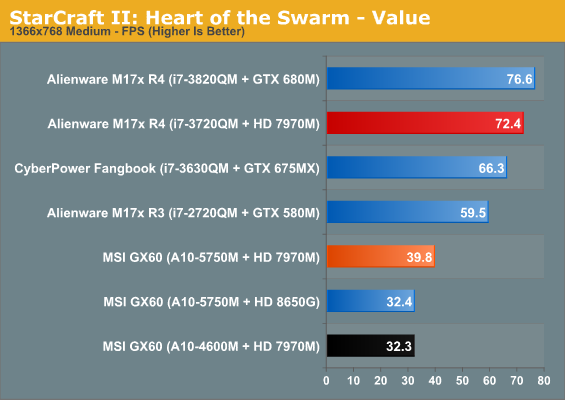

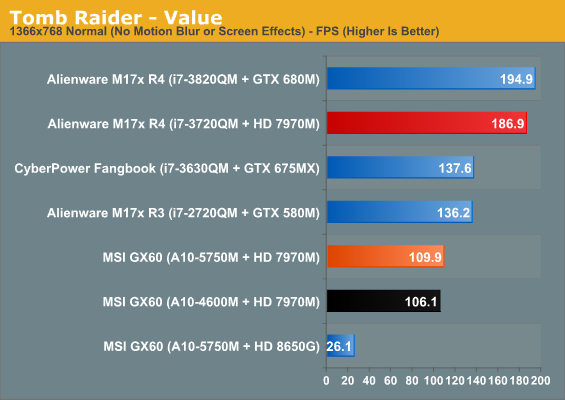

Given that the AMD Radeon HD 7970M is the fastest mobile GPU that AMD offers, I'd ordinarily eschew including our "Value" benchmark results for the MSI GX60. Under the circumstances, though, those numbers might be enlightening. When a system is heavily CPU-limited, gaming benchmark results will often be flat or show very little performance loss as you move up in resolution and settings. It's reasonable to assume we'll see that kind of phenomenon here.
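To make the CPU-limited pattern concrete, here is a minimal sketch of a common simplification: each frame takes as long as the slower of the CPU and GPU work, so frame rate is `1000 / max(cpu_ms, gpu_ms)`. The model itself, the function names, and every millisecond figure below are illustrative assumptions and are not measurements from this review.

```python
def frame_rate(cpu_ms: float, gpu_ms: float) -> float:
    """Frames per second when the slower of CPU and GPU work sets the frame time."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def scaling_loss(fps_low: float, fps_high: float) -> float:
    """Fraction of frame rate lost when moving from lower to higher settings."""
    return (fps_low - fps_high) / fps_low


# Hypothetical per-frame GPU cost in milliseconds at three presets.
# These are made-up numbers for illustration, not benchmark data.
GPU_MS = {"value": 5.0, "mainstream": 10.0, "enthusiast": 20.0}


def sweep(cpu_ms: float) -> dict:
    """Frame rate at each preset for a given (hypothetical) per-frame CPU cost."""
    return {preset: frame_rate(cpu_ms, gpu_ms) for preset, gpu_ms in GPU_MS.items()}


slow_cpu = sweep(cpu_ms=25.0)  # CPU cost exceeds GPU cost at every preset
fast_cpu = sweep(cpu_ms=8.0)   # GPU becomes the limit past the lowest preset

for name, result in (("slow CPU", slow_cpu), ("fast CPU", fast_cpu)):
    loss = scaling_loss(result["value"], result["enthusiast"])
    print(name, result, f"loss from value to enthusiast: {loss:.0%}")
```

In this toy model the slow CPU produces the same frame rate at every preset, while the fast CPU loses frame rate as GPU cost rises. Real games are messier, since CPU cost can also change with settings, but a near-flat curve across presets is the signature to look for in the charts.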

At our "Value" settings, it's clear Richland is giving the 7970M at least a little more performance headroom, but the gulf is massive compared to the way Ivy lets it stretch its legs. Metro: Last Light seems to be a bit of a bizarre outlier, though. Metro 2033 used to hammer the GPU almost exclusively, but times seem to have changed with the new release.

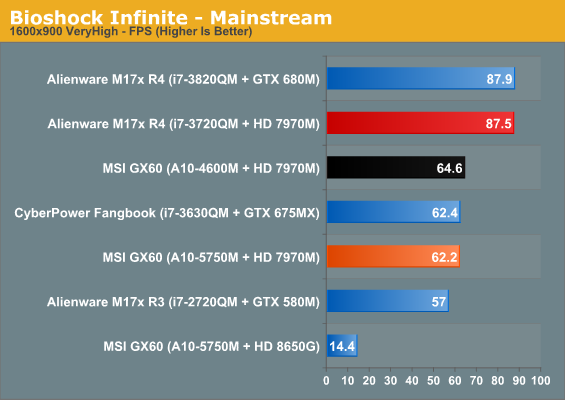

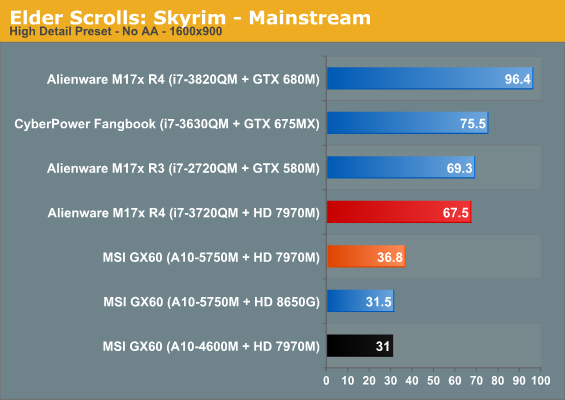

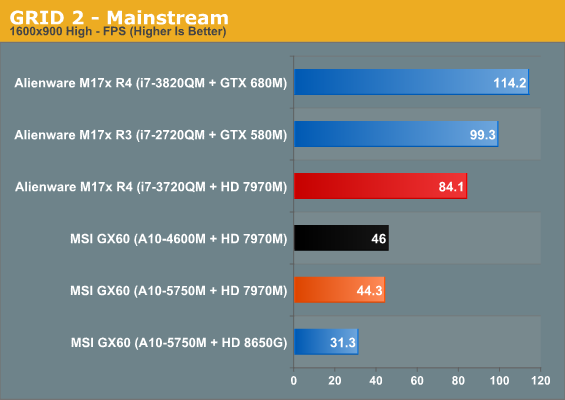

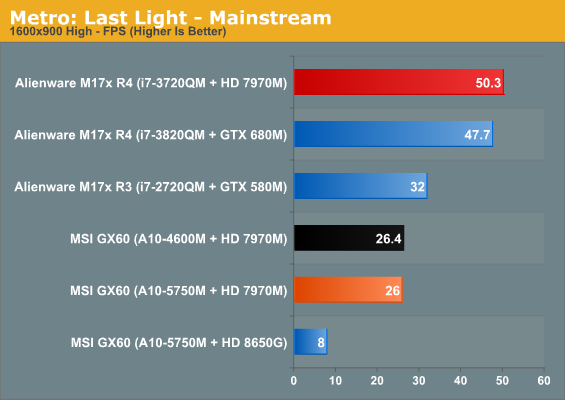

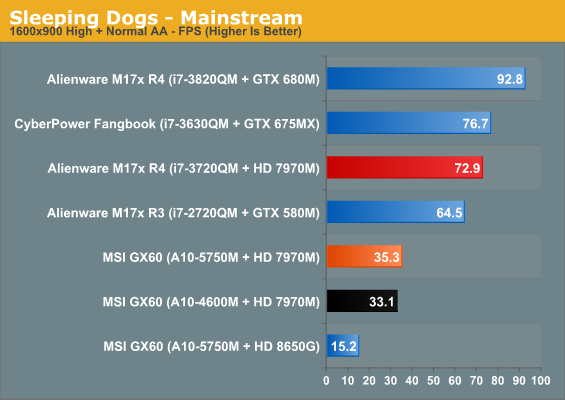

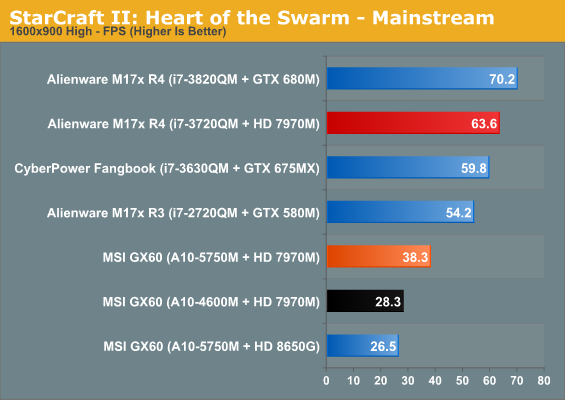

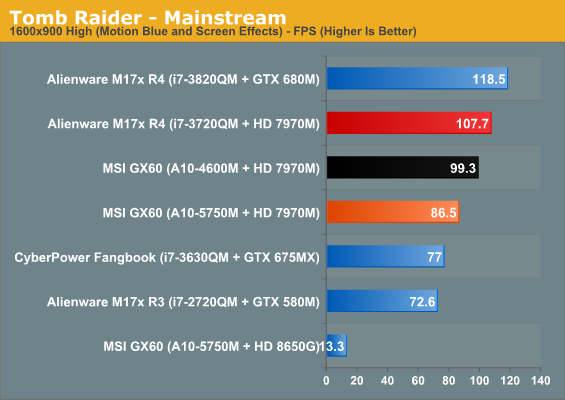

Mainstream performance actually demonstrates the same kind of weird wash between Trinity and Richland that I experienced testing using the IGP. It's only Tomb Raider that takes a bath with Richland, though, and even then it's still very playable. While our settings here help close the gap between the Alienware M17x R4's 7970M and the MSI GX60's, we're still obviously leaving a lot of performance on the table. Metro: Last Light in particular continues to be unplayable.

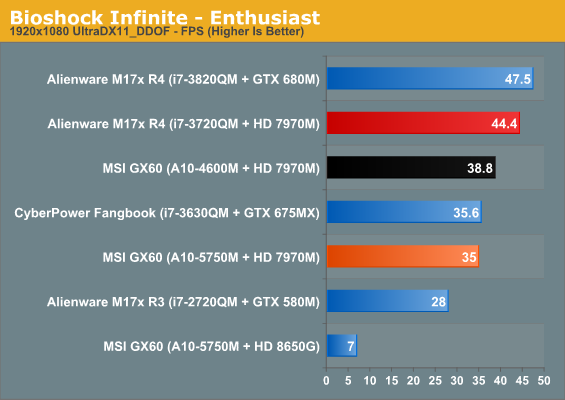

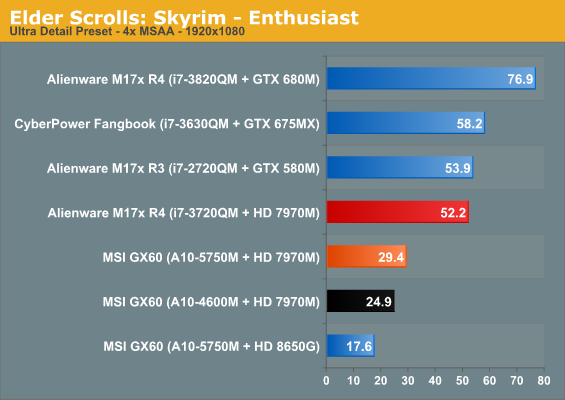

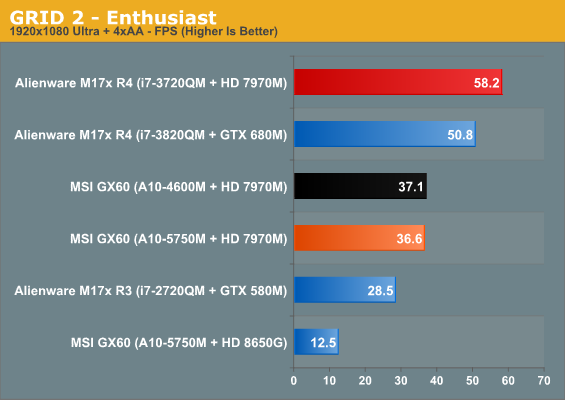

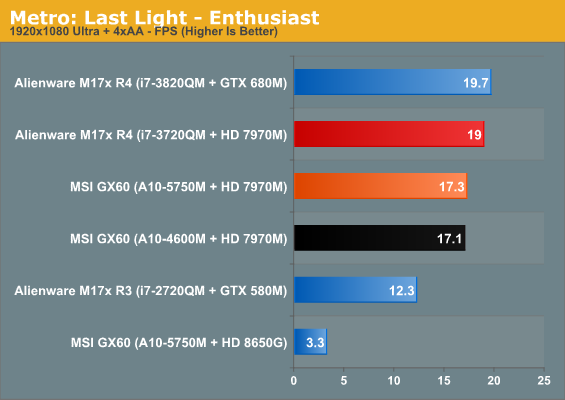

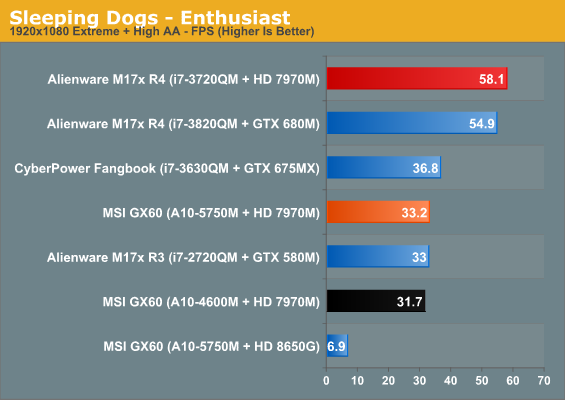

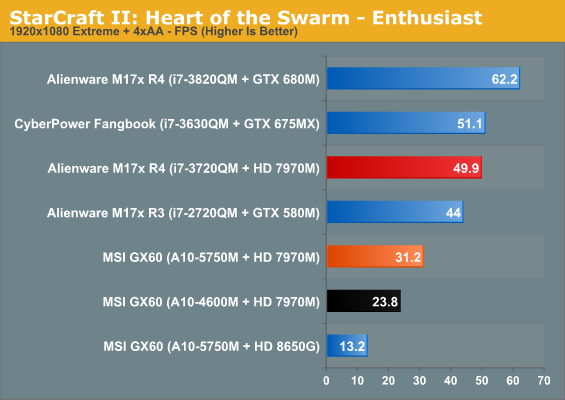

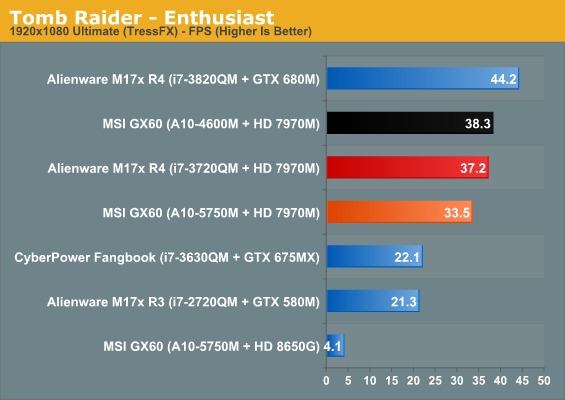

In some cases, enthusiast settings allow the GX60's 7970M to come within striking distance of the M17x R4's. Generally speaking, though, we still have a lot of performance left on the table, and it's sometimes even the difference between a game being playable and not. In situations where we're severely GPU limited (Tomb Raider with TressFX, for example), the GX60 makes a very strong case for itself. The problem is that in other situations, the GTX 675MX (and by extrapolation, the slightly slower GTX 765M) winds up producing a better experience because the CPU isn't bogging it down.

69 Comments

JarredWalton - Sunday, June 30, 2013 - link

My testing suggests otherwise. Despite having 20 EUs vs. 16 EUs, HD 4400 and HD 4600 generally perform about the same as HD 4000. I've even got a quad-core standard voltage i7-4700MQ system (MSI GE40), and surprisingly there are several games where the HD 4600 iGPU fails to be a significant upgrade over a ULV HD 4000 in an i5-3317U. I'm not sure if Intel somehow changed each EU so that the Haswell EUs are less powerful relative to IVB, but outside of driver optimizations (it's still early in the game for Haswell) I have no good reason for the lack of performance I'm getting from HD 4600.

sheh - Monday, July 1, 2013 - link

The i7-4500U review showed not much of a difference at times, and even slightly lower performance, but +20% in other cases. i7-4770 vs i7-3770 shows more improvement. I'm guessing the improvement in a 37W CPU would be more like the desktop parts rather than the 15W CPU. But, well, all theoretical anyway.

TheinsanegamerN - Saturday, June 29, 2013 - link

it's a little problematic, i think. they are ultrabook cpus, and bga to boot. it's cheaper to make laptops with rpga connectors than soldering the cpu to the motherboard. until rpga models appear (not likely) we might not see that many. they are also $100 more expensive than ultrabook models from last year, so a lot of manufacturers are still using ivy. toshiba just brought out 3 new laptops, two are ultrabooks, all of which use ivy instead of haswell.

TheinsanegamerN - Saturday, June 29, 2013 - link

never mind, just found list that included socketed models. guess the oems are just slow

IntelUser2000 - Saturday, June 29, 2013 - link

The SV dual cores are actually Q4, so there's quite a bit of time left. They are planning on phasing them out in favor of U chips, and with Broadwell they disappear entirely.

sheh - Sunday, June 30, 2013 - link

On the forum you said Q4 is rumors, any more concrete info since then?

arthur449 - Saturday, June 29, 2013 - link

I wouldn't sell or buy a laptop if it didn't have all its available memory channels populated. This entire review is pointless to me.

JarredWalton - Saturday, June 29, 2013 - link

Because buying an extra 8GB DIMM for $75 or whatever is too hard? And it will only help with iGPU performance?

arthur449 - Saturday, June 29, 2013 - link

Mainstream CPUs have used dual-channel memory controllers for so long that simply disabling access to one channel can have (as we've seen in this case) a not insignificant effect on benchmarks that do not directly stress the GPU portion of the chip. CPUs have had uses for more memory bandwidth since before there were on-chip GPUs, after all.

Furthermore, in scientific terms, because the author changed two variables in this experiment (var1a: iGPU. var1b: dual channel. var2a: dGPU. var2b: single channel.) to compare the iGPU to the dGPU, we cannot be certain whether removing the second channel of memory affects dGPU performance.

If the author's intention was merely reviewing the out-of-the-box performance of this hardware configuration of the MSI GX60 Gaming Notebook, this would be fine. Instead, the article is titled: "AMD's A10-5750M Review: ..."

I'm not trying to be unnecessarily harsh. And the subject of this review could very easily apply to my interest. (I *just* purchased a laptop for a family member with an AMD A8-5550M.) But if the author is going to test the processor's gaming performance: don't change more than one variable.

TheinsanegamerN - Saturday, June 29, 2013 - link

the single channel ram should be fine in this case, since it's not used for video at all. at least it's 1600mhz