AMD's Richland vs. Intel's Haswell GPU on the Desktop: Radeon HD 8670D vs. Intel HD 4600

by Anand Lal Shimpi on June 6, 2013 12:00 PM EST

3DMark and GFXBench

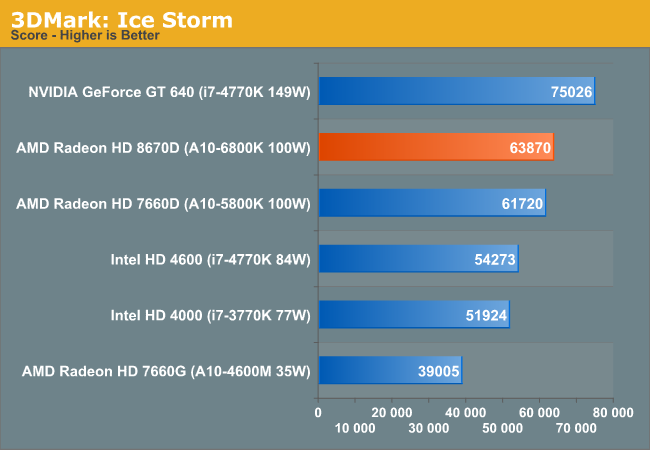

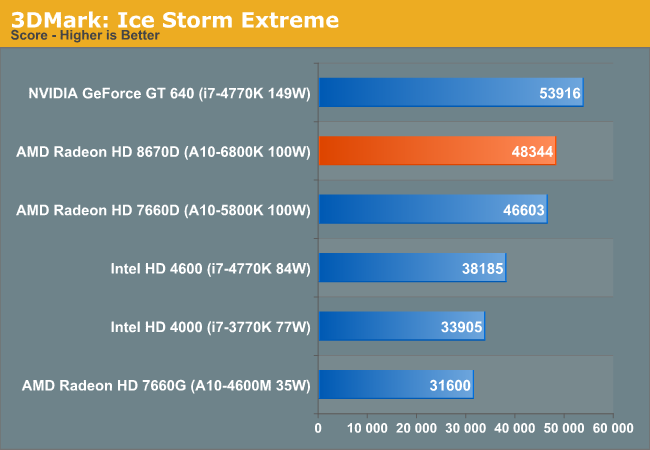

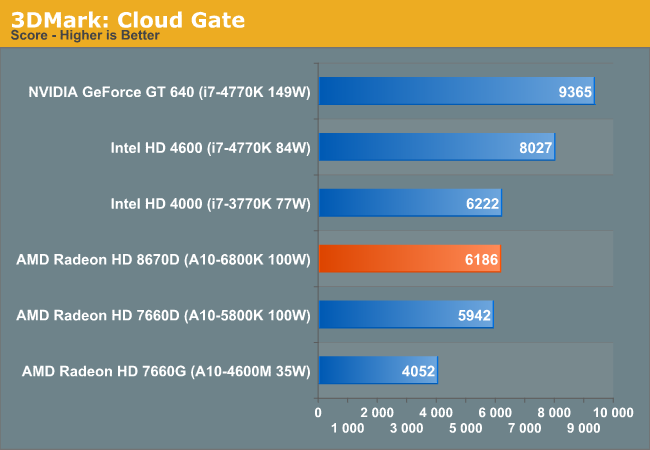

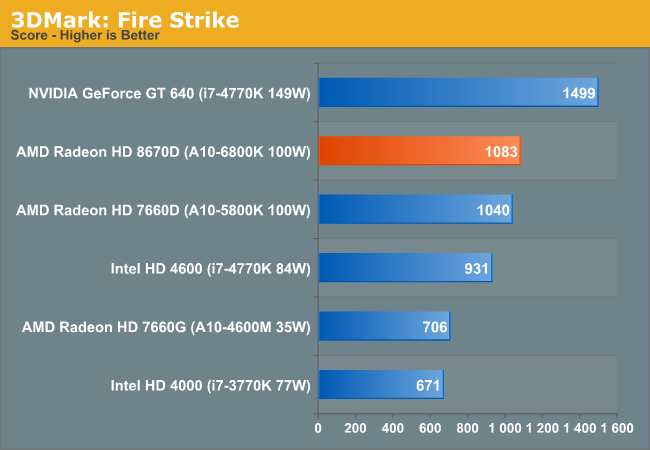

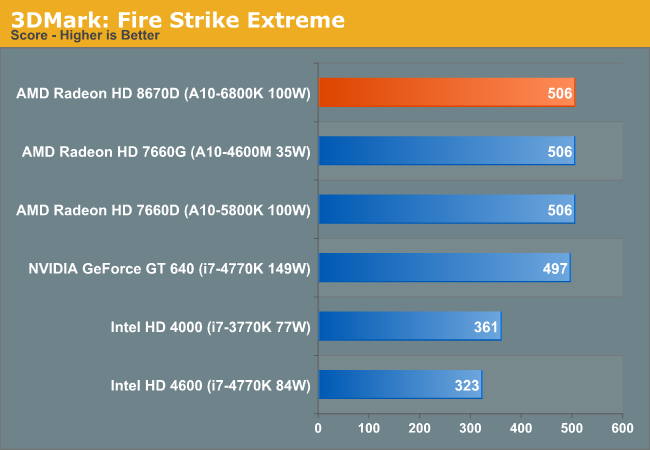

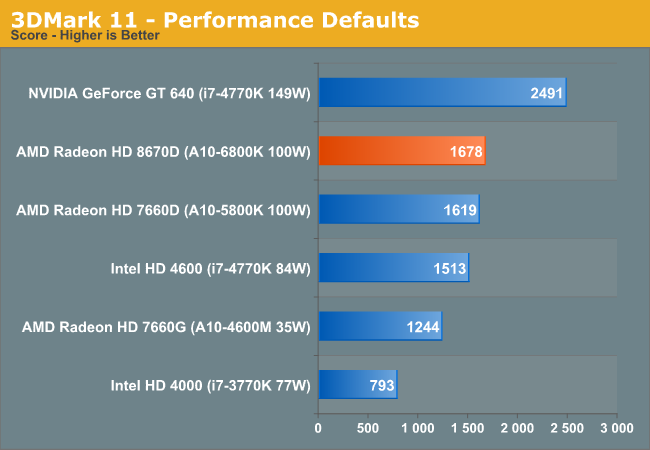

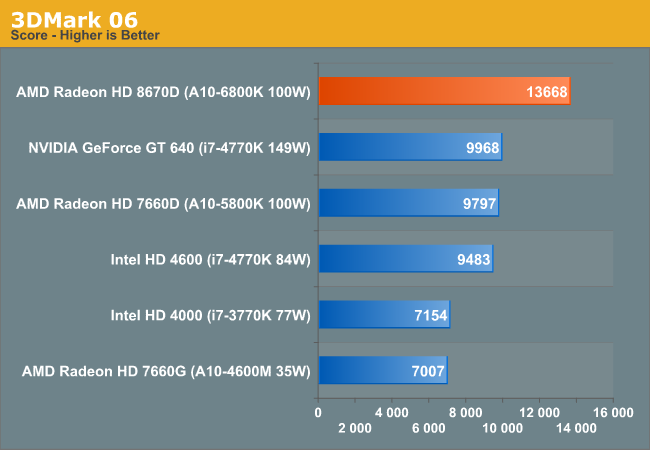

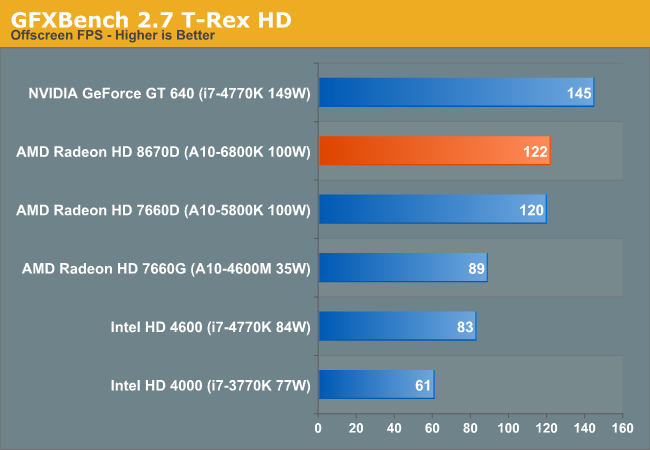

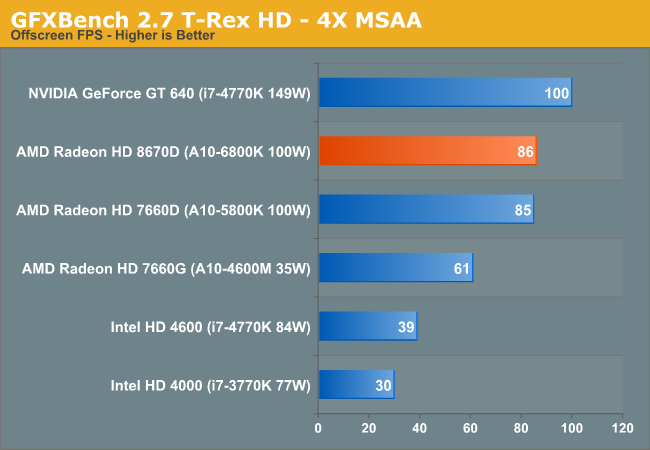

Although we don't draw any conclusions based on 3DMark and GFXBench, I ran these benchmarks on Richland as well since I had Trinity, Ivy Bridge and Haswell comparison points.

102 Comments


whatthehey - Thursday, June 6, 2013 - link

I can't imagine anyone really wanting minimum quality 1080p over Medium quality 1366x768. Where it makes a difference in performance, the cost in image quality is generally too great to be warranted. (E.g. in something like StarCraft II, the difference between Low and Medium is massive! At Low, SC2 basically looks like a high res version of the original StarCraft.) You can get a reasonable estimate of 1080p Medium performance by taking the 1366x768 scores and multiplying by 0.51 (there are nearly twice as many pixels at 1080p as at 1366x768). That should be the lower limit, so in some games it may only be 30-40% slower rather than 50% slower, but the only games likely to stay above 30 FPS at 1080p Medium are older titles, and perhaps Sleeping Dogs, Tomb Raider, and (if you're lucky) Bioshock Infinite. I'd be willing to wager that relative performance at 1080p Medium is within 10% of relative performance at 1366x768 Medium, though, so other than lowering the FPS numbers the additional testing wouldn't matter too much.

THF - Friday, June 7, 2013 - link

You're wrong. Most people who take Starcraft 2 seriously are actually playing on the highest resolution they can get, with the low detail setting. Sure, the game looks flashier at higher settings, but it's easier to play with less detail. All pros do it.

Also, as for myself, I like to have games running at the native resolution of the display. It makes "alt-tabbing" (or the equivalent thereof on Linux) much more responsive.
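The 0.51 scaling factor in whatthehey's comment above can be checked with a quick pixel-count calculation. This is only a sketch: it assumes frame rate scales inversely with the number of pixels rendered (the fully GPU-bound case the comment calls the lower limit), and the function names and the 60 FPS input are hypothetical, not figures from the review.

```python
def pixels(width: int, height: int) -> int:
    """Total number of pixels rendered per frame at a given resolution."""
    return width * height


def estimate_fps(fps_low_res: float,
                 low_res: tuple = (1366, 768),
                 high_res: tuple = (1920, 1080)) -> float:
    """Estimate frame rate at high_res from a measured frame rate at low_res.

    Assumption: performance is purely pixel-bound, so FPS scales with the
    ratio of pixel counts. Real games are often less sensitive than this,
    so treat the result as a pessimistic estimate.
    """
    scale = pixels(*low_res) / pixels(*high_res)
    return fps_low_res * scale


scale = pixels(1366, 768) / pixels(1920, 1080)
print(f"scale factor: {scale:.3f}")  # scale factor: 0.506

# Hypothetical example input: 60 FPS measured at 1366x768 Medium.
print(f"estimated 1080p FPS: {estimate_fps(60.0):.1f}")  # estimated 1080p FPS: 30.4
```

The ratio works out to roughly 0.506, which rounds to the 0.51 quoted in the comment.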

Calinou__ - Friday, June 7, 2013 - link

+1, "Low" in today's AAA games is far from ugly if you keep the texture detail to the maximum.

tential - Thursday, June 6, 2013 - link

This is my BIGGEST pet peeve with some reviewers who will test 1080p and only show those results when testing these types of chips. All of the frame rates will be unplayable, yet they'll try to draw "some conclusion" from the results. Test at resolutions where the contenders' minimum frame rate is around 20-25 fps so I can see how smooth it actually will be when I play.

I didn't purchase an IGP solution to play games at 10 FPS at 1080p. I purchased it to play at low resolution with OK frame rates.

zoxo - Thursday, June 6, 2013 - link

I always start by setting the game to my display's native res (1080p), and then find out at what settings I can achieve passable performance. I just hate non-native resolution too much :(

taltamir - Thursday, June 6, 2013 - link

because you render at a lower res and upscale with iGPUs

jamyryals - Thursday, June 6, 2013 - link

Love that die render on the first page. It's dumb, but I always like seeing those.

Homeles - Thursday, June 6, 2013 - link

It's not dumb. You can learn a lot about CPU design from them.

Bakes - Thursday, June 6, 2013 - link

Not that it really matters, but I think he's saying it's dumb that he always likes seeing those.

Gigaplex - Thursday, June 6, 2013 - link

Homeles' comment could be interpreted in a way that agrees with you and says it's not dumb to like seeing them because you can learn lots.