NVIDIA GeForce GTX 770 Review: The $400 Fight

by Ryan Smith on May 30, 2013 9:00 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GTX 770 ends up being an interesting case study in all 3 factors because NVIDIA is pushing the GK104 GPU so hard. Though the old version of GPU Boost muddles things some, there’s no denying that higher clockspeeds, coupled with the higher voltages needed to reach those clockspeeds, have a notable impact on power consumption. This makes it very hard for NVIDIA to stick to their efficiency curve, since raising voltages and clockspeeds offers diminishing returns for the increase in power consumption.

| GeForce GTX 770 Voltages | Voltage |
|---|---|
| GTX 770 Max Boost | 1.2V |
| GTX 680 Max Boost | 1.175V |
| GTX 770 Idle | 0.862V |

As we can see, NVIDIA has pushed the voltage up from 1.175V on GTX 680 to 1.2V on GTX 770. This buys them the increased clockspeeds they need, but it will also drive up power consumption. At the same time, GPU Boost 2.0 helps to counter this somewhat: by holding GPU temperatures at or below 80C, it keeps leakage from becoming overwhelming.
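As a rough illustration of why extra voltage is expensive, the sketch below uses the common first-order CMOS approximation that dynamic power scales as C·V²·f. It is a simplification under stated assumptions: capacitance is treated as identical since both cards use GK104, clockspeed is held constant, and leakage (which the 80C cap is meant to contain) is ignored entirely. The function name is our own illustration, not part of any tool.

```python
def relative_dynamic_power(v_new, v_old, f_new=1.0, f_old=1.0):
    """Ratio of dynamic power under the first-order model P ~ C * V^2 * f.

    Capacitance C is assumed identical (same GK104 die), so it cancels out.
    Leakage power is NOT modeled.
    """
    return (v_new / v_old) ** 2 * (f_new / f_old)


# Max boost voltages from the voltage table (volts)
GTX_770_MAX_BOOST_V = 1.2
GTX_680_MAX_BOOST_V = 1.175

# Clocks left at their defaults (equal), isolating the effect of voltage alone
ratio = relative_dynamic_power(GTX_770_MAX_BOOST_V, GTX_680_MAX_BOOST_V)
print(f"Voltage alone: {100 * (ratio - 1):.1f}% more dynamic power at equal clocks")
```

Under this model a roughly 2.1% voltage increase costs about 4.3% more dynamic power before any clockspeed gain is counted, which is the diminishing-returns effect described earlier.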

| GeForce GTX 770 Average Clockspeeds | Clockspeed |
|---|---|
| Max Boost Clock | 1136MHz |
| DiRT:S | 1136MHz |
| Shogun 2 | 1136MHz |
| Hitman | 1136MHz |
| Sleeping Dogs | 1102MHz |
| Crysis | 1136MHz |
| Far Cry 3 | 1136MHz |
| Battlefield 3 | 1136MHz |
| Civilization V | 1136MHz |
| Bioshock Infinite | 1128MHz |
| Crysis 3 | 1136MHz |

Speaking of clockspeeds, we also recorded GTX 770’s average clockspeeds in our games. In short, GTX 770 is almost always at its maximum boost bin of 1136MHz; the oversized Titan cooler keeps temperatures just below the thermal throttle point, and there’s enough TDP headroom left that the card doesn’t need to pull back to stay under its power limit either. This is one of the reasons why GTX 770’s performance advantage over GTX 680 is greater than the clockspeed increases alone would suggest.
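For readers who want a single figure, the short sketch below averages the per-game clockspeeds transcribed from the average clockspeed table. It only summarizes those ten results; the dictionary layout and variable names are illustrative.

```python
# Average clockspeeds (MHz) per game, transcribed from the clockspeed table
avg_clocks_mhz = {
    "DiRT:S": 1136,
    "Shogun 2": 1136,
    "Hitman": 1136,
    "Sleeping Dogs": 1102,
    "Crysis": 1136,
    "Far Cry 3": 1136,
    "Battlefield 3": 1136,
    "Civilization V": 1136,
    "Bioshock Infinite": 1128,
    "Crysis 3": 1136,
}
MAX_BOOST_MHZ = 1136

# Simple arithmetic mean across games, plus how many sat at the top boost bin
mean_clock = sum(avg_clocks_mhz.values()) / len(avg_clocks_mhz)
at_max = [game for game, mhz in avg_clocks_mhz.items() if mhz == MAX_BOOST_MHZ]

print(f"Mean across games: {mean_clock:.1f}MHz "
      f"({100 * mean_clock / MAX_BOOST_MHZ:.1f}% of max boost)")
print(f"Games at max boost: {len(at_max)} of {len(avg_clocks_mhz)}")
```

The mean works out to 1131.8MHz, or about 99.6% of the 1136MHz max boost clock, with 8 of the 10 games pinned at the top bin.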

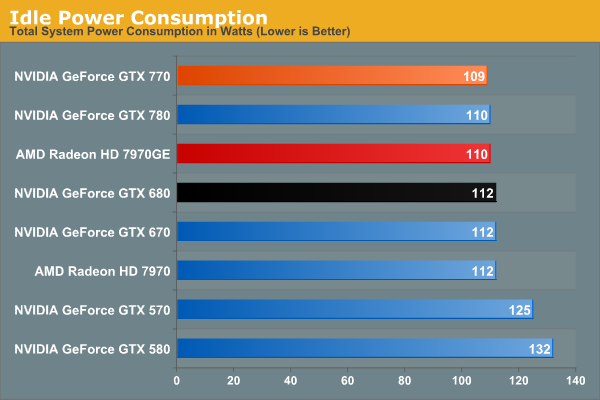

We don’t normally publish this data, but GTX 770 has a particularly interesting attribute: its idle clockspeed is lower than that of other Kepler parts. GTX 680 and GTX 780 both idle at 324MHz, but GTX 770 idles at 135MHz. Even 324MHz has proven low enough to keep Kepler’s idle power in check in the past, so it’s not entirely clear just what NVIDIA is expecting to gain here. We’re seeing 1W less at the wall, but at this level the rest of our testbed is drowning out the video card.

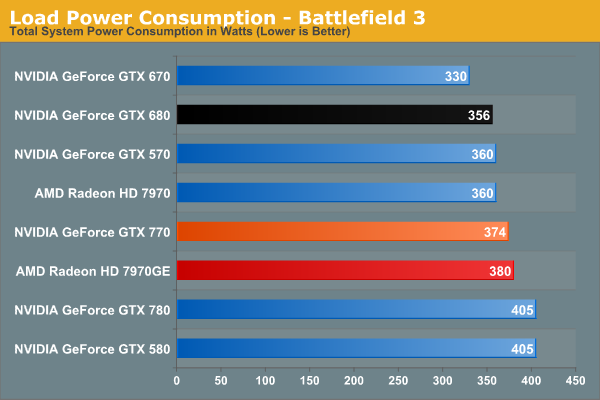

Moving on to BF3 power consumption, we can see the power cost of GTX 770’s performance. 374W at the wall is only 18W more than GTX 680, thanks in part to the fact that GTX 770 isn’t hitting its TDP limit here. At the same time, compared to the outgoing GTX 670, this is a 44W difference. This makes it very clear that GTX 770 is not a drop-in replacement for GTX 670 as far as power and cooling go. On the other hand, GTX 770 and GTX 570 are very close, even if GTX 770’s TDP is technically a bit higher than GTX 570’s.

Despite this runup, GTX 770 still stays a hair under 7970GE, even with the slightly higher CPU power consumption that comes from GTX 770’s higher performance in this benchmark. The gap is only 6W at the wall, but it shows that NVIDIA didn’t have to completely blow their efficiency curve to get a GK104 card back up to 7970GE performance levels.
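Since the BF3 results are mostly given as deltas against GTX 770, a quick sketch can turn them back into approximate whole-system readings. These are derived from the stated differences rather than read off the chart, so treat them as approximations; note too that they are total wall power, including CPU and platform.

```python
GTX_770_BF3_WALL_W = 374  # measured total system power at the wall under BF3

# Deltas relative to GTX 770 as stated in the text (positive = draws more)
deltas_w = {
    "GTX 680": -18,
    "GTX 670": -44,
    "7970GE": +6,
}

# Reconstruct each card's approximate wall reading from its delta
implied = {card: GTX_770_BF3_WALL_W + d for card, d in deltas_w.items()}
for card, watts in sorted(implied.items(), key=lambda kv: kv[1]):
    print(f"{card}: ~{watts}W at the wall")
```

This yields roughly 330W for GTX 670, 356W for GTX 680, and 380W for 7970GE.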

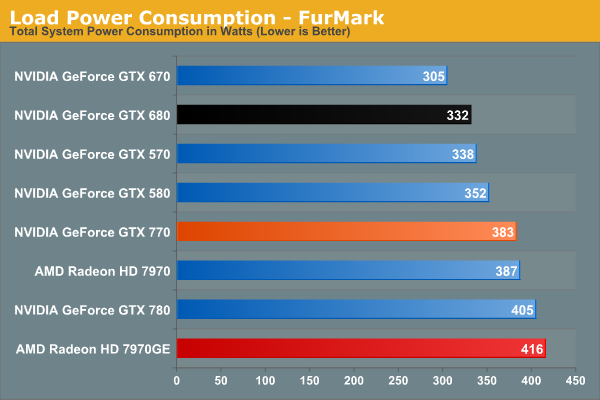

In our TDP-constrained scenario we can see the gaps between our cards grow. 78W separates the GTX 770 from GTX 670, and even GTX 680 draws 41W less, almost exactly what we’d expect from their published TDPs. On the flip side of the coin, 383W is still less than both 7970 cards, reflecting the fact that GTX 770 is geared for 230W while AMD’s best is geared for 250W.
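To sanity-check the claim that the 41W gap to GTX 680 matches the published TDPs, the sketch below converts a TDP difference into an expected wall-power difference. It rests on two assumptions: that both cards sit at their TDP in this test, and a hypothetical PSU efficiency of 85%, since the testbed’s actual efficiency at this load is not given here. The GTX 680 figure is NVIDIA’s published 195W TDP.

```python
def expected_wall_delta(tdp_a_w, tdp_b_w, psu_efficiency):
    """Estimate the wall-power gap between two cards that both run at their TDP.

    The extra DC load is drawn through the PSU, so the AC (wall) difference is
    roughly the DC difference divided by the PSU's efficiency. CPU and platform
    power changes between runs are ignored.
    """
    return (tdp_a_w - tdp_b_w) / psu_efficiency


GTX_770_TDP_W = 230
GTX_680_TDP_W = 195             # NVIDIA's published TDP for GTX 680
ASSUMED_PSU_EFFICIENCY = 0.85   # hypothetical; not a measured testbed value

estimate = expected_wall_delta(GTX_770_TDP_W, GTX_680_TDP_W, ASSUMED_PSU_EFFICIENCY)
print(f"Expected gap: ~{estimate:.0f}W; measured in the TDP-constrained test: 41W")
```

With those assumptions the 35W TDP gap becomes roughly 41W at the wall; a different efficiency figure would shift the estimate by a few watts.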

This is also a reminder, however, that for a mid-generation product, extra performance does not come for free. With the same process and the same architecture, performance increases require power increases. This won’t significantly change until we see 20nm cards next year.

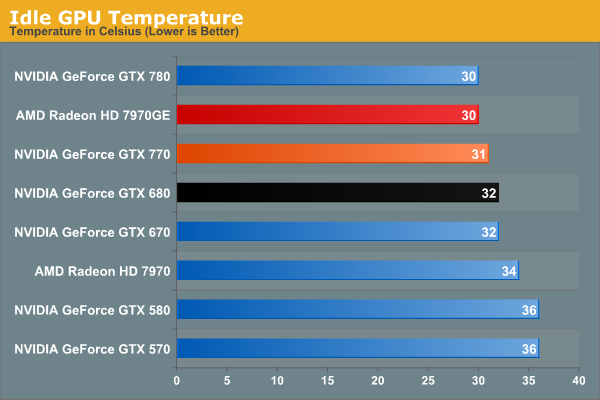

Moving on to temperatures, these are going to be a walk in the park for the reference GTX 770 thanks to the Titan cooler. At idle we see it hit 31C, which is actually 1C warmer than GTX 780, but this really just comes down to uncontrollable variations in our tests.

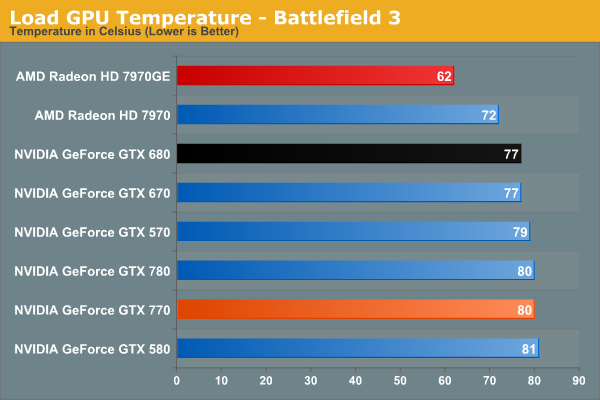

As a GPU Boost 2.0 card, GTX 770 will top out at 80C in games, and that’s exactly what happens here. Interestingly, GTX 770 is only just reaching 80C, as evidenced by the near-maximum clockspeeds we recorded earlier. If it were running hotter, it would have needed to drop to lower clockspeeds.

Of course it doesn’t hold a candle here to 7970GE, but that’s the difference between a blower and an open air cooler in action. The blower-based 7970 is much closer, as we’d expect.

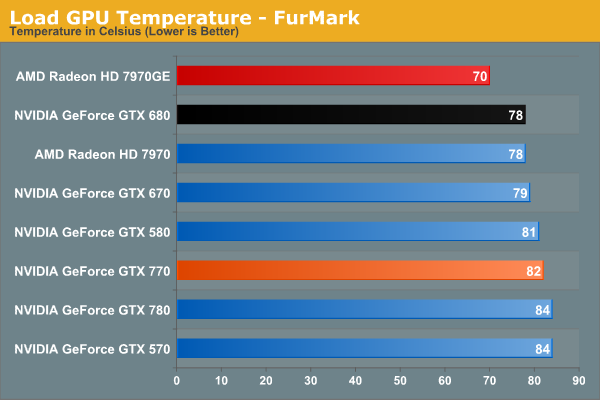

Under FurMark the temperature situation is largely the same. The GTX 770 comes up to 82C here (favoring TDP throttling over temperature throttling), but the relative rankings are consistent.
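GPU Boost 2.0’s actual control logic is NVIDIA’s own and isn’t documented here, but the behavior described above (hold the top bin until the 80C temperature target or the power target is hit, then step down) can be illustrated with a toy governor. Everything in this sketch is hypothetical: the 13MHz bin size, the 1000MHz floor, and treating the 230W TDP as the power target are assumptions chosen for illustration, and the sketch does not model how NVIDIA prioritizes one limit over the other.

```python
def next_clock(clock_mhz, temp_c, power_w, *, max_boost_mhz=1136, floor_mhz=1000,
               bin_mhz=13, temp_target_c=80, power_target_w=230):
    """Return the clock for the next control step of a toy boost governor.

    Hypothetical model, not NVIDIA's algorithm: if either the temperature
    target or the power target is exceeded, drop one bin (never below the
    floor); otherwise climb one bin, capped at the max boost clock.
    """
    over_temp = temp_c > temp_target_c
    over_power = power_w > power_target_w
    if over_temp or over_power:
        return max(floor_mhz, clock_mhz - bin_mhz)
    return min(max_boost_mhz, clock_mhz + bin_mhz)


# Simulate a few control steps under a sustained, power-limited load
clock = 1136
for step in range(3):
    clock = next_clock(clock, temp_c=79, power_w=240)
    print(f"step {step}: {clock}MHz")
```

Under a load that stays within both targets (at or below 80C), the toy governor holds 1136MHz; under a FurMark-like load that exceeds the power target, it steps down one bin at a time, loosely mirroring the TDP throttling seen above.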

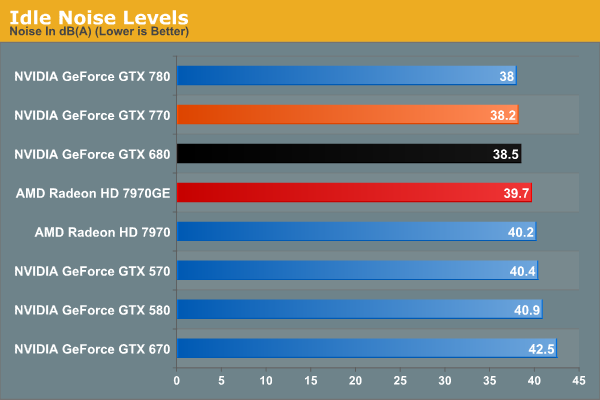

With Titan’s cooler in tow, idle noise looks very good on GTX 770.

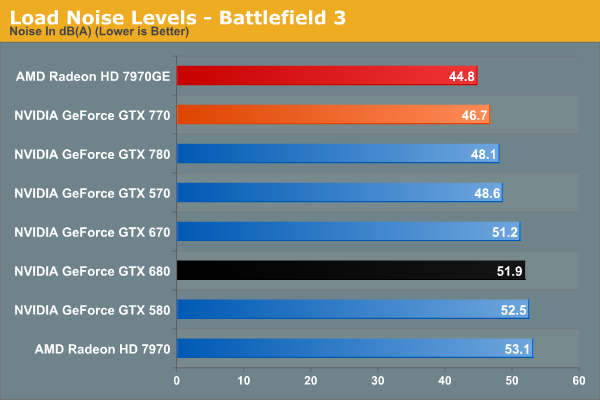

Our noise results under Battlefield 3 are a big part of the reason we’ve been calling the Titan cooler oversized for GTX 770. When was the last time we saw a blower on a 230W card that only hit 46.7dB? The short answer is never. GTX 770’s fan simply doesn’t have to rev up very much to handle the lesser heat output. In fact it’s damn near competitive with the open air cooled 7970GE; there’s still a difference, but it’s under 2dB. More importantly, despite being a more powerful and more power-hungry card than the GTX 680, the GTX 770 is over 5dB quieter, and this is despite the fact that the GTX 680 is already a solid card on its own. Titan’s cooler is certainly expensive, but it gets results.
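Because decibels are logarithmic, a 5dB gap is bigger than the number suggests. The sketch below converts a dB difference into a ratio of sound power (10^(ΔdB/10)) and into an approximate perceived-loudness ratio using the common rule of thumb that perceived loudness roughly doubles every 10dB; the latter is a heuristic, not a precise psychoacoustic model, and the function names are our own.

```python
def sound_power_ratio(db_difference):
    """Convert a decibel difference into a ratio of sound power: 10^(dB/10)."""
    return 10 ** (db_difference / 10)


def perceived_loudness_ratio(db_difference):
    """Rule-of-thumb perceived loudness ratio: roughly doubles every +10dB."""
    return 2 ** (db_difference / 10)


# Gaps mentioned in the BF3 results: under 2dB vs 7970GE, over 5dB vs GTX 680
for gap_db in (2, 5):
    print(f"{gap_db}dB: {sound_power_ratio(gap_db):.2f}x sound power, "
          f"~{perceived_loudness_ratio(gap_db):.2f}x perceived loudness")
```

By this measure, GTX 770’s advantage of more than 5dB over GTX 680 under BF3 corresponds to roughly a third of the sound power, and to something like 1.4x lower perceived loudness.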

Of course this is why it’s all the more a shame that none of NVIDIA’s partners are releasing retail cards with this cooler. There are some blowers in the pipeline, so it will be interesting to see if they can maintain Titan’s performance while giving up the metal.

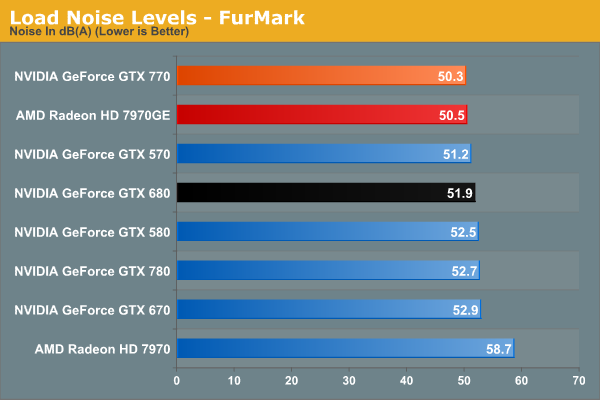

With FurMark pushing our GTX 770 at full TDP, our noise results are still good, but not as impressive as they were under BF3. 50.3dB is still over a dB quieter than GTX 680, though obviously much closer than before. On the other hand, the GTX 770 ever so slightly edges out the 7970GE and its open air cooler. Part of this comes down to the TDP difference of course, but beating an open air cooler like that is still quite the feat.

Wrapping things up here, it will be interesting to see where NVIDIA’s partners go with their custom designs. GTX 770, despite being a higher TDP part than both GTX 670 and GTX 680, ends up looking very impressive when it comes to noise, and it would be great to see NVIDIA’s partners match that. At the same time, the increased power consumption and heat generation relative to the GeForce 600 series is unfortunate, but not unexpected. But for buyers coming from the GeForce 400 and GeForce 500 series, GTX 770 is in line with what those previous generation cards were already pulling.

117 Comments


raghu78 - Thursday, May 30, 2013

what most of reviews. across a wide range of games and you will see these two cards are tied.

http://www.hardwarecanucks.com/forum/hardware-canu...

http://www.computerbase.de/artikel/grafikkarten/20...

http://www.pcgameshardware.de/Geforce-GTX-770-Graf...

http://www.hardware.fr/articles/896-22/recapitulat...

bitstorm - Thursday, May 30, 2013

It seems to match up with other reviews I have seen. Maybe you are looking at ones that are not using the reference card? The non-reference reviews show it doing a bit better.

Still, even with the better results of the non-reference cards, it is a bit of a disappointing release from Nvidia IMO. While it is good that it will likely cause AMD to drop the price of the 7970 GE, it won't light a fire under AMD to make an impressive jump on their next lineup refresh.

Brainling - Thursday, May 30, 2013

And if you look at any AMD review, you'll see fanbois jumping out of the woodwork to accuse Anand and crew of being Nvidia homers. You can't win for losing I guess.

kallogan - Thursday, May 30, 2013

barely beats 680 at higher power consumption. Turbo boost is useless. Useless gpu. Next.

gobaers - Thursday, May 30, 2013

There are no bad products, only bad prices. If you want to think of this as a 680 with a price cut and modest bump, where is the harm in that?

EJS1980 - Thursday, May 30, 2013

Exactly!

B3an - Thursday, May 30, 2013

I'm glad you mentioned the 2GB VRAM issue, Ryan, because it WILL be a problem soon.

In the comments for the 780 review i was saying that even 3GB VRAM will probably not be enough for the next 18 months to 2 years, at least for people who game at 2560x1600 and higher (maybe even 1080p with enough AA). As usual many short-sighted idiots didn't agree, when it should be amazingly obvious there's going to be a big VRAM usage jump when these new consoles arrive and their games start getting ported to PC. They will easily be going over 2GB.

I definitely wouldn't buy the 770 with 2GB. It's not enough and i've had problems with high-end cards running out of VRAM in the past when the 360/PS3 launched. It will happen again with 2GB cards. And it's really not a nice experience when it happens (single digit FPS) and totally unacceptable for hardware this expensive.

TheinsanegamerN - Monday, July 29, 2013

people have been saying that for a long time. i heard the same thing when i bought my 550 ti's. and, 2 years later... only battlefield 3 pushed past the 1 GB frame buffer at 1080p, and that was on unplayable settings (everything maxed out). now, if I lower the settings to maintain at least 30fps, no problems. 700 MB usage max, maybe 750 on a huge map. now, at 1440p, i can see this being a problem for 2 gb, but i think 3gb will be just fine for a long time.

just4U - Thursday, May 30, 2013

I don't quite understand why Nvidia's partners wouldn't go with the reference design of the 770. I've been keenly interested in those nice high quality coolers and hoping they'd make their way into the $400 parts. It's a great selling point (I think) and disappointing to know that they won't be using them.

chizow - Thursday, May 30, 2013

I agree, it feels like false advertising or bait and switch given GPU Boost 2.0 relies greatly on operating temps and throttling once you hit 80C.

Seems a bit irresponsible for Nvidia to send out cards like this and for reviewers to subsequently review and publish the results.