NVIDIA GeForce GTX 770 Review: The $400 Fight

by Ryan Smith on May 30, 2013 9:00 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GTX 770 ends up being an interesting case study in all three factors because NVIDIA is pushing the GK104 GPU so hard. Though the old version of GPU Boost muddles things some, there's no denying that higher clockspeeds, coupled with the higher voltages needed to reach them, have a notable impact on power consumption. This makes it very hard for NVIDIA to stick to their efficiency curve, since adding voltage and clockspeed yields diminishing returns relative to the increase in power consumption.

GeForce GTX 770 Voltages

| GTX 770 Max Boost | GTX 680 Max Boost | GTX 770 Idle |
|---|---|---|
| 1.2v | 1.175v | 0.862v |

As we can see, NVIDIA has pushed up their voltage from 1.175v on GTX 680 to 1.2v on GTX 770. This buys them the increased clockspeeds they need, but it will drive up power consumption. At the same time GPU Boost 2.0 helps to counter this some, as it will keep leakage from being overwhelming by keeping GPU temperatures at or below 80C.
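To put the voltage bump in rough perspective, the usual first-order CMOS approximation says dynamic power scales with voltage squared times clockspeed (P ∝ V²·f). The sketch below applies only the voltage term at equal clocks; the formula is a textbook assumption rather than anything measured here, and it ignores leakage, which is exactly what GPU Boost 2.0's temperature target helps keep in check.

```python
def dynamic_power_ratio(v_new: float, v_old: float,
                        f_new: float = 1.0, f_old: float = 1.0) -> float:
    """First-order CMOS estimate: dynamic power scales with V^2 * f.

    Leakage and any capacitance differences are ignored (assumption).
    """
    return (v_new / v_old) ** 2 * (f_new / f_old)


# Max boost voltages from the table: GTX 770 at 1.2v vs. GTX 680 at 1.175v.
ratio = dynamic_power_ratio(1.2, 1.175)
print(f"Voltage alone: ~{(ratio - 1) * 100:.1f}% more dynamic power at equal clocks")
```

By this estimate the voltage increase alone costs a bit over 4% in dynamic power before any clockspeed increase is counted.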

GeForce GTX 770 Average Clockspeeds (Max Boost Clock: 1136MHz)

| Game | Average Clockspeed |
|---|---|
| DiRT:S | 1136MHz |
| Shogun 2 | 1136MHz |
| Hitman | 1136MHz |
| Sleeping Dogs | 1102MHz |
| Crysis | 1136MHz |
| Far Cry 3 | 1136MHz |
| Battlefield 3 | 1136MHz |
| Civilization V | 1136MHz |
| Bioshock Infinite | 1128MHz |
| Crysis 3 | 1136MHz |

Speaking of clockspeeds, we also recorded the average clockspeeds for GTX 770 in our games. In short, GTX 770 is almost always at its maximum boost bin of 1136MHz; the oversized Titan cooler keeps temperatures just below the thermal throttle point, and there's enough TDP headroom left that the card doesn't need to pull back to stay within its power limit. This is one of the reasons why GTX 770's performance advantage over GTX 680 is greater than the clockspeed increases alone would suggest.
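As a quick check on "almost always," the table can be reduced to a single number. This is a simple unweighted mean that treats every game equally, which is our own simplification for illustration and not how the review weights results.

```python
# Average clockspeeds per game, copied from the table above (MHz).
avg_clocks = {
    "DiRT:S": 1136,
    "Shogun 2": 1136,
    "Hitman": 1136,
    "Sleeping Dogs": 1102,
    "Crysis": 1136,
    "Far Cry 3": 1136,
    "Battlefield 3": 1136,
    "Civilization V": 1136,
    "Bioshock Infinite": 1128,
    "Crysis 3": 1136,
}
MAX_BOOST_MHZ = 1136

# Unweighted mean across games, plus the games that never left the top bin.
mean_clock = sum(avg_clocks.values()) / len(avg_clocks)
at_max = [game for game, mhz in avg_clocks.items() if mhz == MAX_BOOST_MHZ]

print(f"Mean across games: {mean_clock:.1f}MHz ({mean_clock / MAX_BOOST_MHZ:.1%} of max boost)")
print(f"Games pinned at max boost: {len(at_max)} of {len(avg_clocks)}")
```

Eight of the ten games sit at the top bin, and the mean lands within about half a percent of max boost.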

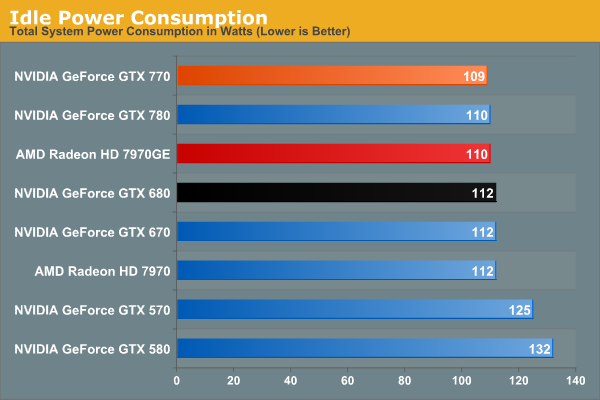

We don't normally publish this data, but GTX 770 has one particularly interesting attribute: its idle clockspeed is lower than that of other Kepler parts. GTX 680 and GTX 780 both idle at 324MHz, but GTX 770 idles at 135MHz. Even 324MHz has proven low enough to keep Kepler's idle power in check in the past, so it's not entirely clear just what NVIDIA expects to gain here. We're seeing 1W less at the wall, but at this point the rest of our testbed is drowning out the video card.

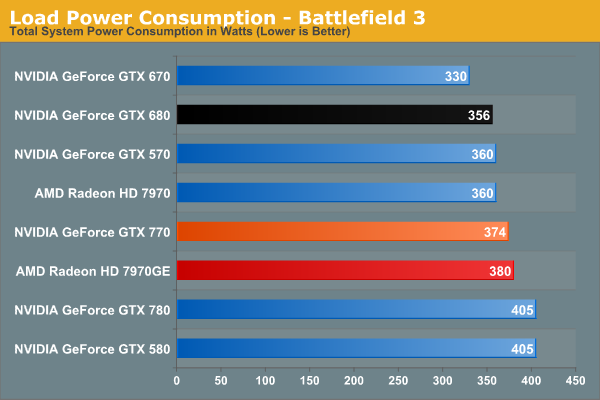

Moving on to BF3 power consumption, we can see the power cost of GTX 770’s performance. 374W at the wall is only 18W more than GTX 680, thanks in part to the fact that GTX 770 isn’t hitting its TDP limit here. At the same time compared to the outgoing GTX 670, this is a 44W difference. This makes it very clear that GTX 770 is not a drop-in replacement for GTX 670 as far as power and cooling go. On the other hand GTX 770 and GTX 570 are very close, even if GTX 770’s TDP is technically a bit higher than GTX 570’s.

Even with this increase, GTX 770 still draws a hair less than the 7970GE, in spite of the slightly higher CPU power consumption that comes from GTX 770's higher performance in this benchmark. The gap is only 6W at the wall, but it shows that NVIDIA didn't have to completely blow their efficiency curve to get a GK104 card back up to 7970GE performance levels.
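The BF3 deltas quoted above can be turned back into absolute wall readings for the other cards. This is plain arithmetic on the numbers in the text (GTX 770 at 374W, GTX 680 18W lower, GTX 670 44W lower, 7970GE 6W higher), so the results are implied by those deltas rather than read directly off the chart.

```python
GTX_770_BF3_WALL_W = 374

# Differences relative to GTX 770, as stated in the text (negative = card draws less).
deltas_w = {"GTX 680": -18, "GTX 670": -44, "7970GE": +6}

# Implied total system power at the wall for each comparison card.
implied_wall_w = {card: GTX_770_BF3_WALL_W + d for card, d in deltas_w.items()}
for card, watts in implied_wall_w.items():
    print(f"{card}: {watts}W at the wall")
```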

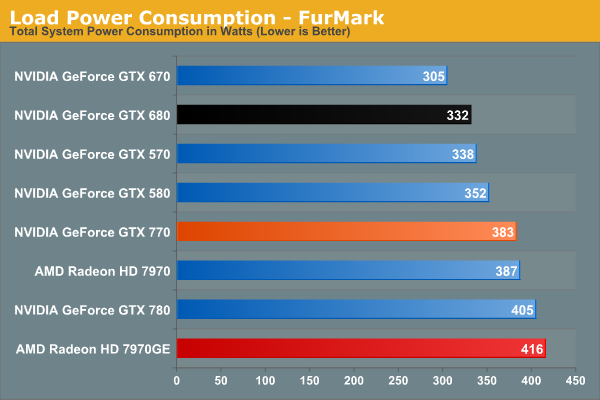

In our TDP constrained scenario we can see the gaps between our cards grow. 78W separates the GTX 770 from GTX 670, and even GTX 680 draws 41W less, almost exactly what we’d expect from their published TDPs. On the flip side of the coin 383W is still less than both 7970 cards, reflecting the fact that GTX 770 is geared for 230W while AMD’s best is geared for 250W.

This is also a reminder, however, that for a mid-generation product extra performance does not come for free. With the same process and the same architecture, performance increases require power increases. This won't significantly change until we see 20nm cards next year.
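Why would the 35W TDP gap between GTX 770 (230W) and GTX 680 (NVIDIA's published 195W) show up as roughly 41W in the FurMark numbers? Wall measurements include power supply losses, so a DC-side difference is inflated by 1/efficiency. The sketch below assumes a flat 85% PSU efficiency, which is a hypothetical figure for illustration, not something measured on our testbed.

```python
def wall_delta(tdp_a_w: float, tdp_b_w: float, psu_efficiency: float) -> float:
    """Estimate the wall-power gap implied by a DC-side TDP gap.

    Assumes both cards run at their TDP and the PSU efficiency is the same
    at both load points (a simplification).
    """
    if not 0 < psu_efficiency <= 1:
        raise ValueError("psu_efficiency must be in (0, 1]")
    return (tdp_a_w - tdp_b_w) / psu_efficiency


ASSUMED_PSU_EFFICIENCY = 0.85  # hypothetical; not measured on our testbed
estimate = wall_delta(230, 195, ASSUMED_PSU_EFFICIENCY)  # GTX 770 vs. GTX 680
print(f"Estimated gap: ~{estimate:.0f}W (measured under FurMark: 41W)")
```

The match depends heavily on the efficiency assumption; a different PSU or load point would shift the estimate by a few watts.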

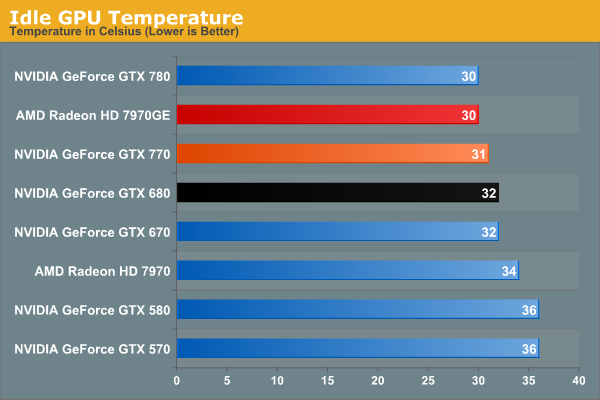

Moving on to temperatures, these are going to be a walk in the park for the reference GTX 770 thanks to the Titan cooler. At idle we see it hit 31C, which is actually 1C warmer than GTX 780, but this really just comes down to uncontrollable variations in our tests.

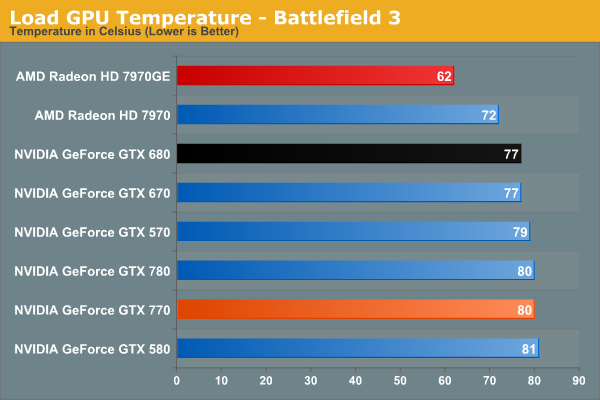

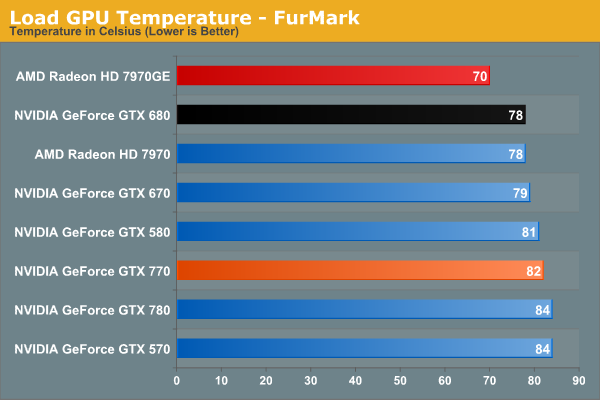

As a GPU Boost 2.0 card, GTX 770 will top out at 80C in games, and that's exactly what happens here. Interestingly, GTX 770 is only just reaching 80C, as evidenced by the clockspeeds we recorded earlier; if it were running any hotter, it would have needed to drop to lower clockspeeds.

Of course it doesn’t hold a candle here to 7970GE, but that’s the difference between a blower and an open air cooler in action. The blower based 7970 is much closer, as we’d expect.

Under FurMark the temperature situation is largely the same. The GTX 770 comes up to 82C here (favoring TDP throttling over temperature throttling), but the relative rankings are consistent.
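The behavior described above, holding the top boost bin until either the 80C temperature target or the TDP limit is hit and then stepping down, can be illustrated with a toy controller. This is a deliberately simplified, hypothetical model and not NVIDIA's GPU Boost 2.0 algorithm: the one-bin-per-step policy, the 13MHz bin size, and the `next_clock` function are our own assumptions.

```python
# Hypothetical toy model for illustration; NOT NVIDIA's actual GPU Boost 2.0 logic.
MAX_BOOST_MHZ = 1136   # GTX 770 max boost bin observed in our testing
BASE_CLOCK_MHZ = 1046  # GTX 770 rated base clock, used here as the floor
BIN_MHZ = 13           # assumed step size per boost bin
TEMP_TARGET_C = 80     # GPU Boost 2.0 temperature target
TDP_W = 230            # GTX 770 TDP


def next_clock(clock_mhz: int, temp_c: float, power_w: float) -> int:
    """Advance the toy controller by one step.

    Drops one bin if the GPU is over its temperature target or TDP,
    otherwise climbs one bin; the result is clamped to the base/max range.
    """
    if temp_c > TEMP_TARGET_C or power_w > TDP_W:
        clock_mhz -= BIN_MHZ
    else:
        clock_mhz += BIN_MHZ
    return max(BASE_CLOCK_MHZ, min(MAX_BOOST_MHZ, clock_mhz))


print(next_clock(1136, 79.0, 200.0))  # under both limits: stays at 1136
print(next_clock(1136, 81.0, 200.0))  # over the temperature target: 1123
print(next_clock(1136, 78.0, 240.0))  # over TDP (FurMark-style): 1123
```

Because our table reports averages over a whole run, values such as Sleeping Dogs' 1102MHz can land between bins even in a model like this one.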

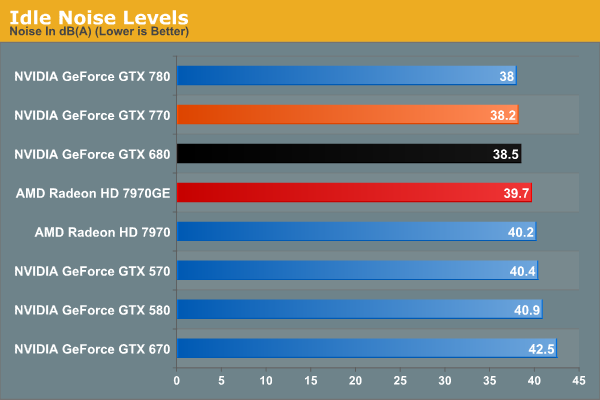

With Titan’s cooler in tow, idle noise looks very good on GTX 770.

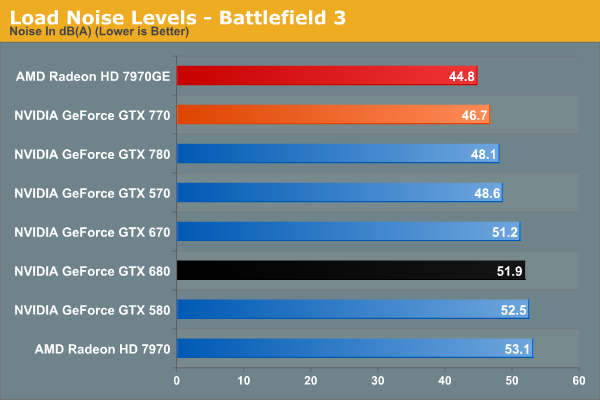

Our noise results under Battlefield 3 are a big part of the reason we've been calling the Titan cooler oversized for GTX 770. When was the last time we saw a blower on a 230W card that only hit 46.7dB? The short answer is never. GTX 770's fan simply doesn't have to rev up very much to handle its lower heat output relative to Titan. In fact it's damn near competitive with the open air cooled 7970GE; there's still a difference, but it's under 2dB. More importantly, however, despite being a more powerful and more power-hungry card than the GTX 680, the GTX 770 is over 5dB quieter, and this is despite the fact that the GTX 680 is already a solid card on its own. Titan's cooler is certainly expensive, but it gets results.

Of course this is why it’s all the more a shame that none of NVIDIA’s partners are releasing retail cards with this cooler. There are some blowers in the pipeline, so it will be interesting to see if they can maintain Titan’s performance while giving up the metal.

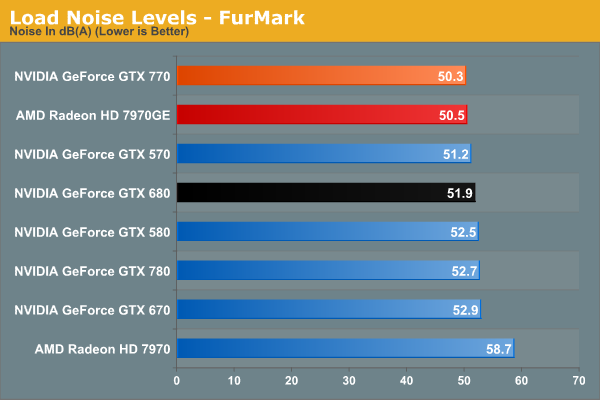

With FurMark pushing our GTX 770 at full TDP, our noise results are still good, but not as impressive as they were under BF3. 50.3dB is still over a dB quieter than GTX 680, though obviously much closer than before. On the other hand the GTX 770 ever so slightly edges out the 7970GE and its open air cooler. Part of this comes down to the TDP difference of course, but beating an open air cooler like that is still quite the feat.
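Decibels are logarithmic, so the gaps quoted in these noise results are larger than they look. A level difference of ΔdB corresponds to a sound power ratio of 10^(ΔdB/10), and a common psychoacoustic rule of thumb holds that perceived loudness roughly doubles every 10dB, i.e. 2^(ΔdB/10). The sketch applies both to the gaps in the text; the loudness rule is an approximation, and treating "over 5dB" or "under 2dB" as exact values is our simplification.

```python
def sound_power_ratio(delta_db: float) -> float:
    """Acoustic power ratio implied by a level difference in dB."""
    return 10 ** (delta_db / 10)


def perceived_loudness_ratio(delta_db: float) -> float:
    """Rule of thumb: perceived loudness roughly doubles every 10dB."""
    return 2 ** (delta_db / 10)


# Level gaps quoted in the text; the stated bounds are used as point values.
gaps_db = {
    "GTX 680 vs. GTX 770, BF3 (over 5dB)": 5.0,
    "GTX 770 vs. 7970GE, BF3 (under 2dB)": 2.0,
    "GTX 680 vs. GTX 770, FurMark (over 1dB)": 1.0,
}
for label, gap in gaps_db.items():
    print(f"{label}: {sound_power_ratio(gap):.2f}x the sound power, "
          f"~{perceived_loudness_ratio(gap):.2f}x as loud")
```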

Wrapping things up here, it will be interesting to see where NVIDIA's partners go with their custom designs. GTX 770, despite being a higher TDP part than both GTX 670 and GTX 680, ends up looking very impressive when it comes to noise, and it would be great to see NVIDIA's partners match that. At the same time the increased power consumption and heat generation relative to the GeForce 600 series is unfortunate, but not unexpected. But for buyers coming from the GeForce 400 and GeForce 500 series, GTX 770 is in line with what those previous generation cards were already pulling.

117 Comments


Enkur - Thursday, May 30, 2013

Why is there a picture of Xbox One in the article when it's mentioned nowhere?

Razorbak86 - Thursday, May 30, 2013

The 2GB Question & The Test

"The wildcard in all of this will be the next-generation consoles, each of which packs 8GB of RAM, which is quite a lot of RAM for video operations even after everything else is accounted for. With most PC games being ports of console games, there's a decent risk of 2GB cards being undersized when used with high resolutions and the highest quality art assets. The worst case scenario is only that these highest quality assets may not be usable at playable performance, but considering the high performance of every other aspect of GTX 770 that would be a distinct and unfortunate bottleneck."

kilkennycat - Thursday, May 30, 2013

NONE of the release offerings (May 30) of the GTX 770 on Newegg have the Titan cooler!!!! Regardless of the pictures in this article and on the GTX 7xx main page on Newegg. And no bundled software to "ease the pain" and perhaps help mentally deaden the fan noise..... this product takes more power than the GTX 680. Early buyers beware!!

geok1ng - Thursday, May 30, 2013

"Having 2GB of RAM doesn't impose any real problems today, but I'm left to wonder for how much longer that's going to be true. The wildcard in all of this will be the next-generation consoles, each of which packs 8GB of RAM, which is quite a lot of RAM for video operations even after everything else is accounted for. With most PC games being ports of console games, there's a decent risk of 2GB cards being undersized when used with high resolutions and the highest quality art assets."

Last week a noob posted something like that in the 780 review. It was decimated by a slew of tech geeks' comments afterward. I am surprised to see the same kind of reasoning in a text written by an AT expert.

All AT reviewers by now know that the next consoles will be using an APU from AMD with graphics muscle (almost) comparable to a 6670 (5670 in the PS4's case, thanks to GDDR5). So what Mr. Ryan Smith is stating is that an "8GB" 6670 can perform better than a 2GB 770 in video operations?

I am well aware that Mr Ryan Smith is over-qualified to help AT readers revisit this old legend of graphics memory:

How little is too little?

And please let's not start flaming about memory usage: most modern OSs and gaming engines use available RAM dynamically, so seeing a game use 90%+ of available graphics memory does not imply at all that the game would run faster if we doubled the graphics memory. The opposite is often true.

As soon as 4GB versions of the 770 launch, AT should pit them against the 2GB 770 and the 3GB 7970. Or we could go back a few months and re-read the tests done when the 4GB versions of the 680 came out: only at triple-screen resolutions and insane levels of AA would we see any theoretical advantage of 3-4GB over 2GB, which is largely impractical since most games can't run at those resolutions and AA levels on a single card anyway.

I think NVIDIA did it right (again): 2GB is enough for today, and we won't see next-gen consoles running triple-screen resolutions at 16xAA+. 2GB means a lower BoM, which is good for profit and price competition, and less energy consumption, which is good for card temps and max OC results.

Enkur - Thursday, May 30, 2013

I can't believe AT is mixing up the unified graphics and system memory on consoles with the dedicated RAM of a graphics card. Doesn't make sense.

Egg - Thursday, May 30, 2013

PS4 has 8GB of GDDR5 and a GPU somewhat close to a 7850. I don't know where you got your facts from.

geok1ng - Thursday, May 30, 2013

Just to start the flame war: the next consoles will not run on monolithic GPUs, but on twin Jaguar cores. So when you see those 768/1152 GPU core numbers, remember these are "CrossFired" cores. And in both consoles the GPU is running at a mere 800MHz, hence the comparison with the 5670/6670, 480-shader cards at 800MHz. It is widely accepted that console games are developed for the lowest common denominator, in this case the Xbox One's DDR3 memory. Even if we make the huge assumption that dual Jaguar cores running in tandem can perform similarly to a 7850 (1024 cores at 860MHz) in a PS4 (a huge leap of faith, looking back at how badly AMD fared in previous CrossFire attempts with integrated GPUs like these Jaguar cores), the question turns out to be the same:

Does an 8GB 7850 give us better graphical results than a 2GB 770 in any gaming application in the foreseeable future?

Don't 4K on me please: both consoles will be using HDMI, not DisplayPort, and no, they won't be able to drive games across 3 screens. This "next-gen consoles will have more video RAM than high-end GPUs in PCs, so their games will be better" idea is reminiscent of the old "1GB DDR2 cards are better than 256MB DDR3 cards for future games" scam.

Ryan Smith - Thursday, May 30, 2013

We're aware of the difference. A good chunk of that unified memory is going to be consumed by the OS, the application, and other things that typically reside on the CPU in a PC. But we're still expecting games to be able to load 3GB+ in assets, which would be a problem for 2GB cards.

iEATu - Thursday, May 30, 2013

Why are you guys using FXAA in benchmarks as high-end as these? Especially for games like BF3, where you have FPS over 100. 4x AA for 1080p and 2x for 1440p. No question those look better than FXAA...

Ryan Smith - Thursday, May 30, 2013

In BF3 we're testing both FXAA and MSAA. Otherwise most of our other tests are MSAA, except for Crysis 3 which is FXAA only for performance reasons.