Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

BioShock: Infinite

BioShock Infinite is Irrational Games’ latest entry in the BioShock franchise. Though it’s based on Unreal Engine 3 – making it our obligatory UE3 game – Irrational has added a number of effects that make the game rather GPU-intensive on its highest settings. As an added bonus it includes a built-in benchmark composed of several scenes, a rarity for UE3 games, so we can easily get a good representation of what BioShock’s performance is like.

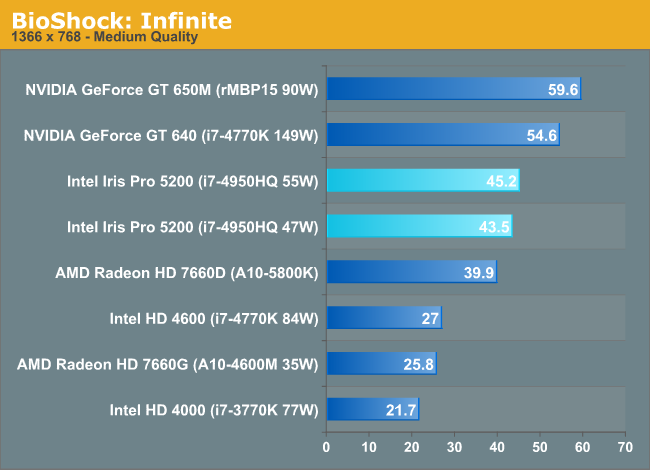

Both the 650M and desktop GT 640 are able to outperform Iris Pro here. Compared to the 55W configuration, the 650M is 32% faster. There's not a huge difference in performance between the GT 640 and 650M, indicating that the performance advantage here isn't due to memory bandwidth but something fundamental to the GPU architecture.

In the grand scheme of things, Iris Pro does extremely well. There isn't an integrated GPU that can touch it. Only the 100W desktop Trinity approaches Iris Pro performance but at more than 2x the TDP.

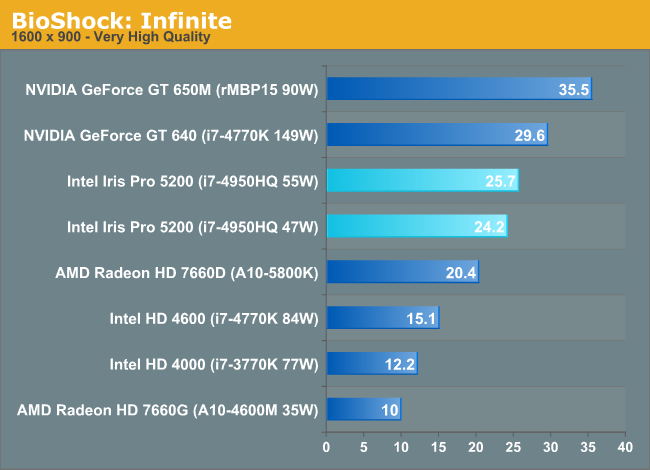

The standings don't really change at the higher resolution/quality settings, but we do see some of the benefits of Crystalwell appear. A 9% advantage over the 100W desktop Trinity part grows to 18% as memory bandwidth demands increase. Compared to the desktop HD 4000 we're seeing more than 2x the performance, which means in mobile that number will likely grow even further. The mobile Trinity comparison is a shutout as well.

177 Comments


kyuu - Saturday, June 1, 2013 - link

It's probably habit coming from eluding censoring.

maba - Saturday, June 1, 2013 - link

To be fair, there is only one data point (GFXBenchmark 2.7 T-Rex HD - 4X MSAA) where the 47W cTDP configuration is more than 40% slower than the tested GT 650M (rMBP15 90W).

Actually we have the following [min, max, avg, median] for 47W (55W):

games: 61%, 106%, 78%, 75% (62%, 112%, 82%, 76%)

synth.: 55%, 122%, 95%, 94% (59%, 131%, 102%, 100%)

compute: 85%, 514%, 205%, 153% (86%, 522%, 210%, 159%)

overall: 55%, 514%, 101%, 85% (59%, 522%, 106%, 92%)

So typically around 75% for games with a considerably lower TDP - not that bad.

I do not know whether Intel claimed equal or better performance given a specific TDP or not. With the given 47W (55W) compared to a 650M it would indeed be a false claim.

But my point is that, with at least ~60% of the performance and typically ~75%, it is admittedly much closer than you stated.
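
The figures in this thread mix two conventions: the review says the 650M is 32% faster than the 55W Iris Pro configuration, while the numbers above express Iris Pro as a percentage of the 650M's performance. A minimal Python sketch of how the two relate, and of a [min, max, avg, median] summary, might look like this. The function names and the sample list are illustrative assumptions, not anything taken from the review's data set.

```python
from statistics import mean, median


def faster_to_relative(pct_faster):
    """If B is `pct_faster` percent faster than A, return A as a percent of B."""
    return 100.0 / (1.0 + pct_faster / 100.0)


def relative_to_slower(pct_relative):
    """If A runs at `pct_relative` percent of B, return how many percent slower A is."""
    return 100.0 - pct_relative


def summarize(relative_scores):
    """Return (min, max, avg, median) for a list of relative scores in percent."""
    return (
        min(relative_scores),
        max(relative_scores),
        mean(relative_scores),
        median(relative_scores),
    )


# "650M is 32% faster" expressed as Iris Pro's share of 650M performance.
print(round(faster_to_relative(32), 1))  # 75.8

# "at least ~60% performance" expressed as "percent slower".
print(relative_to_slower(60.0))  # 40.0

# Hypothetical per-game relative scores, made up purely to show the call.
sample = [61.0, 75.0, 106.0]
print(summarize(sample))
```

Under this reading, "32% faster" corresponds to roughly 76% of the 650M's performance, which is in line with the 55W games median quoted above.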

whyso - Saturday, June 1, 2013 - link

Note your average 650m is clocked lower than the 650m reviewed here.

lmcd - Saturday, June 1, 2013 - link

If I recall correctly, the rMBP 650m was clocked as high as or slightly higher than the 660m (which was really confusing at the time).

JarredWalton - Sunday, June 2, 2013 - link

Correct. GT 650M by default is usually 835MHz + Boost, with 4GHz RAM. The GTX 660M is 875MHz + Boost with 4GHz RAM. So the rMBP15 is a best-case for GT 650M. However, it's not usually a ton faster than the regular GT 650M -- benchmarks for the UX51VZ are available here: http://www.anandtech.com/bench/Product/814

tipoo - Sunday, June 2, 2013 - link

I think any extra power just went to the rMBP scaling operations.

DickGumshoe - Sunday, June 2, 2013 - link

Do you know if the scaling algorithms are handled by the CPU or the GPU on the rMBP?

The big thing I am wondering is that if Apple releases a higher-end model with the MQ CPU's, would the HD 4600 be enough to eliminate the UI lag currently present on the rMBP's HD 4000?

If it's done on the GPU, then having the HQ CPU's might actually get *better* UI performance than the MQ CPU's for the rMBP.

lmcd - Sunday, June 2, 2013 - link

No, because these benchmarks would change the default resolution, which as I understand is something the panel would compensate for?

Wait, aren't these typically done while the laptop screen is off and an external display is used?

whyso - Sunday, June 2, 2013 - link

You got this wrong. 650m is 735/1000 + boost to 850/1000. 660m is 835/1250 boost to 950/1250.

jasonelmore - Sunday, June 2, 2013 - link

The worst mistake Intel made was that demo with DIRT when it was side by side with a 650m laptop. That set people's expectations, and now it falls short in the reviews and people are dogging it. If they had just kept quiet, people would be praising them up and down right now.