Choosing a Gaming CPU at 1440p: Adding in Haswell

by Ian Cutress on June 4, 2013 10:00 AM EST

DiRT 3

DiRT 3 is a rallying video game and the third in the Dirt series of the Colin McRae Rally series, developed and published by Codemasters. DiRT 3 also falls under the list of ‘games with a handy benchmark mode’. In previous testing, DiRT 3 has always seemed to love cores, memory, GPUs, PCIe lane bandwidth, everything. The small issue with DiRT 3 is that, depending on the benchmark mode tested, the benchmark launcher is not indicative of gameplay per se, citing numbers higher than actually observed. The benchmark mode also includes an element of uncertainty, because it actually drives a race rather than playing back a predetermined sequence of events as in Metro 2033. This in essence should make the benchmark more variable, but we take repeated runs in order to smooth this out. Using the benchmark mode, DiRT 3 is run at 1440p with Ultra graphical settings. Results are reported as the average frame rate across four runs.
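As a minimal sketch of how a four-run average like the one described above could be computed, the snippet below averages per-run frame rates and reports the run-to-run spread. The function name `summarize_runs` and the sample values are assumptions for illustration only; they are not the article's tooling or measured data.

```python
from statistics import mean, stdev


def summarize_runs(fps_runs):
    """Return (average, sample standard deviation) of per-run average FPS."""
    if len(fps_runs) < 2:
        raise ValueError("need at least two runs to estimate spread")
    return mean(fps_runs), stdev(fps_runs)


# Hypothetical per-run averages, NOT measured results from this article.
example_runs = [81.0, 83.0, 82.0, 82.0]
average, spread = summarize_runs(example_runs)
print(f"average: {average:.1f} FPS, run-to-run spread: {spread:.2f} FPS")
```

Reporting the spread next to the average gives a rough sense of how much of a 1 or 2 FPS gap between processors could simply be run-to-run variation.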

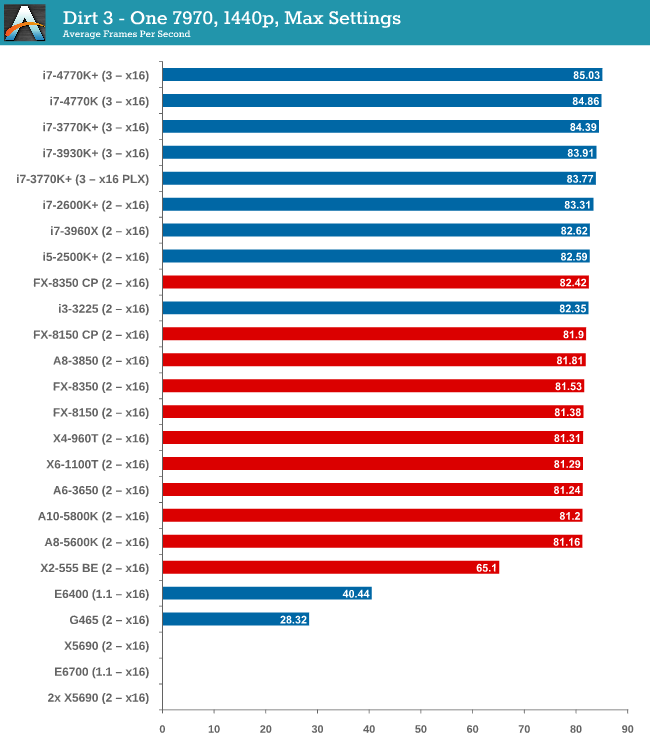

One 7970

While the testing shows a pretty dynamic split between Intel and AMD at around the 82 FPS mark, all processors are roughly +/- 1 or 2 FPS around this mark, meaning that even an A8-5600K will feel like the i7-3770K. The 4770K has a small but ultimately unnoticeable advantage in gameplay.

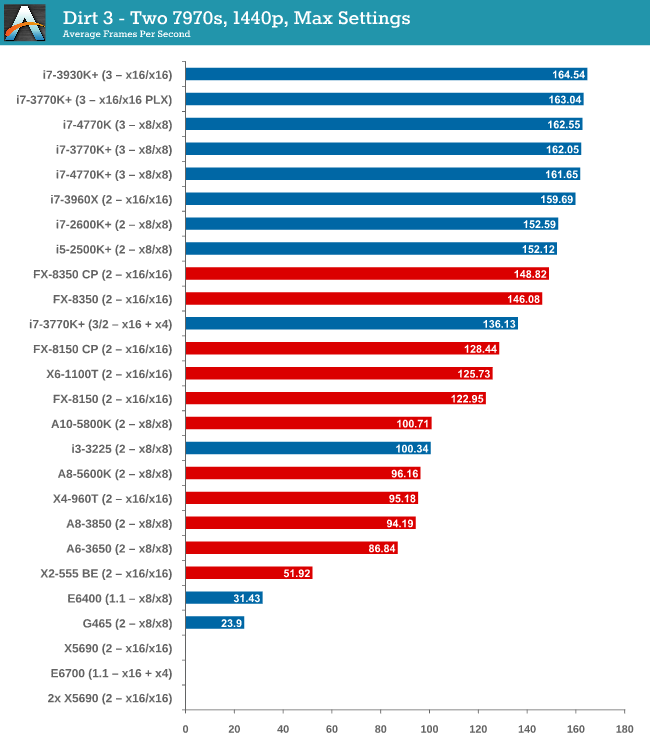

Two 7970s

When reaching two GPUs, the Intel/AMD split is getting larger. The FX-8350 puts up a good fight against the i5-2500K and i7-2600K, but the top i7-3770K offers almost 20 FPS more and 40 more than either the X6-1100T or FX-8150.

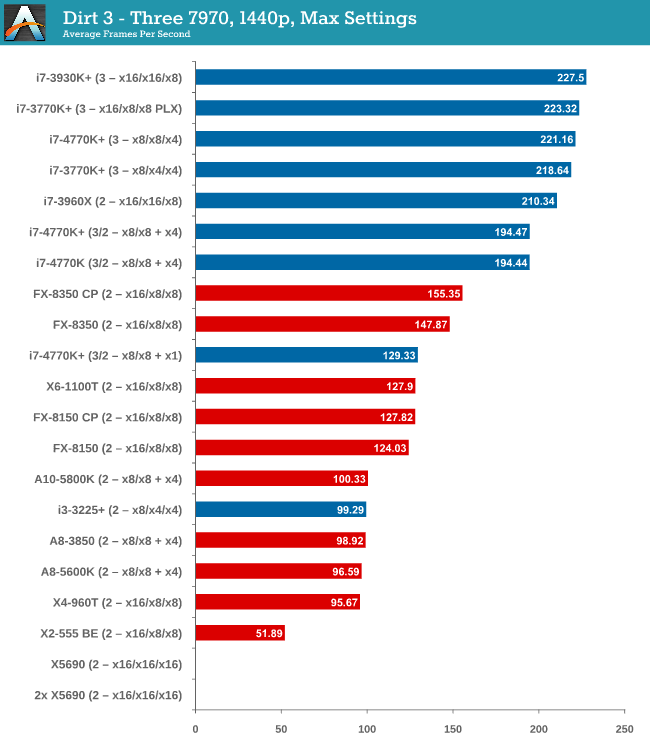

Three 7970s

Moving up to three GPUs and DiRT 3 is jumping on the PCIe bandwagon, enjoying bandwidth and cores as much as possible. Despite this, the gap to the best AMD processor is growing – almost 70 FPS between the FX-8350 and the i7-3770K. The 4770K is slightly ahead of the 3770K at x8/x4/x4, suggesting a small IPC difference.

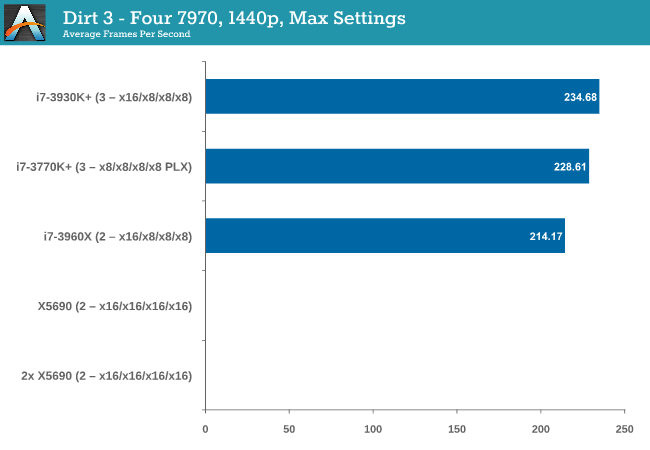

Four 7970s

At four GPUs, bandwidth wins out, and the PLX effect on the UP7 seems to cause a small dip compared to the native lane allocation on the RIVE (there could also be some influence due to 6 cores over 4).

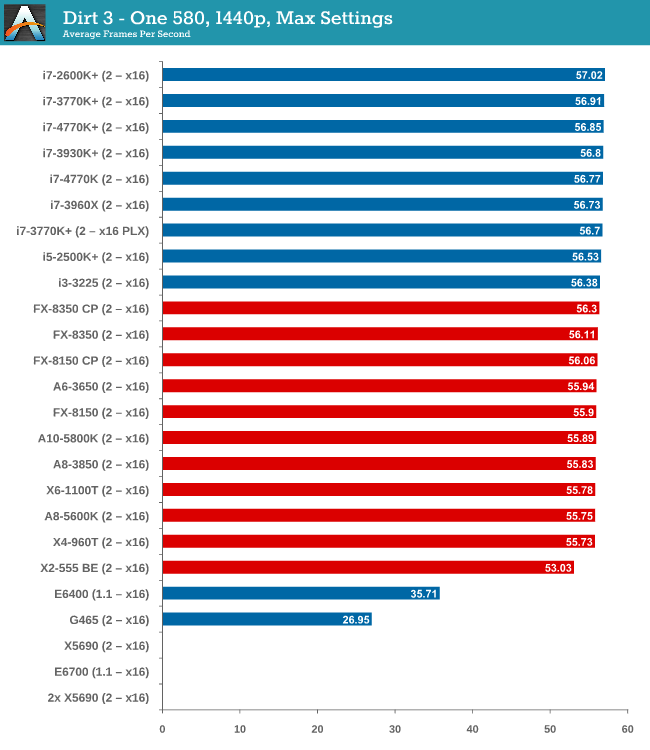

One 580

Similar to the one 7970 setup, using one GTX 580 has a split between AMD and Intel that is quite noticeable. Despite the split, all the CPUs perform within 1.3 FPS, meaning no big difference.

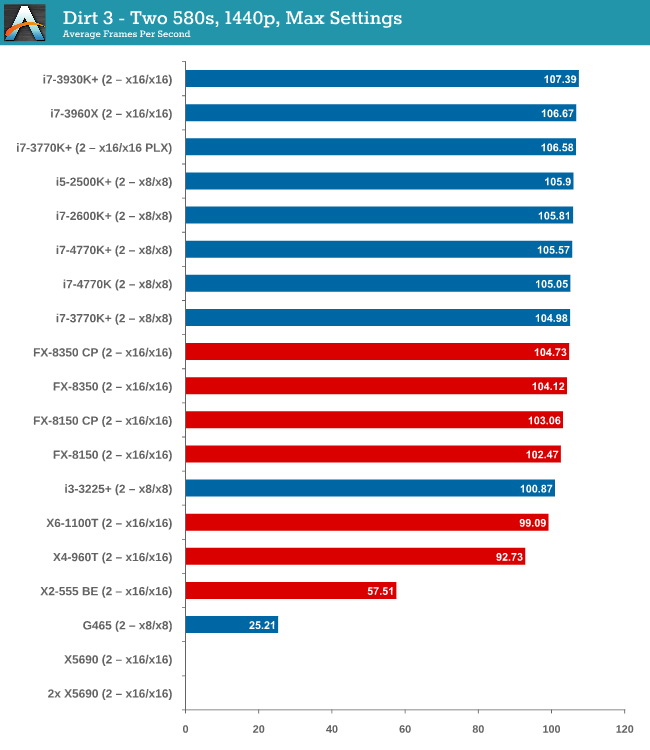

Two 580s

Moving to dual GTX 580s, and while the split gets bigger, processors like the i3-3225 are starting to lag behind. The difference between the best AMD and best Intel processor is only 2 FPS though, nothing to write home about.

DiRT 3 conclusion

Much like Metro 2033, DiRT 3 has a GPU barrier and until you hit that mark, the choice of CPU makes no real difference at all. In this case, at two-way 7970s, choosing a quad core Intel processor does the business over the FX-8350 by a noticeable gap that continues to grow as more GPUs are added (assuming you want more than 120 FPS).
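The "GPU barrier" idea can be illustrated with a deliberately simplified bottleneck model, in which the delivered frame rate is the lower of a CPU-side ceiling and a GPU-side ceiling. This is only a sketch under stated assumptions: the function `delivered_fps`, the CPU ceilings, and the perfect multi-GPU scaling are all hypothetical, and the model ignores PCIe bandwidth and real scaling losses. Only the single-GPU figure of roughly 82 FPS comes from the results above.

```python
def delivered_fps(cpu_limit_fps, gpu_limit_fps):
    """Idealized model: the slower of the two stages sets the frame rate."""
    return min(cpu_limit_fps, gpu_limit_fps)


SINGLE_GPU_LIMIT = 82.0  # roughly the single-7970 mark reported above

# Hypothetical CPU-side ceilings, chosen only for illustration.
cpu_limits = {"cpu_a": 160.0, "cpu_b": 120.0}

for gpu_count in (1, 2, 3):
    # Assumes perfect multi-GPU scaling, which real setups do not reach.
    gpu_limit = SINGLE_GPU_LIMIT * gpu_count
    results = {
        name: delivered_fps(limit, gpu_limit)
        for name, limit in cpu_limits.items()
    }
    print(gpu_count, results)
```

With one GPU both hypothetical CPUs land on the same GPU-bound figure; once the GPU ceiling rises past the CPU ceilings, the processors separate, which matches the qualitative pattern described in the conclusion.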

116 Comments

ninjaquick - Tuesday, June 4, 2013 - link

"If you were buying new, the obvious answer would be looking at an i5-3570K on Ivy Bridge rather than the 2500K"

Ian basically wanted to get a relatively broad test suite, at as many performance points as possible. Haswell, however, is really quite a bit quicker. More than anything, this article is an introduction to how they are going to be testing moving forward, as well as a list of recommendations for different budgets.

dsumanik - Tuesday, June 4, 2013 - link

2 year old mid range tech is competitive with, and cheaper than, haswell.

Hence anandtech's recommendation.

The best thing about haswell is the motherboards, which are damn nice.

TheJian - Wednesday, June 5, 2013 - link

This is incorrect. It is only competitive when you TAP out the gpu by forcing them into situations they can't handle. If you drop the res to 1080p suddenly the CPU is VERY important and they part like the red sea.

This is another attempt at covering for AMD and trying to help them sell products (you can judge whether it's intentional or not on your own). When no single card can handle the resolutions being forced on them (1440p) you end up with ALL cpu's looking like they're fine. This is just a case of every cpu saying hurry up mr. vid card I'm waiting (or we're all waiting). Lower the res to where they can handle it and cpus start to show their colors. If this article was written with 1080p being the focus (as even his own survey shows 96% of us use it OR lower, and adding in 1920x1200 you end up with 98.75%!!) you would see how badly AMD is doing vs Intel since the video cards would NOT be brick walled screaming under the load.

http://www.tomshardware.com/reviews/neverwinter-pe...

An example of what happens when you put the vid card at 1080p where cpu's can show their colors.

"At this point, it's pretty clear that Neverwinter needs a pretty quick processor if you want the performance of a reasonably-fast graphics card to shine through. At 1920x1080, it doesn't matter if you have a Radeon HD 7790, GeForce GTX 650 Ti, Radeon HD 7970, or GeForce GTX 680 if you're only using a mid-range Core i5 processor. All of those cards are limited by our CPU, even though it offers four cores and a pretty quick clock rate."

It's not just Civ5. I could point out how inaccurate the suggestions in this 1440p article are all day. Just start looking up cpu articles on other web sites and check the 1080p data. Most cpu articles show using a top card (7970 or 680 etc) so you get to see the TRUTH. The CPU is important in almost EVERY game, unless you shoot the resolution up so high they all score the same because your video card can't handle the job (thus making ANY cpu spend all day waiting on the vid card).

I challenge anandtech to rerun the same suite, same chips at 1080p and prove I'm wrong. I DARE YOU.

http://www.hardocp.com/article/2012/10/22/amd_fx83...

More evidence of what happens when gpu is NOT tapped out. Look at how Intel is KILLING AMD at hardocp. Even if you say "but eventually I'll up my res and spend $600 on a 1440p monitor", you have to understand that as you get better gpu's that can handle that res, you'll hate the fact you chose AMD for a cpu as it will AGAIN become the limiter.

"Lost Planet is still used here at HardOCP because it is one of the few gaming engines that will reach fully into our 8C/8T processors. Here we see Vishera pull off its biggest victory yet when compared to Zambezi, but still lagging behind 4 less cores from Intel."

"Again we see a new twist on the engine above, and it too will reach into our 8C/8T. While not as pronounced as Lost Planet, Lost Planet 2 engine shows off the Vishera processors advancements, yet it still trails Intel's technology by a wide margin."

"The STALKER engine shows almost as big an increase as we saw above, yet with Intel still dealing a crippling gaming blow to AMD's newest architecture."

Yeah, a 65% faster Intel is a LOT right? Understand if you go AMD now, once you buy a card (20nm maxwell etc? 14nm eventually in 3yrs?) you will CRY over your cpu limiting you at even 1440p. Note the video card Hardocp use for testing was ONLY a GTX 470. That's old junk, he could now run with 7970ghz or 780gtx and up the res to 1080p and show the same results. AMD would get a shellacking.

http://techreport.com/review/24879/intel-core-i7-4...

Here, techreport did it in 1080p. 20% lower for A10-5800 than 4770K in crysis 3. It gets worse with farcry 3 etc. In Far Cry 3 i4770k scored 96fps at 1080p, yet AMD's A10-5800 scored a measly 68. OUCH. So roughly 30% slower in this game. HOLY COW man check out Tomb Raider...Intel 126fps! AMD A10-5800 68fps! Does Anandtech still say this is a good cpu to go with? At the res 98.75% of us run at YOU ARE WRONG. That's almost 2x faster in tomb raider at 1080p! Metro last light Intel 93fps, vs, AMD A10-5800 51fps again almost TWO TIMES faster!

From Ian's conclusion page here:

"If I were gaming today on a single GPU, the A8-5600K (or non-K equivalent) would strike me as a price competitive choice for frame rates, as long as you are not a big Civilization V player and do not mind the single threaded performance. The A8-5600K scores within a percentage point or two across the board in single GPU frame rates with both a HD7970 and a GTX580, as well as feel the same in the OS as an equivalent Intel CPU."

He's not even talking the A10-5800 that got SMASHED at techreport as shown in the link. Note they only used a RAdeon 7950. A 7970ghz or GTX 780 would be even less taxed and show even larger CPU separations. I hope people are getting the point here. Anandtech is MISLEADING you at best by showing a resolution higher than 98.75% of us are using and tapping out the single gpu. I could post a dozen other cpu reviews showing the same results. Don't walk, RUN away from AMD if you are a gamer today (or tomorrow). Haswell boards are supposed to take a broadwell chip also, even more ammo to run from AMD.

Ian is recommending a cpu that is lower than the one I show getting KILLED here. Games might not even be playable as the A10-5800 was hitting 50fps AVG on some things. What would you hit with a lower cpu avg, and worse what would the mins be? Unplayable? Get a better CPU. You've been warned.

haukionkannel - Wednesday, June 5, 2013 - link

Hmm... If the game is fast enough at 1440p then it is fast enough for 1080p... We are talking about serious players. Who on earth would buy 7970 or 580 for gaming in 1080p? That is serious overkill...

We all know that Intel will run faster if we use 720p, just because it is faster CPU than AMD, nothing new in there since the era of Pentium4 and Athlon2. What this article tells is that if you want to play games with some serious GPU power, you can save money by using AMD CPU when using single or even in some cases double GPU. If you go beyond that the CPU becomes a bottleneck.

TheJian - Thursday, June 6, 2013 - link

The killing happened at 1080p also which is what techreport showed. Since 98.75% of us run 1920x1200 or below, I'm thinking that is pretty important data.

The second you put in more than one card the cpus separate even at 1440p. Meaning, next years SINGLE card or the one after will AGAIN separate the cpus as that single card will be able to wait on the CPU as the bottleneck goes back to cpu. Putting that aside, hardocp showed even the mighty titan at $1000 had stuff turned off at 1080p. So you are incorrect. Is it serious overkill if hardocp is turning stuff off for a smooth game experience? 7970/GTX680 had to turn off even more stuff in the 780GTX review (titan and 780gtx mostly had the same stuff on, but the 7970ghz and 680gtx they compared to turned off quite a bit to remain above 30fps).

I'm a serious player, and I can't run 1920x1200 with my radeon 5850 which was $300 when I bought it. I'm hoping maxwell will get me 30fps with EVERYTHING on in a few games at 1440p (I'm planning on buying a 27 or 30in at some point) and for the ones that don't I'll play them on my Dell 24 as I do now. But the current cards (without spending a grand and even that don't work) in single format still have trouble with 1080p as hardocp etc has shown. I want my next card to at least play EVERY game at 1920x1200 on my dell, and hope for a good portion on the next monitor purchase. With the 5850 I run a lot of games on my 22in at 1680x1050 to enable everything. I don't like turning stuff down or off, as that isn't how the dev intended me to play their game right?

Apparently you think all 7970 and 580 owners are all running 1440p and up? Ridiculous. The steam survey says you are woefully incorrect. 98.75% of us are all running 1920x1200 or below and a TON of us have 7970, 680, 580 etc etc (not me yet) and enjoying the fact that they NEVER turn stuff down (well, apparently you still do on some games...see the point?). Only DUAL card owners are running above as the steam survey shows, go there and check out the breakdown. You can see the population (even as small as that 1% is...LOL) has TWO cards running above 1920x1200. So you are categorically incorrect or steam's users change all their resolutions down just to fake a survey?...ROFL. Ok. Whatever. You expect me to believe they get done with the survey and jack it up for UNDER 30fps gameplay? Ok...

Even here, at 1440p for instance, metro only ran 34fps (and last light is more taxing than 2033). How low do you think the minimums are when you're only doing 34fps AVERAGE? UNPLAYABLE. I can pull anandtech quotes that say you'd really like 60fps to NEVER dip below 30fps minimum. In that they are actually correct and other sites agree...

http://www.guru3d.com/articles_pages/palit_geforce...

"Frames per second Gameplay

<30 FPS very limited gameplay

30-40 FPS average yet very playable

40-60 FPS good gameplay

>60 FPS best possible gameplay

So if a graphics card barely manages less than 30 FPS, then the game is not very playable, we want to avoid that at all cost.

With 30 FPS up-to roughly 40 FPS you'll be very able to play the game with perhaps a tiny stutter at certain graphically intensive parts. Overall a very enjoyable experience. Match the best possible resolution to this result and you'll have the best possible rendering quality versus resolution, hey you want both of them to be as high as possible.

When a graphics card is doing 60 FPS on average or higher then you can rest assured that the game will likely play extremely smoothly at every point in the game, turn on every possible in-game IQ setting."

So as the single 7970 (assuming ghz edition here in this 1440p article) can barely hit 34fps, by guru3d's definition it's going to STUTTER. Right? You can check max/avg/min everywhere and you'll see there is a HUGE diff between min and avg. Thus the 60fps point is assumed good to ensure above 30 min and no stutter (I'd argue higher depending on the game, multiplayer etc as you can tank when tons of crap is going on). Guru3d puts that in EVERY gpu article.

The single 580 in this article can't even hit 24fps and that is AN AVERAGE. So unplayable totally, thus making the whole point moot right? You're going to drop to 1080p just to hit 30fps and you say this and a 7970 is overkill for 1080p? Even this FLAWED article here proves you WRONG.

Sleeping dogs right here in this review on a SINGLE 7970 UNDER 30fps AVERAGE. What planet are you playing on? If you are hitting 28.2fps avg your gameplay SUCKS!

http://www.tomshardware.com/reviews/geforce-gtx-77...

Bioshock infinite 31fps on GTX 580...Umm, mins are going to stutter at 1440p right? Even the 680 only gets 37fps...You'll need to turn both down for anything fluid maxed out. Same res for Crysis 3 shows even the Titan only hitting 32fps and with DETAILS DOWN. So mins will stutter right? MSAA is low, you have two more levels above this which would put it into single digits for mins a lot. Even this low on msaa the 580 never gets above 22fps avg...LOL. You want to rethink your comments yet? The 580's avg was 18 FPS! 1440p is NOT for a SINGLE 580...LOL. Only 25fps for 7970...LOL. NOT PLAYABLE on your 7970ghz either. Clearly this game is 1080p huh? Look how much time in the graph 7970ghz spends BELOW 20fps at 1440p. Serious gamers play at 1080p unless they have two cards. FAR CRY 3, same story. 7970ghz is 29fps...ROFL. The 580 scores 21fps...You go right ahead and try to play these games at 1440p. Welcome to the stutterfest my friend.

"GeForce GTX 770 and Radeon HD 7970 GHz Edition nearly track together, dipping into the mid-20 FPS range."

Yeah, Far Cry will be good at 20fps.

Hitman Absolution has to disable MSAA totally...LOL. Even then 580 only hits 40fps avg.

Note the tomb raider comment at 1440p:

"The GeForce GTX 770 bests Nvidia’s GeForce GTX 680, but neither card is really fluid enough to call the Ultimate Quality preset smooth."

So 36fps and 39fps avg for those two is NOT SMOOTH. 770 dropped to 20fps for a while.

A titan isn't even serious overkill for 1080p. It's just good enough and for hardocp a game or two had to be turned down even on it at 1080p! The data doesn't lie. Single cards are for 1080p. How many games do I have to show you dipping into the 20's before you get it? Batman AC barely hits 30's avg on 7970ghz with 8xmsaa and you have to turn physx off (not nv physx, physx period). Check tom's charts for gpus.

In hardocp's review of 770gtx 1080p was barely playable with 680gtx and everything on. Upping to 2560x1600 caused nearly every card to need tessellation down and physx off in Metro Last Light. 31fps min on 770 with SSAA OFF and Physx OFF!

http://hardocp.com/article/2013/05/30/msi_geforce_...

You must like turning stuff off. I don't think you're a serious gamer until you turn everything on and expect it to run there. NO SACRIFICING quality! Are we done yet? If this article really tells you to pair expensive gpus ($400-1000) with a cheapo $115 AMD cpu then they are clearly misleading you. It looks like that is exactly what they got you to believe. Never mind your double gpu comment paired with the same crap cpu adding to the ridiculous claims here already.

Calinou__ - Friday, June 7, 2013 - link

"Serious gamers play at 1080p unless they have two cards."

Fun fact 2: there are properly coded games out there which will run fine in 2560×1440 on mid-high end cards.

TheJian - Sunday, June 9, 2013 - link

No argument there. My point wasn't that you can't find a game to run at 1440p ok. I could cite many, though I think most wouldn't be maxed out doing it on mid cards and surely aren't what most consider graphically intensive. But there are FAR too many that don't run there without turning lots of stuff off as many sites I linked to show. Also 98.75% of us don't even have monitors that go above 1920x1200 (I can't see many running NON-Native but it's possible), so not quite sure fun fact2 matters much so my statement is still correct for nearly 99% of the world right? :) There are probably a few people in here who care what the top speed of a Veyron SS is (maybe they can afford one, 258mph I think), but for the vast majority of us, we couldn't care less about it since we'll never buy a car over 100K. I probably could have said 50K and still be right for most.

Your statement kind of implies coders are lazy :) Not going to argue that point either...LOL. Not all coders are lazy mind you...But with so much power on pc's it's probably hard not to be lazy occasionally, not to mention they have the ability to patch them to death afterwards. I can handle 1-2 patches but if you need 5 just to get it to run properly after launch on most hardware (unless adding features/play balancing etc like a skyrim type game etc) maybe you should have kept it in house for another month or two of QA :) Just a thought...

Sabresiberian - Wednesday, June 5, 2013 - link

So, you want an article specifically written for gaming at 2560x1440 to do the testing at 1920x1080?

Your rant starts from that low point and goes downhill from there.

TheJian - Thursday, June 6, 2013 - link

You completely missed the point. The article is testing for .87% of the market. That is less than one percent. This article will be nice to reprint in 2-3yrs...Then it may actually be relevant. THAT is the point. I think it's a pretty HIGH point, not low, and that fact that you choose to ignore the data in my post doesn't make it any less valid or real. Nice try though :) Come back when you have some data actually making a relevant point please.

Calinou__ - Friday, June 7, 2013 - link

So, all the websites that are about Linux should shut down because Linux has ~1% market share? Nope.