Choosing a Gaming CPU at 1440p: Adding in Haswell

by Ian Cutress on June 4, 2013 10:00 AM EST

DiRT 3

DiRT 3 is a rally video game, the third Dirt title in the Colin McRae Rally series, developed and published by Codemasters. DiRT 3 also falls under the list of ‘games with a handy benchmark mode’. In previous testing, DiRT 3 has always seemed to love cores, memory, GPUs, PCIe lane bandwidth, everything. The small issue with DiRT 3 is that, depending on the benchmark mode tested, the benchmark launcher is not indicative of gameplay per se, citing numbers higher than actually observed. The benchmark mode also includes an element of uncertainty, by actually driving a race rather than playing back a predetermined sequence of events as in Metro 2033. This should in essence make the benchmark more variable, but we take repeated runs in order to smooth this out.

Using the benchmark mode, DiRT 3 is run at 1440p with Ultra graphical settings. Results are reported as the average frame rate across four runs.
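As a rough illustration of how repeated runs smooth out a variable benchmark, the sketch below averages per-run frame rates and reports the run-to-run spread. The function name `summarize_runs` and the input values are hypothetical placeholders, not figures from this testing.

```python
from statistics import mean, stdev


def summarize_runs(fps_runs):
    """Return (average, sample standard deviation) of per-run average FPS values."""
    if len(fps_runs) < 2:
        raise ValueError("need at least two runs to estimate run-to-run variation")
    return mean(fps_runs), stdev(fps_runs)


# Placeholder values for illustration only; these are NOT measurements from the article.
example_runs = [81.0, 83.0, 82.0, 82.0]

average_fps, spread = summarize_runs(example_runs)
print(f"average: {average_fps:.1f} FPS, run-to-run std dev: {spread:.2f} FPS")
```

A small spread relative to the average suggests the four-run mean is a reasonable single figure to report; a large spread would argue for more runs.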

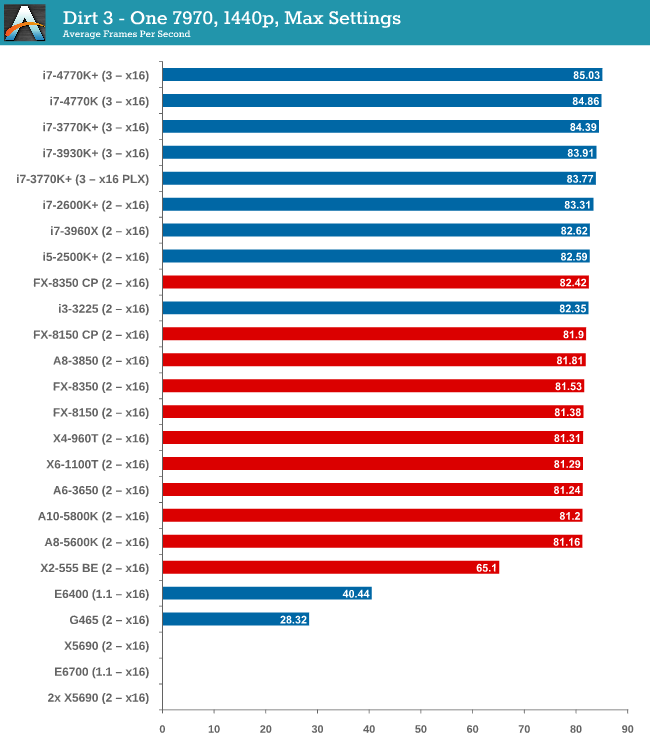

One 7970

While the testing shows a fairly clear split between Intel and AMD at around the 82 FPS mark, all processors are within roughly 1 or 2 FPS of this mark, meaning that even an A8-5600K will feel like the i7-3770K. The 4770K has a small but ultimately unnoticeable advantage in gameplay.

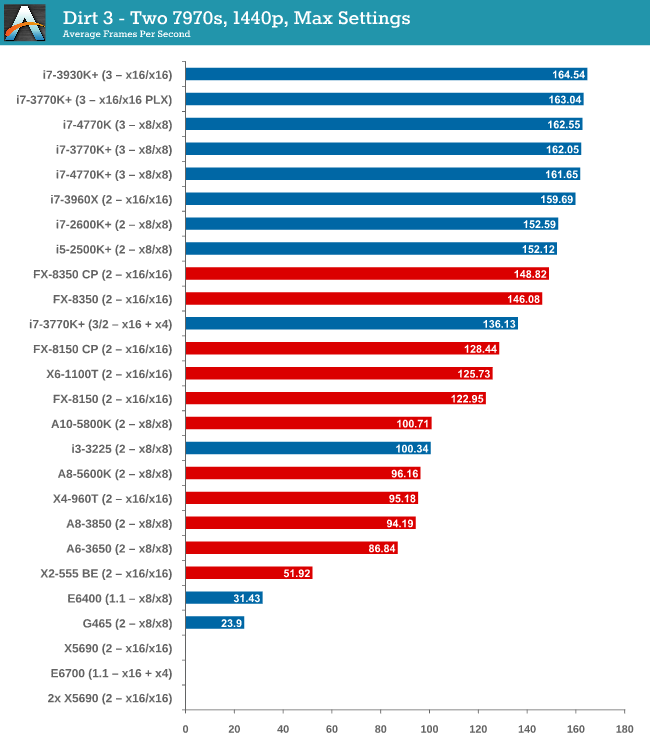

Two 7970s

With two GPUs, the Intel/AMD split grows larger. The FX-8350 puts up a good fight against the i5-2500K and i7-2600K, but the top i7-3770K offers almost 20 FPS more, and 40 more than either the X6-1100T or FX-8150.

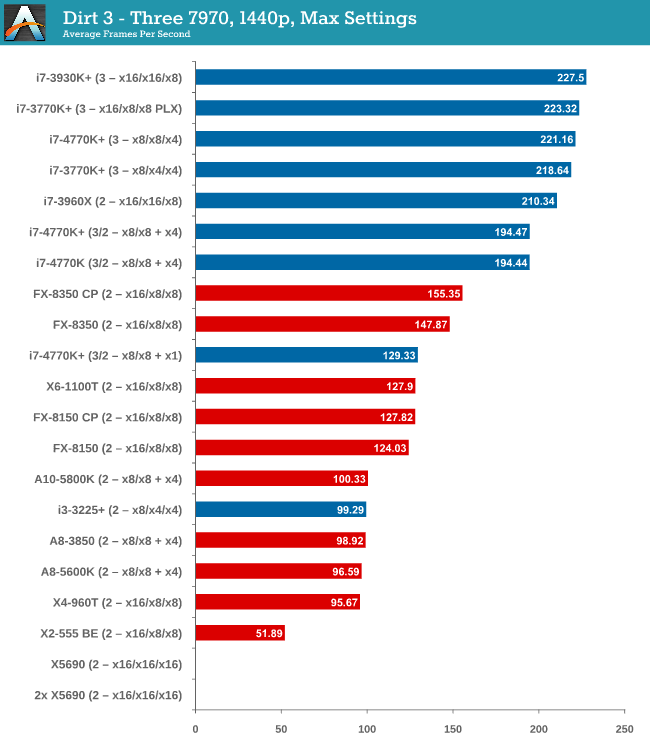

Three 7970s

Moving up to three GPUs, DiRT 3 is jumping on the PCIe bandwagon, enjoying bandwidth and cores as much as possible. Despite this, the gap to the best AMD processor is growing – almost 70 FPS between the FX-8350 and the i7-3770K. The 4770K is slightly ahead of the 3770K at x8/x4/x4, suggesting a small IPC difference.

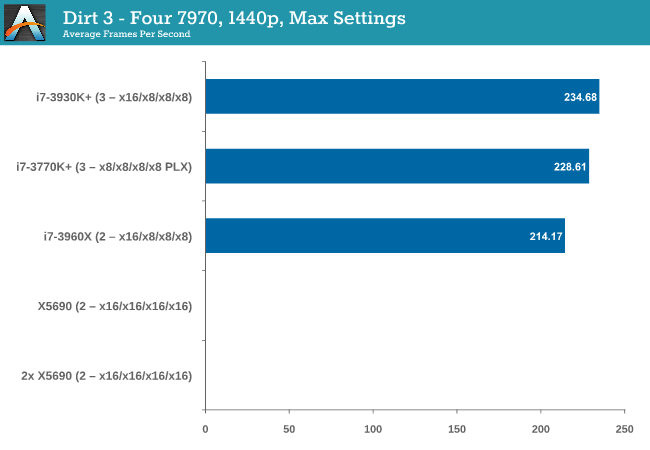

Four 7970s

At four GPUs, bandwidth wins out, and the PLX effect on the UP7 seems to cause a small dip compared to the native lane allocation on the RIVE (there could also be some influence due to 6 cores over 4).

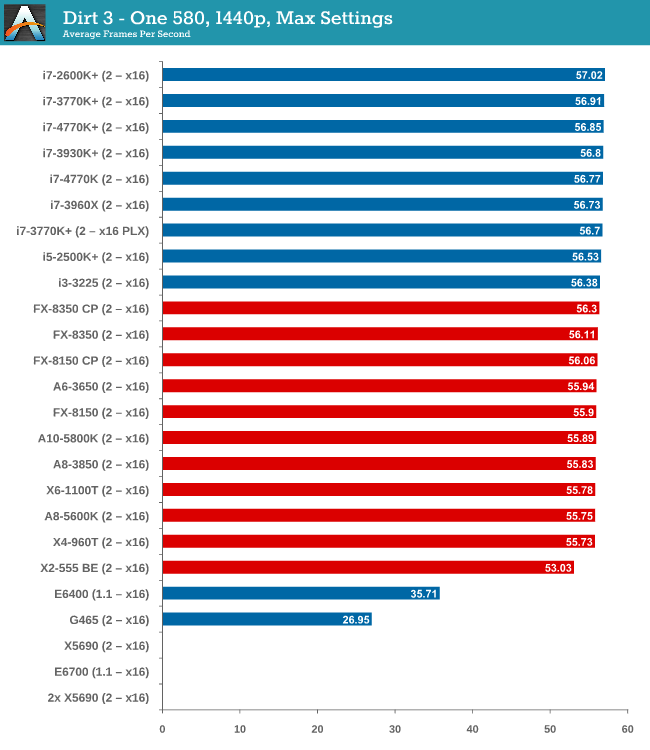

One 580

Similar to the one 7970 setup, using one GTX 580 has a split between AMD and Intel that is quite noticeable. Despite the split, all the CPUs perform within 1.3 FPS, meaning no big difference.

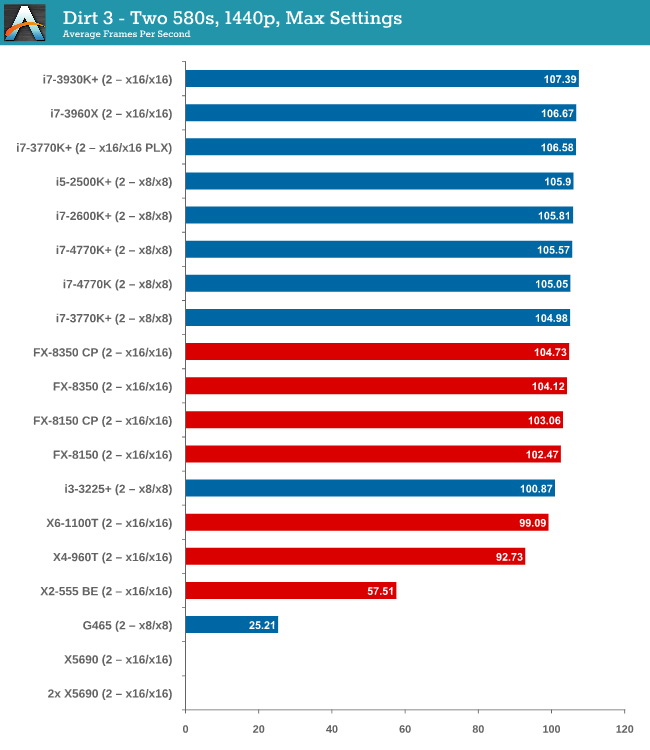

Two 580s

Moving to dual GTX 580s, the split gets bigger, and processors like the i3-3225 are starting to lag behind. The difference between the best AMD and best Intel processor is only 2 FPS though, nothing to write home about.

DiRT 3 conclusion

Much like Metro 2033, DiRT 3 has a GPU barrier, and until you hit that mark the choice of CPU makes no real difference at all. In this case, at two-way 7970s, choosing a quad core Intel processor does the business over the FX-8350 by a noticeable gap that continues to grow as more GPUs are added (assuming you want more than 120 FPS).
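The 'GPU barrier' idea can be sketched with a deliberately simplified model in which the delivered frame rate is capped by whichever of the CPU or the GPU setup is slower. This is an assumption made for illustration, not how the results above were produced, and the function `effective_fps` and every number below are hypothetical.

```python
def effective_fps(cpu_limit_fps, gpu_limit_fps):
    """Toy bottleneck model: the slower component sets the delivered frame rate."""
    return min(cpu_limit_fps, gpu_limit_fps)


# Hypothetical CPU-side ceilings (FPS each CPU could feed if the GPU were infinitely fast).
cpu_limits = {"faster CPU": 150.0, "slower CPU": 110.0}

# Hypothetical GPU-side ceilings for a light and a heavy GPU configuration.
gpu_limits = {"one GPU": 80.0, "several GPUs": 160.0}

for gpu_name, gpu_limit in gpu_limits.items():
    for cpu_name, cpu_limit in cpu_limits.items():
        fps = effective_fps(cpu_limit, gpu_limit)
        print(f"{gpu_name:>12} + {cpu_name}: {fps:.0f} FPS")
```

In this toy model both CPUs land on the same figure while the single GPU is the limit, and only separate once the GPU ceiling rises above the slower CPU's ceiling, which mirrors the pattern described in the conclusion.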

116 Comments

UltraTech79 - Saturday, June 22, 2013 - link

This article is irrelevant to 95+% of people. What was the point in this? I don't give a rat's ass what will be in 3-5 years, I want to know performance numbers for using a setup with realistic numbers of TODAY. Useless.

core4kansan - Monday, July 15, 2013 - link

While I appreciate the time and effort you put into this, I have to agree with those who call out 1440p's irrelevance for your readers. I think if we tested at sane resolutions, we'd find that a low-end cpu, like a G2120, coupled with a mid-to-high range GPU, would yield VERY playable framerates at 1080p. I'd love to see some of the older Core 2 Duos up against the likes of a G2120, i3-3220/5, on up to i5-3570 and higher with a high end GPU and 1080p res. That would be very useful info for your readers and could save many of them lots of money. In fact, wouldn't you rather put your hard-earned money into a better GPU if you knew that you could save $200 on the cpu? I'm hinting that I believe (without seeing actual numbers) that a G2120+high end GPU would perform virtually identically in gaming to a $300+ cpu with the same graphics accelerator, at 1080p. Sure, you'd see greater variation between the cpus at 1080p, but when we're testing cpus, don't we WANT that?

lackynamber - Friday, August 9, 2013 - link

Some people dont really know what they are reading...apparently!! The fact that in every single review someone says anandtech is being paid by someone is actually a good thing. I mean, a month ago a bunch of people said they are trying to sell Intel cpus, and now we have people saying the same shit about AMD.

Furthermore, the whole benchmark is based around 1440p! Calling it bullshit because it is a small niche that has such a monitor is stupid. No body has Titan either, should they not benchmark it? No one runs quad sli either and so on.

Even the guy that flamed Ian admitted that the benchmark bottlenecks the CPU so it makes AMD look better. WELL THATS THE FUCKING POINT. Amd LOOKS better cause it fucking is, taking into consideration that, as long as you have a single card, YOU DONT FUCKING NEED ANY BETTER CPU. That what the review pointed.

All the benchmark and Ian's recommendation was, that, for 1440p and one video card, since the gpu is already bottlenecking the cpu, get the cheapest you can, which in this case is amd's A8. I mean, why in fucking hell would I want an i7 on 10 ghz if it is left idle scratching balls cause of the GPU? I
