Choosing a Gaming CPU at 1440p: Adding in Haswell

by Ian Cutress on June 4, 2013 10:00 AM EST

Metro 2033

Our first analysis is with the perennial reviewers’ favorite, Metro 2033. It appears in a lot of reviews for a couple of reasons: it has a benchmark GUI that anyone can use, and it is often very GPU limited, at least in single GPU mode. Metro 2033 is a strenuous DX11 benchmark that can challenge most systems that try to run it at any high-end settings. The game was developed by 4A Games and released in March 2010; we use the built-in DirectX 11 Frontline benchmark to test the hardware at 1440p with full graphical settings. Results are given as the average frame rate from a second batch of 4 runs, as Metro has a tendency to inflate the scores for the first batch by up to 5%.
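As a rough illustration of the averaging described above, the sketch below discards the first batch of runs and averages the second. The function name `second_batch_average`, the `batch_size` parameter, and the sample frame rates are assumptions made for illustration; they are not the tooling or the data used in this review.

```python
from statistics import mean


def second_batch_average(fps_runs, batch_size=4):
    """Average FPS over the second batch of runs, ignoring the first batch.

    fps_runs is a flat list of per-run average frame rates in the order
    they were recorded: first batch, then second batch.
    """
    if len(fps_runs) < 2 * batch_size:
        raise ValueError("need at least two full batches of runs")
    # The first batch tends to score higher, so only the second batch counts.
    second_batch = fps_runs[batch_size:2 * batch_size]
    return mean(second_batch)


# Hypothetical frame rates for illustration only, not measured results.
example_runs = [31.5, 31.4, 31.6, 31.5, 30.0, 30.1, 29.9, 30.0]
print(round(second_batch_average(example_runs), 2))
```

Reporting only the second batch trades a few extra minutes of run time for a number that is not skewed by the inflated first batch.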

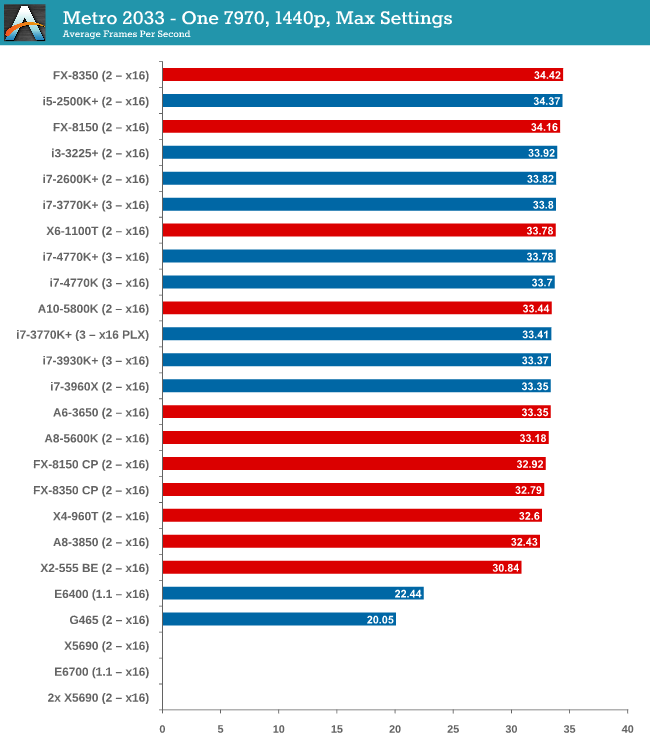

One 7970

With one 7970 at 1440p, every processor is in full x16 allocation and there seems to be no split between any of the processors with 4 threads or above. Processors with two threads fall behind, but not by much, as the X2-555 BE still gets 30 FPS. There seems to be no split between PCIe 3.0 and PCIe 2.0, or with respect to memory.

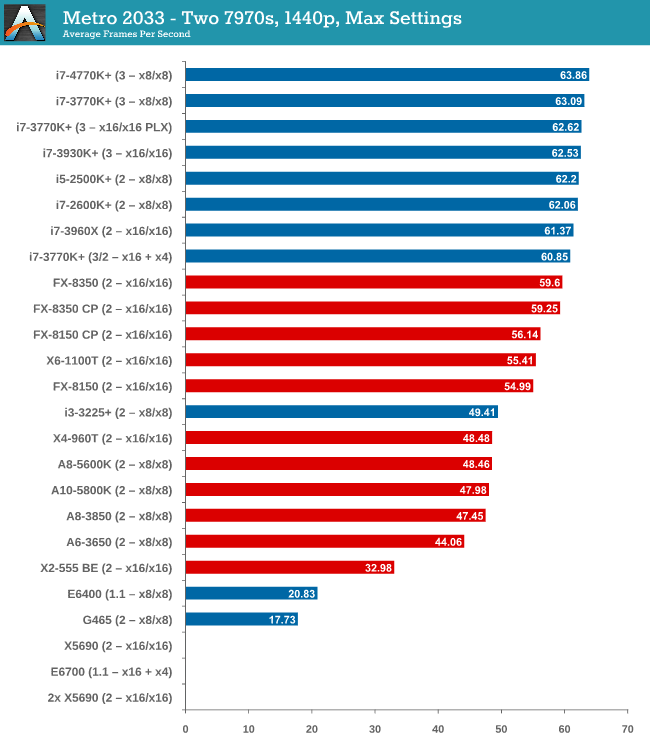

Two 7970s

When we start using two GPUs in the setup, the Intel processors have an advantage, with those running PCIe 2.0 a few FPS ahead of the FX-8350. Both core count and single-thread speed seem to have some effect (i3-3225 is quite low, FX-8350 > X6-1100T).

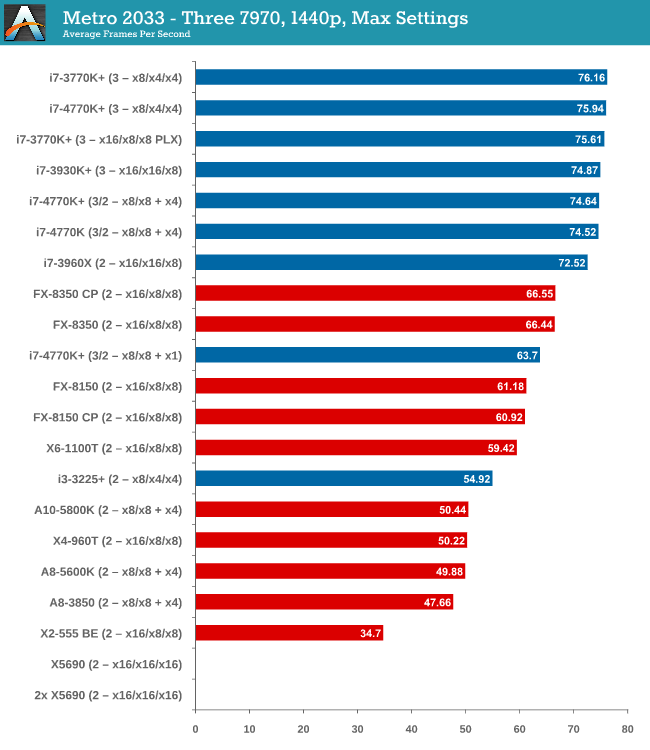

Three 7970s

The results again favour Intel processors and PCIe 3.0: the i7-3770K in an x8/x4/x4 configuration surpasses the FX-8350 in an x16/x16/x8 configuration by almost 10 frames per second. There seems to be no advantage to having a Sandy Bridge-E setup over an Ivy Bridge one so far.

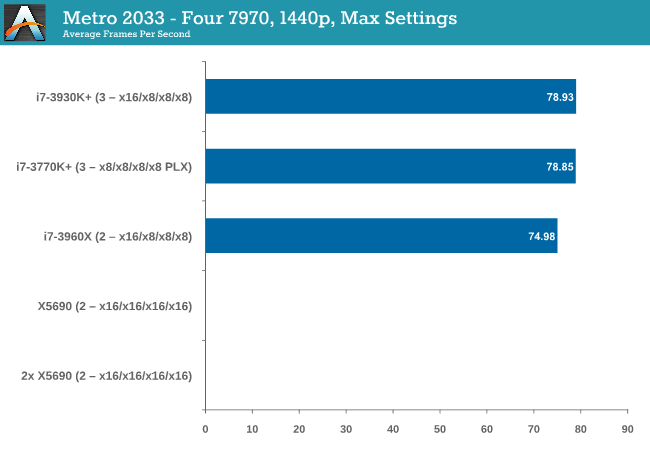

Four 7970s

While we have limited results, PCIe 3.0 wins against PCIe 2.0 by 5%.
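For readers who want to reproduce this kind of comparison from their own numbers, a percentage lead can be computed as below. This is a minimal sketch: the function name `percent_gain` and the example frame rates are hypothetical and are not taken from the charts.

```python
def percent_gain(new_fps, baseline_fps):
    """Return how far new_fps is ahead of baseline_fps, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    # Multiply before dividing to keep round numbers exact in floating point.
    return (new_fps - baseline_fps) * 100.0 / baseline_fps


# Hypothetical frame rates for illustration only, not measured results.
pcie3_fps = 105.0
pcie2_fps = 100.0
print(percent_gain(pcie3_fps, pcie2_fps))
```

Note that the result depends on which configuration is treated as the baseline; here the PCIe 2.0 figure is assumed to be the reference.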

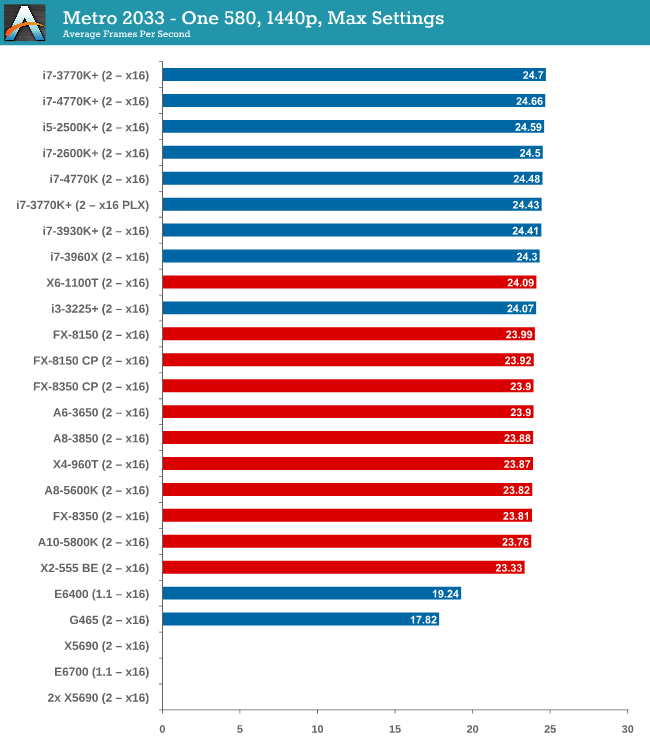

One 580

From dual core AMD all the way up to the latest Ivy Bridge, results for a single GTX 580 are all roughly the same, indicating a GPU throughput limited scenario.

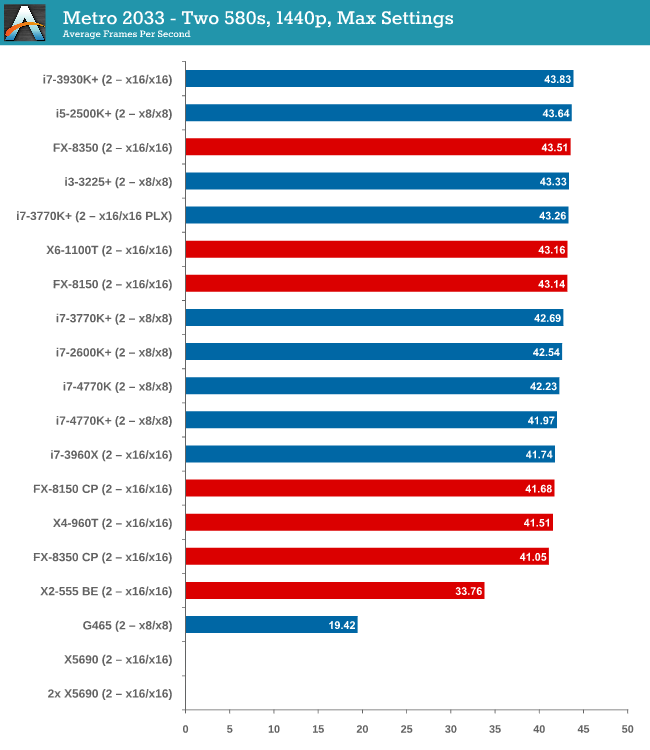

Two 580s

Similar to one GTX 580, we are still GPU limited here.

Metro 2033 conclusion

A few points are readily apparent from the Metro 2033 tests: the more powerful the GPU setup, the more important the CPU choice is, and CPU choice does not matter until you get to at least three 7970s. In that case, you want a PCIe 3.0 setup more than anything else.

116 Comments


UltraTech79 - Saturday, June 22, 2013

This article is irrelevant to 95+% of people. What was the point in this? I don't give a rat's ass what will be in 3-5 years, I want to know performance numbers for using a setup with realistic numbers of TODAY. Useless.

core4kansan - Monday, July 15, 2013

While I appreciate the time and effort you put into this, I have to agree with those who call out 1440p's irrelevance for your readers. I think if we tested at sane resolutions, we'd find that a low-end cpu, like a G2120, coupled with a mid-to-high range GPU, would yield VERY playable framerates at 1080p. I'd love to see some of the older Core 2 Duos up against the likes of a G2120, i3-3220/5, on up to i5-3570 and higher with a high end GPU and 1080p res. That would be very useful info for your readers and could save many of them lots of money. In fact, wouldn't you rather put your hard-earned money into a better GPU if you knew that you could save $200 on the cpu? I'm hinting that I believe (without seeing actual numbers) that a G2120+high end GPU would perform virtually identically in gaming to a $300+ cpu with the same graphics accelerator, at 1080p. Sure, you'd see greater variation between the cpus at 1080p, but when we're testing cpus, don't we WANT that?

lackynamber - Friday, August 9, 2013

Some people don't really know what they are reading... apparently!! The fact that in every single review someone says anandtech is being paid by someone is actually a good thing. I mean, a month ago a bunch of people said they are trying to sell Intel cpus, and now we have people saying the same shit about AMD.

Furthermore, the whole benchmark is based around 1440p! Calling it bullshit because it is a small niche that has such a monitor is stupid. Nobody has Titan either, should they not benchmark it? No one runs quad sli either and so on.

Even the guy that flamed Ian admitted that the benchmark bottlenecks the CPU so it makes AMD look better. WELL THAT'S THE FUCKING POINT. AMD LOOKS better cause it fucking is, taking into consideration that, as long as you have a single card, YOU DON'T FUCKING NEED ANY BETTER CPU. That's what the review pointed out.

All the benchmark and Ian's recommendation said was that, for 1440p and one video card, since the gpu is already bottlenecking the cpu, get the cheapest you can, which in this case is AMD's A8. I mean, why in fucking hell would I want an i7 on 10 ghz if it is left idle scratching balls cause of the GPU? I
