The AMD Kabini Review: A4-5000 APU Tested

by Jarred Walton on May 23, 2013 12:00 AM EST

Kabini vs. Clover Trail & ARM

Kabini is a difficult SoC to evaluate, primarily because of the nature of the test system we're using to evaluate it today. Although AMD's Jaguar cores are power efficient enough to end up in tablets, the 15W A4-5000 we're looking at today is a bit too much for something the size of an iPad. Temash, Kabini's even lower power counterpart, will change that but we don't have Temash with us today. Rather than wait for AMD to get us a Temash based tablet, I wanted to get an idea of how Jaguar stacks up to some of the modern low-power x86 and ARM competitors.

To start, let's characterize Jaguar in terms of its performance compared to Bobcat as well as Intel's current 32nm in-order Saltwell Atom core. As a reference, I've thrown in a 17W dual-core Ivy Bridge. The benchmarks we're looking at are PCMark 7 (only run on those systems with SSDs), Cinebench (FP workload) and 7-Zip (integer workload). With the exception of Kabini, all of these parts are dual-core. The Atom and Core i5 systems are dual-core but have Hyper-Threading enabled so they present themselves to the OS as 4-thread machines.

| CPU Performance | PCMark 7 | Cinebench 11.5 (Single Threaded) | Cinebench 11.5 (Multithreaded) | 7-Zip Benchmark (Single Threaded) | 7-Zip Benchmark (Multithreaded) |
|---|---|---|---|---|---|
| AMD A4-5000 (1.5GHz Jaguar x 4) | 2425 | 0.39 | 1.5 | 1323 | 4509 |
| AMD E-350 (1.6GHz Bobcat x 2) | 1986 | 0.32 | 0.61 | 1281 | 2522 |
| Intel Atom Z2760 (1.8GHz Saltwell x 2) | - | 0.17 | 0.52 | 754 | 2304 |
| Intel Core i5-3317U (1.7GHz IVB x 2) | 4318 | 1.07 | 2.39 | 2816 | 6598 |

Compared to a similarly clocked dual-core Bobcat part, Kabini shows a healthy improvement in PCMark 7 performance. Despite the clock speed disadvantage, the A4-5000 manages 22% better performance than AMD's E-350. The impressive gains continue as we look at single-threaded Cinebench performance. Again, a 22% increase compared to Bobcat. Multithreaded Cinebench performance scales by more than 2x thanks to the core count doubling and increased multi-core efficiency. The current generation Atom comparison here is just laughable—Jaguar offers more than twice the performance of Clover Trail in single threaded Cinebench.
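The percentages quoted above can be reproduced directly from the table. The following is a minimal sketch; the dictionary layout and the helper names `ratio` and `percent_gain` are illustrative choices, not part of any benchmark tool.

```python
# Scores copied from the CPU performance table above.
# Key names ("pcmark7", "cb_st", "cb_mt") are hypothetical labels for this sketch.
scores = {
    "A4-5000": {"pcmark7": 2425, "cb_st": 0.39, "cb_mt": 1.50},
    "E-350": {"pcmark7": 1986, "cb_st": 0.32, "cb_mt": 0.61},
    "Atom Z2760": {"cb_st": 0.17, "cb_mt": 0.52},  # no PCMark 7 result
}


def ratio(test, chip, baseline):
    """Return chip's score divided by baseline's score for one test."""
    return scores[chip][test] / scores[baseline][test]


def percent_gain(test, chip, baseline):
    """Return how much higher chip scores than baseline, in percent."""
    return (ratio(test, chip, baseline) - 1.0) * 100.0


print(f"PCMark 7 vs E-350:        +{percent_gain('pcmark7', 'A4-5000', 'E-350'):.1f}%")
print(f"Cinebench ST vs E-350:    +{percent_gain('cb_st', 'A4-5000', 'E-350'):.1f}%")
print(f"Cinebench MT vs E-350:    {ratio('cb_mt', 'A4-5000', 'E-350'):.2f}x")
print(f"Cinebench ST vs Z2760:    {ratio('cb_st', 'A4-5000', 'Atom Z2760'):.2f}x")
```

Both the PCMark 7 and single-threaded Cinebench gains round to 22%, and the multithreaded Cinebench ratio and the Atom comparison both come out above 2x.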

The single threaded 7-Zip benchmark shows only mild gains if we don't take into account clock speed differences. If you normalize for CPU frequency, Jaguar is likely around 9% faster than Bobcat here. Multithreaded gains are quite good as well. Once again, Atom is nowhere near AMD's new A4.
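As a rough illustration of what normalizing for CPU frequency means, the sketch below divides each single-threaded 7-Zip score by the chip's clock speed. It assumes performance scales linearly with frequency, which is a simplification, and the helper name `per_ghz` is hypothetical. Under that assumption the gain lands at roughly 10%, in the same neighborhood as the estimate above.

```python
def per_ghz(score, clock_ghz):
    """Score per GHz, assuming performance scales linearly with clock speed."""
    return score / clock_ghz


# Single-threaded 7-Zip scores and clocks from the table above.
jaguar = per_ghz(1323, 1.5)  # A4-5000 at 1.5GHz
bobcat = per_ghz(1281, 1.6)  # E-350 at 1.6GHz

raw_gain = (1323 / 1281 - 1) * 100            # gain without clock adjustment
normalized_gain = (jaguar / bobcat - 1) * 100  # gain per clock

print(f"Raw gain:        {raw_gain:.1f}%")
print(f"Per-clock gain:  {normalized_gain:.1f}%")
```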

The Ivy Bridge comparison is really just for reference. In all of the lightly threaded cases, a 1.7GHz Ivy Bridge delivers over 2x the performance of the A4-5000. The gap narrows for heavily threaded workloads but obviously any bigger core going into a more expensive system will yield appreciably better results.
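To see how the gap narrows, one can compare the Ivy Bridge advantage in the single-threaded and multithreaded runs of the same benchmark. This is a small sketch using the table's numbers; the `results` structure and the `ivb_advantage` helper are made up for illustration.

```python
# (single-threaded, multithreaded) scores from the table above.
results = {
    "Cinebench 11.5": {"a4": (0.39, 1.50), "ivb": (1.07, 2.39)},
    "7-Zip": {"a4": (1323, 4509), "ivb": (2816, 6598)},
}


def ivb_advantage(bench):
    """Return (single-threaded, multithreaded) ratios of Core i5-3317U over A4-5000."""
    a4_st, a4_mt = results[bench]["a4"]
    ivb_st, ivb_mt = results[bench]["ivb"]
    return ivb_st / a4_st, ivb_mt / a4_mt


for bench in results:
    st, mt = ivb_advantage(bench)
    print(f"{bench}: IVB is {st:.2f}x faster single-threaded, {mt:.2f}x multithreaded")
```

In both benchmarks the single-threaded ratio is above 2x, while the multithreaded ratio is noticeably smaller because the A4-5000 has four physical cores to the Core i5's two.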

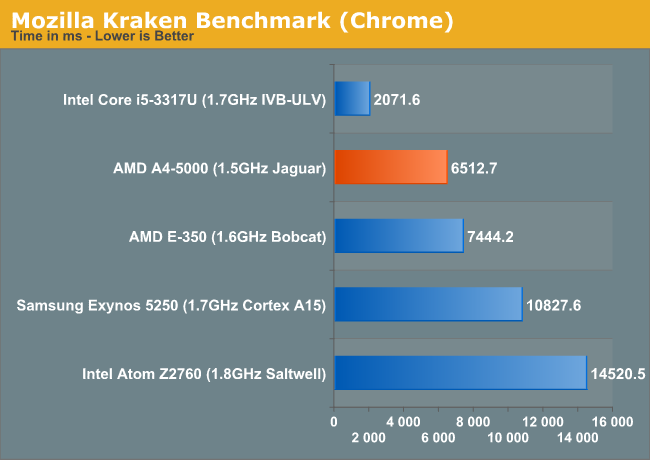

For the next test I expanded our comparison to include an ARM based SoC: the dual-core Cortex A15 powered Samsung Exynos 5250 courtesy of Google's Nexus 10. These cross platform benchmarks are all browser based and run in Google Chrome:

Here we see a 14% improvement over Bobcat, likely closer to 20% if we normalized clock speed between the parts—tracking perfectly with AMD's promised IPC gains for Jaguar. The A4-5000 completes the Kraken benchmark in less than half the time. The 1.7GHz Ivy Bridge part is obviously quicker, but what's interesting is that if we limit the IVB CPU's frequency to 800MHz Kabini is actually a near identical performer.

Jaguar seems to be around 9-20% faster than Bobcat depending on the benchmark. Multithreaded workloads are obviously much better as there are simply more cores to run on. In practice, using the Kabini test system vs. an old Brazos machine delivers a noticeable difference in user experience. Clover Trail feels anemic by comparison and even Brazos feels quite dated. Seeing as how Bobcat was already quicker than ARM's Cortex A15, it's no surprise that Jaguar is as well. The bigger problem here is that Kabini needs much lower platform power to really threaten the Cortex A15 in tablets; we'll see how Temash fares as soon as we can get our hands on a tablet.

130 Comments


HisDivineOrder - Thursday, May 23, 2013 - link

Given AMD's traditional design wins and how those systems end up, I suspect this is not going to matter much. I have more hope of Bay Trail providing a solid deal for once than I do this.

It's a shame because this really should be AMD's niche to dominate, but I doubt any OEM'll give them a serious try.

Desperad@ - Thursday, May 23, 2013 - link

On competitive positioning, is it even near IB Pentium?

brainee - Saturday, May 25, 2013 - link

I think so, yes. The IB Pentium 2117U (17W TDP) should be around 33% faster in legacy Intel-optimised CPU benchmarks, doing the math and according to, say, Techspot. I would think ULV Pentiums are more expensive for OEMs; notebooks are a different story. Not to mention Kabini should cost AMD a fraction as much to make compared with even crippled 2C Ivy Bridges, aka Celeron / Pentium. Kabini wins in games and OpenCL, and in AVX-enabled applications it should eat the Pentium alive since the latter doesn't support AVX extensions (should be mentioned at least). I'd prefer AVX extensions to Cinebench but this site seems to suggest I am a minority...

yhselp - Saturday, May 25, 2013 - link

Comparing a 3W SoC (Z2760) to a 15W SoC (A4-5000), and calling the former laughable... not really fair.

Sure, Kabini is definitely faster than the old Atom architecture and, yes, I understand this is not a definitive comparison; nevertheless - it seems misleading.

What would happen if we compare a 3W Kabini to a 15W Haswell? Laughable wouldn't even begin to describe the performance difference.

silverblue - Saturday, May 25, 2013 - link

But... an A4-5000 doesn't use anywhere near 15W, as far as I've heard. Still, let's consider the evidence - the Z2760 is a 32-bit, dual core, hyperthreaded CPU at 1.8GHz with a low powered graphics unit and 1MB of L2. The A4-5000 is a 64-bit, quad core CPU at 1.5GHz with a far stronger graphics unit and 2MB of dynamic L2. Temash would be a different proposition I expect as the A4-1200 is only clocked at 1GHz.

yhselp - Saturday, May 25, 2013 - link

Yes, absolutely, I agree - it's just that the direct comparisons and conclusions made are a bit stark.

There's always another side to an argument; in your case, I could argue that comparing the brand new Jaguar to a terribly old Atom architecture isn't the way to go. Consider the following evidence - Silvermont is 64-bit, quad-core, 2MB L2 cache, OoO, 2GHz+, 22nm, far more energy efficient, supports 1st gen Core instructions and Turbo Boost; it would decimate Jaguar.

In the article, I also discovered that the 2020M is referred to as a 1.8GHz 35W part, when it's actually 2.4GHz. Are the benchmarks done on an underclocked 2020M or was that simply a typo?

That's the kind of stuff I'm talking about, not AMD vs. Intel.

jcompagner - Sunday, May 26, 2013 - link

So this is the core that will be in the next 2 big consoles?

Am I the only one who thinks that these are quite weak, even if you have 8 of them?

That does mean that if one of those 2 consoles is the lead in development, the games will be forced to be really well multithreaded. (So I guess the next games for the PC will also be using multiple cores way more.)

Why did they go for the Jaguar core, which is really targeted at very low-end or mobile stuff?

Why didn't they just go for a Richland 8 core system with a very good GPU that, let's say, is a 100W part?

What is the guess for the TDP of the Xbox One or PS4? A console can easily take 100W, so that doesn't matter; why choose a core that is dedicated to mobile?

yhselp - Sunday, May 26, 2013 - link

Yes, the Jaguar core is 'weak', but what does 'weak' mean? That is such a vague definition. For one usage scenario Jaguar might be unacceptable, for another it might be overkill. Remember, Sony/MS are not building a contemporary PC. Jaguar might seem slow to us, and in a gaming desktop it would be, but that's not the point. Think of consoles, in this case the PS4 and the Xbox One, as non-PC devices such as tablets. Would you say the latest Samsung/Apple running on a Cortex A15 is slow? No, you would say it's super fast. Well, Jaguar is even faster. Yes, a console has to deal with different workloads than a tablet, but that's why it has very different hardware.

Why did Sony/MS choose Jaguar? Jaguar is easier to integrate, more power efficient and most importantly cheaper than Richland. It's a far simpler architecture than Richland, and probably easier to work with in a console's life. Also, it's very important to note that Sony/MS wanted an integrated solution - they weren't going to build a system with a dedicated video card like a gaming PC.

Cost, cost, cost - everything is about the cost. A console cannot be expensive (the way a gaming PC is) - it has to sell very well in order to establish an install base to sell games to. Sony/MS will probably sell their 8th gen consoles at a loss initially - AMD's Jaguar/GCN was their best/only choice. What else could they do at the same price or even at all? Silvermont isn't ready yet and NVIDIA probably wouldn't be willing to integrate a GPU of theirs the way AMD did, and both of those would be more expensive than Jaguar/GCN. Not to mention, MS has had a ton of trouble with NVIDIA in the original Xbox - they are probably not willing to go down that road again.

It's not really an 8-core solution - it's two quad-core modules and communication between the two might be problematic; so games on the new PS/Xbox would probably run on four Jaguar cores at 1.6 GHz. However, don't forget that neither of the two consoles has a ton of raw graphics power under the hood - the Xbox GPU is roughly equivalent to an HD 7770 (but with better memory bandwidth), and the PS to an HD 7850. Games would be specifically developed for this kind of hardware (unlike PC games) and would most probably be GPU limited so the Jaguar cores would really be sufficient.

I hope this answers your questions.

Kevin G - Monday, May 27, 2013 - link

A Pile Driver module is much larger than a Jaguar core. For die size concerns, going with Jaguar made sense if core counts are the same. Steam Roller cores are due out in 2014 which are expected to bring higher IPC and a slight clock speed increase compared to Pile Driver.

Power consumption is also an issue. The bulk of the power consumption from the XBox One and PS4 SoC's will come from their GPU's. Adding a high power CPU core like Pile Driver would have ballooned power consumption close to 200W which makes cooling impractical and expensive. Jaguar still adds power but it is far more manageable in comparison.

In addition, Steam Roller is tied to processes from Global Foundries (though IBM could likely manufacture them if need be). TSMC is the preferred foundry for bulk processes due to cost and a slight edge in density. Jaguar has been prepared to be manufactured at TSMC from the start. AMD could have stuck with GF but it would have had to port GCN functional units to that same process. Such efforts are currently underway for Kaveri that is looking to be a 2014 part. So for any type of 2013 launch, going that route was not an option.

aikyucenter - Sunday, June 30, 2013 - link

Great OpenCL performance ... love it ... just make it faster launch and decrease TDP too = PERFECT :D