NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GTX 780 comes into this phase of our testing with a very distinct advantage. Being based on an already exceptionally solid card in the GTX Titan, it’s guaranteed to do at least as well as Titan here. At the same time, because its practical power consumption is going to be a bit lower due to the fewer enabled SMXes and fewer RAM chips, it effectively pairs Titan’s cooler with an even lower effective TDP, which can be a silent (but deadly) combination.

GeForce GTX 780 Voltages

| GTX 780 Max Boost | GTX 780 Base | GTX 780 Idle |
|---|---|---|
| 1.1625v | 1.025v | 0.875v |

Unsurprisingly, voltages are unchanged from Titan. GK110’s max safe load voltage is 1.1625v, with 1.2v being the maximum overvoltage allowed by NVIDIA. Meanwhile idle remains at 0.875v, and as we’ll see idle power consumption is equal too.

Meanwhile we also took the liberty of capturing the average clockspeeds of the GTX 780 in all of the games in our benchmark suite. In short, although the GTX 780 has a higher base clock than Titan (863MHz versus 837MHz), the fact that it only goes one boost bin higher (1006MHz versus 992MHz) means that the GTX 780 doesn’t usually clock much higher than GTX Titan under load; for one reason or another it typically settles at the same boost bin as the GTX Titan on tests that offer consistent workloads. This means that in practice the GTX 780 is closer to a straight-up harvested GTX Titan, with no practical clockspeed differences.

| GeForce GTX Titan Average Clockspeeds | GTX 780 | GTX Titan |
|---|---|---|
| Max Boost Clock | 1006MHz | 992MHz |
| DiRT:S | 1006MHz | 992MHz |
| Shogun 2 | 966MHz | 966MHz |
| Hitman | 992MHz | 992MHz |
| Sleeping Dogs | 969MHz | 966MHz |
| Crysis | 992MHz | 992MHz |
| Far Cry 3 | 979MHz | 979MHz |
| Battlefield 3 | 992MHz | 992MHz |
| Civilization V | 1006MHz | 979MHz |
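To put a number on how close the two cards run, here is a minimal Python sketch that takes the per-game averages from the table above and computes the clockspeed gap for each title. The dictionary and function names are our own for illustration; only the MHz figures come from the table.

```python
# Average clockspeeds in MHz, transcribed from the table above: (GTX 780, GTX Titan).
avg_clocks_mhz = {
    "DiRT:S": (1006, 992),
    "Shogun 2": (966, 966),
    "Hitman": (992, 992),
    "Sleeping Dogs": (969, 966),
    "Crysis": (992, 992),
    "Far Cry 3": (979, 979),
    "Battlefield 3": (992, 992),
    "Civilization V": (1006, 979),
}


def clock_deltas(clocks):
    """Return {game: GTX 780 clock minus GTX Titan clock} in MHz."""
    return {game: gtx780 - titan for game, (gtx780, titan) in clocks.items()}


def mean_delta(clocks):
    """Average GTX 780 advantage over GTX Titan across all games, in MHz."""
    deltas = clock_deltas(clocks)
    return sum(deltas.values()) / len(deltas)


if __name__ == "__main__":
    for game, delta in clock_deltas(avg_clocks_mhz).items():
        print(f"{game:15s} {delta:+d} MHz")
    print(f"Mean difference: {mean_delta(avg_clocks_mhz):+.1f} MHz")
```

Five of the eight games show no difference at all, and the mean gap works out to 5.5MHz, which is well under one percent of either card's clockspeed.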

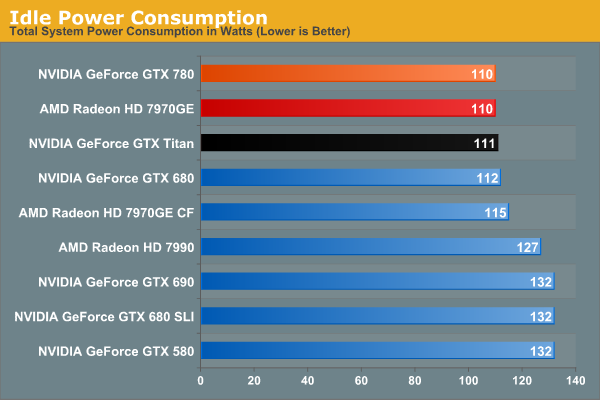

Idle power consumption is by the book. With the GTX 780 equipped, our test system sees 110W at the wall, a mere 1W difference from GTX Titan, and tied with the 7970GE. Idle power consumption of video cards is getting low enough that there’s not a great deal of difference between the latest generation cards, and what’s left is essentially lost as noise.

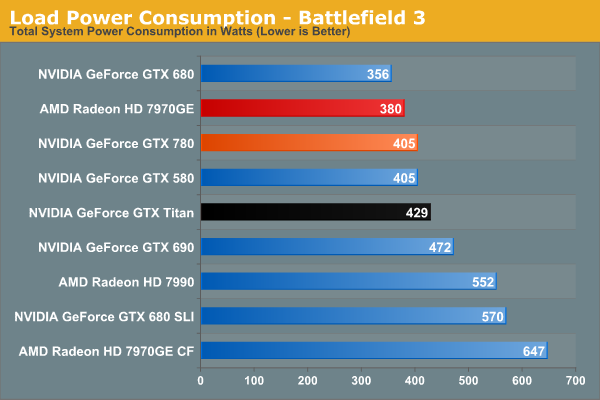

Moving on to power consumption under Battlefield 3, we get our first real confirmation of our earlier theories on power consumption. Between the slightly lower load placed on the CPU from the lower framerate, and the lower power consumption of the card itself, GTX 780 draws 24W less at the wall than GTX Titan. Interestingly, the resulting figure is exactly how much our system draws with the GTX 580 too, which accounting for lower CPU power consumption means that video card power consumption on the GTX 780 is down compared to the GTX 580. GTX 780 being a harvested part helps a bit with that, but it still means we’re looking at quite the boost in performance relative to the GTX 580 for a simultaneous decrease in video card power consumption.

Moving along, we see that power consumption at the wall is higher than both the GTX 680 and 7970GE. The former is self-explanatory: the GTX 780 features a bigger GPU and more RAM, but is made on the same 28nm process as the GTX 680. So for a tangible performance improvement within the same generation, there’s nowhere for power consumption to go but up. Meanwhile as compared to the 7970GE, we are likely seeing a combination of CPU power consumption differences and at least some difference in video card power consumption, though measuring at the wall doesn’t let us say how much of each.

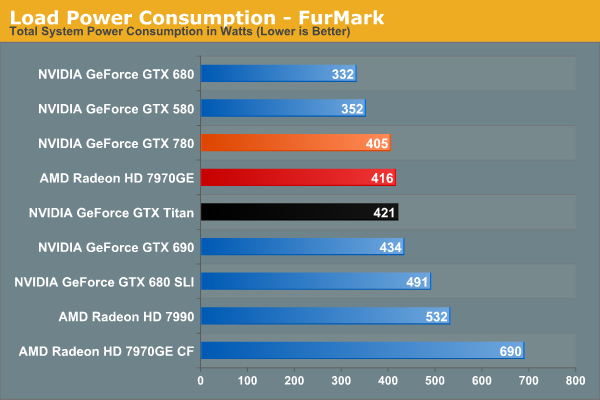

Switching to FurMark and its more pure GPU load, our results become compressed somewhat as the GTX 780 moves slightly ahead of the 7970GE. Power consumption relative to Titan is lower than what we expected it to be considering both cards are hitting their TDP limits, though compared to GTX 680 it’s roughly where it should be. At the same time this reflects a somewhat unexpected advantage for NVIDIA; despite the fact that GK110 is a bigger and logically more power hungry GPU than AMD’s Tahiti, the power consumption of the resulting cards isn’t all that different. Somehow NVIDIA has a slight efficiency advantage here.

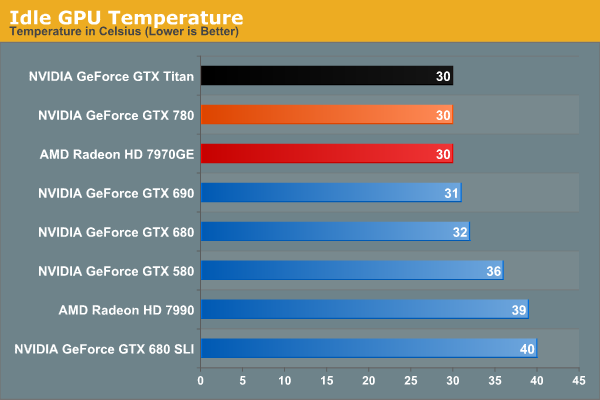

Moving on to idle temperatures, we see that GTX 780 hits the same 30C mark as GTX Titan and 7970GE.

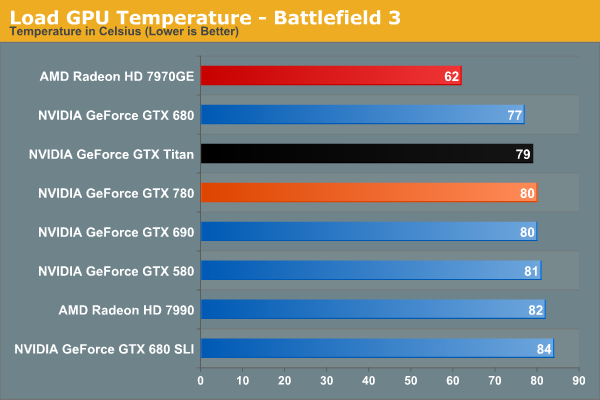

With GPU Boost 2.0, load temperatures are kept tightly in check when gaming. The GTX 780’s default throttle point is 80C, and that’s exactly what happens here, with GTX 780 bouncing around that number while shifting between its two highest boost bins. Note, however, that as with Titan this means it’s quite a bit warmer than the open air cooled 7970GE, so it will be interesting to see if semi-custom GTX 780 cards change this picture at all.
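The "bouncing" behavior is what you would expect from any controller that steps between discrete clock bins around a temperature target. The Python sketch below is a toy model of that idea only, not NVIDIA's actual GPU Boost 2.0 algorithm. The boost bins and the 80C target come from this page, while the per-bin steady-state temperatures and the simple thermal response are hypothetical values picked purely for illustration.

```python
# Boost bins observed in the clockspeed table (MHz), highest first.
BOOST_BINS_MHZ = [1006, 992, 979, 966]

# HYPOTHETICAL steady-state temperature (C) the card would settle at in each bin.
# These numbers are illustrative assumptions, not measurements.
HYPOTHETICAL_STEADY_TEMP_C = {1006: 81.0, 992: 79.0, 979: 77.0, 966: 75.0}

TARGET_C = 80.0  # default temperature target quoted in the review


def next_bin_index(temp_c, index):
    """Drop one bin when above the target, climb one bin when below it."""
    if temp_c > TARGET_C:
        return min(index + 1, len(BOOST_BINS_MHZ) - 1)
    if temp_c < TARGET_C:
        return max(index - 1, 0)
    return index


def simulate(steps, start_temp_c=70.0):
    """Return a list of (clock_mhz, temp_c) pairs, one per time step."""
    temp_c, index, history = start_temp_c, 0, []
    for _ in range(steps):
        clock = BOOST_BINS_MHZ[index]
        # Toy thermal model: move halfway toward the bin's steady-state temperature.
        temp_c += 0.5 * (HYPOTHETICAL_STEADY_TEMP_C[clock] - temp_c)
        history.append((clock, round(temp_c, 2)))
        index = next_bin_index(temp_c, index)
    return history


if __name__ == "__main__":
    for clock, temp in simulate(12):
        print(f"{clock}MHz  {temp:.2f}C")
```

With these assumed numbers the model warms up in the top bin, crosses 80C, and then alternates between the two highest bins while the temperature hovers within a fraction of a degree of the target, which is qualitatively the pattern described above.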

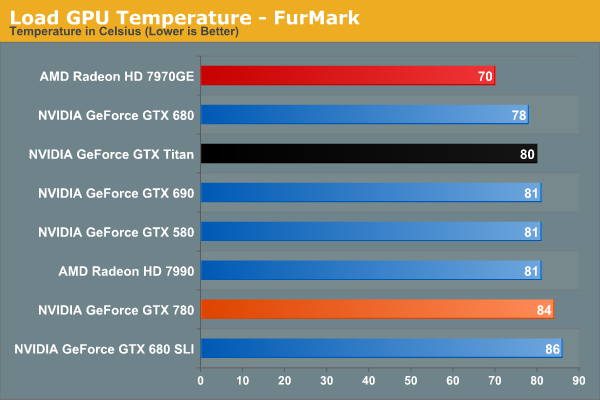

Whereas GPU Boost 2.0 keeps a lid on things when gaming, it’s apparently a bit more flexible on FurMark, likely because the video card is already heavily TDP throttled.

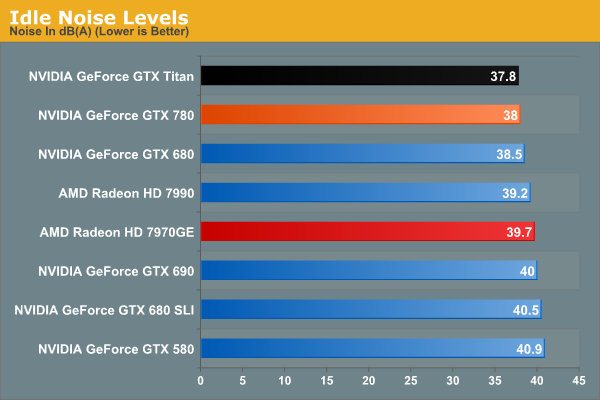

Last but not least we have our look at idle noise. At 38dB GTX 780 is essentially tied with GTX Titan, which again comes as no great surprise. At least in our testing environment one would be hard pressed to tell the difference between GTX 680, GTX 780, and GTX Titan at idle. They’re essentially as quiet as a card can get without being silent.

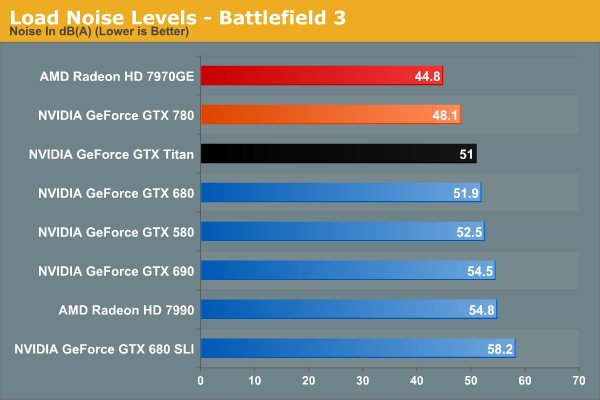

Under BF3 we see the payoff of NVIDIA’s fan modifications, along with the slightly lower effective TDP of GTX 780. Despite – or rather because – it was built on the same platform as GTX Titan, there’s nowhere for idle noise to go down. As a result we have a 250W blower based card hitting 48.1dB under load, which is simply unheard of. At nearly a 4dB improvement over both GTX 680 and GTX 690 it’s a small but significant improvement over NVIDIA’s previous generation cards, and even Titan has the right to be embarrassed. Silent it is not, but this is incredibly impressive for a blower. The only way to beat something like this is with an open air card, as evidenced by the 7970GE, though that does come with the usual tradeoffs for using such a cooler.
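Because decibels are logarithmic, a gap of a few dB is larger than it looks. As a rough illustration, the Python sketch below converts a level difference into the equivalent ratios of sound power and sound pressure using the standard decibel definitions. The function names are ours, and the round 4dB figure stands in for the "nearly 4dB" quoted above.

```python
def power_ratio_from_db(delta_db):
    """Sound power (intensity) ratio for a level difference in decibels."""
    return 10 ** (delta_db / 10)


def pressure_ratio_from_db(delta_db):
    """Sound pressure ratio for a level difference in decibels."""
    return 10 ** (delta_db / 20)


if __name__ == "__main__":
    delta_db = 4.0  # round figure standing in for "nearly 4dB"
    print(f"Power ratio:    {power_ratio_from_db(delta_db):.2f}x")
    print(f"Pressure ratio: {pressure_ratio_from_db(delta_db):.2f}x")
```

A 4dB difference corresponds to roughly 2.5 times the sound power and about 1.6 times the sound pressure. Perceived loudness does not track either ratio directly, so treat these as physical quantities and not as a claim about how much quieter the card sounds.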

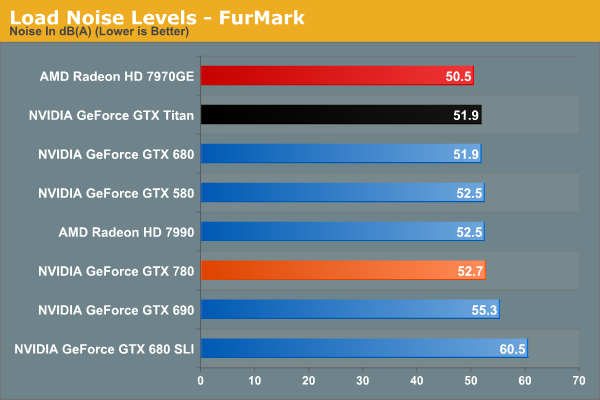

Because of the slightly elevated FurMark temperatures we saw previously, GTX 780 ends up being a bit louder than GTX Titan under FurMark. This isn’t something that we expect to see under any non-pathological workload, and I tend to favor BF3 over FurMark here anyhow, but it does point to there being some kind of minor difference in throttling mechanisms between the two cards. At the same time this means that GTX 780 is still a bit louder than our open air cooled 7970GE, though not by as large a difference as we saw with BF3.

Overall the GTX 780 generally meets or exceeds the GTX Titan in our power, temp, and noise tests, just as we’d expect for a card almost identical to Titan itself. The end result is that it maintains every bit of Titan’s luxury and stellar performance, and if anything improves on it slightly when we’re talking about the all-important aspects of load noise. It’s a shame that coolers such as 780’s are not a common fixture on cheaper cards, as this is essentially unparalleled as far as blower based coolers are concerned.

At the same time this sets up an interesting challenge for NVIDIA’s partners. To pass Greenlight they need to produce cards with coolers that function as well as or better than the reference GTX 780 in NVIDIA’s test environment. This is by no means impossible, but it’s not going to be an easy task. So it will be interesting to see what partners cook up, especially with the obligatory dual fan open air cooled models.

155 Comments


Akrovah - Friday, May 24, 2013

Oh yeah, forgot audio data, all of which gets stored in main RAM. And THAT will take up a pretty nice chunk of space righ there.

Sivar - Thursday, May 23, 2013

You realize, of course, that the 8GB RAM in consoles is 8GB *TOTAL* RAM, whose capacity and bandwidth must be shared for video tasks, the OS, and shuffling the game's data files. A PC with a 3GB video card can use that 3GB exclusively for textures and other video card stuff.

B3an - Friday, May 24, 2013

See my comment above.

DanNeely - Thursday, May 23, 2013

Right now all we've got is the reference card being rebadged by a half dozenish companies. Give it a few weeks or a month and I'm certain someone will start selling a 6GB model. People gaming at 2560 or on 3 monitor setups might benefit from the wait; people who just want to crank AA at 1080p or even just be able to always play at max instead of fiddling with settings (and there're a lot more of them than there are of us) have no real reason to wait. Also, in 12 months Maxwell will be out and with the power of a die shrink behind it the 860 will probably be able to match what the 780 does anyway.

DanNeely - Tuesday, May 28, 2013

On HardOCP's forum I've read that nVidia's told it's partners they shouldn't make a 6GB variant of the 780 (presumably to protect Titan sales). While it's possible one of them might do so anyway; getting nVidia mad at them isn't a good business strategy so it's doubtful any will.

tipoo - Thursday, May 23, 2013

If a slightly cut down Titan is their solution for the higher end 700 series card, I wonder what else the series will be like? Will everything just plop down a price category, the 680 in the 670s price point, etc? That would be uninteresting, but reasonable I guess, given how much power Kepler has on tap. And it wouldn't do much for mobile.

DigitalFreak - Thursday, May 23, 2013

The 770 will be identical to the 680, but with a slightly faster clock speed. I believe the same will be true with the 760 / 670. Those cards are probably still under NDA, which is why they weren't mentioned.

chizow - Thursday, May 23, 2013

Yep 770 at least is supposed to launch a week from today, 5/30. Satisfy demand from the top-down and grab a few impulse buyers who can't wait along the way.

yannigr - Thursday, May 23, 2013

No free games. With an AMD card you also get many AAA games. So Nvidia is a little more expensive than just +$200 compared with 7970GE. I am expecting reviewers someday to stop ignoring game bundles because they come from AMD. We are not talking for one or two games here, for old games, or demos. We are talking about MONEY. 6-7-8-9-10 free AAA titles are MONEY.

Tuvok86 - Thursday, May 23, 2013

I believe nVidia has bundles as well