NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GTX 780 comes into this phase of our testing with a very distinct advantage. Being based on an already exceptionally solid card in the GTX Titan, it’s guaranteed to do at least as well as Titan here. At the same time, because its practical power consumption is going to be a bit lower due to the fewer enabled SMXes and fewer RAM chips, it can be said that it has Titan’s cooler and a yet lower TDP, which can be a silent (but deadly) combination.

GeForce GTX 780 Voltages

| GTX 780 Max Boost | GTX 780 Base | GTX 780 Idle |
| --- | --- | --- |
| 1.1625v | 1.025v | 0.875v |

Unsurprisingly, voltages are unchanged from Titan. GK110’s max safe load voltage is 1.1625v, with 1.2v being the maximum overvoltage allowed by NVIDIA. Meanwhile idle remains at 0.875v, and as we’ll see idle power consumption is equal too.

Meanwhile we also took the liberty of capturing the average clockspeeds of the GTX 780 in all of the games in our benchmark suite. In short, although the GTX 780 has a higher base clock than Titan (863MHz versus 837MHz), the fact that it only goes one boost bin higher (1006MHz versus 992MHz) means that the GTX 780 doesn’t usually clock much higher than GTX Titan under load; for one reason or another it typically settles at the same boost bin as the GTX Titan on tests that offer consistent workloads. This means that in practice the GTX 780 is closer to a straight-up harvested GTX Titan, with no practical clockspeed differences.

GeForce GTX Titan Average Clockspeeds

| | GTX 780 | GTX Titan |
| --- | --- | --- |
| Max Boost Clock | 1006MHz | 992MHz |
| DiRT:S | 1006MHz | 992MHz |
| Shogun 2 | 966MHz | 966MHz |
| Hitman | 992MHz | 992MHz |
| Sleeping Dogs | 969MHz | 966MHz |
| Crysis | 992MHz | 992MHz |
| Far Cry 3 | 979MHz | 979MHz |
| Battlefield 3 | 992MHz | 992MHz |
| Civilization V | 1006MHz | 979MHz |
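To put a number on "no practical clockspeed differences", the per-game averages in the table can be reduced to a single comparison. The following is only a rough sketch: the clock values are transcribed from the table, while the `AVERAGE_CLOCKS_MHZ` dictionary and the `summarize` helper are illustrative names, not anything from our test tooling. It also takes an unweighted mean across games, which is an assumption about how one might summarize the data.

```python
# Average clockspeeds in MHz, transcribed from the table: (GTX 780, GTX Titan).
AVERAGE_CLOCKS_MHZ = {
    "DiRT:S": (1006, 992),
    "Shogun 2": (966, 966),
    "Hitman": (992, 992),
    "Sleeping Dogs": (969, 966),
    "Crysis": (992, 992),
    "Far Cry 3": (979, 979),
    "Battlefield 3": (992, 992),
    "Civilization V": (1006, 979),
}


def summarize(clocks):
    """Return unweighted mean clocks and the games where both cards match."""
    gtx780 = [pair[0] for pair in clocks.values()]
    titan = [pair[1] for pair in clocks.values()]
    mean_780 = sum(gtx780) / len(gtx780)
    mean_titan = sum(titan) / len(titan)
    same_bin = [game for game, (a, b) in clocks.items() if a == b]
    return {
        "mean_780": mean_780,
        "mean_titan": mean_titan,
        # Relative clockspeed advantage of the GTX 780, in percent.
        "advantage_pct": (mean_780 - mean_titan) / mean_titan * 100,
        "same_bin": same_bin,
    }


summary = summarize(AVERAGE_CLOCKS_MHZ)
print(f"GTX 780 mean:   {summary['mean_780']:.2f} MHz")
print(f"GTX Titan mean: {summary['mean_titan']:.2f} MHz")
print(f"GTX 780 advantage: {summary['advantage_pct']:.2f}%")
print(f"Identical average clocks in {len(summary['same_bin'])} of {len(AVERAGE_CLOCKS_MHZ)} games")
```

By this measure the two cards land on identical average clocks in five of the eight games, and the GTX 780's mean advantage works out to well under 1%.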

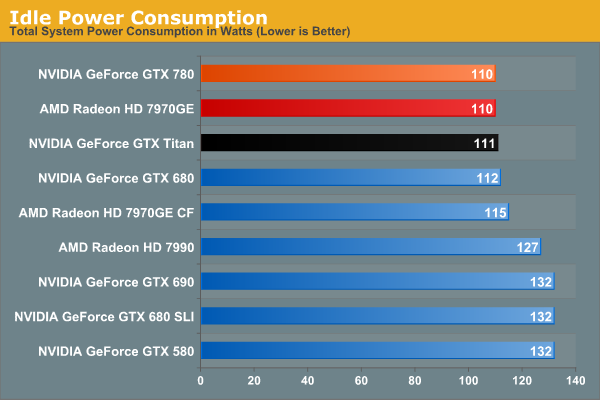

Idle power consumption is by the book. With the GTX 780 equipped, our test system sees 110W at the wall, a mere 1W difference from GTX Titan, and tied with the 7970GE. Idle power consumption of video cards is getting low enough that there’s not a great deal of difference between the latest generation cards, and what’s left is essentially lost as noise.

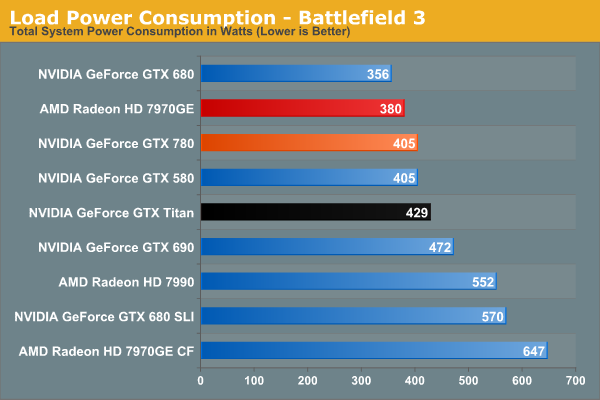

Moving on to power consumption under Battlefield 3, we get our first real confirmation of our earlier theories on power consumption. Between the slightly lower load placed on the CPU from the lower framerate, and the lower power consumption of the card itself, GTX 780 draws 24W less at the wall. Interestingly this is exactly how much our system draws with the GTX 580 too, which accounting for lower CPU power consumption means that video card power consumption on the GTX 780 is down compared to the GTX 580. GTX 780 being a harvested part helps a bit with that, but it still means we’re looking at quite the boost in performance relative to the GTX 580 for a simultaneous decrease in video card power consumption.

Moving along, we see that power consumption at the wall is higher than both the GTX 680 and 7970GE. The former is self-explanatory: the GTX 780 features a bigger GPU and more RAM, but is made on the same 28nm process as the GTX 680. So for a tangible performance improvement within the same generation, there’s nowhere for power consumption to go but up. Meanwhile as compared to the 7970GE, we are likely seeing a combination of CPU power consumption differences and at least some difference in video card power consumption, though this doesn’t make it possible to specify how much of each.

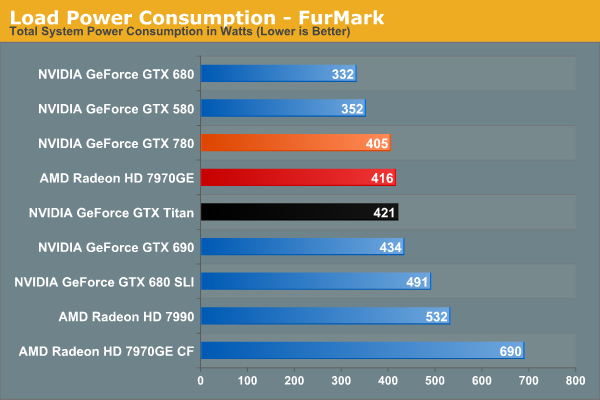

Switching to FurMark and its more pure GPU load, our results become compressed somewhat as the GTX 780 moves slightly ahead of the 7970GE. Power consumption relative to Titan is lower than what we expected it to be considering both cards are hitting their TDP limits, though compared to GTX 680 it’s roughly where it should be. At the same time this reflects a somewhat unexpected advantage for NVIDIA; despite the fact that GK110 is a bigger and logically more power hungry GPU than AMD’s Tahiti, the power consumption of the resulting cards isn’t all that different. Somehow NVIDIA has a slight efficiency advantage here.

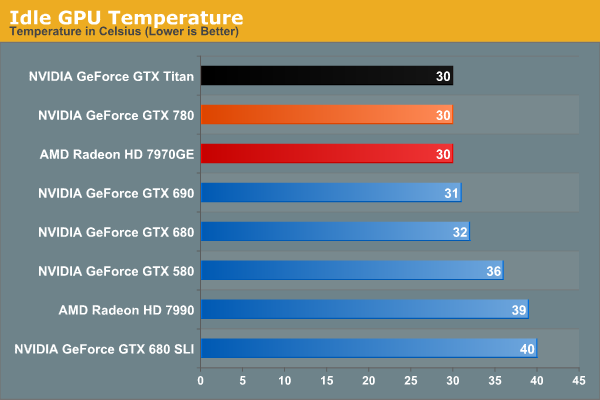

Moving on to idle temperatures, we see that GTX 780 hits the same 30C mark as GTX Titan and 7970GE.

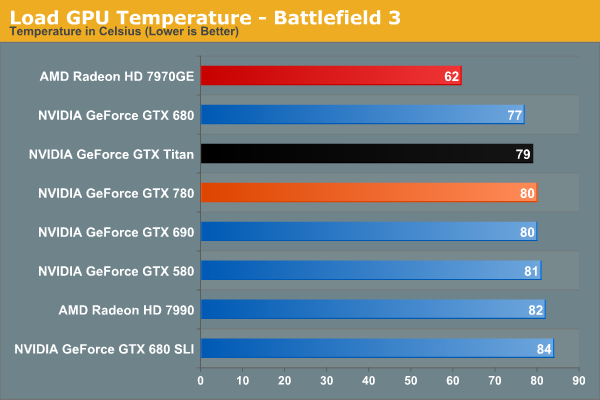

With GPU Boost 2.0, load temperatures are kept tightly in check when gaming. The GTX 780’s default throttle point is 80C, and that’s exactly what happens here, with GTX 780 bouncing around that number while shifting between its two highest boost bins. Note, however, that like Titan this means it’s quite a bit warmer than the open air cooled 7970GE, so it will be interesting to see if semi-custom GTX 780 cards change this picture at all.

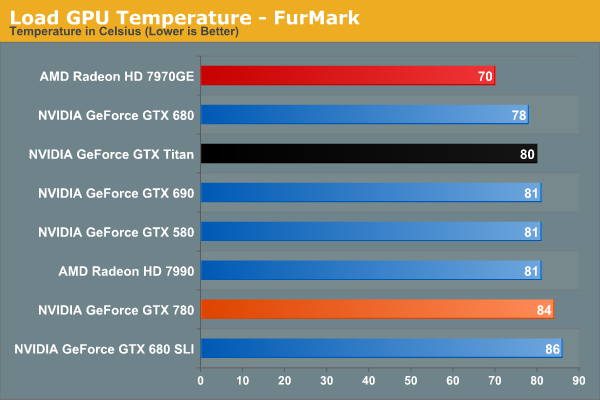

Whereas GPU Boost 2.0 keeps a lid on things when gaming, it’s apparently a bit more flexible on FurMark, likely because the video card is already heavily TDP throttled.

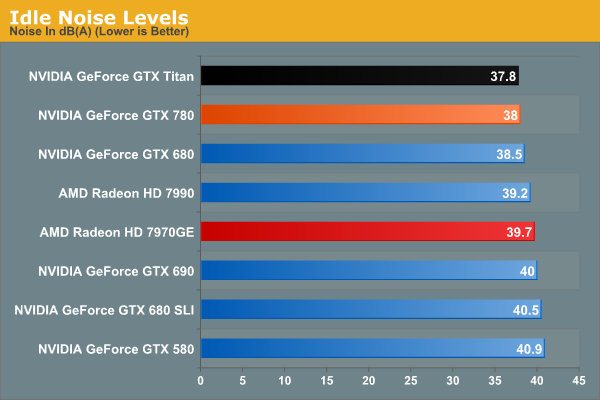

Last but not least we have our look at idle noise. At 38dB GTX 780 is essentially tied with GTX Titan, which again comes as no great surprise. At least in our testing environment one would be hard pressed to tell the difference between GTX 680, GTX 780, and GTX Titan at idle. They’re essentially as quiet as a card can get without being silent.

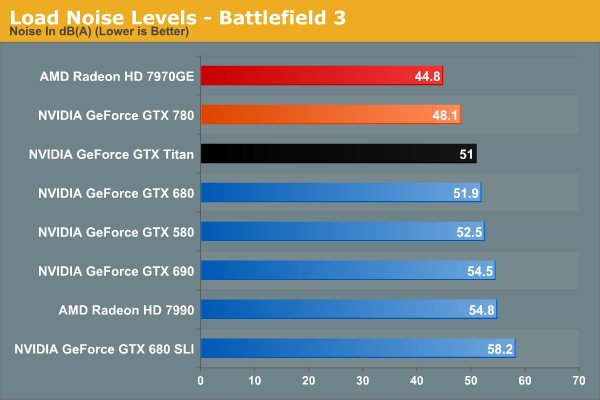

Under BF3 we see the payoff of NVIDIA’s fan modifications, along with the slightly lower effective TDP of GTX 780. Despite – or rather because – it was built on the same platform as GTX Titan, there’s nowhere for idle noise to go down. As a result we have a 250W blower based card hitting 48.1dB under load, which is simply unheard of. At nearly a 4dB improvement over both GTX 680 and GTX 690 it’s a small but significant improvement over NVIDIA’s previous generation cards, and even Titan has the right to be embarrassed. Silent it is not, but this is incredibly impressive for a blower. The only way to beat something like this is with an open air card, as evidenced by the 7970GE, though that does come with the usual tradeoffs for using such a cooler.
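For a sense of why a gap of roughly 4dB can be called small but significant, it helps to remember that the decibel scale is logarithmic. The sketch below applies the standard decibel definitions to a 4dB difference; the function names are illustrative, and it says nothing about how loud the difference is perceived to be, which depends on factors our sound meter does not capture.

```python
def intensity_ratio(delta_db):
    """Ratio of sound intensities (power) for a level difference in dB."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db):
    """Ratio of sound pressures for a level difference in dB."""
    return 10 ** (delta_db / 20)


delta = 4.0  # approximate gap between GTX 780 and GTX 680/690 under BF3
print(f"{delta} dB -> intensity ratio {intensity_ratio(delta):.2f}x")
print(f"{delta} dB -> pressure ratio  {pressure_ratio(delta):.2f}x")
```

In other words, a 4dB reduction corresponds to roughly 2.5 times less acoustic power, even though the number on the chart only moves by a few points.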

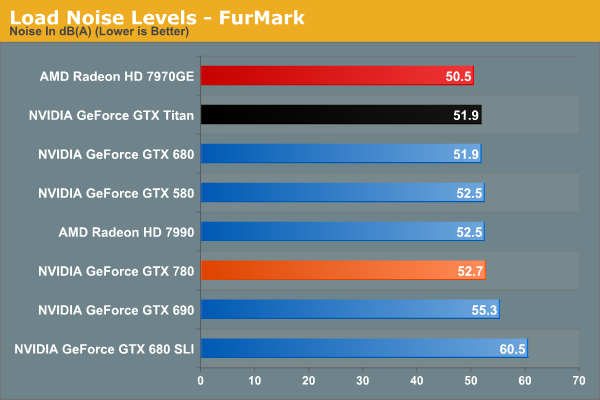

Because of the slightly elevated FurMark temperatures we saw previously, GTX 780 ends up being a bit louder than GTX Titan under FurMark. This isn’t something that we expect to see under any non-pathological workload, and I tend to favor BF3 over FurMark here anyhow, but it does point to there being some kind of minor difference in throttling mechanisms between the two cards. At the same time this means that GTX 780 is still a bit louder than our open air cooled 7970GE, though not by as large a difference as we saw with BF3.

Overall the GTX 780 generally meets or exceeds the GTX Titan in our power, temp, and noise tests, just as we’d expect for a card almost identical to Titan itself. The end result is that it maintains every bit of Titan’s luxury and stellar performance, and if anything improves on it slightly when we’re talking about the all-important aspects of load noise. It’s a shame that coolers such as 780’s are not a common fixture on cheaper cards, as this is essentially unparalleled as far as blower based coolers are concerned.

At the same time this sets up an interesting challenge for NVIDIA’s partners. To pass Greenlight they need to produce cards with coolers that function as well as or better than the reference GTX 780 in NVIDIA’s test environment. This is by no means impossible, but it’s not going to be an easy task. So it will be interesting to see what partners cook up, especially with the obligatory dual fan open air cooled models.

155 Comments

lukarak - Friday, May 24, 2013 - link

1/3rd FP32 and 1/24th FP32 is nowhere near 10-15% apart. Gaming is not everything.

chizow - Friday, May 24, 2013 - link

Yes fine cut gaming performance on 780 and Titan down to 1/24th and see how many of these you sell at $650 and $1000.

Hrel - Friday, May 24, 2013 - link

THANK YOU!!!! WHY this kind of thing isn't IN the review is beyond me. As much good work as Nvidia is doing they're pricing schemes, naming schemes and general abuse of customers has turned me off of them forever. Which convenient because AMD is really getting their shit together quickly.

chizow - Saturday, May 25, 2013 - link

Ryan has danced around this topic in the past, he's a pretty straight shooter overall but it goes without saying why he isn't harping on this in his review. He has to protect his (and AT's) relationship with Nvidia to keep the gravy train flowing. They have gotten in trouble with Nvidia in the past (sometime around the "not covering PhysX enough" fiasco, along with HardOCP) and as a result, their review allocation suffered.

In the end, while it may be the truth, no one with a vested interest in these products and their future success contributing to their livelihoods wants to hear about it, I guess. It's just us, the consumers that suffer for it, so I do feel it's important to voice my opinion on the matter.

Ryan Smith - Sunday, May 26, 2013 - link

While you are welcome to your opinion and I doubt I'll be able to change it, I would note that I take a dim view towards such unfounded nonsense.

We have a very clear stance with NVIDIA: we write what we believe. If we like a product we praise it, if we don't like a product we'll say so, and if we see an issue we'll bring it up. We are the press and our role is clear; we are not any company's friend or foe, but a 3rd party who stakes our name and reputation (and livelihood!) on providing unbiased and fair analysis of technologies and products. NVIDIA certainly doesn't get a say in any of this, and the only thing our relationship is built upon is their trusting our methods and conclusions. We certainly don't require NVIDIA's blessing to do what we do, and publishing the truth has and always will come first, vendor relationships be damned. So if I do or do not mention something in an article, it's not about "protecting the gravy train", but about what I, the reviewer, find important and worth mentioning.

On a side note, I would note that in the 4 years I have had this post, we have never had an issue with review allocation (and I've said some pretty harsh things about NVIDIA products at times). So I'm not sure where you're hearing otherwise.

chizow - Monday, May 27, 2013 - link

Hi Ryan I respect your take on it and as I've said already, you generally comment on and understand more about the impact of pricing and economy more than most other reviews, which is a big part of the reason I appreciate AT reviews over others.

That being said, much of this type of commentary about pricing/economics can be viewed as editorializing, so while I'm not in any way saying companies influence your actual review results and conclusions, the choice NOT to speak about topics that may be considered out of bounds for a review does not fall under the scope of your reputation or independence as a reviewer.

If we're being honest here, we're all human and business is conducted between humans with varying degrees of interpersonal relationships. While you may consider yourself truthful and forthcoming always, the tendency to bite your tongue when friendships are at stake is only natural and human. Certainly, a "How's your family?" greeting is much warmer than a "Hey what's with all that crap you wrote about our GTX Titan pricing?" when you meet up at the latest trade show or press event. Similarly, it should be no surprise when Anand refers to various moves/hires at these companies as good/close friends, that he is going to protect those friendships where and when he can.

In any case, the bit I wrote about allocation was about the same time ExtremeTech got in trouble with Nvidia and felt they were blacklisted for not writing enough about PhysX. HardOCP got in similar trouble for blowing off entire portions of Nvidia's press stack and you similarly glossed over a bunch of the stuff Nvidia wanted you to cover. Subsequently, I do recall you did not have product on launch day and maybe later it was clarified there was some shipping mistake. Was a minor release, maybe one of the later Fermi parts. I may be mistaken, but pretty sure that was the case.

Razorbak86 - Monday, May 27, 2013 - link

Sounds like you've got an axe to grind, and a tin-foil hat for armor. ;)

ambientblue - Thursday, August 8, 2013 - link

Well, you failed to note how the GTX 780 is essentially kepler's version of a GTX 570. It's priced twice as high though. The Titan should have been a GTX 680 last year... its only a prosumer card because of the price LOL. that's like saying the GTX 480 is a prosumer card!!!

cityuser - Thursday, May 23, 2013 - link

whatever Nvidia do, it never improve their 2D quality, I mean , look at what nVidia will give you at BluRay playing, the color still dead , dull, not really enjoyable.

It's terrible to use nVidia to HD home cinema, whatever setting you try.

Why nVidia can ignore this? because it's spoiled.

Dribble - Thursday, May 23, 2013 - link

What are you going on about?

Bluray is digital, hdmi is digital - that means the signal is decoded and basically sent straight to the TV - there is no fiddling with colours, or sharpening or anything else required.