NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Software, Cont: ShadowPlay and "Reason Flags"

Along with providing the game optimization service and SHIELD’s PC client, GeForce Experience has another service that’s scheduled to be added this summer. That service is called ShadowPlay, and not unlike SHIELD it’s intended to serve as a novel software implementation of some of the hardware functionality present in NVIDIA’s latest hardware.

ShadowPlay will be NVIDIA’s take on video recording, the novel aspect of it coming from the fact that NVIDIA is basing the utility around Kepler’s hardware H.264 encoder. To be straightforward, video recording software is nothing new; FRAPS, Afterburner, Precision X, and other utilities all do basically the same thing. However, all of those utilities work entirely in software, fetching frames from the GPU and then encoding them on the CPU. The overhead from this is not insignificant, especially due to the CPU time required for video encoding.

With ShadowPlay NVIDIA is looking to spur on software developers by getting into video recording themselves, and to provide superior performance by using hardware encoding. Notably this isn’t something that was impossible prior to ShadowPlay, but for some reason recording utilities that use NVIDIA’s hardware H.264 encoder have been few and far between. Regardless, the end result should be that most of the overhead is removed by relying on the hardware encoder, minimally affecting the GPU while freeing up the CPU, reducing the amount of time spent on data transit back to the CPU, and producing much smaller recordings all at the same time.

ShadowPlay will feature multiple modes. Its manual mode will be analogous to FRAPS, recording whenever the user desires it. The second mode, shadow mode, is perhaps the more peculiar mode. Because the overhead of recording with the hardware H.264 encoder is so low, NVIDIA wants to simply record everything in a very DVR-like fashion. In shadow mode the utility keeps a rolling window of the last 20 minutes of footage, so that if something happens that the user decides they want to keep after the fact, they can simply pull it out of the ShadowPlay buffer and save it. It’s perhaps a bit odd from the perspective of someone who doesn’t regularly record their gaming sessions, but it’s definitely a novel use of NVIDIA’s hardware H.264 encoder.
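To make the shadow mode idea concrete, here is a minimal sketch of a rolling recording window. NVIDIA has not published how ShadowPlay implements its buffer, so everything here is an assumption for illustration: the `EncodedChunk` and `ShadowBuffer` names, the idea that the encoder hands over footage in timestamped chunks, and the choice to keep the buffer in memory.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class EncodedChunk:
    """Hypothetical unit of already-encoded video (e.g. one H.264 segment)."""
    start_s: float      # timestamp of the chunk's first frame, in seconds
    duration_s: float   # how much footage the chunk covers, in seconds
    payload: bytes      # encoded bitstream data


class ShadowBuffer:
    """Keeps only the most recent `window_s` seconds of encoded footage."""

    def __init__(self, window_s: float = 20 * 60):
        self.window_s = window_s
        self._chunks = deque()  # oldest chunk on the left, newest on the right

    def push(self, chunk: EncodedChunk) -> None:
        """Append a new chunk, then drop anything older than the window."""
        self._chunks.append(chunk)
        cutoff = chunk.start_s + chunk.duration_s - self.window_s
        # Evict chunks that ended at or before the start of the window.
        while self._chunks[0].start_s + self._chunks[0].duration_s <= cutoff:
            self._chunks.popleft()

    def buffered_seconds(self) -> float:
        """Total duration of footage currently held."""
        return sum(c.duration_s for c in self._chunks)

    def save(self) -> bytes:
        """Concatenate the buffered footage, oldest first."""
        return b"".join(c.payload for c in self._chunks)
```

The point of the sketch is that recording continuously costs only a bounded amount of storage: old chunks fall off the front as new ones arrive, and saving "after the fact" is just a copy of whatever is in the window at that moment.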

NVIDIA hasn’t begun external beta testing of ShadowPlay yet, so for the moment all we have to work from is screenshots and descriptions. The big question right now is what the resulting quality will be like. NVIDIA’s hardware encoder does have some limitations that are necessary for real-time encoding, so as we’ve seen in the past with qualitative looks at NVIDIA’s encoder and offline H.264 encoders like x264, there is a quality tradeoff if everything has to be done in hardware in real time. As such ShadowPlay may not be the best tool for reference quality productions, but for the YouTube/Twitch.tv generation it should be more than enough.

Anyhow, ShadowPlay is expected to be released sometime this summer. But since 95% of the software ShadowPlay requires is also required for the SHIELD client, we wouldn’t be surprised if ShadowPlay was released shortly after a release quality version of the SHIELD client is pushed out, which may come as early as June alongside the SHIELD release.

Reasons: Why NVIDIA Cards Throttle

The final software announcement from NVIDIA to coincide with the launch of the GTX 780 isn’t a software product in and of itself, but rather an expansion of NVIDIA’s 3rd party hardware monitoring API.

One of the common questions/complaints about GPU Boost that NVIDIA has received over the last year is about why a card isn’t boosting as high as it should be, or why it suddenly drops down a boost bin or two for no apparent reason. For technically minded users who know the various cards’ throttle points and specifications this isn’t too complex – just look at the power consumption, GPU load, and temperature – but that’s a bit much to ask of most users. So starting with the recently released 320.14 drivers, NVIDIA is exposing a selection of flags through their API that indicate what throttle point is causing throttling or otherwise holding back the card’s clockspeed. There isn’t an official name for these flags, but “reasons” is as good as anything else, so that’s what we’re going with.

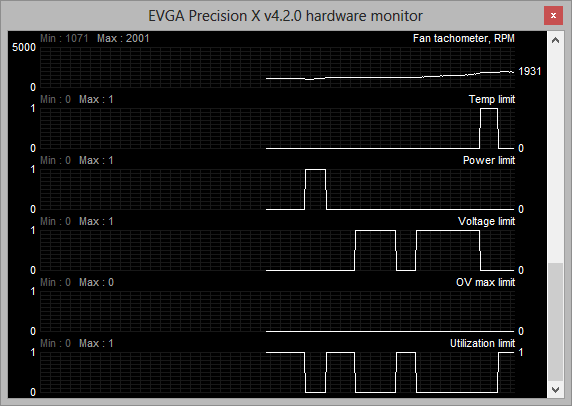

The reason flags are a simple set of five binary flags that NVIDIA’s driver uses to indicate why it isn’t increasing the clockspeed of the card further. These flags are:

- Temperature Limit – The card is at its temperature throttle point

- Power Limit – The card is at its global power/TDP limit

- Voltage Limit – The card is at its highest boost bin

- Overvoltage Max Limit – The card is at its absolute maximum voltage limit (“if this were to occur, you’d be at risk of frying your GPU”)

- Utilization Limit – The current workload is not high enough that boosting is necessary

As these are simple flags, it’s up to 3rd party utilities to decide how they want to present these flags. EVGA’s Precision X, which is NVIDIA’s utility of choice for sampling new features to the press, simply records the flags like it does the rest of the hardware monitoring data, and this is likely what most programs will do.
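As a rough illustration of what a monitoring utility might do with these flags, the sketch below decodes a bitmask into reason names and formats one log sample. The five names come from the list above, but the bit positions, the `ThrottleReason` type, and the `describe` and `log_sample` helpers are all hypothetical; they are not taken from NVIDIA’s API, which we have not seen documented in this form.

```python
from enum import IntFlag


class ThrottleReason(IntFlag):
    # Bit positions are illustrative assumptions, not NVIDIA's actual values.
    TEMPERATURE = 1 << 0
    POWER = 1 << 1
    VOLTAGE = 1 << 2
    OVERVOLTAGE_MAX = 1 << 3
    UTILIZATION = 1 << 4


def describe(mask: int) -> list[str]:
    """Return the names of all reason flags set in `mask`, in bit order."""
    return [reason.name for reason in ThrottleReason if mask & reason]


def log_sample(t_s: float, clock_mhz: int, mask: int) -> str:
    """Format one monitoring sample the way a logging utility might."""
    reasons = ",".join(describe(mask)) or "NONE"
    return f"{t_s:.1f}s {clock_mhz}MHz {reasons}"
```

Because several limits can be hit at once, the flags are treated as a set rather than a single value; a utility that simply records the mask alongside clockspeed and temperature gets a complete picture without needing to interpret anything at capture time.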

NVIDIA is hoping that the reason flags will help users better understand why their card isn’t boosting as high as they’d like it to. At the same time the prevalence of GPU Boost 2.0 and its much higher reliance on temperature makes exposing this data all the more helpful, especially for overclockers who would like to know what attribute they need to turn up to unlock more performance.

155 Comments

milkman001 - Friday, May 24, 2013

FYI, on the "Total War: Shogun 2" page, you have the 2560x1440 graph posted twice.

JDG1980 - Saturday, May 25, 2013

I don't think that the release of this card itself is problematic for Titan owners - everyone knows that GPU vendors start at the top and work their way down with new silicon, so this shouldn't have come as much of a surprise. What I do find problematic is their refusal to push out BIOS-based fan controller improvements to Titan owners. *That* comes off as a slap in the face. Someone spends $1000 on a new video card, they deserve top-notch service and updates.

inighthawki - Saturday, May 25, 2013

The typical swapchain format is something like R8G8B8A8, and the alpha channel is typically ignored (a value of 0xFF is typically written), since it is of no use to the OS, which will not alpha blend it with the rest of the GUI. You can create a 24-bit format, but it's very likely that for performance reasons the driver will allocate it as if it were a 32-bit format and not write to the upper 8 bits. The hardware is often only capable of writing to 32-bit-aligned addresses, so it's more beneficial for the hardware to just waste 8 bits of data and not have to do any fancy shifting to read or write each pixel. I've actually seen cases where some drivers will allocate 8-bit formats as 32-bit formats, taking up 4x the space the user thought they were allocating.

jeremyshaw - Saturday, May 25, 2013

As a current GTX580 owner running at 2560x1440, I don't have any want of upgrade, especially in compute performance. I think I'll hold out for at least one more generation, before deciding.

ahamling27 - Saturday, May 25, 2013

As a GTX 560 Ti owner, I am chomping at the bit to pick up an upgrade. The Titan was out of the question, but the 780 looks a lot better at 65% of the cost for 90% of the performance. The only thing holding me back is that I'm still on z67 with a 2600k overclocked to 4.5 GHz. I don't see a need to rebuild my entire system as it's almost on par with the z77/3770. The problem is that I'm still on PCIe 2.0 and I'm worried that it would bottleneck a 780. Considering a 780 is aimed at those of us with 5xx or lower cards, it doesn't make sense if we have to abandon our platform just to upgrade our graphics card. So could you maybe test a 780 on PCIe 2.0 vs 3.0 and let us know if it's going to bottleneck on 2.0?

Ogdin - Sunday, May 26, 2013

There will be no bottleneck.

mapesdhs - Sunday, May 26, 2013

Ogdin is right, it shouldn't be a bottleneck. And with a decent air cooler, you ought to be able to get your 2600K to 5.0, so you have some headroom there as well.

Lastly, you say you have a GTX 560 Ti. Are you aware that adding a 2nd card will give performance akin to a GTX 670? And two 560 Tis oc'd is better than a stock 680 (VRAM capacity notwithstanding, i.e. I'm assuming you have a 1GB card). Here's my 560Ti SLI at stock:

http://www.3dmark.com/3dm11/6035982

and oc'd:

http://www.3dmark.com/3dm11/6037434

So, if you don't want the expense of an all new card for a while at the cost level of a 780, but do want some extra performance in the meantime, then just get a 2nd 560Ti (good prices on eBay these days), it will run very nicely indeed. My two Tis were only 177 UKP total - less than half the cost of a 680, though atm I just run them at stock speed, don't need the extra from an oc. The only caveat is VRAM, but that shouldn't be too much of an issue unless you're running at 2560x1440, etc.

Ian.

ahamling27 - Wednesday, May 29, 2013

Thanks for the reply! I thought about SLI but ultimately the 1 GB of VRAM is really going to hurt going forward. I'm not going to grab a 780 right away, because I want to see what custom models come out in the next few weeks. Although EVGA's ACX cooler looks nice, I just want to see some performance numbers before I bite the bullet.

Thanks again!

inighthawki - Tuesday, May 28, 2013

Your comment is inaccurate. Just because a game requires "only 512MB" of video ram doesn't mean that's all it'll use. Video memory can be streamed in on the fly as needed off the hard drive, and as a result you can easily use a lot if you wanted as a performance optimization. I would not be the least bit surprised to see games on next gen consoles using WAY more video memory than regular memory. Running a game that "requires" 512MB of VRAM on a GPU with 4GB of VRAM gives it 3.5GB more storage to cache higher resolution textures.

AmericanZ28 - Tuesday, May 28, 2013

NVIDIA=FAIL....AGAIN! 780 Performs on par with a 7970GE, yet the GE costs $100 LESS than the 680, and $250 LESS than the 780.