The Xbox One: Hardware Analysis & Comparison to PlayStation 4

by Anand Lal Shimpi on May 22, 2013 8:00 AM EST

Memory Subsystem

With the same underlying CPU and GPU architectures, porting games between the two should be much easier than ever before. Making the situation even better is the fact that both systems ship with 8GB of total system memory and Blu-ray disc support. Game developers can look forward to the same amount of storage per disc, and relatively similar amounts of storage in main memory. That’s the good news.

The bad news is the two wildly different approaches to memory subsystems. Sony’s approach with the PS4 SoC was to use a 256-bit wide GDDR5 memory interface running somewhere around a 5.5GHz data rate, delivering peak memory bandwidth of 176GB/s. That’s roughly the amount of memory bandwidth we’ve come to expect from a $300 GPU, and great news for the console.
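
The 176GB/s figure follows from bus width times data rate. A 256-bit bus moves 32 bytes per transfer, and at roughly 5.5 billion transfers per second that works out to 176GB/s. Below is a minimal sketch of that arithmetic. It assumes decimal gigabytes (10^9 bytes), as is customary for memory bandwidth, and treats "5.5GHz" as an effective rate of 5500 megatransfers per second. The function name `peak_bandwidth_gbs` is purely illustrative.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mts: float) -> float:
    """Peak theoretical bandwidth in GB/s (10**9 bytes per second).

    bus_width_bits: total width of the memory interface in bits
    data_rate_mts:  effective transfer rate in megatransfers per second
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mts * 1e6 / 1e9


# PS4: 256-bit GDDR5 at an assumed ~5500 MT/s effective data rate
ps4 = peak_bandwidth_gbs(256, 5500)
print(f"PS4 peak bandwidth: {ps4:.1f} GB/s")
```

This is a theoretical peak. Sustained bandwidth in real workloads will be lower.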

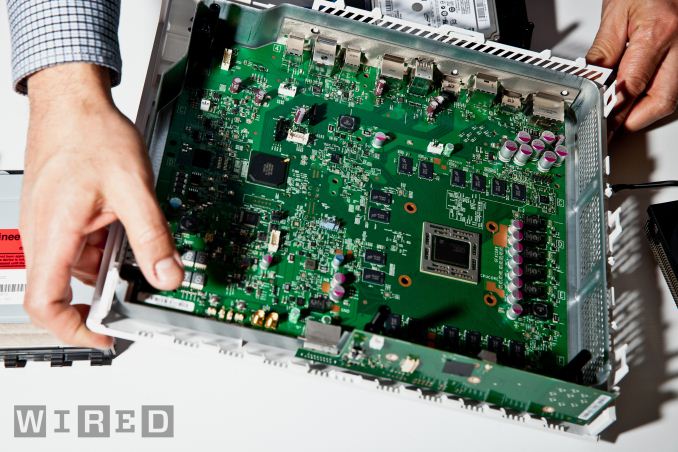

Xbox One Motherboard, courtesy Wired

Die size dictates memory interface width, so the Xbox One keeps a 256-bit interface, but Microsoft chose to go with DDR3 memory instead. A look at Wired’s excellent high-res teardown photo of the motherboard reveals Micron DDR3-2133 DRAM on board (16 x 16-bit DDR3 devices to be exact, for 256 bits in total). A little math gives us 68.3GB/s of bandwidth to system memory.
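
The "little math" can be spelled out as follows. Sixteen 16-bit devices ganged together form the 256-bit interface, and 32 bytes per transfer at 2133 megatransfers per second gives about 68.3GB/s. This sketch assumes decimal gigabytes and treats DDR3-2133 as exactly 2133 MT/s. The helper function and variable names are hypothetical.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mts: float) -> float:
    """Peak theoretical bandwidth in GB/s (10**9 bytes per second)."""
    return bus_width_bits / 8 * data_rate_mts * 1e6 / 1e9


# Xbox One: 16 DDR3 devices, each 16 bits wide, forming one interface
devices = 16
device_width_bits = 16
bus_width = devices * device_width_bits  # 256 bits

xbox_one = peak_bandwidth_gbs(bus_width, 2133)  # DDR3-2133
print(f"{bus_width}-bit DDR3-2133: {xbox_one:.1f} GB/s")
```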

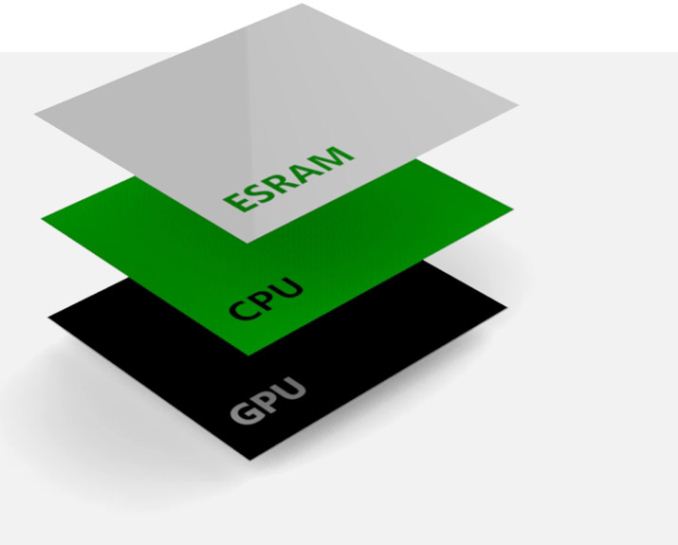

To make up for the gap, Microsoft added embedded SRAM on die (not eDRAM: SRAM is less area efficient, but it has lower latency and doesn't need refreshing). All information points to 32MB of 6T-SRAM, or roughly 1.6 billion transistors for this memory. It’s not immediately clear whether this is a true cache or software-managed memory. I’d hope for the former, but it’s quite possible that it isn’t. At 32MB the eSRAM is more than enough for frame buffer storage, indicating that Microsoft expects developers to use it to offload requests from the system memory bus. Game console makers (Microsoft included) have often used large high-speed memories to get around memory bandwidth limitations, so this is no different. Although 32MB doesn’t sound like much, if it is indeed used as a cache (with the frame buffer kept in main memory), it’s actually enough to achieve a substantial hit rate in current workloads (although there’s not much room for growth).
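
Both size claims in that paragraph can be checked with quick arithmetic. A 6T-SRAM cell uses six transistors per bit, so 32MB of cells comes to about 1.6 billion transistors. That count covers the bit array only and ignores decoders, sense amplifiers and other peripheral logic. For the frame buffer, the sketch assumes a hypothetical 1080p target with 32-bit colour and 32-bit depth/stencil and no MSAA. Those formats are illustrative assumptions, not anything Microsoft has disclosed.

```python
MIB = 2**20

# 32MB of 6T-SRAM: six transistors per bit cell (bit array only,
# ignoring decoders, sense amplifiers and other peripheral logic)
esram_bytes = 32 * MIB
cell_transistors = esram_bytes * 8 * 6
print(f"{cell_transistors:,} transistors")

# Hypothetical 1080p render targets: 32-bit colour + 32-bit depth/stencil, no MSAA
width, height = 1920, 1080
color_bytes = width * height * 4
depth_bytes = width * height * 4
frame_bytes = color_bytes + depth_bytes
print(f"colour + depth: {frame_bytes / MIB:.1f} MiB of {esram_bytes / MIB:.0f} MiB")
```

Under these assumptions the colour and depth targets together take up a little under half of the 32MB. That leaves room for other data, but not for much growth in resolution or MSAA.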

Vgleaks has a wealth of info, likely supplied by game developers with direct access to Xbox One specs, that looks to be very accurate at this point. According to their data, there’s roughly 50GB/s of bandwidth in each direction to the SoC’s embedded SRAM (102GB/s total bandwidth). Adding that to the 68.3GB/s of DDR3 bandwidth and the 30GB/s CPU-GPU connection is how Microsoft arrives at its 200GB/s bandwidth figure, although in reality bandwidth from separate pools and links doesn’t simply add up like that. If it’s used as a cache, the embedded SRAM should significantly cut down on the GPU’s requests to system memory, which will give the GPU much more bandwidth than the 256-bit DDR3-2133 interface alone would imply. Depending on how the eSRAM is managed, it’s very possible that the Xbox One could have effective memory bandwidth comparable to the PlayStation 4’s. If the eSRAM isn’t managed as a cache, however, this all gets much more complicated.
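
To see why the headline number overstates things, here is a deliberately simplified toy model. It is an illustration, not a description of how the Xbox One actually behaves. The model assumes a fraction of the GPU's memory traffic is served from eSRAM and the rest from DDR3. Both pools are assumed to run flat out in parallel with no contention, and CPU traffic is ignored. Whichever pool saturates first limits the total. The function name and the share values are hypothetical.

```python
ESRAM_GBS = 102.0   # embedded SRAM, both directions combined (vgleaks figure)
DDR3_GBS = 68.3     # 256-bit DDR3-2133 system memory
CPU_GPU_GBS = 30.0  # CPU-GPU connection

# The headline figure simply adds the three numbers together
headline = ESRAM_GBS + DDR3_GBS + CPU_GPU_GBS
print(f"Headline sum: {headline:.1f} GB/s")


def effective_gpu_bandwidth(esram_fraction: float) -> float:
    """Toy model: bandwidth the GPU can sustain when `esram_fraction` of its
    traffic is served from eSRAM and the remainder from DDR3.

    Assumes both pools run at peak in parallel with no contention and
    ignores CPU traffic. The pool that saturates first caps the total.
    """
    if esram_fraction <= 0.0:
        return DDR3_GBS
    if esram_fraction >= 1.0:
        return ESRAM_GBS
    return min(ESRAM_GBS / esram_fraction, DDR3_GBS / (1.0 - esram_fraction))


for share in (0.0, 0.25, 0.5, 0.6, 0.75):
    print(f"eSRAM share {share:.0%}: {effective_gpu_bandwidth(share):.1f} GB/s")
```

In this toy model the GPU tops out a little above 170GB/s when about 60% of its traffic is served from eSRAM. That peak is the sum of the two memory pools rather than 200GB/s, because the model counts only the memory pools and leaves the 30GB/s CPU-GPU link out. Any workload that misses the ideal split lands lower.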

Microsoft Xbox One vs. Sony PlayStation 4 Memory Subsystem Comparison

| | Xbox 360 | Xbox One | PlayStation 4 |
|---|---|---|---|
| Embedded Memory | 10MB eDRAM | 32MB eSRAM | - |
| Embedded Memory Bandwidth | 32GB/s | 102GB/s | - |
| System Memory | 512MB 1400MHz GDDR3 | 8GB 2133MHz DDR3 | 8GB 5500MHz GDDR5 |
| System Memory Bus | 128-bits | 256-bits | 256-bits |
| System Memory Bandwidth | 22.4 GB/s | 68.3 GB/s | 176.0 GB/s |

There are merits to both approaches. Sony’s memory subsystem is the closest to that of a present-day discrete GPU: give the GPU a wide, fast GDDR5 interface and call it a day. It’s well understood and simple to manage. The downsides? High-speed GDDR5 isn’t the most power efficient, and Sony is now married to a more costly memory technology for the life of the PlayStation 4.

Microsoft’s approach leaves some questions about implementation, and is potentially more complex to deal with depending on that implementation. Microsoft specifically called out its 8GB of memory as being “power friendly”, a nod to the lower power operation of DDR3-2133 compared to 5.5GHz GDDR5 used in the PS4. There are also cost benefits. DDR3 is presently cheaper than GDDR5 and that gap should remain over time (although 2133MHz DDR3 is by no means the cheapest available). The 32MB of embedded SRAM is costly, but SRAM scales well with smaller processes. Microsoft probably figures it can significantly cut down the die area of the eSRAM at 20nm and by 14/16nm it shouldn’t be a problem at all.

Even if Microsoft can’t deliver the same effective memory bandwidth as Sony, it also has fewer GPU execution resources, so it’s entirely possible that the Xbox One’s memory bandwidth demands will be inherently lower to begin with.

245 Comments

tipoo - Wednesday, May 22, 2013

I think it's so that they can bundle Kinect at a competitive cost.

geniekid - Wednesday, May 22, 2013

As alluded to in the article, only PS4 exclusives are likely to take advantage of the additional processing power. Most developers will probably use the same textures/lighting/etc. on both platforms to lower porting costs so you'd never see an improvement.

I think they were correct to focus more on the Kinect 2.

dysonlu - Wednesday, May 22, 2013

It's not difficult at all to include different levels of textures and lighting. As we all know, PC game makers have been doing that for years. And these new consoles are nothing but PCs.

Flunk - Wednesday, May 22, 2013

Frankly, even if they don't program for it, it means that everything will run just a little smoother on the PS4. I'm now leaning toward the PS4 to replace my 360. If they go the same pay for multiplayer route they did this generation it will cement my decision.

Voldenuit - Wednesday, May 22, 2013

The reality is that games are never fully optimized for any hardware configuration, so even if PS4 users never see higher res textures or higher poly models, having 50% (!!!) more GPU power means they will see smoother framerates with fewer dips.

I'd take that over some Big Brother contraption in my living room (Kinect) that will be broken into by creepy hackers trying to spy on teenage girls. Or I would, if I were buying a console, which still hasn't been decided (cost/affordability rather than any ideological divides).

lmcd - Wednesday, May 22, 2013

Exactly.

Of course Move wasn't better given that a camera was used for that too...

Ramon Zarat - Tuesday, May 28, 2013

Sony's cam is not required to be plugged in for the rest of the console to work. XB1, yes. No Kinect, no console, period.

Sony's cam isn't hooked to an always-on console. I could be offline forever if I want and the console would still work, and it would be impossible to hack if it's not online. If your XB1 is off the net for more than 24H, no console, period.

Sony's cam can actually be turned off, and I mean completely off. XB1, no. It's on, even when everything else is off. Just in case you are too lazy to just get out of the couch and press power on on your console, your Kinect is always on to accept your voice command.

Always-online console + always-on cam and mic and no way to shut any of those things off = sooner than later some Chinese hackers WILL record you f*cking your wife on that couch and blackmail you for some money threatening you to post that video on YouTube.

I just seriously can't wait for this to happen! :)

blacks329 - Wednesday, May 22, 2013

FYI, the new PS Eye (a Kinect-like camera) will be included in every PS4 box. This was confirmed in February. Whether it is required to be plugged in like the X1 remains to be seen, but I wouldn't be surprised.

piiman - Saturday, June 22, 2013

"I'd take that over some Big Brother contraption in my living room (Kinect) that will be broken into by creepy hackers trying to spy on teenage girls"

Paranoid much?

Just place something in front of the camera if you’re really that worried.

lmcd - Wednesday, May 22, 2013

Rather the opposite -- any engine-licensed game will take advantage of additional processing power and/or have way better framerates.