The Xbox One: Hardware Analysis & Comparison to PlayStation 4

by Anand Lal Shimpi on May 22, 2013 8:00 AM EST

Power & Thermals

Microsoft made a point to focus on the Xbox One’s new power states during its introduction. Remember that when the Xbox 360 was introduced, power gating wasn’t present in any shipping CPU or GPU architectures. The Xbox One (and likely the PlayStation 4) can power gate unused CPU cores. AMD’s GCN architecture supports power gating, so I’d assume that parts of the GPU can be power gated as well. Dynamic frequency/voltage scaling is also supported. The result is that we should see a good dynamic range of power consumption on the Xbox One, compared to the Xbox 360’s more on/off nature.
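To see why voltage/frequency scaling widens the power range, a rough sketch helps. It relies on the standard approximation that dynamic CMOS power scales with V² · f. The function name and the operating point below are made up for illustration and are not Xbox One figures.

```python
def relative_dynamic_power(voltage_scale: float, frequency_scale: float) -> float:
    """Dynamic power relative to a baseline, using the P ~ C * V^2 * f approximation.

    Both arguments are ratios against the baseline operating point (1.0 = unchanged).
    Leakage is ignored here; that is the part power gating addresses.
    """
    return voltage_scale ** 2 * frequency_scale


# Hypothetical low-power state: half the clock at 80% of the voltage.
low_power = relative_dynamic_power(voltage_scale=0.8, frequency_scale=0.5)
print(f"dynamic power vs. baseline: {low_power:.0%}")
```

With these assumed numbers the dynamic power drops to roughly a third of the baseline, which is the kind of range a purely on/off design can't offer.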

AMD’s Jaguar is quite power efficient, capable of low single-digit idle power, so I would expect far lower idle power consumption than even the current slim Xbox 360 (50W would be easy, 20W should be doable for truly idle). Under heavy gaming load I’d expect to see higher power consumption than the current Xbox 360, but still less than the original 2005 Xbox 360.

Compared to the PlayStation 4, Microsoft should have the cooler running console under load. Fewer GPU ALUs and lower power memory don’t help with performance but do at least offer one side benefit.

OS

The Xbox One is powered by two independent OSes running on a custom version of Microsoft’s Hyper-V hypervisor. Microsoft made the hypervisor very lightweight, and created hard partitions of system resources for the two OSes that run on top of it: the Xbox OS and the Windows kernel.

The Xbox OS is used to play games, while the Windows kernel effectively handles all apps (as well as things like some of the processing for Kinect inputs). Since both OSes are just VMs on the same hypervisor, they are both running simultaneously all of the time, enabling seamless switching between the two. With much faster hardware and more cores (8 vs 3 in the Xbox 360), Microsoft can likely dedicate Xbox 360-like CPU performance to the Windows kernel while running games without any negative performance impact. Transitioning in/out of a game should be very quick thanks to this architecture. It makes a ton of sense.

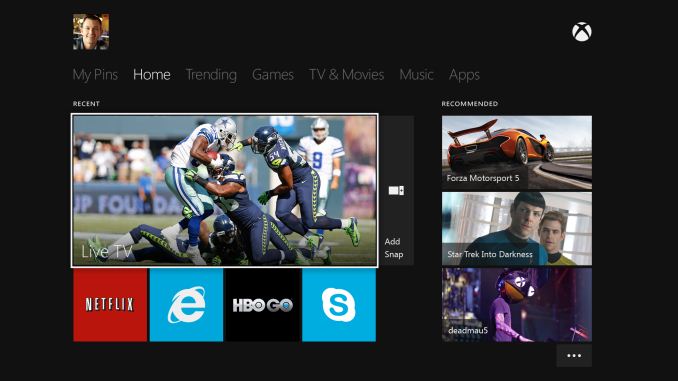

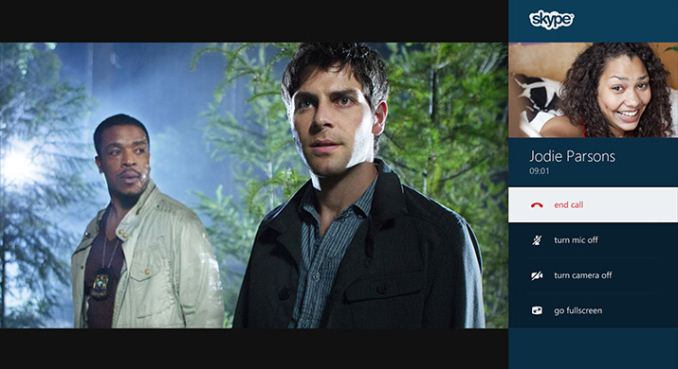

Similarly, you can now multitask with apps. Microsoft enabled Windows 8-like multitasking where you can snap an app to one side of the screen while watching a video or playing a game on the other.

The hard partitioning of resources would be nice to know more about. The easiest thing would be to dedicate a Jaguar compute module to each OS, but that might end up being overkill for the Windows kernel and insufficient for some gaming workloads. I suspect ~1GB of system memory ends up being carved off for Windows.
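As a rough illustration of what a hard partition means, here is a minimal sketch of the "one compute module per OS" split. The `Partition` type, the `validate` helper, the 8GB total and the 7GB/1GB memory split are all assumptions for illustration. Microsoft hasn't disclosed the real layout, and hypervisor overhead is ignored.

```python
from dataclasses import dataclass

TOTAL_CORES = 8       # 8 Jaguar cores, i.e. two four-core compute modules
TOTAL_MEMORY_GB = 8   # assumption: total system memory is not stated in this section


@dataclass(frozen=True)
class Partition:
    name: str
    cores: frozenset   # core indices reserved exclusively for this OS
    memory_gb: float   # memory reserved exclusively for this OS


def validate(partitions):
    """Raise ValueError unless the partitions are 'hard': disjoint cores, no oversubscription."""
    claimed = set()
    for p in partitions:
        if not all(0 <= c < TOTAL_CORES for c in p.cores):
            raise ValueError(f"{p.name}: core index out of range")
        if claimed & p.cores:
            raise ValueError(f"{p.name}: shares cores with another partition")
        claimed |= p.cores
    if sum(p.memory_gb for p in partitions) > TOTAL_MEMORY_GB:
        raise ValueError("memory is oversubscribed")


# The "easiest" split from the text: one compute module per OS, ~1GB for Windows.
xbox_os = Partition("Xbox OS", frozenset(range(0, 4)), memory_gb=7.0)  # placeholder: the remainder
windows = Partition("Windows kernel", frozenset(range(4, 8)), memory_gb=1.0)
validate([xbox_os, windows])
```

The point of the check is that neither OS can borrow from the other: a game can count on its cores and memory regardless of what apps are doing.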

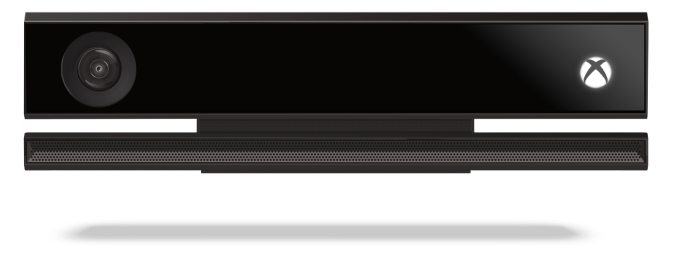

Kinect & New Controller

All Xbox One consoles will ship with a bundled Kinect sensor. Game console accessories generally don’t do all that well if they’re optional. Kinect seemed to be the exception to the rule, but Microsoft is very focused on Kinect being a part of the Xbox going forward so integration here makes sense.

The One’s introduction was done entirely via Kinect enabled voice and gesture controls. You can even wake the Xbox One from a sleep state using voice (say “Xbox on”), leveraging Kinect and good power gating at the silicon level. You can use large two-hand pinch and stretch gestures to quickly move in and out of the One’s home screen.

The Kinect sensor itself is one of 5 semi-custom silicon elements in the Xbox One - the other four are: SoC, PCH, Kinect IO chip and Blu-ray DSP (read: the end of optical drive based exploits). In the One’s Kinect implementation Microsoft goes from a 640 x 480 sensor to 1920 x 1080 (I’m assuming 1080p for the depth stream as well). The camera’s field of view was increased by 60%, allowing support for up to 6 recognized skeletons (compared to 2 in the original Kinect). Taller users can now get closer to the camera thanks to the larger FOV; similarly, the sensor can be used in smaller rooms.
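To put the resolution jump in perspective, here is a quick back-of-the-envelope calculation of the per-frame pixel counts. It only uses the two sensor resolutions quoted above; the helper name is arbitrary, and it says nothing about the depth stream, whose resolution remains an assumption.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels in a single frame."""
    return width * height


original_kinect = pixel_count(640, 480)      # 307,200 pixels
xbox_one_kinect = pixel_count(1920, 1080)    # 2,073,600 pixels

# Ratio of pixels per frame between the two sensors: 6.75
print(xbox_one_kinect / original_kinect)
```

That is 6.75x as many pixels per frame for the sensor and the Kinect IO chip to move and process.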

The Xbox One will also ship with a redesigned wireless controller with vibrating triggers.

Thanks to Kinect's higher resolution and more sensitive camera, the console should be able to identify who is gaming and automatically pair the user to the controller.

TV

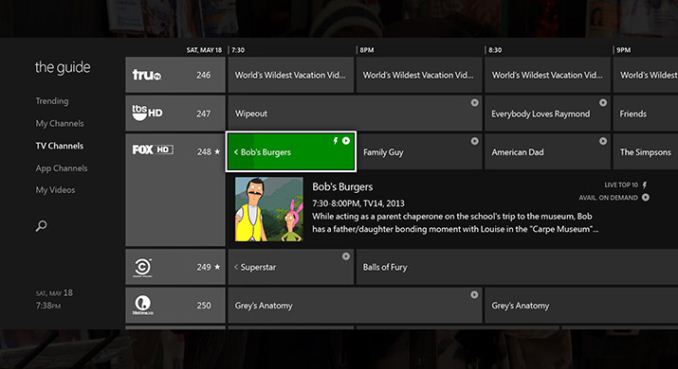

The Xbox One features an HDMI input for cable TV passthrough (from a cable box or some other tuner with HDMI out). Content passed through can be viewed with overlays from the Xbox or just as you would if the Xbox wasn’t present. Microsoft built its own electronic program guide that allows you to tune channels by name, not just channel number (e.g. say “Watch HBO”). The implementation looks pretty slick, and should hopefully keep you from having to switch inputs on your TV - the Xbox One should drive everything. Microsoft appears to be doing its best to merge legacy TV with the new world of buying/renting content via Xbox Live. It’s a smart move.

One area where Microsoft is being a bit more aggressive is in its work with the NFL. Microsoft demonstrated fantasy football integration while watching NFL passed through to the Xbox One.

245 Comments


Shawn74 - Tuesday, September 10, 2013

mmmmm....Custom CPU (6 operations per clock compared to the 4 of PS4) and now overclocked.

GPU (now overclocked)

eSram (ultra fast memory with extremely low access time, we will see its real function soon)

DDR3 (extremely fast access time memory)

Maybe this combination may become a nightmare for the PS4 owners?? xD

Yes, I really think YES.

And please don't forget the new pulse triggers (apparently fantastic and a must have for a completely-new experience)

YES, my final decision is for the ONE

Shad0w59 - Wednesday, September 11, 2013

I don't really trust Microsoft with all that overclocking after Xbox 360's high failure rate.

Shawn74 - Wednesday, September 11, 2013

Shadow, have you seen the cooling system? It's giant..Have you seen the case? It's giant..(a lot of fresh air inside ;-)

Have you seen the Xbox One will detect heat, power down to avoid meltdown? http://www.vg247.com/2013/08/13/xbox-one-will-dete...

And the very hot power supply is outside.....

A perfect system for overclocking.... obviously for me....

Ah, following up on my first message here, a reply to the PS4 team made directly by Albert Penello (Microsoft Director of Product Planning):

"*******************************************************************************************

I see my statements the other day caused more of a stir than I had intended. I saw threads locking down as fast as they pop up, so I apologize for the delayed response.

I was hoping my comments would lead the discussion to be more about the games (and the fact that games on both systems look great) as a sign of my point about performance, but unfortunately I saw more discussion of my credibility.

So I thought I would add more detail to what I said the other day, that perhaps people can debate those individual merits instead of making personal attacks. This should hopefully dismiss the notion I'm simply creating FUD or spin.

I do want to be super clear: I'm not disparaging Sony. I'm not trying to diminish them, or their launch or what they have said. But I do need to draw comparisons since I am trying to explain that the way people are calculating the differences between the two machines isn't completely accurate. I think I've been upfront I have nothing but respect for those guys, but I'm not a fan of the mis-information about our performance.

So, here are a couple of points about some of the individual parts for people to consider:

• 18 CU's vs. 12 CU's =/= 50% more performance. Multi-core processors have inherent inefficiency with more CU's, so it's simply incorrect to say 50% more GPU.

• Adding to that, each of our CU's is running 6% faster. It's not simply a 6% clock speed increase overall.

• We have more memory bandwidth. 176GB/sec is peak on paper for GDDR5. Our peak on paper is 272GB/sec. (68GB/sec DDR3 + 204GB/sec on ESRAM). ESRAM can do read/write cycles simultaneously so I see this number mis-quoted.

• We have at least 10% more CPU. Not only a faster processor, but a better audio chip also offloading CPU cycles.

• We understand GPGPU and its importance very well. Microsoft invented Direct Compute, and have been using GPGPU in a shipping product since 2010 - it's called Kinect.

• Speaking of GPGPU - we have 3X the coherent bandwidth for GPGPU at 30GB/sec which significantly improves our ability for the CPU to efficiently read data generated by the GPU.

Hopefully with some of those more specific points people will understand where we have reduced bottlenecks in the system. I'm sure this will get debated endlessly but at least you can see I'm backing up my points.

I still believe that we get little credit for the fact that, as a SW company, the people designing our system are some of the smartest graphics engineers around – they understand how to architect and balance a system for graphics performance. Each company has their strengths, and I feel that our strength is overlooked when evaluating both boxes.

Given this continued belief of a significant gap, we're working with our most senior graphics and silicon engineers to get into more depth on this topic. They will be more credible than I am, and can talk in detail about some of the benchmarking we've done and how we balanced our system.

Thanks again for letting me participate. Hope this gives people more background on my claims.

"*****************************************************************************

Once again I would like to warn PS4 fans........ Every time Sony announces a new console, Sony publicizes it as the most powerful.... and every time Xbox does the job better....

In my opinion

P.S. Sorry for my bad English, I'm Italian

Shawn74 - Wednesday, September 11, 2013

Penello's post is here: http://67.227.255.239/forum/showthread.php?p=80951...

tipoo - Saturday, September 21, 2013

Regarding the eSRAM, it's now known not to be an automatically managed cache, from developer comments about having to code specifically to use it.