Choosing a Gaming CPU: Single + Multi-GPU at 1440p, April 2013

by Ian Cutress on May 8, 2013 10:00 AM EST

CPU Benchmarks

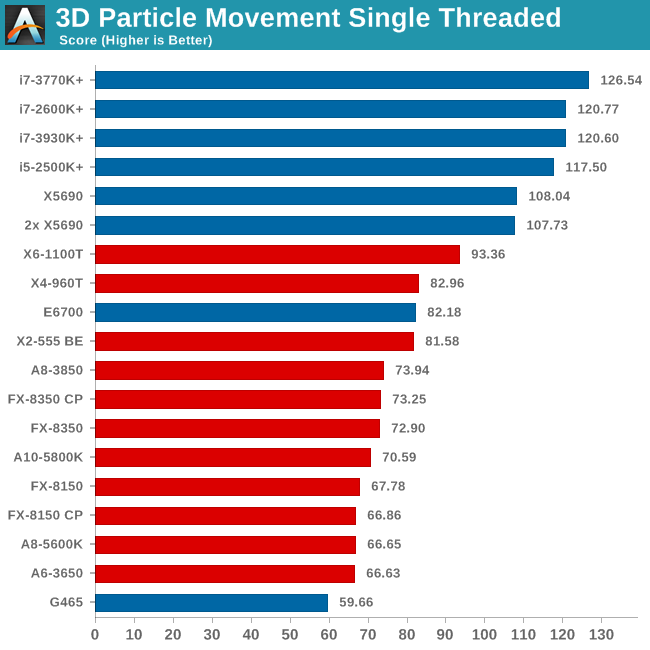

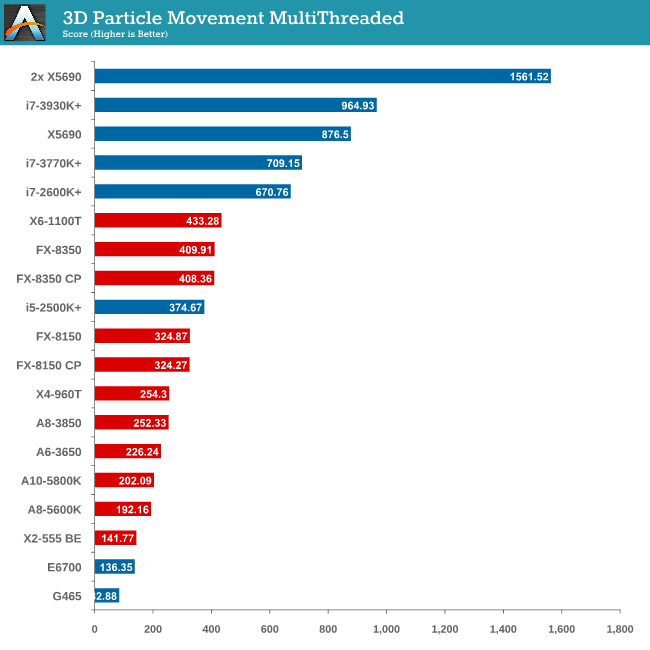

Point Calculations - 3D Movement Algorithm Test

The algorithms in 3DPM employ either uniform or normally distributed random number generation, and vary in the amount of trigonometric operations, conditional statements, generation and rejection, fused operations, etc. The benchmark runs through six algorithms for a specified number of particles and steps, calculates the speed of each algorithm, then sums them all for a final score. This is an example of a real-world situation that a computational scientist may find themselves in, rather than a pure synthetic benchmark. The benchmark is also parallel across the particles simulated, and we test single-threaded as well as multi-threaded performance.
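As a rough illustration of the kind of workload described above, here is a minimal Python sketch of one particle-movement algorithm. It is not 3DPM's code: the function names, the unit step length and the choice of a uniformly random 3D direction per step are all assumptions made for the example.

```python
import math
import random

def move_particle(steps, rng):
    """Random-walk one particle; each step is a unit move in a random 3D direction."""
    x = y = z = 0.0
    for _ in range(steps):
        # Uniform direction on a sphere: cos(theta) uniform in [-1, 1],
        # azimuth phi uniform in [0, 2*pi]. (Assumed algorithm, for illustration.)
        cos_theta = rng.uniform(-1.0, 1.0)
        phi = rng.uniform(0.0, 2.0 * math.pi)
        sin_theta = math.sqrt(1.0 - cos_theta * cos_theta)
        x += sin_theta * math.cos(phi)
        y += sin_theta * math.sin(phi)
        z += cos_theta
    return x, y, z

def simulate(particles, steps, seed=0):
    """Move every particle independently and return the final positions."""
    rng = random.Random(seed)
    return [move_particle(steps, rng) for _ in range(particles)]
```

Because no particle depends on any other, the loop inside `simulate` is the part that can be spread across threads, which is why the test is run in both single-threaded and multi-threaded modes.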

As mentioned in previous reviews, this benchmark is written how most people would tackle the situation – using floating point numbers. This is also where Intel excels, compared to AMD’s decision to move more towards INT ops (such as hashing), which is typically linked to optimized code or normal OS behavior.

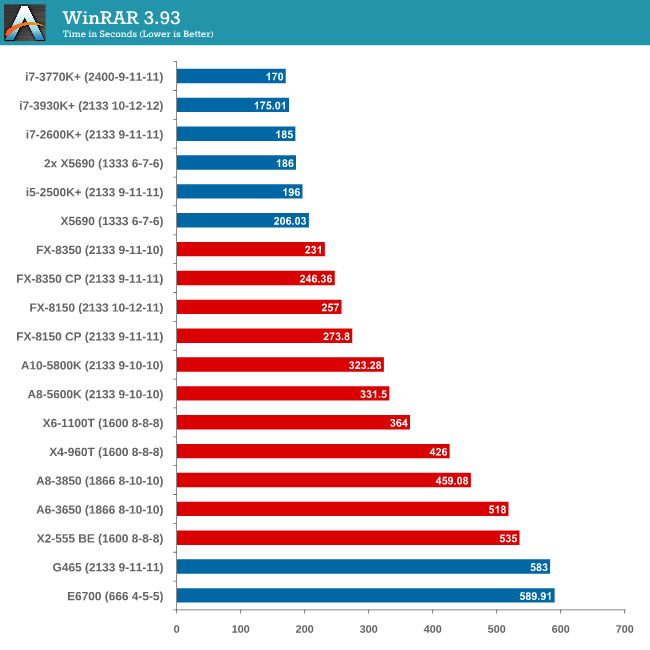

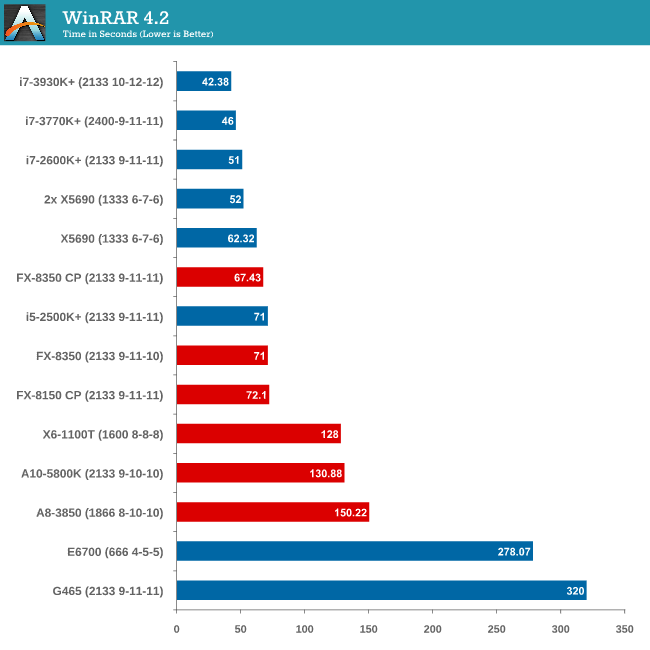

Compression - WinRAR x64 3.93 + WinRAR 4.2

With 64-bit WinRAR, we compress the set of files used in our motherboard USB speed tests. WinRAR x64 3.93 attempts to use multithreading when possible and provides a good test for when a system has a variable threaded load. WinRAR 4.2 does this a lot better! If a system runs at different speeds under different loads, how it switches between those speeds will determine how well it does.

Due to the late inclusion of 4.2, our results list for it is a little smaller than I would have hoped. But it is interesting to note that with the Core Parking updates, an FX-8350 overtakes an i5-2500K with MCT.

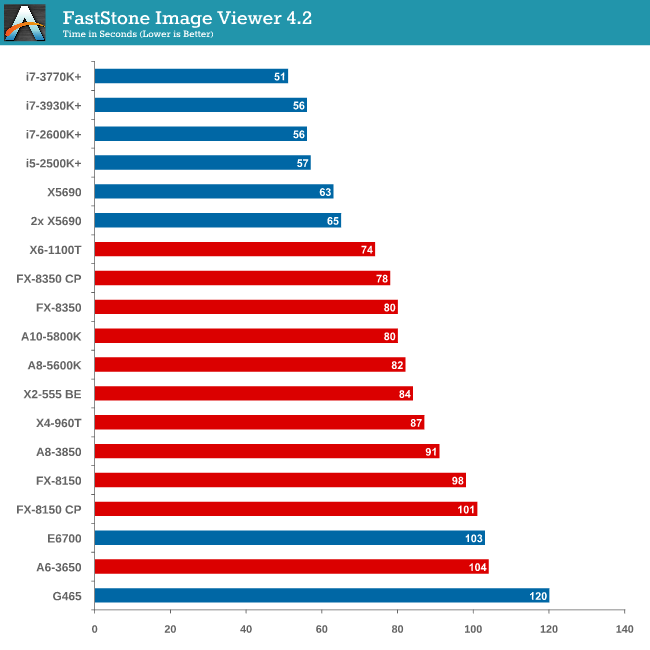

Image Manipulation - FastStone Image Viewer 4.2

FastStone Image Viewer is a free piece of software I have been using for quite a few years now. It allows quick viewing of flat images, as well as resizing, changing color depth, adding simple text or simple filters. It also has a bulk image conversion tool, which we use here. The software currently operates only in single-thread mode, which should change in later versions of the software. For this test, we convert a series of 170 files, of various resolutions, dimensions and types (of a total size of 163MB), all to the .gif format of 640x480 dimensions.

In terms of pure single thread speed, it is worth noting the X6-1100T is leading the AMD pack.

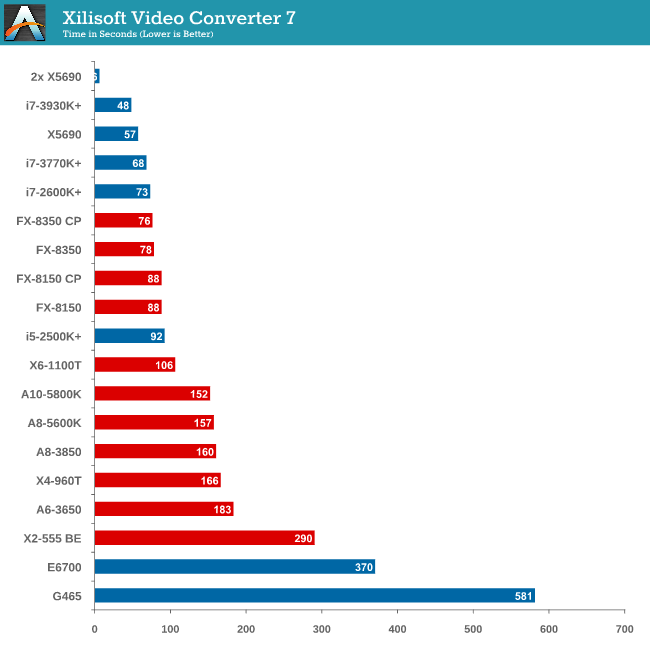

Video Conversion - Xilisoft Video Converter 7

With XVC, users can convert any type of normal video to any compatible format for smartphones, tablets and other devices. By default, it uses all available threads on the system, and in the presence of appropriate graphics cards, can utilize CUDA for NVIDIA GPUs as well as AMD WinAPP for AMD GPUs. For this test, we use a set of 33 HD videos, each lasting 30 seconds, and convert them from 1080p to an iPod H.264 video format using just the CPU. The time taken to convert these videos gives us our result.

XVC is a little odd in how it arranges its multicore processing. For our set of 33 videos, it will arrange them in batches of threads – so if we take the 8 thread FX-8350, it will arrange the videos into 4 batches of 8, and then a fifth batch of one. That final batch will only have one thread assigned to it (!), and will not get a full 8 threads worth of power. This is also why the 2x X5690 finishes in 6 seconds but the normal X5690 takes longer – you would expect a halving of time moving to two CPUs but XVC arranges the batches such that there is always one at the end that only gets a single thread.
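The batching arithmetic above can be sketched in a few lines of Python. This is only a model of the behaviour described in the text, assuming one thread per video and batches no larger than the thread count; the function names are hypothetical and not part of XVC.

```python
def batch_sizes(videos, threads):
    """Split `videos` jobs into batches of at most `threads`, one thread per video."""
    full, rest = divmod(videos, threads)
    return [threads] * full + ([rest] if rest else [])

def idle_thread_slots(videos, threads):
    """Thread slots left unused across all batches."""
    sizes = batch_sizes(videos, threads)
    return len(sizes) * threads - sum(sizes)

print(batch_sizes(33, 8))        # [8, 8, 8, 8, 1]
print(idle_thread_slots(33, 8))  # 7
```

For the 8-thread FX-8350 and our 33 videos, the last batch holds a single video, so seven of the eight threads sit idle while it finishes.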

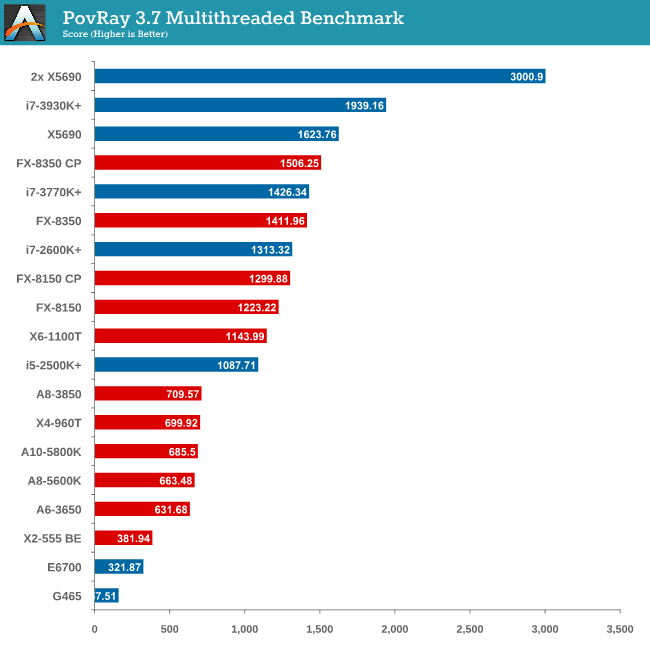

Rendering – PovRay 3.7

The Persistence of Vision RayTracer, or PovRay, is a freeware package for, as the name suggests, ray tracing. It is a pure renderer, rather than modeling software, but the latest beta version contains a handy benchmark for stressing all processing threads on a platform. We have been using this test in motherboard reviews to test memory stability at various CPU speeds to good effect – if it passes the test, the IMC in the CPU is stable for a given CPU speed. As a CPU test, it runs for approximately 2-3 minutes on high end platforms.

The SMP engine in PovRay is not perfect, though scaling up in CPUs gives almost a 2x effect. The results from this test are great – here we see an FX-8350 CPU below an i7-3770K (with MCT), until the Core Parking updates are applied, meaning the FX-8350 performs better!

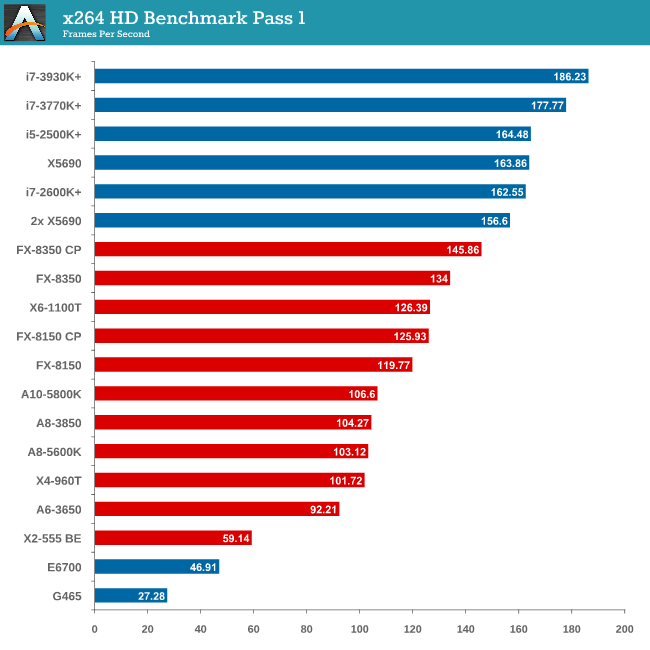

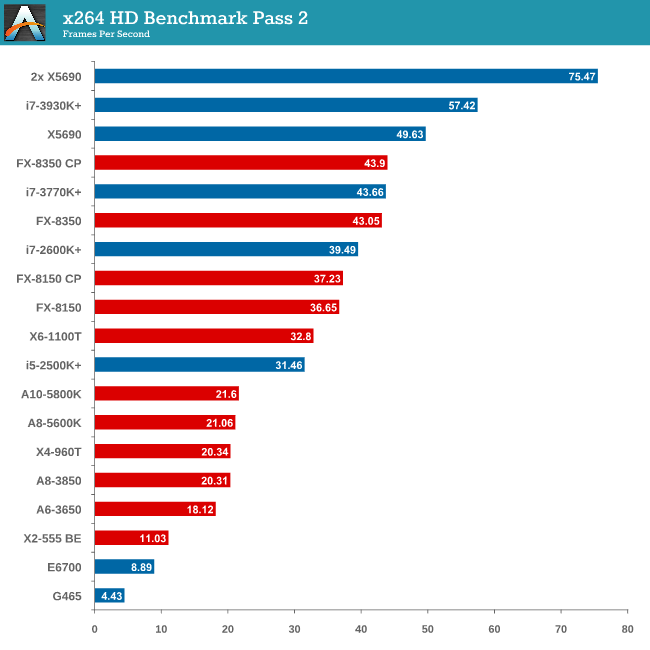

Video Conversion - x264 HD Benchmark

The x264 HD Benchmark uses a common HD encoding tool to process an HD MPEG2 source at 1280x720 at 3963 Kbps. This test represents a standardized result which can be compared across other reviews, and is dependent on both CPU power and memory speed. The benchmark performs a 2-pass encode, and the results shown are the average of each pass performed four times.

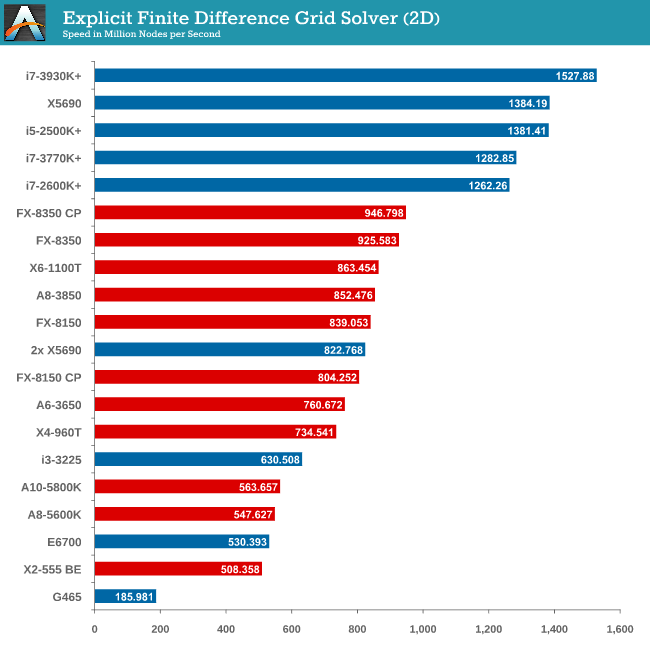

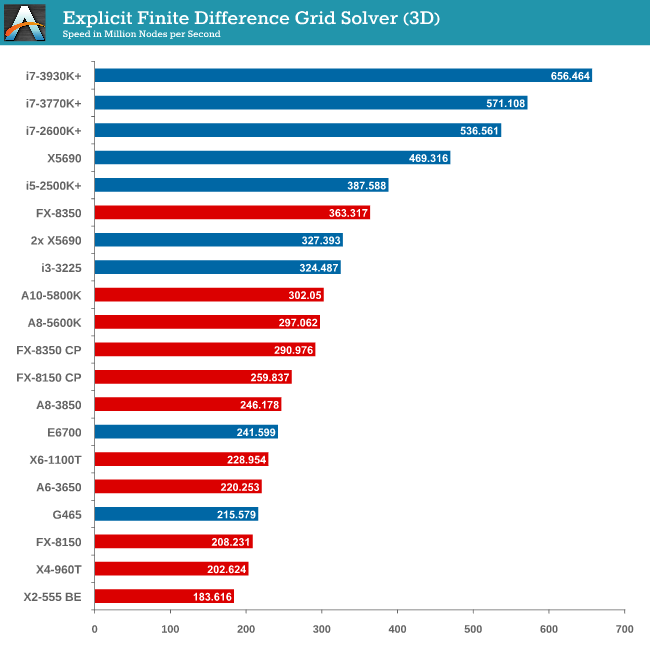

Grid Solvers - Explicit Finite Difference

For any grid of regular nodes, the simplest way to calculate the next time step is to use the values of those around it. This makes for easy mathematics and parallel simulation, as each node calculated is only dependent on the previous time step, not the nodes around it on the current calculated time step. By choosing a regular grid, we reduce the levels of memory access required for irregular grids. We test both 2D and 3D explicit finite difference simulations with 2^n nodes in each dimension, using OpenMP as the threading operator in single precision. The grid is isotropic and the boundary conditions are sinks. Values are floating point, with memory cache sizes and speeds playing a part in the overall score.

Grid solvers do love a fast processor and plenty of cache in order to store data. When moving up to 3D, it is harder to keep that data within the CPU and spending extra time coding in batches can help throughput. Our simulation takes a very naïve approach in code, using simple operations.
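To make the explicit scheme concrete, here is a minimal 2D sketch in Python. It assumes a diffusion-type equation solved with the standard 5-point stencil; the function name and the `alpha` parameter (diffusion coefficient times time step over grid spacing squared) are choices made for this example and are not taken from the benchmark's source.

```python
def explicit_step(grid, alpha):
    """Advance a square 2D grid one time step with the explicit 5-point stencil.

    Boundary nodes are held at zero (sinks). For this 2D scheme, alpha should
    stay at or below 0.25 for the update to remain stable.
    """
    n = len(grid)
    new = [[0.0] * n for _ in range(n)]
    for i in range(1, n - 1):
        for j in range(1, n - 1):
            # Every new value reads only the previous time step, so all
            # interior nodes could be updated in parallel.
            neighbours = (grid[i - 1][j] + grid[i + 1][j]
                          + grid[i][j - 1] + grid[i][j + 1])
            new[i][j] = grid[i][j] + alpha * (neighbours - 4.0 * grid[i][j])
    return new
```

This is the same naive style of loop the text refers to: simple operations, no blocking, and one full pass over the grid per step, which is why cache size matters as the grid grows.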

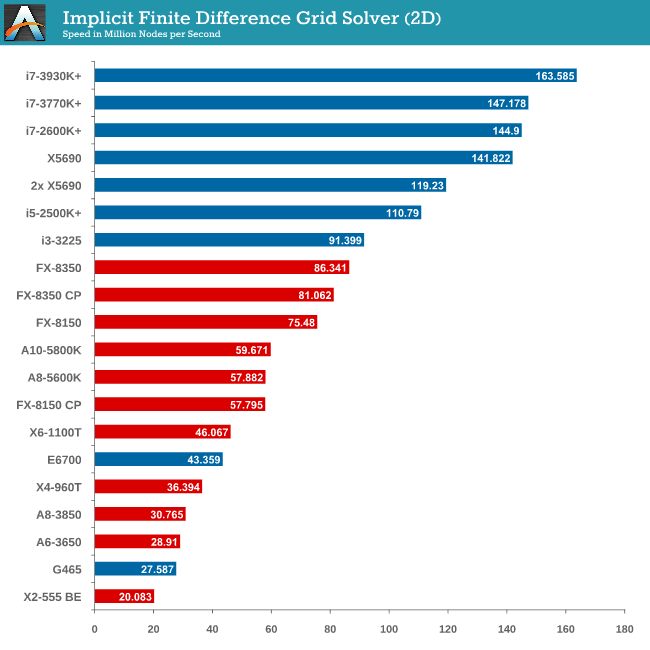

Grid Solvers - Implicit Finite Difference + Alternating Direction Implicit Method

The implicit method takes a different approach to the explicit method – instead of considering one unknown in the new time step to be calculated from known elements in the previous time step, we consider that an old point can influence several new points by way of simultaneous equations. This adds to the complexity of the simulation – the grid of nodes is solved as a series of rows and columns rather than points, reducing the parallel nature of the simulation by a dimension and drastically increasing the memory requirements of each thread. The upside, as noted above, is less stringent stability rules relating to time steps and grid spacing. For this we simulate a 2D grid of 2^n nodes in each dimension, using OpenMP in single precision. Again our grid is isotropic with the boundaries acting as sinks. Values are floating point, with memory cache sizes and speeds playing a part in the overall score.

2D Implicit is harsher than an Explicit calculation – each thread needs a lot more memory, which only ever grows as the size of the simulation increases.
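Solving a row or column at a time means solving a tridiagonal system of simultaneous equations. A common way to do that is the Thomas algorithm, sketched below in Python. Whether the benchmark uses exactly this solver is an assumption, as are the function names and the `r` parameter (the same ratio of time step to grid spacing squared used in a diffusion problem).

```python
def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm: solve a tridiagonal system in O(n).

    lower[i] multiplies x[i-1], diag[i] multiplies x[i], upper[i] multiplies
    x[i+1]; lower[0] and upper[-1] are ignored.
    """
    n = len(rhs)
    c = [0.0] * n  # modified upper diagonal
    d = [0.0] * n  # modified right-hand side
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):  # forward elimination
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        x[i] = d[i] - c[i] * x[i + 1]
    return x

def implicit_row_sweep(row, r):
    """One implicit update along a row of interior nodes with zero (sink) ends."""
    n = len(row)
    return solve_tridiagonal([-r] * n, [1.0 + 2.0 * r] * n, [-r] * n, row)
```

Each sweep needs the scratch arrays `c` and `d` for a whole row, which hints at why the per-thread memory footprint is larger than in the explicit case: a thread works on a full line of the grid rather than a single point.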

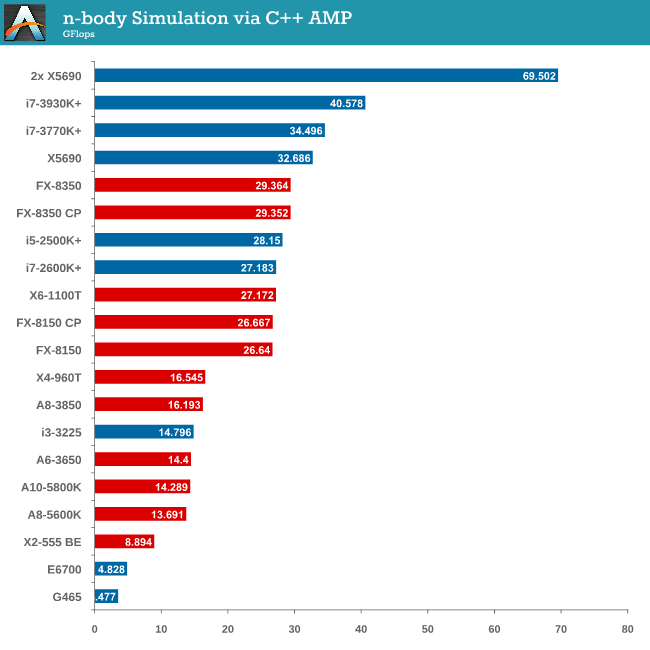

Point Calculations - n-Body Simulation

When a series of heavy mass elements are in space, they interact with each other through the force of gravity. Thus when a star cluster forms, the interaction of every large mass with every other large mass defines the speed at which these elements approach each other. When dealing with millions and billions of stars on such a large scale, the movement of each of these stars can be simulated through the physical theorems that describe the interactions. The benchmark detects whether the processor is SSE2 or SSE4 capable, and runs the relevant code path. We run a simulation of 10240 particles of equal mass - the output for this code is in terms of GFLOPs, and the result recorded was the peak GFLOPs value.

As we only look at base/SSE2/SSE4 depending on the processor (auto-detection), we don’t see full AVX numbers in terms of FLOPs.
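The every-mass-with-every-other-mass interaction described above is the classic direct-sum approach, which can be sketched in plain Python as follows. This is an illustration only, not the benchmark's SSE code: the function name, the gravitational constant set to 1, and the small `softening` term (added to avoid division by zero when two bodies coincide) are assumptions.

```python
import math

def accelerations(positions, mass=1.0, g=1.0, softening=1e-3):
    """Direct O(n^2) gravity: acceleration on every body from every other body."""
    n = len(positions)
    acc = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        xi, yi, zi = positions[i]
        for j in range(n):
            if i == j:
                continue
            dx = positions[j][0] - xi
            dy = positions[j][1] - yi
            dz = positions[j][2] - zi
            dist_sq = dx * dx + dy * dy + dz * dz + softening * softening
            inv_dist_cubed = 1.0 / (dist_sq * math.sqrt(dist_sq))
            # All bodies share the same mass, as in the benchmark's setup.
            acc[i][0] += g * mass * dx * inv_dist_cubed
            acc[i][1] += g * mass * dy * inv_dist_cubed
            acc[i][2] += g * mass * dz * inv_dist_cubed
    return acc
```

The inner loop is a fixed handful of multiplies, adds and one square root per pair of bodies, which is why this kind of code maps well onto SIMD instruction sets and is usually reported in GFLOPs.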

242 Comments

Pheesh - Wednesday, May 8, 2013 - link

"2) Min FPS falls under the issue of statistical reporting. If you run a game benchmark (Dirt3) and in one scene of genuine gameplay there is a 6-car pileup, it would show the min FPS of that one scene. So if that happened on an FX-8350 and min-FPS was down to 20 FPS when others didn't have this scene were around 90 FPS for minimum, how is that easily reported and conveyed in a reasonable way to the public? A certain amount of acknowledgement is made on the fact that we're taking overall average numbers, and that users would apply brain matter with regard to an 'average minimum'."

The point of a benchmark is to provide a consistent test that can be replicated exactly on multiple systems. If you're not able to do that then you aren't really benchmarking anything. That's why 99% of games are not tested in multiplayer but rather single player in experiences they can strictly control (i.e. with test demos). If for some reason the game engine is just that unpredictable even in a strictly controlled test situation you could do multiple trials to take a minimum average.

Minimum FPS is an extremely necessary test and it's easily possible to do. Other sites include it with all of their gaming benchmarks.

Spunjji - Wednesday, May 8, 2013 - link

That doesn't necessarily mean that the numbers they give you are worth a damn...

beginner99 - Thursday, May 9, 2013 - link

"Minimum FPS is an extremely necessary test and its easily possible to do. Other sites include it with all of their gaming benchmarks."

Or you could do 5 runs, discard the worst and best and average the rest (min, max average FPS).

http://en.wikipedia.org/wiki/Truncated_mean

But yeah statistics is extremely complex and error prone. I once read that a large amount of statistics in scientific publications have errors to a certain degree (but not necessarily making the results and conclusions completely wrong!!!)

Or if you actually know such a "special scene" can happen, discard all tests where it happened.

beginner99 - Thursday, May 9, 2013 - link

The main issue here is actually available time or the amount of work. Averages over 3 aren't really that great. If you could run everything 100 times such "special scenes" would be irrelevant.

mapesdhs - Monday, May 20, 2013 - link

Ian,

P55 boards can offer very good RAID0 performance with SSDs, or more importantly RAID1 or RAID10 (I hope those with RAID0 have some kind of sensible backup strategy). See my results:

http://www.sgidepot.co.uk/misc/ssd_tests.txt

One will obviously get more out of newer SSDs using native SATA3 mbds for the sequential tests, but newer tech won't help 4K numbers that much. In reality few would notice the difference between each type of setup. This is especially true given how many later mbds use the really awful Marvell controllers for most of the SATA3 ports (such a shame only a couple are normally controlled by the Intel or other chipset); performance would be better with an older Intel SATA2. I expect many just use the non-Marvell ports only if they can.

What matters is to have an SSD setup of some kind in the 1st place. My P55 system (875K) boots very quick with a Vertex3, gives a higher 3DMark13 physics score than a 3570K, and GPU performance with two 2x 560Ti is better than a stock 680. It's really the previous gen of hw which can present more serious bottlenecks (S775, AM2, DDR2, etc.), but even then results can often be surprisingly decent, eg. oc'd Ph2 965, etc.

Also, RAID0 with SSDs often negates the potential of small I/O performance. Depending on the game/task, this means SSD RAID0 might at times be slower than a single good SSD.

Dribble is right in that respect, improvements are often not as significant as people think or expect (I've read sooo many posts from those who have been disappointed with their upgrades), though it does vary by game, settings, etc. Games which impose a heavier CPU loading (physics, multiplayer, AI etc.) might see more useful speedups from a better CPU, but not always. There are so many factors involved, it can become complicated very quickly.

Ian.

Felix_Ram - Sunday, May 26, 2013 - link

Your 120 hz screen has a frame latency of about 8 ms. Meaning it effectively can't show you more than 60 new fps. Anything above that it shows you the same pixel twice. So basically, you are watching reruns, and anyone who states that he can tell a difference between 60 fps and +60fps is basically kidding himself.

http://forums.anandtech.com/showthread.php?t=23049...

http://forums.steamgames.com/forums/showthread.php...

Felix_Ram - Sunday, May 26, 2013 - link

Can't edit. A screen latency of about 16 ms*

tehh4ck3r - Wednesday, May 8, 2013 - link

You should test a Phenom II X4-965 and a i5-3570K.

B-Unit1701 - Wednesday, May 8, 2013 - link

And throw in a 45nm Core2, preferably over 3.0Ghz

boulard83 - Wednesday, May 8, 2013 - link

Really great review and testing. As for CPUs to add to the list, you could add some very cheap solutions like the G1610 and G2020 to see how these 40-60$ chips perform against all the other chips, or simply compare them to an older E6700 like the one in the test. Other than that, you could also add a 3820 to the testing simply to lower the cost of the X79 setup, making it a little more mainstream vs. a 600$ 3930K.