Server Update April 2013: Positioning the HP Moonshot 1500

by Johan De Gelas on April 11, 2013 8:11 AM EST. Posted in:

- Enterprise CPUs

- Arm

- Xeon

- Enterprise

- Calxeda

- S1200

Our First Impressions

So how attractive is HP's Moonshot 1500 system chassis? HP claims that for the right workloads, these systems will be 77% less costly, consume 89% less energy, take 80% less space and be 97% less complex. HP bases this on a comparison of one of its own proprietary 47U (and thus non-industry-standard) Moonshot racks with five racks of traditional 1U, 2-socket servers. That is a server form factor which was very popular at the beginning of this century. For some reason, some of the PR people at HP seem to have missed the launch of HP's blade servers in 2002, have never heard of Supermicro's Twin(²) designs, and have conveniently forgotten that we now fill our 2U and 3U boxes with memory, install a hypervisor on top and run a few tens of virtual machines on them.

We have serious doubts that the current implementation will offer a significantly higher performance-per-watt ratio than the current low-power server options, even when running the "right" workloads such as web front-end serving, content delivery (mostly photos and images) and memcached servers. Our doubts are based on several data points.

First, consider the performance per watt of the current Atom S1260. At 2GHz, it is clocked 7.5% higher than the 1.86GHz Atom N2800 we tested. That clock speed advantage is about the only advantage it has over the N2800, so we expect it to perform up to 7.5% better, but not more. The advantage of using 1333 MHz instead of 1066 MHz DDR3 is probably very small and partly negated by the fact that ECC takes a bit of performance away. We can therefore get an idea of how an individual Moonshot server cartridge will perform.

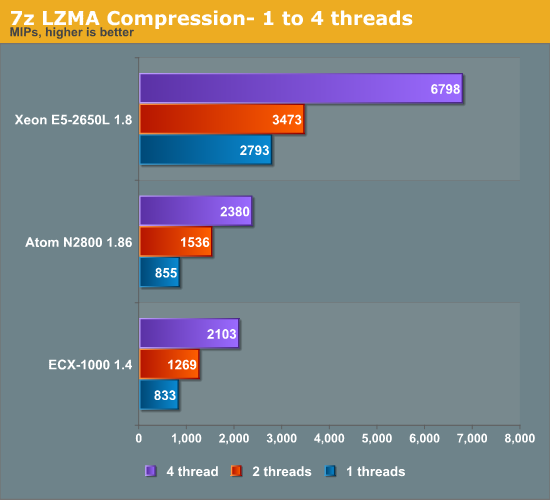

Take a look at the compression benchmark below. Compression is a low IPC workload that's sensitive to memory parallelism and latency. The instruction mix is a bit different, but this kind of workload is still somewhat similar to many server workloads.

You can easily calculate where the Atom S1260 would land: add about 8% to the scores of the Atom N2800. Extrapolating from these numbers, we may expect the Atom S1260 to score 2570 at most. That is about 22% better than Calxeda's ARM Cortex-A9 based quad-core at 1.4GHz. Single-threaded performance would be less than 10% better.
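As a rough sanity check, the extrapolation can be sketched in a few lines of Python. This is only a minimal sketch, assuming that performance scales at best linearly with clock speed. The function and variable names are ours, and the N2800 and Calxeda scores it derives are merely implied by the 8% and 22% figures quoted above; they are not separately measured values.

```python
def clock_scaled_score(base_score: float, base_ghz: float, target_ghz: float) -> float:
    """Upper-bound estimate, assuming the score scales linearly with clock speed."""
    return base_score * target_ghz / base_ghz

# Clock speed uplift of the Atom S1260 (2.0GHz) over the Atom N2800 (1.86GHz).
uplift = clock_scaled_score(1.0, 1.86, 2.0) - 1.0
print(f"Clock uplift: {uplift:.1%}")

# Figures quoted in the text.
s1260_estimate = 2570        # best-case S1260 compression score
n2800_margin = 1.08          # "add about 8%" to the N2800 score
calxeda_margin = 1.22        # "about 22% better" than the Calxeda quad-core

# Scores implied by those figures (derived here, not measured).
implied_n2800 = s1260_estimate / n2800_margin
implied_calxeda = s1260_estimate / calxeda_margin
print(f"Implied Atom N2800 score:   {implied_n2800:.0f}")
print(f"Implied Calxeda 1.4GHz score: {implied_calxeda:.0f}")
```

The point of the exercise is that the S1260 estimate is an upper bound: any workload that is limited by memory latency rather than clock speed will scale less than the 7.5% clock uplift suggests.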

If you consider the TDP of this chip, the age of the Atom core is really showing. The heavily integrated ECX-1000 SoC - 4 cores, management, networking and IO controllers - needs about 5W at most at 1.4GHz (3.8W at 1.1GHz). The Atom S1260 needs 8.5W, and that does not include its network and management chips. So we estimate that the current Atom S1260 probably needs twice as much power to offer the 20% performance advantage illustrated above.
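To put that estimate in numbers, here is a back-of-the-envelope sketch in Python. It normalizes the ECX-1000 at 1.4GHz to a performance of 1.0, takes the roughly 20% Atom advantage from above, and assumes a platform power of twice the ECX-1000 once network and management chips are added. That last figure is our estimate, not a measurement, and the variable names are purely illustrative.

```python
def perf_per_watt(perf: float, watts: float) -> float:
    """Relative performance delivered per watt."""
    return perf / watts

# Normalized performance: Calxeda ECX-1000 at 1.4GHz = 1.0.
ecx1000_perf = 1.0
s1260_perf = 1.20                  # ~20% faster, per the estimate above

ecx1000_watts = 5.0                # SoC including management, networking, IO
s1260_chip_watts = 8.5             # TDP of the chip alone
s1260_platform_watts = 2 * ecx1000_watts  # ASSUMPTION: "twice as much power"

ecx = perf_per_watt(ecx1000_perf, ecx1000_watts)
atom_chip_only = perf_per_watt(s1260_perf, s1260_chip_watts)
atom_platform = perf_per_watt(s1260_perf, s1260_platform_watts)

print(f"S1260 perf/W relative to ECX-1000, chip TDP only:     {atom_chip_only / ecx:.2f}x")
print(f"S1260 perf/W relative to ECX-1000, platform estimate: {atom_platform / ecx:.2f}x")
```

Under these assumptions the Atom lands at roughly 0.7x the performance per watt of the ECX-1000 on chip TDP alone, and at about 0.6x once the extra chips are counted.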

There is more...

26 Comments


dealcorn - Thursday, April 11, 2013 - link

It makes me wonder why HP has been hogging almost all Intel's S1200 production capacity. HP may think there is a use case where some customers will find Moonshot attractive.

Is Briarwood (S12X9) the end of the road for Atom at 32 nm? The addition of many Crystal DMA engines to provide a hardware assist in RAID6 calculations lets Atom be a category killer (in a niche market). I find it funny that after all the criticism, the venerable Atom core is departing 32 nm as a (niche) category killer.

Ammohunt - Thursday, April 11, 2013 - link

They should have named this product line Crapshoot; you would think they would learn from past dealings with Intel. As a career Systems Administrator I don't find this to be an attractive product as compared to scaling density using 1-2U servers crammed with RAM running a modern hypervisor with 24 or more cores. At the same time a 1-2U server can be re-purposed for dedicated tasks.

Spunjji - Friday, April 12, 2013 - link

Pun win.

Jaybus - Friday, April 12, 2013 - link

Which makes me question why a E5 2650L, a 1.8 GHz Sandy Bridge part, was used as a comparison. With the E5-2600 V2 series being launched soon, I think an Ivy Bridge E3 would have been a better comparison. The 10 core E5 V2 at 70 W will allow 20 Ivy Bridge cores (40 hyper-threads) at around 3 GHz and at least 256 GB of RAM in a 1U space. That will allow a lot of web server VMs from a 1U. Can these Atom and ARM systems run as many web servers in a 1U space? For some of us, that is a more important question. Performance per Watt is important, but it doesn't necessarily translate to better performance per 1U space, which is the more important metric for some of us.

Wilco1 - Friday, April 12, 2013 - link

Well according to Anand's Calxeda test, you need 2.7x as many Cortex-A9 cores as E5-2660 threads to equal it on webserving. With Cortex-A15 being at least 50% faster than the A9 that reduces to 1.8x. Assuming the E5 V2 is 25% faster, it becomes 2.3x. The max density of the Moonshot is 45 x 4 quadcores in 4.3U, so about 167 cores per 1U vs 40 threads for the E5 V2, i.e. it gives 1.8 times as much performance per 1U.

vFunct - Thursday, April 11, 2013 - link

Can we have ARM SoC's with stacked 128GB NAND flash chips on an interconnected grid already?

It's obvious that this is where everything is headed. Don't need a giant cartridge/module when everything can be done in a stacked die. Or, perhaps just add an ARM core/network interface to NAND FLASH.

You could probably fit several thousand of them in a chassis. Maybe several hundred thousand in a rack.

wetwareinterface - Friday, April 12, 2013 - link

and that would be a fire you could see from space. a stacked die? and several thousand in a chassis...would require a cooling solution based on vapor phase and massive heat exchangers to keep it from burning itself up.

and further the arm cpu with the ability to access more than 4 GB of ram doesn't yet exist. tying slower flash to it isn't a solution either except in a san. and that would be fairly pointless as you'd hit a bottleneck on the network side so flash storage would be a pointless expense.

Wilco1 - Friday, April 12, 2013 - link

The HP Moonshot server supports 7200 ARM cores already using air cooling. Given that a typical quad core node uses about 5 Watts, stacking flash and/or RAM is certainly feasible. This is pretty much what many mobile phone SoCs already do.

Also ARM's with more than 4GB capability have been on the market for at least 6 months - Cortex-A15 supports 40-bit addressing.

wetwareinterface - Monday, April 15, 2013 - link

okay i'll bite...first off, and i quote, "Don't need a giant cartridge/module when everything can be done in a stacked die." everything that module contains stacked up would equal a heat dissipation nightmare.

or the second option of just adding an arm core and network interface on top of flash would net nothing but a slow waste of cash.

and also the a15 has a 40 bit address space so it can see up to 1 TB of ram but each thread can only use 32bit of that address space so.... 4GB cap.

and stacking the ram and or flash is feasible... but when you cram a bunch of modules together in the thousands you have heat dissipation problems. the tighter you group the heat sources the more geometrical progression for heat buildup becomes an issue. that heat has to go somewhere and with nowhere to go and no air volume to exchange with the more you have to go with extreme cooling solutions.

phones get away with stacking the cpu and other elements because they don't have to run more than a few minutes accessing those elements at once so heat buildup doesn't become a problem in a typical use scenario. but it does cause issues when you use a phone outside the typical usage it sees.

a server would melt down with all that being accessed at once and constantly if it were stacked and air cooled.

vFunct - Monday, April 15, 2013 - link

If only there were some way to remove that heat, in a way that would "cool" the system..

Also, the ARM A15 is the last ARM core ARM will ever design. They don't plan on making any future designs past that. There are no plans on making 64-bit ARM cores, ever.

So, everything you say is right.