NVIDIA Tegra 4 Architecture Deep Dive, Plus Tegra 4i, Icera i500 & Phoenix Hands On

by Anand Lal Shimpi & Brian Klug on February 24, 2013 3:00 PM EST

Cortex A15 Architecture

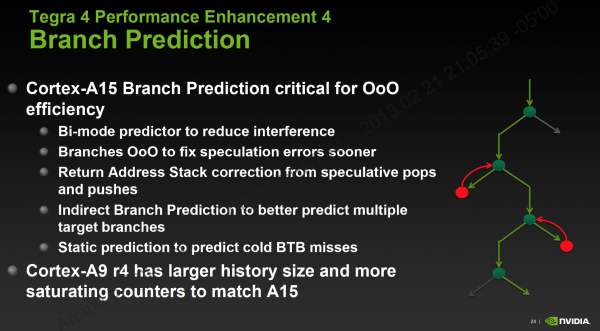

I want to go deeper into ARM’s Cortex A15 but I’ll have to save that for another time. At a high level you’re looking at a much deeper, much wider architecture than the Cortex A9. The integer pipeline is significantly deeper (15 stages vs. 9 stages), however branch prediction has been improved considerably to hopefully offset the difference.
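As a rough illustration of why a deeper pipeline leans so heavily on better branch prediction, the cost of mispredictions can be modeled as pipeline depth times branch frequency times misprediction rate. This is only a sketch: it assumes the flush penalty equals the full pipeline depth, and the branch fraction and misprediction rates are made-up placeholders, not ARM figures. Only the 9 and 15 stage depths come from the text above.

```python
# Toy model: extra cycles per instruction (CPI) lost to branch mispredictions.
# Assumption: a mispredict flushes the whole pipeline, so penalty == depth.

A9_DEPTH = 9    # integer pipeline stages (from the article)
A15_DEPTH = 15  # integer pipeline stages (from the article)

BRANCH_FRACTION = 0.20      # hypothetical: 1 in 5 instructions is a branch
A9_MISPREDICT_RATE = 0.05   # hypothetical misprediction rate


def mispredict_cpi(depth, branch_fraction, mispredict_rate):
    """Average cycles per instruction lost to pipeline flushes."""
    return depth * branch_fraction * mispredict_rate


def break_even_rate(old_depth, new_depth, old_rate):
    """Misprediction rate the deeper pipeline needs to match the old cost."""
    return old_rate * old_depth / new_depth


a9_cost = mispredict_cpi(A9_DEPTH, BRANCH_FRACTION, A9_MISPREDICT_RATE)
a15_same_predictor = mispredict_cpi(A15_DEPTH, BRANCH_FRACTION, A9_MISPREDICT_RATE)
a15_target_rate = break_even_rate(A9_DEPTH, A15_DEPTH, A9_MISPREDICT_RATE)

print(f"A9 penalty CPI:                 {a9_cost:.3f}")
print(f"A15 penalty CPI, same predictor: {a15_same_predictor:.3f}")
print(f"A15 break-even mispredict rate:  {a15_target_rate:.3f}")
```

Under these assumptions, the 15-stage pipeline has to cut its misprediction rate to 9/15 (60%) of the 9-stage design's rate just to break even on flush cost, whatever the starting rate is.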

The front end is 50% wider and has double the instruction fetch bandwidth of the Cortex A9, which helps increase instruction level parallelism. In order to capitalize on the 3-wide machine, ARM dramatically increased the size of the reorder buffer and all associated data structures within the machine. While the Cortex A9 could keep around 32 - 40 decoded instructions in its reorder buffer, Cortex A15 can hold 128 - an increase of up to 4x. The larger ROB alone gives you a good idea of the magnitude of difference between the Cortex A9 and A15. While the former was a natural evolution over the Cortex A8, ARM’s Cortex A15 is really a leap forward both in performance and power consumption - clearly aimed at something much more than just smartphones.
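The ratios in that paragraph are easy to check. The short sketch below works them out from the figures quoted above; the 2-wide A9 front end is inferred from "50% wider" leading to a 3-wide machine, and the "cycles to fill" figure is a simplification that assumes the front end delivers its full width every cycle.

```python
# Front-end width and reorder buffer (ROB) figures quoted in the article.
A9_WIDTH = 2              # inferred: 3-wide is "50% wider" than 2-wide
A15_WIDTH = 3
A9_ROB_ENTRIES = (32, 40)  # "around 32 - 40" entries
A15_ROB_ENTRIES = 128

# Relative front-end width increase.
width_gain = A15_WIDTH / A9_WIDTH - 1

# ROB growth against the low and high ends of the A9 range.
rob_ratio_vs_32 = A15_ROB_ENTRIES / A9_ROB_ENTRIES[0]
rob_ratio_vs_40 = A15_ROB_ENTRIES / A9_ROB_ENTRIES[1]

# Idealized cycles of full-width fetch needed to fill each window.
a9_fill_cycles = A9_ROB_ENTRIES[1] / A9_WIDTH
a15_fill_cycles = A15_ROB_ENTRIES / A15_WIDTH

print(f"Front-end width gain: {width_gain:.0%}")
print(f"ROB growth: {rob_ratio_vs_40:.1f}x to {rob_ratio_vs_32:.1f}x")
print(f"Cycles to fill ROB: A9 {a9_fill_cycles:.1f}, A15 {a15_fill_cycles:.1f}")
```

The "up to 4x" figure corresponds to the 32-entry end of the A9 range; against 40 entries the growth is 3.2x. Either way the window grows faster than the front-end width, so the A15 can look further ahead in the instruction stream for independent work.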

Getting to the execution core, A15 continues the trend of being considerably wider than A9. There are more execution ports and more execution units, all of which help to increase ILP/single threaded performance. ARM went to multiple, independent issue queues in order to keep frequencies high. Each issue queue can accept up to three instructions and all issue queues can dispatch in parallel.

The A15 can execute instructions out of order like the A9, however its abilities grow quite a bit. All FP/NEON instructions had to be executed in-order on Cortex A9, but they can now be executed OoO in the A15. Despite the beefier OoO execution engine, the Cortex A15 can’t reorder all memory operations (independent loads can be executed out of order, but stores can’t be completed ahead of loads).

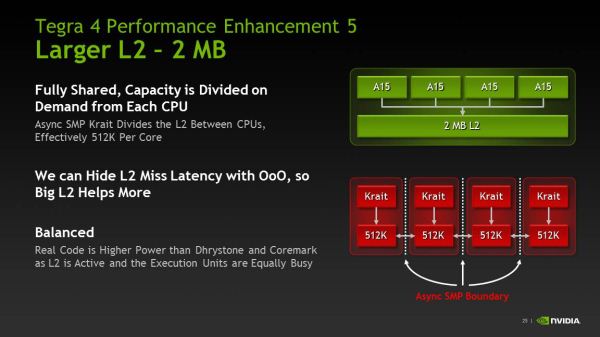

The Cortex A15 moves back to an integrated L2 cache structure, rather than a separate IP block as was the case with the Cortex A9. L1 and L2 cache latencies remain largely unchanged, although I do believe A15 does see a 1 - 2 cycle penalty over A9 in a few cases. The level 2 TLB and other data structures grow in size considerably in order to feed the hungrier machine.

Although the L1 caches remain the same size as NVIDIA’s Cortex A9 (32KB I + 32KB D), the L2 cache grows to 2MB. The 2MB L2 is shared by all four cores (the companion core has its own private 512KB L2), and any individual core can occupy up to the entire 2MB space on its own. Alternatively, all four cores can evenly share and access the large L2.

75 Comments


PingviN - Monday, February 25, 2013 - link

Tegra made an operating loss of $150 million for fiscal year 2012, despite getting into both the Nexus 7 (the refresh coming this year has been lost to Qualcomm) and the Surface RT. Sales prognosis cut almost in half for the fiscal year 2013. To date, Nvidia hasn't had any profit coming out of Tegra and now it's in limbo mode until Tegra 4 is released because Tegra 3 gets smashed by its competition.

It's been a pretty crappy year for Tegra.

guilmon14 - Tuesday, February 26, 2013 - link

I don't know anything about this company "tegra", but have you heard about Nvidia? I heard they're doing great!

http://nvidianews.nvidia.com/Releases/NVIDIA-Repor...

According to this Nvidia is up in income, revenue, and equity.

If you wanted to check the easy way just look at nvidia's wikipedia page, gives you all the nice money numbers.

http://en.wikipedia.org/wiki/Nvidia

trajan2448 - Monday, February 25, 2013 - link

5 years down the road phones will be cooking our dinner. It's amazing how fast the tech is advancing now.

Scannall - Monday, February 25, 2013 - link

If they don't hustle right along, SOC's with the PowerVR 6 series (Rogue) will beat them to market. And considering their GPU just barely squeaks by the iPad as it is, it will be behind early on.

Khato - Monday, February 25, 2013 - link

Was it specifically stated that the Tegra 4 SPECint/W figure was running on the high speed cores? As is mentioned later on the page, a SPECint2000 of 520 is within reach of the power optimized companion core, so the only reason I'd expect NVIDIA to not use the companion core for this data is if they explicitly stated that it wasn't.

Part of the cause for my suspicion is that the Power vs DMIPS chart that Samsung recently provided for the Exynos 5 Octa shows 8k DMIPS at 1 watt... and from the press coverage back in 2009 for the A9 hard macros there's both the 10k DMIPS at 1.9 watts and 2GHz with the speed optimized and 4k DMIPS at 250 mW and 800 MHz for the power optimized. Which equate to 5.26 DMIPS/mW and 8 DMIPS/mW, respectively. Now the 2GHz data point should be even worse off than Tegra 3 and yet it only shows the Samsung Exynos 5 Octa as being 52% more efficient.

Going into estimating rather than published numbers, if we up the efficiency of Tegra 3 a bit compared to that 2GHz figure then it's likely going to be closer to A15 being 30% more efficient... which you then add the known ~40% efficiency bump going from a performance to power implementation and you get the kind of drastic increase NVIDIA is touting.

Wilco1 - Monday, February 25, 2013 - link

It doesn't matter whether they used the 5th core or one of the fast ones. By definition the cross over point is where the 5th core uses as much power as a fast core. Since that is ~800MHz, the power efficiency is the same. The 5th core can likely clock to well over 1GHz, but then it uses more power than a fast core.

You are basically right that some of the 73% MIPS/W improvement comes from the 40-28nm process change. However the combined improvement of process and micro architecture means that you can use the low power core far more often. The 5th core in Tegra 4 is effectively more than 3 times as fast as the one in Tegra 3. So that means lots of tasks which needed 1-2 fast Tegra 3 cores can now run on the 5th Tegra 4 core. That means the power efficiency will actually improve by what NVidia suggests.

Khato - Monday, February 25, 2013 - link

Mind sharing the source for that? The wording in this article implies differently - "That 825MHz mark ends up being an important number, because that’s where the fifth companion Cortex A15 tops out at." Given 1.9GHz for the performance-optimized cores, something around 800 MHz sounds about right for the max frequency of a power-optimized version.

Anyway, there's no question that Tegra 4 will be quite a bit more power efficient simply by virtue of being able to run more workloads exclusively on the companion core. As said before, in exchange for a much lower cap on maximum frequency a power optimized synthesis gives at least a 40% bump in efficiency... and now that power optimized core will still deliver respectable performance.

Wilco1 - Monday, February 25, 2013 - link

Read http://www.nvidia.com/content/PDF/tegra_white_pape... it explains the difference between leakage and active power on low power and high performance transistors. It explicitly says the 5th core in Tegra is capped at 500MHz as that is where it is as power efficient as a fast core. The graphs and the word capped suggest the 5th core can go faster but there is no point.

Note that Tegra 3 uses a different process with low power transistors for the 5th core rather than a low power synthesis (not that they couldn't have done that too, but it is never mentioned and the 5th core looks pretty much the same in the die plots). I presume Tegra 4 does the same on the 28nm process.

Khato - Tuesday, February 26, 2013 - link

Okay, so your commentary is based on the Tegra 3 which is using an entirely different approach to power savings for the companion core. Note that all of the data I was referencing for the difference in efficiency between ARM's two A9 hard macros was on the same process and hence is more applicable to the case of Tegra 4. As you correctly state, Tegra 3 gains its power efficiency for the companion core by using the LP process rather than a low power synthesis, likely due to it being a simpler and faster route to the desired end result and equally effective for their design goals.

Tegra 4 isn't playing process games for the companion core. How do you gain efficiency on the same process? You loosen timings to allow for the usage of smaller transistors, less flop stages, so on so forth. The end result being that you sacrifice maximum switching speed to reduce both leakage and dynamic power. From all the information that NVIDIA has made available it's a completely different implementation from Tegra 3.

Wilco1 - Tuesday, February 26, 2013 - link

Tegra 4 does exactly the same as Tegra 3. According to NVidia's white paper on Tegra 4 (http://www.nvidia.com/docs/IO/116757/NVIDIA_Quad_a...) it also uses low power transistors for the 5th core. Again if you look at the die photos of Tegra 4 all 5 cores are identical just like Tegra 3. So that seems to exclude a different synthesis.

The way NVidia get a low power core is by using low power transistors. TSMC 28nm process supports several different transistor libraries, from high performance high leakage to low performance low leakage. Based on the information we have all they have done is swap the transistor libraries.