NVIDIA Tegra 4 Architecture Deep Dive, Plus Tegra 4i, Icera i500 & Phoenix Hands On

by Anand Lal Shimpi & Brian Klug on February 24, 2013 3:00 PM EST

The Cortex A9 r4p1

Although we just call ARM’s previous architecture by its Cortex A9 name, there have been multiple revisions to the A9 architecture since its introduction. Tegra 2 implemented Cortex A9 r1p1, while Tegra 3 used r2p9. With Tegra 4i, NVIDIA moved to the absolute latest version of the Cortex A9 core: r4p1.

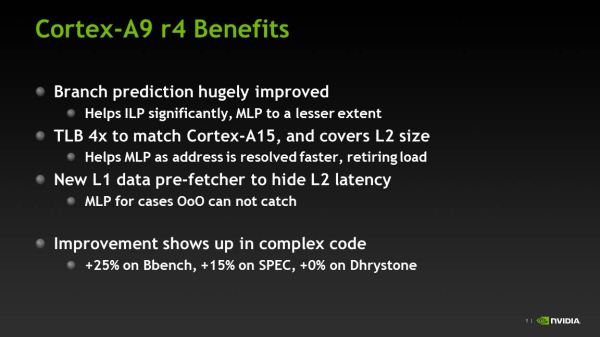

There are some significant changes to the Cortex A9 in r4p1. The GHB, L2 TLB and BTAC all grew by 4x and are now sized equally between the A9 and A15 implementations (16K predictors, 512 entries and 4096 entries, respectively). These changes help improve branch prediction accuracy, which further increases IPC on an already very efficient design.

The A9 r4p1 also has an enhanced data prefetching engine, including a small L1 prefetcher and dedicated hardware for the cache preload instruction.

NVIDIA claims a 15% increase in SPECint_base for the Cortex A9 r4p1 vs. r2p9, which is pretty impressive. Combined with the 2.3GHz max frequency, Tegra 4i’s CPU performance should be a healthy improvement over what we have in Tegra 3 today.
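As a rough back-of-the-envelope sketch, the per-clock gain and the clock increase can be multiplied together to get an upper bound on the CPU uplift. This assumes NVIDIA's 15% figure is a same-clock comparison and that performance scales linearly with frequency, neither of which is guaranteed. The function name and the 1.3GHz Tegra 3 clock below are illustrative assumptions, not figures from this article.

```python
def estimated_cpu_speedup(per_clock_gain, new_clock_ghz, old_clock_ghz):
    """Upper-bound estimate: per-clock gain multiplied by the clock ratio.

    Assumes perfect frequency scaling, which real workloads rarely achieve
    once memory latency and bandwidth come into play.
    """
    return (1.0 + per_clock_gain) * (new_clock_ghz / old_clock_ghz)


# 0.15 (SPECint_base gain) and 2.3GHz come from the text above.
# 1.3GHz is an ASSUMED Tegra 3 clock used purely for illustration.
speedup = estimated_cpu_speedup(0.15, 2.3, 1.3)
print(f"Estimated upper bound: {speedup:.2f}x")
```

Swapping in a different baseline clock changes the result proportionally, so treat the output as a ceiling rather than a prediction.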

Tegra 4 Clock Speeds

Each of the four primary Cortex A15s is driven off the same voltage and frequency plane, although each core can be power gated individually. This is similar to how Intel designs its processors, but at odds with Qualcomm’s independent voltage/frequency planes.

NVIDIA does a good job of binning its SoCs, and the same will continue with Tegra 4. All four cores are capable of running at up to 1.9GHz, although NVIDIA claims we may see configurations with even higher single core boost frequencies (or even lower max frequencies, similar to Tegra 3). As I already mentioned, the fifth Cortex A15 runs at somewhere between 700 and 800MHz.

The Tegra 4 GPU operates at up to 672MHz, up from the 520MHz max in Tegra 3.
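To put the GPU clock bump in perspective, here is a quick sketch of the frequency uplift on its own. It uses only the two clocks quoted above; the helper name is made up for illustration, and the result says nothing about changes in shader count or architecture, which also affect performance.

```python
TEGRA3_GPU_MHZ = 520  # max GPU clock quoted above for Tegra 3
TEGRA4_GPU_MHZ = 672  # max GPU clock quoted above for Tegra 4


def clock_uplift(new_mhz, old_mhz):
    """Fractional increase in clock speed from old_mhz to new_mhz."""
    return new_mhz / old_mhz - 1.0


uplift = clock_uplift(TEGRA4_GPU_MHZ, TEGRA3_GPU_MHZ)
print(f"GPU clock uplift: {uplift:.1%}")
```

Frequency alone accounts for a bit under a 30% increase, so any larger performance gain would have to come from elsewhere in the GPU.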

75 Comments

Krysto - Monday, February 25, 2013

S600 is just a slightly overclocked S4 Pro with the same GPU.

The real competitor of Tegra 4 will be S800. We'll see if it wins in CPU performance (it might not), and I think there's a high chance it will lose in GPU performance, as Adreno 330 is only 50% faster than Adreno 320 I think, and Tegra 4 is about twice as fast.

Qualcomm has always had slower graphics performance than Nvidia actually. The only "gap" they found in the market was last fall with the Adreno 320, when Nvidia didn't have anything good to show. But Tegra 3 beat S4 with its Adreno 225.

watersb - Monday, February 25, 2013

I'm amazed at the depth of this NVIDIA data-dump. Brilliant work.

Anand's observation re: die size, cost strategy, position in the market and how this buys them time to consolidate... Wow.

Clearly, Nvidia is in this game for the long haul.

djgandy - Monday, February 25, 2013

So OpenGL ES 3.0 doesn't matter, but quad core A15 does? Why do people suck up to Nvidia and their marketing BS so much?

T4i still single channel memory? What a joke configuration.

djgandy - Monday, February 25, 2013

Also a 9 page article about a mobile SoC without a single reference to the word "battery".

varad - Monday, February 25, 2013

Read the article before you write such comments. The very first page is "Introduction & Power" where they do mention some numbers and their thoughts.

djgandy - Tuesday, February 26, 2013

Yeah, it's all smoke and mirrors under lab test conditions. Where is the real battery life? Is this not for battery powered devices?

Krysto - Monday, February 25, 2013

Personally, I think all 2013 GPUs should have support for OpenGL ES 3.0 and OpenCL. I was stunned to find out Tegra 4 was not going to support it as they haven't even switched to a unified shader architecture.

That being said, Anand is probably right that it was the right move for Nvidia, and they are just going to wait for the Maxwell architecture to streamline the same custom ARMv8 CPU from Tegra 5 to Project Denver across product line-ups, and also the same Maxwell GPU cores.

If that's indeed their plan, then switching Tegra 4 to Kepler this year, only to switch again to Maxwell next year wouldn't have made any sense. GPU architectures barely change even every 2-3 years, let alone 1 year. It wouldn't have been cost effective for them.

I do hope they aren't going to delay the transition again with Tegra 5 though, and I also do hope they follow Qualcomm's strategy with S4 last year of switching IMMEDIATELY to the 20nm process, instead of continuing on 28nm with Tegra 5, like they did with Tegra 3 on 40nm. But I fear Nvidia will repeat the same mistake.

If they put Tegra 5 on 20nm, and make it 120mm2 in size, with Maxwell GPU core, I don't think even Apple's A8X will stand against it next year in terms of GPU performance (and of course it will get beaten easily in CPU performance, just like this year).

djgandy - Tuesday, February 26, 2013

Tegra is smaller because it lacks features and also memory bandwidth. The comparison is not really fair to assume you can just throw more shaders at the problem. You'll need a wider memory bus for a start. You'll need more TMUs and in the future it's probably smart to have a dedicated ROP unit. Then also are you seriously going to just stick with FP20 and not support ES 3.0 and OpenCL? OEMs see OpenCL as a de facto feature these days, not because it is widely used but because it opens up future possibilities. Nvidia has simply designed an SoC for gaming here.

Your post focuses on performance, but these are battery powered devices. The primary design goal is efficiency, and it would appear that is why Apple went Swift and not A15. A15 is just too damn power hungry, even for a tablet.

metafor - Tuesday, February 26, 2013

If the silicon division of Apple were its own business, they'd be in the red. Very few silicon providers can afford to make 120mm^2 chips and still make a profit; let alone one with as little bargaining clout in the mobile space as nVidia.

Numbers are great but at the end of the day, making money is what matters.

milli - Monday, February 25, 2013

nVidia is trying hard but Tegra still isn't making them any money ...