NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

The Final Word On Overclocking

Before we jump into our performance breakdown, I wanted to take a few minutes to write a bit of a feature follow-up to our overclocking coverage from Tuesday. Since we couldn’t reveal performance numbers at the time – and quite honestly we hadn’t even finished evaluating Titan – we couldn’t give you the complete story on Titan. So some clarification is in order.

On Tuesday we discussed how Titan reintroduces overvolting for NVIDIA products, but now with additional details from NVIDIA along with our own performance data we have the complete picture, and overclockers will want to pay close attention. NVIDIA may be reintroducing overvolting, but it may not be quite what many of us were first thinking.

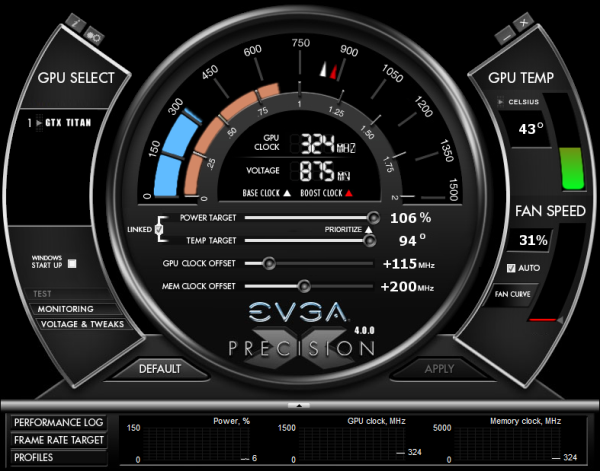

First and foremost, Titan still has a hard TDP limit, just like GTX 680 cards. Titan cannot and will not cross this limit, as it’s built into the firmware of the card and essentially enforced by NVIDIA through their agreements with their partners. This TDP limit is 106% of Titan’s base TDP of 250W, or 265W. No matter what you throw at Titan or how you cool it, it will not let itself pull more than 265W sustained.

Compared to the GTX 680 this is both good news and bad news. The good news is that with NVIDIA having done away with the pesky concept of target power versus TDP, the entire process is much simpler; the power target will tell you exactly what the card will pull up to on a percentage basis, with no need to know about a separate power target or how it relates to TDP. Furthermore, with the ability to focus on just TDP, NVIDIA didn’t set their power limits on Titan nearly as conservatively as they did on GTX 680.

The bad news is that while GTX 680 shipped with a max power target of 132%, Titan’s max power target is, again, only 106%. Once you do hit that TDP limit you only have 6% (15W) more to go, and that’s it. Titan essentially has more headroom out of the box, but it will have less headroom for making adjustments. So hardcore overclockers dreaming of slamming 400W through Titan will come away disappointed, though it goes without saying that Titan’s power delivery system was never designed for that in the first place. All indications are that NVIDIA built Titan’s power delivery system for around 265W, and that’s exactly what buyers will get.
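The arithmetic behind those figures is simple enough to sketch. The snippet below is only an illustration: the 250W base TDP and 106% maximum power target come from the text above, while the helper name `power_limit_watts` is our own and not anything NVIDIA exposes.

```python
# Figures quoted in the article; the helper itself is purely illustrative.
TITAN_BASE_TDP_W = 250
TITAN_MAX_POWER_TARGET_PCT = 106


def power_limit_watts(base_tdp_w: float, target_pct: float) -> float:
    """Sustained power limit implied by a power target percentage."""
    return base_tdp_w * target_pct / 100


limit = power_limit_watts(TITAN_BASE_TDP_W, TITAN_MAX_POWER_TARGET_PCT)
headroom = limit - TITAN_BASE_TDP_W

print(f"limit: {limit:.0f}W, headroom: {headroom:.0f}W")
# prints "limit: 265W, headroom: 15W"
```

We deliberately do not run the same calculation for GTX 680's 132% figure, since on that card the power target and TDP were separate concepts.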

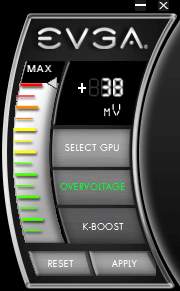

Second, let’s talk about overvolting. What we didn’t realize on Tuesday but realize now is that overvolting as implemented in Titan is not overvolting in the traditional sense, and practically speaking I doubt too many hardcore overclockers will even recognize it as overvolting. What we mean by this is that overvolting was not implemented as a direct control system as it was on past generation cards, or even the NVIDIA-nixed cards like the MSI Lightning or EVGA Classified.

Overvolting is instead a set of two additional turbo clock bins, above and beyond Titan’s default top bin. On our sample the top bin is 1.1625v, which corresponds to a 992MHz core clock. Overvolting Titan to 1.2v means unlocking two more bins: 1006MHz @ 1.175v, and 1019MHz @ 1.2v. Or put another way, overvolting on Titan involves unlocking only another 27MHz in performance.

These two bins are in the strictest sense overvolting – NVIDIA doesn’t believe voltages over 1.1625v on Titan will meet their longevity standards, so using them is still very much going to reduce the lifespan of a Titan card – but it’s probably not the kind of direct control overvolting hardcore overclockers were expecting. The end result is that with Titan there’s simply no option to slap on another 0.05v – 0.1v in order to squeak out another 100MHz or so. You can trade longevity for the potential to get another 27MHz, but that’s it.

Ultimately, this means that overvolting as implemented on Titan cannot be used to improve the clockspeeds attainable through the use of the offset clock functionality NVIDIA provides. In the case of our sample it peters out after +115MHz offset without overvolting, and it peters out after +115MHz offset with overvolting. The only difference is that we gain access to a further 27MHz when we have the thermal and power headroom available to hit the necessary bins.

GeForce GTX Titan Clockspeed Bins

| Clockspeed | Voltage |
|---|---|
| 1019MHz | 1.2v |
| 1006MHz | 1.175v |
| 992MHz | 1.1625v |
| 979MHz | 1.15v |
| 966MHz | 1.137v |
| 953MHz | 1.125v |
| 940MHz | 1.112v |
| 927MHz | 1.1v |
| 914MHz | 1.087v |
| 901MHz | 1.075v |
| 888MHz | 1.062v |
| 875MHz | 1.05v |
| 862MHz | 1.037v |
| 849MHz | 1.025v |
| 836MHz | 1.012v |
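To make the bin behavior concrete, here is a minimal sketch that treats the table above as a lookup. The bin values are copied from our sample's table. The `top_bin` helper, and the assumption that a clock offset shifts every bin by the same amount, are ours for illustration and are not a description of how GPU Boost 2.0 is actually implemented.

```python
# (clock in MHz, voltage in V) pairs copied from the table above, lowest first.
BINS = [
    (836, 1.012), (849, 1.025), (862, 1.037), (875, 1.05), (888, 1.062),
    (901, 1.075), (914, 1.087), (927, 1.1), (940, 1.112), (953, 1.125),
    (966, 1.137), (979, 1.15), (992, 1.1625), (1006, 1.175), (1019, 1.2),
]

DEFAULT_MAX_V = 1.1625   # default top bin on our sample
OVERVOLT_MAX_V = 1.2     # top bin with overvolting enabled


def top_bin(max_voltage: float, offset_mhz: int = 0) -> int:
    """Highest clock whose bin voltage is within the cap, plus an offset.

    Assumption: the offset is added uniformly to every bin.
    """
    clock = max(c for c, v in BINS if v <= max_voltage)
    return clock + offset_mhz


stock = top_bin(DEFAULT_MAX_V)        # 992
overvolted = top_bin(OVERVOLT_MAX_V)  # 1019
print(overvolted - stock)             # prints 27

# With the +115MHz offset our sample tolerated, the gap is unchanged.
print(top_bin(OVERVOLT_MAX_V, 115) - top_bin(DEFAULT_MAX_V, 115))  # prints 27
```

Under these assumptions the voltage cap only changes which bins are reachable, which is why overvolting adds the same 27MHz regardless of the offset. Whether the card actually reaches those bins still depends on thermal and power headroom.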

Finally, as with the GTX 680 and GTX 690, NVIDIA will be keeping tight control over what Asus, EVGA, and their other partners release. Those partners will have the option to release Titan cards with factory overclocks and Titan cards with different coolers (e.g. water blocks), but they won’t be able to expose direct voltage control or ship parts with higher voltages. Nor for that matter will they be able to create Titan cards with significantly different designs (e.g. more VRM phases); every Titan card will be a variant on the reference design.

This is essentially no different than how the GTX 690 was handled, but I think it’s something that’s important to note before anyone with dreams of big overclocks throws down $999 on a Titan card. To be clear, GPU Boost 2.0 is a significant improvement in the entire power/thermal management process compared to GPU Boost 1.0, and this kind of control means that no one needs to be concerned with blowing up their video card (accidentally or otherwise), but it’s a system that comes with gains and losses. So overclockers will want to pay close attention to what they’re getting into with GPU Boost 2.0 and Titan, and what they can and cannot do with the card.

337 Comments

piiman - Saturday, February 23, 2013 - link

Yes a $1000.00 GPU is a luxury. Don't want luxury use the on board GPU and have a blast! :-)

CeriseCogburn - Sunday, February 24, 2013 - link

ANY GPU over about $100 to $150 bucks is a LUXURY PRODUCT.

Of course we can get you a brand spankin new gpu for $20 after rebate DX11 capable with a gig of ram, GTX 600 series, ready to rock out, even fit in your HTPC.

So stop playing so stupid and so ridiculous. Why is stupidity so cool and so popular with you puke brains ?

A hundred bucks can get one a very reasonable GPU that will play everything now available with a quite tolerable eye candy pain level, so the point is dummy, THERE ARE THOUSANDS OF NON LUXURY GPU's, JUST LIKE THERE ARE ALWAYS A FEW DOZEN LUXURY GPU's.

So your faux aghast smarmy idiot comment about I thought GPU's were commodities fits right in with the retard liar shortbus so stuffed to the brim with the clowns we have here.

You're welcome, I'm certain that helped.

Ankarah - Thursday, February 21, 2013 - link

Could then you care to explain why any of those ultra enthusiasts would choose this card over the 690GTX, which seems to be faster overall?

And let's leave the power consumption between the two out of this discussion - if you can drop a grand on your graphics card for your PC, then you can afford a big power supply too.

sherlockwing - Thursday, February 21, 2013 - link

You haven't seen the SLI benches yet.

This card in SLI will perform better than GTX 690 in SLI due to bad scaling for Quad SLI.

sherlockwing - Thursday, February 21, 2013 - link

Correction: It will be better than GTX 690 SLI if you overclock the Titan to 1Ghz, 690 don't really have that much OC headroom.

CeriseCogburn - Saturday, February 23, 2013 - link

did you say overclock ?

" In our testing, NVIDIA’s GK110 core had no issue hitting a Boost Clock of 1162MHz but hit right into the Power Limit, despite it being set at 106%. Memory was also ready to overclock and hit a speed of 6676MHz. As you can see in the quick benchmarks below, this led to a performance increase of about 15%. "

So, that's the 27mhz MAX the review here claims...LOL

Yep, a 15% performance increase, or a lousy 27mhz, you decide.... amd fanboy fruiter, or real world overclocker...

http://www.hardwarecanucks.com/forum/hardware-canu...

Alucard291 - Thursday, February 21, 2013 - link

Well as Ryan said in the article its "removed from the price curve" which in human language means: Its priced badly and is hoping to gain sales from publicity as opposed to quality.

Oxford Guy - Thursday, February 21, 2013 - link

Hence the "luxury product" meme.

trajan2448 - Thursday, February 21, 2013 - link

Learn about latencies and micro stuttering, driver issues, heat and noise. Almost 50% better in frame latencies than 7970. Crossfire, don't make me laugh. here's an analysis.

http://www.pcper.com/reviews/G...

CeriseCogburn - Thursday, February 21, 2013 - link

correcting that link for you again, that shows the PATHETIC amd crap videocard loser for what it is

http://www.pcper.com/reviews/Graphics-Cards/NVIDIA...