NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

Titan’s Compute Performance (aka Ph.D Lust)

Because GK110 is such a unique GPU from NVIDIA when it comes to compute, we’re going to shake things up a bit and take a look at compute performance first before jumping into our look at gaming performance.

On a personal note, one of the great things about working at AnandTech is all the people you get to work with. Anand himself is nothing short of fantastic, but what other review site also has a Brian Klug or a Jarred Walton? We have experts in a number of fields, and as a computer technology site that of course includes experts in computer science.

What I’m trying to say is that for the last week I’ve been having to fend off our CS guys, who upon hearing I had a GK110 card wanted one of their own. If you’ve ever wanted proof of just how big a deal GK110 is – and by extension Titan – you really don’t have to look too much farther than that.

Titan, its compute performance, and the possibilities it unlocks are a very big deal for researchers and other professionals who need every last drop of compute performance they can get, as cheaply as they can get it. This is why on the compute front Titan stands alone; in NVIDIA’s consumer product lineup there’s nothing like it, and even AMD’s Tahiti based cards (7970, etc), while potent, are very different from GK110/Kepler in a number of ways. Titan essentially writes its own ticket here.

In any case, as this is the first GK110 product that we have had access to, we couldn’t help but run it through a battery of tests. The Tesla K20 series may have been out for a couple of months now, but at $3500 for the base K20 card, Titan is the first GK110 card many compute junkies are going to have real access to.

To that end I'd like to introduce our newest writer, Rahul Garg, who will be leading our look at Titan/GK110’s compute performance. Rahul is a Ph.D student specializing in the field of parallel computing and GPGPU technology, making him a prime candidate for taking a critical but nuanced look at what GK110 can do. You will be seeing more of Rahul in the future, but first and foremost he has a 7.1B transistor GPU to analyze. So let’s dive right in.

By: Rahul Garg

For compute performance, we first looked at two common benchmarks: GEMM (measures performance of dense matrix multiplication) and FFT (Fast Fourier Transform). These numerical operations are important in a variety of scientific fields. GEMM is highly parallel and typically compute heavy, and one of the first tests of performance and efficiency on any parallel architecture geared towards HPC workloads. FFT is typically memory bandwidth bound but, depending upon the architecture, can be influenced by inter-core communication bandwidth. Vendors and third-parties typically supply optimized libraries for these operations. For example, Intel supplies MKL for Intel processors (including Xeon Phi) and AMD supplies ACML and OpenCL-based libraries for their CPUs and GPUs respectively. Thus, these benchmarks measure the performance of the combination of both the hardware and software stack.

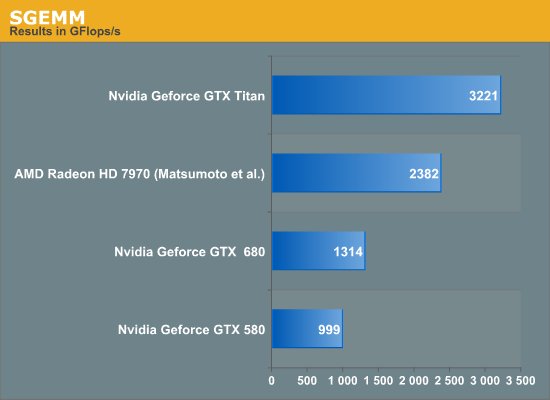

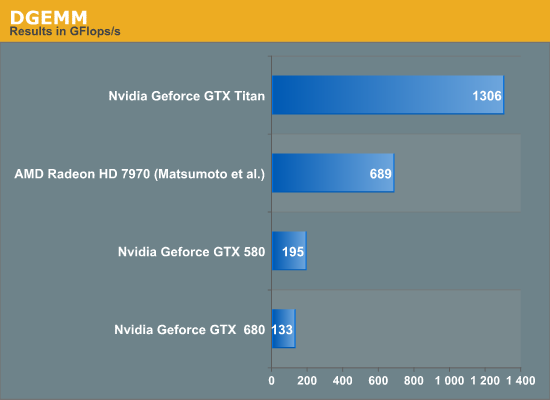

For GEMM, we tested the performance of NVIDIA's CUBLAS library supplied with CUDA SDK 5.0, on SGEMM (single-precision/fp32 GEMM) and DGEMM (double precision/fp64 GEMM) on square matrices of size 5k by 5k. For SGEMM on Titan, the data reported here was collected with boost disabled. We also conducted the experiments with boost enabled on Titan, but found that the performance was effectively equal to the non-boost case. We assume that it is because our test ran for a very short period of time and perhaps did not trigger boost. Therefore, for the sake of simpler analysis, we report the data with boost disabled on the Titan. If time permits, we may return to the boost issue in a future article for this benchmark.
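As a point of reference, GEMM throughput is conventionally derived from the 2n³ floating-point operations in a square n-by-n multiply. The sketch below uses that convention; the helper names are hypothetical, `n = 5000` is an assumed reading of "5k by 5k", and the 1300 GFLOPS input is simply the approximate DGEMM rate quoted later in this section, not a separate measurement.

```python
def gemm_flops(n: int) -> int:
    """Floating-point operations in a square n x n GEMM.

    Each of the n*n output elements needs n multiplies and n adds.
    """
    return 2 * n ** 3


def gflops(n: int, seconds: float) -> float:
    """Throughput in GFLOPS for one n x n GEMM that took `seconds`."""
    return gemm_flops(n) / seconds / 1e9


def runtime_seconds(n: int, rate_gflops: float) -> float:
    """Time one n x n GEMM would take at a sustained rate."""
    return gemm_flops(n) / (rate_gflops * 1e9)


n = 5000  # assumption: "5k by 5k" taken as exactly 5000
print(gemm_flops(n))                       # total operations per multiply
print(round(runtime_seconds(n, 1300.0), 3))  # seconds per multiply at ~1.3 TFLOPS
```

Under these assumptions a single multiply finishes in roughly a fifth of a second, which is at least consistent with the test being too short to trigger boost. The number of repetitions in the actual test is not modelled here.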

Apart from the results collected by us for GTX Titan, GTX 680 and GTX 580, we refer to experiments conducted by Matsumoto, Nakasato and Sedukin reported in a technical report filed at the University of Aizu about GEMM on Radeon 7970. Their exact parameters and testbed are different than ours, and we include their results for illustrative purposes, as a ballpark estimate only. The results are below.

Titan rules the roost amongst the three listed cards in both SGEMM and DGEMM by a wide margin. We have not included Intel's Xeon Phi in this test, but the Titan's achieved performance is higher than the theoretical peak FLOPS of the current crop of Xeon Phi. Sharp-eyed readers will have observed that the Titan achieves about 1.3 teraflops on DGEMM, while the listed fp64 theoretical peak is also 1.3 TFlops; we were not expecting 100% of peak on the Titan in DGEMM. NVIDIA clarified that the fp64 rating for the Titan is a conservative estimate. At 837MHz, the calculated fp64 peak of Titan is 1.5 TFlops. However, under heavy load in fp64 mode, the card may underclock below the listed 837MHz to remain within the power and thermal specifications. Thus, fp64 ALU peak can vary between 1.3 TFlops and 1.5 TFlops and our DGEMM results are within expectations.
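The 1.3 to 1.5 TFlops range can be reproduced with the usual peak formula: execution units × 2 operations per fused multiply-add × clock. The sketch below assumes 14 active SMX units with 64 fp64 units each, figures taken from NVIDIA's published GK110 specifications rather than from this section; the function names are hypothetical.

```python
SMX_COUNT = 14            # assumption: active SMX units on GTX Titan
FP64_UNITS_PER_SMX = 64   # assumption: fp64 units per GK110 SMX
FLOPS_PER_FMA = 2         # one fused multiply-add counts as two operations


def peak_gflops(units: int, clock_mhz: float) -> float:
    """Theoretical peak in GFLOPS for `units` FMA units at `clock_mhz`."""
    return units * FLOPS_PER_FMA * clock_mhz / 1000.0


def clock_for_peak_mhz(units: int, target_gflops: float) -> float:
    """Clock in MHz at which `units` FMA units would peak at `target_gflops`."""
    return target_gflops * 1000.0 / (units * FLOPS_PER_FMA)


fp64_units = SMX_COUNT * FP64_UNITS_PER_SMX
print(fp64_units)                                        # 896
print(round(peak_gflops(fp64_units, 837.0), 1))          # peak at the listed 837MHz
print(round(clock_for_peak_mhz(fp64_units, 1300.0), 1))  # clock implied by a 1.3 TFlops peak
```

With these assumptions the 837MHz peak comes out at about 1.5 TFlops, and a 1.3 TFlops peak corresponds to a clock of roughly 725MHz. That gives a sense of how far the card would have to underclock to land at the conservative rating.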

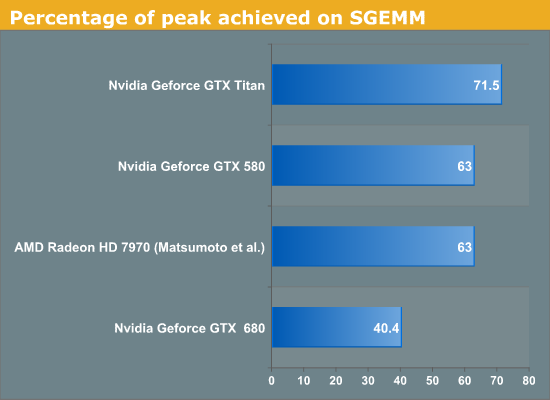

Next, we consider the percentage of fp32 peak achieved by the respective SGEMM implementations. These are plotted below.

Titan achieves about 71% of its peak while GTX 680 only achieves about 40% of the peak. It is clear that while both GTX 680 and Titan are said to be Kepler architecture chips, Titan is not just a bigger GTX 680. Architectural tweaks have been made that enable it to reach much higher efficiency than the GTX 680 on at least some compute workloads. The GCN based Radeon 7970 obtains about 63% of peak on SGEMM using the algorithm of Matsumoto et al., and the Fermi based GTX 580 also obtains about 63% of peak using CUBLAS.
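To make the percentage-of-peak metric concrete, here is a minimal sketch of the arithmetic for Titan. It assumes 2688 fp32 CUDA cores (from NVIDIA's spec sheet, not this section) running at the 837MHz non-boost clock used for the SGEMM runs. The "implied" throughput is back-derived from the rounded 71% figure and is only an estimate, not a measured result.

```python
TITAN_FP32_CORES = 2688   # assumption: CUDA core count from NVIDIA's spec sheet
TITAN_CLOCK_MHZ = 837.0   # non-boost clock, as used for the SGEMM runs
FLOPS_PER_FMA = 2         # one fused multiply-add counts as two operations


def peak_gflops(cores: int, clock_mhz: float) -> float:
    """Theoretical fp32 peak in GFLOPS."""
    return cores * FLOPS_PER_FMA * clock_mhz / 1000.0


def efficiency(achieved_gflops: float, peak: float) -> float:
    """Fraction of theoretical peak actually achieved."""
    return achieved_gflops / peak


titan_peak = peak_gflops(TITAN_FP32_CORES, TITAN_CLOCK_MHZ)
implied_sgemm = 0.71 * titan_peak  # estimate only, from the rounded 71% figure

print(round(titan_peak, 1))
print(round(implied_sgemm, 1))
print(round(efficiency(implied_sgemm, titan_peak), 2))
```

The same two functions apply to the other cards once their core counts and clocks are filled in. Those values are left out here because the clocks used for their peak ratings are not stated in this section.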

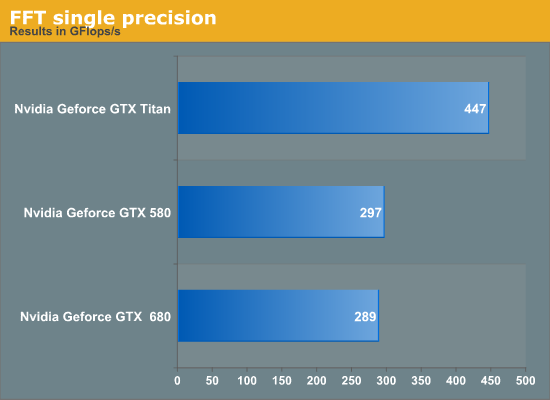

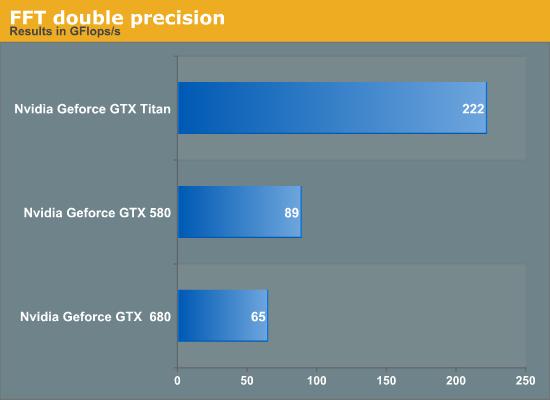

For FFT, we tested the performance of 1D complex-to-complex in-place transforms of size 2^25 using the CUFFT library. Results are given below.

Titan outperforms the GTX 680 in FFT by about 50% in single-precision. We suspect this is primarily due to increased memory bandwidth on Titan compared to GTX 680 but we have not verified this hypothesis. GTX 580 has a slight lead over the GTX 680. Again, if time permits, we may return to the benchmark for a deeper analysis. In double-precision, Titan achieves about 3.4x the performance of GTX 680 but this is not surprising given the poor fp64 execution resources on the GTX 680.
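The bandwidth hypothesis can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below assumes a transform of 2^25 complex points, and takes the memory configurations from the public spec sheets rather than from this section: a 384-bit bus on Titan, a 256-bit bus on GTX 680, and 6.008 Gbps GDDR5 on both. The function names are hypothetical, and this is not a measurement.

```python
def fft_buffer_mib(points: int, bytes_per_element: int) -> float:
    """Size in MiB of an in-place complex FFT buffer."""
    return points * bytes_per_element / 2 ** 20


def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical memory bandwidth in GB/s from bus width and per-pin rate."""
    return bus_width_bits / 8 * data_rate_gbps


N = 2 ** 25                         # assumption: transform size in points
single_mib = fft_buffer_mib(N, 8)   # complex fp32: 2 x 4 bytes per point
double_mib = fft_buffer_mib(N, 16)  # complex fp64: 2 x 8 bytes per point

titan_bw = peak_bandwidth_gbs(384, 6.008)   # assumption: spec-sheet values
gtx680_bw = peak_bandwidth_gbs(256, 6.008)  # assumption: spec-sheet values

print(single_mib, double_mib)
print(round(titan_bw / gtx680_bw, 2))
```

Under these assumptions the data set is far larger than any on-chip cache, and the theoretical bandwidth ratio between the two cards is 1.5. That lines up with the roughly 50% single-precision advantage, though it does not by itself confirm the hypothesis.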

We then looked at an in-house benchmark called SystemCompute, developed by our own Ian Cutress. The benchmark tests the performance on a variety of sample kernels that are representative of some scientific computing applications. Ian described the CPU version of these benchmarks in a previous article. Ian wrote the GPU version of the benchmarks in C++ AMP, which is a relatively new GPGPU API introduced by Microsoft in VS2012.

Microsoft's implementation of AMP compiles down to DirectCompute shaders. These are all single-precision benchmarks and should run on any DX11 capable GPU. The benchmarks include 2D and 3D finite difference solvers, 3d particle movement, n-body benchmark and a simple matrix multiplication algorithm. Boost is enabled on both the Titan and GTX 680 for this benchmark. We give the score reported by the benchmark for both cards, and report the speedup of the Titan over 680. Speedup greater than 1 implies Titan is faster, while less than 1 implies a slowdown.

| Benchmark | GTX 580 | GTX 680 | GTX Titan | Speedup of Titan over GTX 680 |
| --- | --- | --- | --- | --- |
| 2D FD | 9053 | 8445 | 12461 | 1.47 |
| 3D FD | 3133 | 3827 | 5263 | 1.37 |
| 3DPmo | 41722 | 26955 | 40397 | 1.49 |
| MatMul | 172 | 197 | 229 | 1.16 |
| nbody | 918 | 1517 | 2418 | 1.59 |

The benchmarks show Titan improving on the GTX 680 by between 16% and roughly 60%, with the largest gain coming from the relatively FLOP-heavy n-body benchmark. Interestingly, GTX 580 wins over the Titan in 3DPmo, and wins over the 680 in both 3DPmo and 2D FD.
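For readers who want to check the speedup column, the following sketch recomputes it from the scores in the table. The listed speedups match the score ratios truncated (not rounded) to two decimals, so the helper truncates; the variable and function names are only illustrative.

```python
# Scores copied from the table above: (GTX 580, GTX 680, GTX Titan).
SCORES = {
    "2D FD": (9053, 8445, 12461),
    "3D FD": (3133, 3827, 5263),
    "3DPmo": (41722, 26955, 40397),
    "MatMul": (172, 197, 229),
    "nbody": (918, 1517, 2418),
}


def speedup(new_score: int, old_score: int) -> float:
    """Ratio of two scores, truncated to two decimal places."""
    return (new_score * 100 // old_score) / 100


titan_over_680 = {
    name: speedup(titan, gtx680) for name, (_, gtx680, titan) in SCORES.items()
}
gtx580_beats_titan = [n for n, (g580, _, titan) in SCORES.items() if g580 > titan]
gtx580_beats_680 = [n for n, (g580, g680, _) in SCORES.items() if g580 > g680]

for name, ratio in titan_over_680.items():
    print(f"{name}: {ratio:.2f}")
print("GTX 580 ahead of Titan:", gtx580_beats_titan)
print("GTX 580 ahead of GTX 680:", gtx580_beats_680)
```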

Overall, GTX Titan is an impressive accelerator from a compute perspective and posts large gains over its predecessors.

337 Comments


etriky - Sunday, February 24, 2013 - link

OK, after a little digging I guess I shouldn't be to upset about not having Blender benches in this review. Tesla K20 and GeForce GTX TITAN support was only added to Blender on the 2/21 and requires a custom build (it's not in the main release). See http://www.miikahweb.com/en/blender/svn-logs/commi... for more info

Ryan Smith - Monday, February 25, 2013 - link

As noted elsewhere, OpenCL was broken in the Titan launch drivers, greatly limiting what we could run. We have more planned including SLG's LuxMark, which we will publish an update for once the driver situation is resolved.

kukreknecmi - Friday, February 22, 2013 - link

If you look at Azui's PDF, with using different type of kernel , results for 7970 are :

SGEMM : 2646 GFLOP

DGEMM : 848 GFLOP

Why did u take the lowest numbers for 7970 ??

codedivine - Friday, February 22, 2013 - link

This was answered above. See one of my earlier comments.

gwolfman - Friday, February 22, 2013 - link

ASUS: http://www.newegg.com/Product/Product.aspx?Item=N8...

OR

Titan gfx card category (only one shows up for now): http://www.newegg.com/Product/ProductList.aspx?Sub...

Anand and staff, post this in your news feed please! ;)

extide - Friday, February 22, 2013 - link

PLEASE start including Folding@home benchmarks!!!

TheJian - Sunday, February 24, 2013 - link

Why? It can't make me any money and isn't a professional app. It tells us nothing. I'd rather see photoshop, premier, some finite analysis app, 3d Studiomax, some audio or content creation app or anything that can be used to actually MAKE money. They should be testing some apps that are actually used by those this is aimed at (gamers who also make money on their PC but don't want to spend $2500-3500 on a full fledged pro card).

What does any card prove by winning folding@home (same with bitcoin crap, botnets get all that now anyway)? If I cure cancer is someone going to pay me for running up my electric bill? NOPE. Only a fool would spend a grand to donate electricity (cpu/gpu cycles) to someone else's next Billion dollar profit machine (insert pill name here). I don't care if I get cancer, I won't be donating any of my cpu time to crap like this. Benchmarking this proves nothing on a home card. It's like testing to see how fast I can spin my car tires while the wheels are off the ground. There is no point in winning that contest vs some other car.

"If we better understand protein misfolding we can design drugs and therapies to combat these illnesses."

Straight from their site...Great I'll make them a billionaire drug and get nothing for my trouble or my bill. FAH has to be the biggest sucker pitch I've ever seen. Drug companies already rip me off every time I buy a bottle of their pills. They get huge tax breaks on my dime too, no need to help them, or for me to find out how fast I can help them...LOL. No point in telling me sythentics either. They prove nothing other than your stuff is operating correctly and drivers set up right. Their perf has no effect on REAL use of products as they are NOT a product, thus not REAL world. Every time I see the word synthetic and benchmark in the same sentence it makes me want to vomit. If they are limited on time (usually reviewers are) I want to see something benchmarked that I can actually USE for real.

I feel the same way about max fps. Who cares? You can include them, but leaving out MIN is just dumb. I need to know when a game hits 30fps or less, as that means I don't have a good enough card to get the job done and either need to spend more or turn things down if using X or Y card.

Ryan Smith - Monday, February 25, 2013 - link

As noted elsewhere, FAHBench is in our plans. However we cannot do anything further until NVIDIA fixes OpenCL support.

vanwazltoff - Friday, February 22, 2013 - link

the 690, 680 and 7970 have had almost a year to brew and improve with driver updates, i suspect that after a few drivers and an overclock titan will creep up on a 690 and will probably see a price deduction after a few months. dont clock out yet, just think what this could mean for 700 and 800 series cards, its obvious nvidia can deliver

TheJian - Sunday, February 24, 2013 - link

It already runs 1150+ everywhere. Most people hit around 1175 max OC stable on titan. Of course this may improve with aftermarket solutions for cooling but it looks like they hit 1175 or so around the world. And that does hit 690 perf and some cases it wins. In compute it's already a winner.

If there is no die shrink on the next gens from either company I don't expect much. You can only do so much with 250-300w before needing a shrink to really see improvements. I really wish they'd just wait until 20nm or something to give us a real gain. Otherwise will end up with a ivy,haswell deal. Where you don't get much (5-15%). Intel won't wow again until 14nm. Graphics won't wow again until the next shrink either (full shrink, not the halves they're talking now).