NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

The Final Word On Overclocking

Before we jump into our performance breakdown, I wanted to take a few minutes to write a bit of a feature follow-up to our overclocking coverage from Tuesday. Since we couldn’t reveal performance numbers at the time – and quite honestly we hadn’t even finished evaluating Titan – we couldn’t give you the complete story on Titan. So some clarification is in order.

On Tuesday we discussed how Titan reintroduces overvolting for NVIDIA products, but now with additional details from NVIDIA along with our own performance data we have the complete picture, and overclockers will want to pay close attention. NVIDIA may be reintroducing overvolting, but it may not be quite what many of us were first thinking.

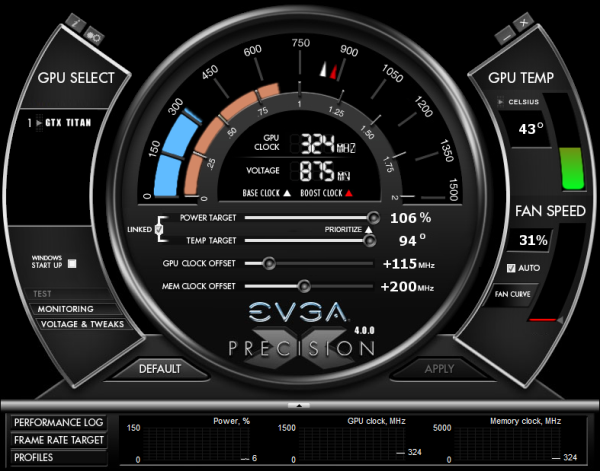

First and foremost, Titan still has a hard TDP limit, just like GTX 680 cards. Titan cannot and will not cross this limit, as it’s built into the firmware of the card and essentially enforced by NVIDIA through their agreements with their partners. This TDP limit is 106% of Titan’s base TDP of 250W, or 265W. No matter what you throw at Titan or how you cool it, it will not let itself pull more than 265W sustained.

Compared to the GTX 680 this is both good news and bad news. The good news is that with NVIDIA having done away with the pesky concept of target power versus TDP, the entire process is much simpler; the power target will tell you exactly what the card will pull up to on a percentage basis, with no need to know about separate power targets or their importance. Furthermore, with the ability to focus on just TDP, NVIDIA didn’t set their power limits on Titan nearly as conservatively as they did on GTX 680.

The bad news is that while GTX 680 shipped with a max power target of 132%, Titan is again only 106%. Once you do hit that TDP limit you only have 6% (15W) more to go, and that’s it. Titan essentially has more headroom out of the box, but it will have less headroom for making adjustments. So hardcore overclockers dreaming of slamming 400W through Titan will come away disappointed, though it goes without saying that Titan’s power delivery system was never designed for that in the first place. All indications are that NVIDIA built Titan’s power delivery system for around 265W, and that’s exactly what buyers will get.
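To make the power arithmetic concrete, here is a minimal sketch of how the percentage power target maps to watts, using only the figures quoted above (250W base TDP, 106% maximum target). The function and constant names are our own for illustration and are not part of any NVIDIA tool or API.

```python
# Figures quoted in the article for GTX Titan.
TITAN_BASE_TDP_W = 250      # base TDP in watts
TITAN_MAX_TARGET_PCT = 106  # highest power target the firmware allows


def power_limit_watts(base_tdp_w, power_target_pct):
    """Convert a percentage power target into a sustained power limit in watts."""
    return base_tdp_w * power_target_pct / 100


hard_limit_w = power_limit_watts(TITAN_BASE_TDP_W, TITAN_MAX_TARGET_PCT)
headroom_w = hard_limit_w - TITAN_BASE_TDP_W

print(f"Hard limit: {hard_limit_w:.0f}W, headroom over base TDP: {headroom_w:.0f}W")
```

With the numbers above this works out to a 265W limit, or 15W of adjustment room beyond the default 250W.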

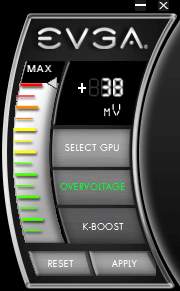

Second, let’s talk about overvolting. What we didn’t realize on Tuesday but realize now is that overvolting as implemented in Titan is not overvolting in the traditional sense, and practically speaking I doubt too many hardcore overclockers will even recognize it as overvolting. What we mean by this is that overvolting was not implemented as a direct control system as it was on past generation cards, or even the NVIDIA-nixed cards like the MSI Lightning or EVGA Classified.

Overvolting is instead a set of two additional turbo clock bins, above and beyond Titan’s default top bin. On our sample the top bin is 1.1625v, which corresponds to a 992MHz core clock. Overvolting Titan to 1.2v means unlocking two more bins: 1006MHz @ 1.175v, and 1019MHz @ 1.2v. Or put another way, overvolting on Titan involves unlocking only another 27MHz in performance.

These two bins are in the strictest sense overvolting – NVIDIA doesn’t believe voltages over 1.1625v on Titan will meet their longevity standards, so using them is still very much going to reduce the lifespan of a Titan card – but it’s probably not the kind of direct control overvolting hardcore overclockers were expecting. The end result is that with Titan there’s simply no option to slap on another 0.05v – 0.1v in order to squeak out another 100MHz or so. You can trade longevity for the potential to get another 27MHz, but that’s it.

Ultimately, this means that overvolting as implemented on Titan cannot be used to improve the clockspeeds attainable through the use of the offset clock functionality NVIDIA provides. In the case of our sample it peters out after +115MHz offset without overvolting, and it peters out after +115MHz offset with overvolting. The only difference is that we gain access to a further 27MHz when we have the thermal and power headroom available to hit the necessary bins.
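The interaction between the offset and the extra bins can be sketched with a little arithmetic. This assumes the offset simply shifts every boost bin up by the same amount, which is a simplification on our part; the names below are illustrative only, and the clocks are the ones reported for our sample.

```python
# Values reported for the review sample.
DEFAULT_TOP_BIN_MHZ = 992      # top bin at 1.1625v (no overvolting)
OVERVOLTED_TOP_BIN_MHZ = 1019  # top bin at 1.2v (overvolting enabled)
MAX_STABLE_OFFSET_MHZ = 115    # same limit with or without overvolting


def peak_clock_mhz(top_bin_mhz, offset_mhz):
    """Assumption: the offset is added uniformly to the highest available bin."""
    return top_bin_mhz + offset_mhz


stock_peak = peak_clock_mhz(DEFAULT_TOP_BIN_MHZ, MAX_STABLE_OFFSET_MHZ)
overvolted_peak = peak_clock_mhz(OVERVOLTED_TOP_BIN_MHZ, MAX_STABLE_OFFSET_MHZ)

print(f"Peak without overvolting: {stock_peak}MHz")
print(f"Peak with overvolting:    {overvolted_peak}MHz")
print(f"Gain from overvolting:    {overvolted_peak - stock_peak}MHz")
```

Under that assumption the offset limit is unchanged, and the only gain from overvolting is the 27MHz between the two top bins, reachable only when thermal and power headroom allow.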

GeForce GTX Titan Clockspeed Bins

| Clockspeed | Voltage |
| --- | --- |
| 1019MHz | 1.2v |
| 1006MHz | 1.175v |
| 992MHz | 1.1625v |
| 979MHz | 1.15v |
| 966MHz | 1.137v |
| 953MHz | 1.125v |
| 940MHz | 1.112v |
| 927MHz | 1.1v |
| 914MHz | 1.087v |
| 901MHz | 1.075v |
| 888MHz | 1.062v |
| 875MHz | 1.05v |
| 862MHz | 1.037v |
| 849MHz | 1.025v |
| 836MHz | 1.012v |
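One way to read the bin table is as a lookup: given a voltage ceiling, the highest reachable bin is the fastest one at or below that voltage. The sketch below models only that voltage cap and ignores the temperature and power limits that GPU Boost 2.0 also enforces; the data is our sample's table, and the helper name is hypothetical.

```python
# (clock in MHz, voltage in volts) for the review sample, highest bin first.
TITAN_BINS = [
    (1019, 1.2), (1006, 1.175), (992, 1.1625), (979, 1.15), (966, 1.137),
    (953, 1.125), (940, 1.112), (927, 1.1), (914, 1.087), (901, 1.075),
    (888, 1.062), (875, 1.05), (862, 1.037), (849, 1.025), (836, 1.012),
]


def top_bin_mhz(max_voltage):
    """Return the fastest bin whose voltage does not exceed max_voltage."""
    allowed = [clock for clock, volts in TITAN_BINS if volts <= max_voltage]
    if not allowed:
        raise ValueError("no bin is available at or below this voltage")
    return max(allowed)


default_top = top_bin_mhz(1.1625)  # default voltage ceiling
unlocked_top = top_bin_mhz(1.2)    # ceiling with overvolting enabled

print(f"Default top bin:  {default_top}MHz")
print(f"Unlocked top bin: {unlocked_top}MHz")
print(f"Difference:       {unlocked_top - default_top}MHz")
```

Raising the ceiling from 1.1625v to 1.2v moves the top bin from 992MHz to 1019MHz, the same 27MHz described above.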

Finally, as with the GTX 680 and GTX 690, NVIDIA will be keeping tight control over what Asus, EVGA, and their other partners release. Those partners will have the option to release Titan cards with factory overclocks and Titan cards with different coolers (e.g. water blocks), but they won’t be able to expose direct voltage control or ship parts with higher voltages. Nor for that matter will they be able to create Titan cards with significantly different designs (e.g. more VRM phases); every Titan card will be a variant on the reference design.

This is essentially no different than how the GTX 690 was handled, but I think it’s something that’s important to note before anyone with dreams of big overclocks throws down $999 on a Titan card. To be clear, GPU Boost 2.0 is a significant improvement in the entire power/thermal management process compared to GPU Boost 1.0, and this kind of control means that no one needs to be concerned with blowing up their video card (accidentally or otherwise), but it’s a system that comes with gains and losses. So overclockers will want to pay close attention to what they’re getting into with GPU Boost 2.0 and Titan, and what they can and cannot do with the card.

337 Comments


ponderous - Thursday, February 21, 2013 - link

Cannot give kudos to what is a well performing card when it is so grossly out of order in price for the performance. $1000 card for 35% more performance than the $450 GTX680. A $1000 card that is 20% slower than the $1000 GTX690. And a $1000 card that is 30% slower than a $900 GTX680SLI solution.

Meet the 'Titan'(aka over-priced GTX680).

Well here we have it, the 'real' GTX680 with a special name and a 'special' price. Nvidia just trolled us with this card. It was not enough for them to sell a mid-ranged card for $500 as the 'GTX680', now we have 'Titan' for twice the price and an unremarkable performance level from the obvious genuine successor to GF110(GTX580).

At this irrational price, this 'Titanic' amusement park ride is not one worth standing in line to buy a ticket for, before it inevitably sinks, along with its price.

wreckeysroll - Thursday, February 21, 2013 - link

now there is some good fps numbers for titan. we expected to see such. shocked to see it with the same performance as 7970ghz in that test although! much too much retail msrp for the card. unclear what nvidia was thinking. msrp is sitting far too high for this unfortunately

quantumsills - Thursday, February 21, 2013 - link

Wow....Some respectable performance turn-out here. The compute functionality is formidable, albeit the value of such is questionable in what is a consumer gaming card.

A g-note though ? Really nvidia ? At what degree of inebriation was the conclusion drawn that this justifies a thousand dollar price tag ?

Signed

Flabbergasted.

RussianSensation - Thursday, February 21, 2013 - link

Compute functionality is nothing special. Still can't bitcoin mine well, sucks at OpenCL (http://www.computerbase.de/artikel/grafikkarten/20...) and if you need double precision, well a $500 Asus Matrix Platinum @ 1300mhz gives you 1.33 Tflops. You really need to know specific apps you are going to run on this like Adobe CS6 or very specific CUDA compute programs to make it worthwhile as a non-gaming card.

JarredWalton - Thursday, February 21, 2013 - link

Really? People are going to trot out Bitcoin still? I realize AMD does well there, but if you're serious about BTC you'd be looking at FPGAs or trying your luck at getting one of the ASICs. I hear those are supposed to be shipping in quantity some time soon, at which point I suspect prices of BTC will plummet as the early ASIC owners cash out to pay for more hardware.

RussianSensation - Thursday, February 28, 2013 - link

It's not about bitcoin mining alone. What specific compute programs outside of scientific research does the Titan excel at? It fails at OpenCL, what about ray-tracing in Luxmark? Let's compare its performance in many double precision distributed computing projects (MilkyWay@Home, CollatzConjecture), run it through DirectCompute benches, etc.

http://www.computerbase.de/artikel/grafikkarten/20...

So far this review covers the Titan's performance from specific scientific work done by universities. But those types of researchers get grants to buy full-fledged Tesla cards. The compute analysis in the review is too brief to conclude that it's the best compute card. Even the Elcomsoft password hashing - well AMD cards perform faster there too but they weren't tested. My point is it's not true to say this card is unmatched in compute. It's only true in specific apps. Also, leaving full double precision compute doesn't justify its price tag either since AMD cards have had non-gimped DP for 5+ years now.

maxcellerate - Thursday, March 28, 2013 - link

I tend to agree with RussianSensation, though the fact is that the first batch of Titans has sold out. But to who? There will be the odd mad gamer who must have the latest most expensive card in their rig, regardless. But I suspect the majority of sales have gone to CG renderers where CUDA still rules and $1000 for this card is a bargain compared to what they would have paid for it as a Quadro. Once sales to that market have dried up, the price will drop. Then I can have one;)

ponderous - Thursday, February 21, 2013 - link

True. Very disappointing card. Not enough performance for the exorbitant cost. Nvidia made a fumble here on the cost. Will be interesting to watch in the coming months where the sure to come price drops wind up placing the actual value of this card at.

CeriseCogburn - Saturday, February 23, 2013 - link

LOL - now compute doesn't matter - thank you RS for the 180 degree flip flop, right on schedule...

RussianSensation - Thursday, February 28, 2013 - link

I never said compute doesn't matter. I said the Titan's "out of this world compute performance" needs to be better substantiated. Compute covers a lot of apps, bitcoin, openCL, distributed computing projects. None of these are mentioned.