NVIDIA's GeForce GTX Titan, Part 1: Titan For Gaming, Titan For Compute

by Ryan Smith on February 19, 2013 9:01 AM EST

GPU Boost 2.0: Overclocking & Overclocking Your Monitor

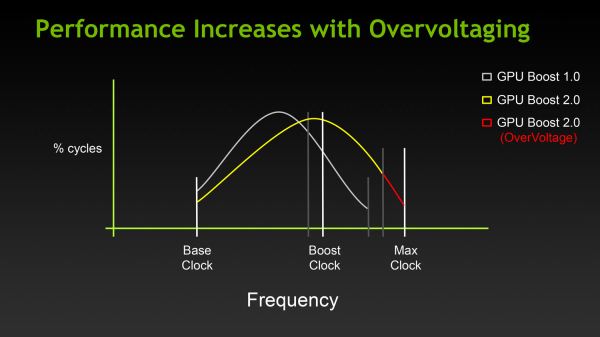

The first half of the GPU Boost 2.0 story is of course that with 2.0 NVIDIA is switching from power based controls to temperature based controls. However there is also a second story here, and that is the impact on overclocking.

With the GTX 680, overclocking capabilities were limited, particularly in comparison to the GeForce 500 series. The GTX 680 could have its power target raised (guaranteed “overclocking”), and further overclocking could be achieved by using clock offsets. But perhaps most importantly, voltage control was forbidden, with NVIDIA going so far as to nix EVGA and MSI’s voltage adjustable products after a short time on the market.

There are a number of reasons for this, and hopefully one day soon we’ll be able to get into NVIDIA’s Project Greenlight video card approval process in significant detail so that we can better explain it. The primary concern, however, was that without strict voltage limits some of the more excessive users might blow out their cards with voltages set too high. And while the responsibility for this ultimately falls to the user, and in some cases the manufacturer of their card (depending on the warranty), it makes NVIDIA look bad regardless. The end result was that voltage control on the GTX 680 (and lower cards) was disabled for everyone, regardless of what a card was capable of.

With Titan this has finally changed, at least to some degree. In short, NVIDIA is bringing back overvoltage control, albeit in a more limited fashion.

For Titan cards, partners will have the final say in whether they wish to allow overvolting or not. If they choose to allow it, they get to set a maximum voltage (Vmax) figure in their VBIOS. The user in turn is allowed to increase their voltage beyond NVIDIA’s default reliability voltage limit (Vrel) up to Vmax. As part of the process however users have to acknowledge that increasing their voltage beyond Vrel puts their card at risk and may reduce the lifetime of the card. Only once that’s acknowledged will users be able to increase their voltages beyond Vrel.
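The rule described above amounts to a clamp with a gate in front of it. The following is a minimal sketch of that logic, not NVIDIA's implementation: the function name, the acknowledgment flag, and every voltage value used with it are hypothetical, since the article gives no actual figures for Vrel or Vmax.

```python
def allowed_voltage(requested_v, v_rel, v_max=None, risk_acknowledged=False):
    """Return the voltage that would be accepted under the rule in the text.

    v_rel: NVIDIA's default reliability voltage limit (Vrel).
    v_max: partner-set ceiling from the VBIOS (Vmax), or None when the
           partner has chosen not to allow overvolting.
    risk_acknowledged: True once the user has accepted the lifetime warning.
    All values are illustrative assumptions, not real Titan limits.
    """
    # Without partner support or user acknowledgment, Vrel is the ceiling.
    if v_max is None or not risk_acknowledged:
        return min(requested_v, v_rel)
    # Otherwise the user may go past Vrel, but never past Vmax.
    return min(requested_v, max(v_rel, v_max))


# Made-up numbers purely for illustration.
print(allowed_voltage(1.20, v_rel=1.10, v_max=1.20))                          # capped at Vrel
print(allowed_voltage(1.20, v_rel=1.10, v_max=1.20, risk_acknowledged=True))  # allowed up to Vmax
```

The point of the gate is that the partner and the user both have to opt in; either one declining leaves the card at the Vrel ceiling.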

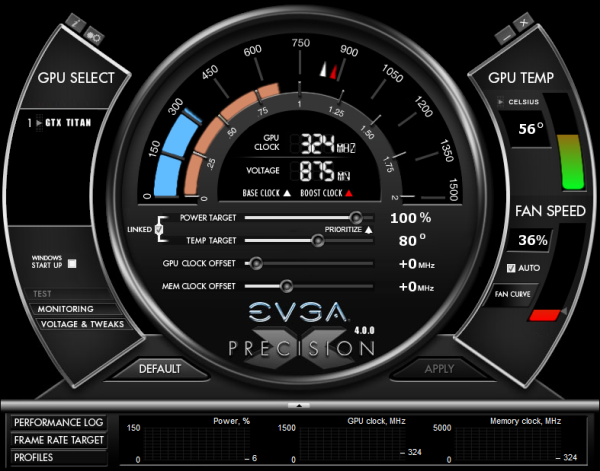

With that in mind, beyond overvolting, overclocking has also changed in some subtler ways. Memory and core offsets are still in place, but with the switch from power based monitoring to temperature based monitoring, the power target slider has been augmented with a separate temperature target slider.

The power target slider is still responsible for controlling the TDP as before, but with the ability to prioritize temperatures over power consumption it appears to be somewhat redundant (or at least unnecessary) for more significant overclocking. That leaves us with the temperature slider, which is really a control for two functions.

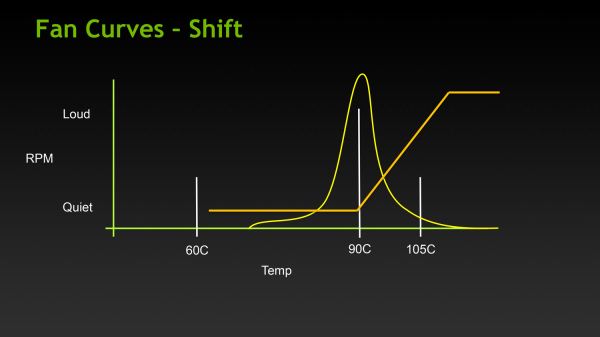

First and foremost of course is that the temperature slider controls what the target temperature is for Titan. By default for Titan this is 80C, and it may be turned all the way up to 95C. The higher the temperature setting the more frequently Titan can reach its highest boost bins, in essence making this a weaker form of overclocking just like the power target adjustment was on GTX 680.

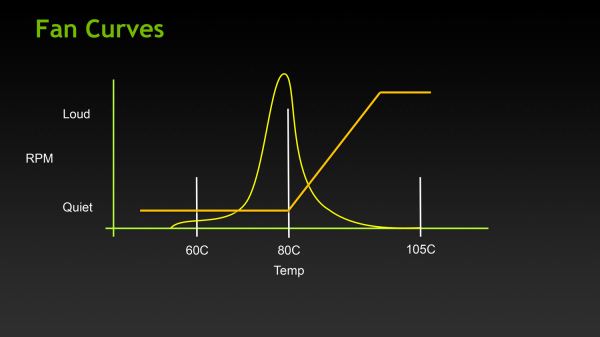

The second function controlled by the temperature slider is the fan curve, which for all practical purposes follows the temperature slider. With modern video cards ramping up their fan speeds rather quickly once cards get into the 80C range, increasing the temperature target alone wouldn’t be particularly desirable in most cases due to the extra noise it would generate, so NVIDIA tied the fan curve to the temperature slider. Doing so ensures that fan speeds stay relatively low until temperatures start exceeding the temperature target. This seems a bit counterintuitive at first, but when put in perspective of the goal – higher temperatures without an increase in fan speed – it starts to make sense.
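To make the coupling concrete, here is a rough sketch of a fan curve anchored to the temperature target. It is only an illustration under stated assumptions: the flat baseline, the linear ramp, and the percentages are invented for the example, and the real curve is not described in this article. Only the 80C default and the 95C maximum come from the text.

```python
def fan_speed_pct(temp_c, target_c=80, base_pct=30, max_pct=85, ramp_c=10):
    """Hypothetical fan curve that shifts with the temperature target.

    temp_c:   current GPU temperature in degrees C.
    target_c: temperature target (80C default, up to 95C per the article).
    base_pct, max_pct, ramp_c: assumed shape parameters, not real values.
    """
    # At or below the target the fan stays at its quiet baseline.
    if temp_c <= target_c:
        return float(base_pct)
    # Past the target the fan ramps linearly until it reaches max_pct.
    over = min(temp_c - target_c, ramp_c)
    return base_pct + (max_pct - base_pct) * over / ramp_c


# Same 85C reading, two different slider positions.
print(fan_speed_pct(85, target_c=80))  # already ramping
print(fan_speed_pct(85, target_c=95))  # still at the baseline
```

Moving the target moves the whole ramp with it, which is why raising the slider buys higher temperatures without an immediate increase in noise.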

Finally, in what can only be described as a love letter to the boys over at 120hz.net, NVIDIA is also introducing a simplified monitor overclocking option, which can be used to increase the refresh rate sent to a monitor in order to coerce it into operating at that higher refresh rate. Notably, this isn’t anything that couldn’t be done before with some careful manipulation of the GeForce control panel’s custom resolution option, but with the monitor overclocking option exposed in PrecisionX and other utilities, monitor overclocking has been reduced to a simple slider rather than a complex mix of timings and pixel counts.

Though this feature can technically work with any monitor, it’s primarily geared towards monitors such as the various Korean LG-based 2560x1440 monitors that have hit the market in the past year, a number of which have come with electronics capable of operating far in excess of the 60Hz that is standard for those monitors. On the models that have been able to handle it, modders have been able to get some of these 2560x1440 monitors up to and above 120Hz, essentially doubling their native 60Hz refresh rate and greatly improving smoothness to levels similar to a native 120Hz TN panel, but without the resolution and quality drawbacks inherent to those TN products.
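One way to see why the driving electronics matter so much is that the pixel clock a display must accept scales linearly with refresh rate: it is the total pixels per frame (active plus blanking) multiplied by frames per second. The sketch below shows that arithmetic. The 2720 x 1481 frame totals are an assumed reduced-blanking timing for a 2560x1440 panel, chosen for illustration; actual timings depend on the monitor and the custom resolution in use.

```python
def pixel_clock_mhz(h_total, v_total, refresh_hz):
    """Pixel clock in MHz for a given frame size (including blanking) and refresh rate."""
    return h_total * v_total * refresh_hz / 1e6


# Assumed totals for a 2560x1440 mode with reduced blanking (illustrative only).
H_TOTAL, V_TOTAL = 2720, 1481

for hz in (60, 96, 120):
    print(f"{hz} Hz -> {pixel_clock_mhz(H_TOTAL, V_TOTAL, hz):.1f} MHz")
```

With blanking held constant, going from 60Hz to 120Hz doubles the required pixel clock, which is the part a simple slider hides from the user.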

Of course it goes without saying that just like any other form of overclocking, monitor overclocking can be dangerous and risks breaking the monitor. On that note, out of our monitor collection we were able to get our Samsung 305T up to 75Hz, but whether due to the panel or the driving electronics, it didn’t seem to have any impact on performance, smoothness, or responsiveness. This is truly a “your mileage may vary” situation.

157 Comments

chizow - Friday, February 22, 2013 - link

Um, GF100/110 are absolutely the same league as this card. In the semiconductor industry, size = classification. This is not the first 500+mm^2 ASIC Nvidia has produced, the lineage is long and distinguished: G80, GT200, GT200b, GF100, GF110.

*NONE* of these GPUs cost $1K, only the 8800Ultra came anywhere close to it at $850. All of these GPUs offered similar features and performance relative to the competition and prevailing landscape. Hell, GT200 was even more impressive as it offered a 512-bit memory interface.

Increase in number of transistors is just Moore's law, that's just expected progress. If you don't know the material you're discussing please refrain from commenting, thank you.

CeriseCogburn - Sunday, February 24, 2013 - link

Wait a minute doofus, you said the memory cost the same, and it's cheap.

You entirely disregarded the more than double the core transistor footprint, the R&D for it, the yield factor, the high build quality, and the new and extra top tier it resides in, not to mention its awesome features the competition did not develop and does not have, AT ALL.

4 monitors out of the box, Single card 3d and surround, extra monitor for surfing, target frame rate, TXAA, no tesselation lag, and on and on.

Once a product breaks out far from the competition's underdeveloped and undeveloped failures, it EARNS a price tier.

You're living in the past, you're living with the fantasy of zero worldwide inflation, you're living the lies you've told yourself and all of us about the last 3 top tier releases, all your arguments exposed in prior threads for the exaggerated lies they were and are, and the Charlie D RUMORS all of you of this same ilk repeat, even as you ignore the absolute years long DEV time and entire lack of production capability with your tinfoil hat whine.

The market has changed you fool. There was a SEVERE SHORTAGE in the manufacturing space (negating your conspiracy theory entirely) and still there's pressure, and nVidia has developed a large range of added features the competition is entirely absent upon.

You didn't get the 680 for $350 (even though you still 100% believe Charlie D's lie filled rumor) and you're not getting this for your fantasy lie price either.

CeriseCogburn - Sunday, February 24, 2013 - link

NONE had the same or much bigger die sizes.

NONE had 7.1 BILLION engineer traced research die points.

NONE had the potential downside low yield.

NONE had the twice plus expensive ram in multiples more attached.

NONE is the amount of truth you told.

Stuka87 - Tuesday, February 19, 2013 - link

Common sense would say nVidia is charging double what they should be.

384bit memory is certainly not a reason for high cost as AMD uses it in the 79x0 series chips. A large die adds to cost, but the 580 had a big die as well (520mm2), so that can't be the whole reason for the high cost (the GK110 does have more transistors).

So it comes down to nVidia wanted to scalp customers.

As for your comments on AMD, what proof do you have that AMD has nothing else in the works? Not sure what crap you are referring to. I have had no issues with my AMD cards or their drivers (Or my nVidias for that matter). Just keep on hating for no reason.

AssBall - Tuesday, February 19, 2013 - link

You speak of common sense, but miss the point. When have you ever bought a consumer card for the pre-listed MSRP? These cards will sell to OEM's for compute and to enthusiasts via Nvidia's partners for much less.

So it comes down to "derp Nvidia is a company that wants to make money derp".

Calling someone a hater for unrealistic reasons is much less of an offense than being generally an idiot.

TheJian - Wednesday, February 20, 2013 - link

A chip with 7.1B transistors is tougher to make correctly than 3B. Which card has 6GB of 6ghz memory from AMD that's $500 with this performance? 7990 is $900-1000 with 6GB and is poorly engineered compared to this (nearly double the watts, two slots more heat etc etc).

This is why K20 costs $2500. They get far fewer of these perfect than much simpler chips. Also as said before, engineering these is not free. AMD charges less you say? Their bottom line for last year shows it too...1.18B loss. That's why AMD will have no answer until the end of the year. They can't afford to engineer an answer now. They just laid off 30% of their workforce because they couldn't afford them. NV hired 800 people last year for new projects. You do that with profits, not losses. You quit giving away free games or go out of business.

Let me know when AMD turns a profit for a year. I guess you won't be happy until AMD is out of business. I think you're hating on NV for no reason. If they were anywhere near scalping customers they should have record PROFITS but they don't. Without Intel's lawsuit money (300mil a year) they'd be making ~1/2 of what they did in 2007. You do understand a company has to make money to stay in business correct?

If NV charged 1/2 the price for this they would be losing probably a few hundred on each one rather than probably a $200 profit or so.

K20 is basically the same card for $2500. You're lucky they're pricing it at $1000 for what you're getting. Amazon paid $2000ea for 10000 of these as K20's. You think they feel robbed? So by your logic, they got scalped 20,000 times since they paid double the asking here with 10000 of them?...ROFL. OK.

What it comes down to is NV knows how to run a business, while AMD knows how to run one into the ground. AMD needs to stop listening to people like you and start acting like NV or they will die.

AMD killed themselves the day they paid 3x the price they should have for ATI. Thank Hector Ruiz for that. He helped to ruin Motorola too if memory serves...LOL. I love AMD, love their stuff, but they run their business like idiots. Kind of like Obama runs the country. AMD is running a welfare business (should charge more, and overpays for stuff they shouldn't even buy), obama runs a welfare country, and pays for crap like solyndra etc he shouldn't (with our money!). Both lead to credit downgrades and bankruptcy. You can't spend your way out of a visa bill. But both AMD and Obama think you can. You have to PAY IT OFF. Like NV, no debt. Spend what you HAVE, not what you have to charge.

Another example. IMG.L, just paid triple what they should have for the scrap of MIPS. I think this will be their downfall. They borrowed 22million to pay 100mil bid for mips. It was worth 30mil. This will prove to be Imaginations downfall. That along with having chip sales up 90% but not charging enough to apple for them. They only made 30mil for 6 MONTHS! Their chip powers all of apples phones and tablets graphics! They have a hector ruiz type running their company too I guess. Hope they fire him before he turns them into AMD. Until Tegra4 they have the best gpu on a soc in the market. But they make 1/10 of what NV does. Hmmm...Wrong pricing? Apple pockets 140Bil over the life of ipad/iphone...But IMG.L had to borrow 22mil just to buy a 100mil company? They need to pull a samsung and raise prices 20% on apple. NV bought icera with 325mil cash...Still has 3.74B in the bank (which btw is really only up from 2007 because of Intel's 300mil/yr, not overcharging you).

CeriseCogburn - Sunday, February 24, 2013 - link

Appreciate it. Keep up the good work, as in telling the basic facts called the truth to the dysfunctional drones.

no physx

no cuda

no frame rate target (this is freaking AWESOME, thanks nVidia)

no "cool n quiet" on the fly GPU heat n power optimizing max fps

no TXAA

no same game day release drivers

EPIC FAIL on dual cards, yes even today for amd

" While it suffers from the requirement to have proper game-specific SLI profiles for optimum scaling, NVIDIA has done a very good job here in the past, and out of the 19 games in our test suite, SLI only fails in F1 2012. Compare that to 6 out of 19 failed titles with AMD CrossFire."

http://www.techpowerup.com/reviews/NVIDIA/GeForce_...

nVidia 18 of 19, 90%+ GRADE AAAAAAAAAA

amd 13 of 19 < 70% grade DDDDDDDDDD

Iketh - Tuesday, February 19, 2013 - link

please drag yourself into the street and stone yourself

CeriseCogburn - Sunday, February 24, 2013 - link

LOL awww, now that wasn't very nice... May I assume you aren't in the USA and instead in some 3rd world hole with some 3rd world currency and economy where you can't pitch up a few bucks because there's no welfare available ? Thus your angry hate filled death wish ?

MrSpadge - Tuesday, February 19, 2013 - link

Don't worry.. price will drop if they're actually in a hurry to sell them.