NVIDIA's GeForce GTX Titan, Part 1: Titan For Gaming, Titan For Compute

by Ryan Smith on February 19, 2013 9:01 AM EST

GPU Boost 2.0: Temperature Based Boosting

With the Kepler family NVIDIA introduced their GPU Boost functionality. Present on the desktop GTX 660 and above, boost allows NVIDIA’s GPUs to turbo up to frequencies above their base clock so long as there is sufficient power headroom to operate at those higher clockspeeds and the voltages they require. Boost, like turbo and other implementations, is essentially a form of performance min-maxing, allowing GPUs to offer higher clockspeeds for lighter workloads while still staying within their absolute TDP limits.

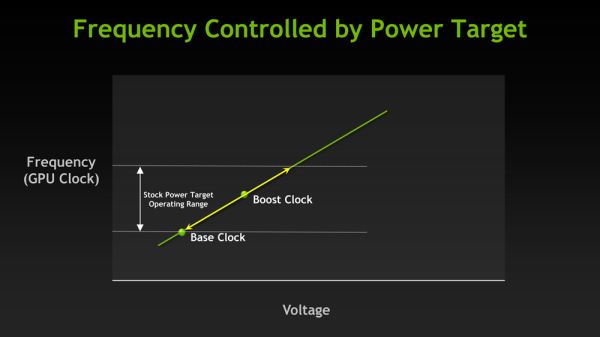

The first iteration of GPU Boost was based almost entirely around power considerations. With the exception of an automatic 1 bin (13MHz) step down at high temperatures to compensate for increased power consumption, whether GPU Boost could boost and by how much depended on how much power headroom was available. So long as there was headroom, GPU Boost could boost up to its maximum boost bin and voltage.
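As a rough illustration of the power-driven behavior described above, here is a minimal sketch, not NVIDIA's actual algorithm. The function name, the `watts_per_bin` cost, and the power figures in the usage lines are hypothetical; only the 13MHz bin size and the single-bin step down at high temperature come from the text.

```python
BIN_MHZ = 13  # bin size quoted in the article


def boost1_clock(base_mhz, max_boost_mhz, power_w, power_target_w,
                 watts_per_bin, hot=False):
    """Toy model of a power-headroom boost: pick the highest bin that fits.

    watts_per_bin is a made-up constant standing in for the extra power
    each bin costs; real hardware has no such fixed figure.
    """
    max_bins = (max_boost_mhz - base_mhz) // BIN_MHZ
    headroom_w = max(0.0, power_target_w - power_w)
    bins = min(max_bins, int(headroom_w // watts_per_bin))
    if hot and bins > 0:
        bins -= 1  # the single-bin step down at high temperature
    return base_mhz + bins * BIN_MHZ


# Hypothetical power numbers, with the GTX 680 sample's clocks from the article.
print(boost1_clock(1006, 1110, power_w=150, power_target_w=170, watts_per_bin=2.0))
print(boost1_clock(1006, 1110, power_w=166, power_target_w=170, watts_per_bin=2.0))
```

Note that temperature only ever costs one bin in this model; everything else is decided by power headroom, which is the limitation GPU Boost 2 addresses.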

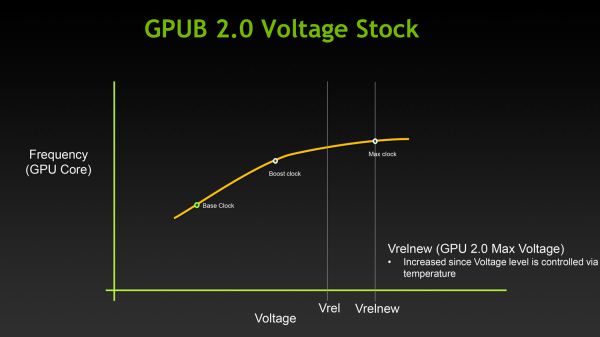

For Titan, GPU Boost has undergone a small but important change that has significant ramifications for how GPU Boost works, and how much it boosts by. That change is that with GPU Boost 2, NVIDIA has essentially moved on from a power-based boost system to a temperature-based boost system. Or perhaps more precisely, a system that is predominantly temperature based but is also capable of taking power into account.

When it came to GPU Boost 1, its greatest weakness as explained by NVIDIA is that it essentially made conservative assumptions about temperatures and the interplay between high temperatures and high voltages in order to keep from seriously impacting silicon longevity. The end result was that NVIDIA was picking boost bin voltages based on the worst case temperatures, which meant those conservative assumptions about temperatures translated into conservative voltages.

So how does a temperature based system fix this? By better mapping the relationship between voltage, temperature, and reliability, NVIDIA can allow for higher voltages – and hence higher clockspeeds – by being able to finely control which boost bin is hit based on temperature. As temperatures start ramping up, NVIDIA can ramp down the boost bins until an equilibrium is reached.
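The "ramp down until an equilibrium is reached" idea can be sketched as a simple loop. This is only a toy model under stated assumptions: the linear thermal model, the function name, and every number in the usage line (temperature target, ambient temperature, degrees per bin) are hypothetical and not taken from NVIDIA.

```python
def settle_bin(max_bin, temp_target_c, ambient_c, base_rise_c, deg_per_bin):
    """Step down from the top boost bin until the (assumed) steady-state
    temperature is at or below the target.

    Assumed thermal model: steady temperature rises linearly with bin index.
    """
    bin_idx = max_bin
    while bin_idx > 0:
        steady_temp_c = ambient_c + base_rise_c + deg_per_bin * bin_idx
        if steady_temp_c <= temp_target_c:
            break  # equilibrium: this bin is sustainable
        bin_idx -= 1  # too hot, drop one bin and re-check
    return bin_idx


# Hypothetical card with 12 boost bins and a hypothetical 80C target.
print(settle_bin(max_bin=12, temp_target_c=80, ambient_c=25,
                 base_rise_c=45, deg_per_bin=1.5))
```

In this model a cooler room or a better cooler (a lower `ambient_c` or `base_rise_c`) directly yields a higher sustained bin, which is the practical consequence of tying boost to temperature.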

Of course total power consumption is still a technical concern here, though much less so. Technically NVIDIA is watching both the temperature and the power consumption and clamping down when either limit is hit. But since GPU Boost 2 does away with the concept of separate power targets – sticking solely with the TDP instead – in the design of Titan there’s quite a bit more room for boosting thanks to the fact that it can keep on boosting right up until the point it hits the 250W TDP limit. Our Titan sample can boost its clockspeed by up to 19% (837MHz to 992MHz), whereas our GTX 680 sample could only boost by 10% (1006MHz to 1110MHz).
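The boost percentages quoted above follow directly from the base and maximum boost clocks. A quick check, using only the clockspeeds given in the text (the helper name is ours):

```python
def boost_percent(base_mhz, boost_mhz):
    """Relative clockspeed gain of the top boost bin over base, in percent."""
    return (boost_mhz - base_mhz) / base_mhz * 100


titan = boost_percent(837, 992)     # Titan sample
gtx680 = boost_percent(1006, 1110)  # GTX 680 sample

print(f"Titan:   {titan:.1f}%")   # about 18.5%, quoted as 19%
print(f"GTX 680: {gtx680:.1f}%")  # about 10.3%, quoted as 10%
```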

Ultimately, however, whether GPU Boost 2 is power sensitive is actually a control panel setting, meaning that power sensitivity can be disabled. By default GPU Boost will monitor both temperature and power, but third-party overclocking utilities such as EVGA Precision X can prioritize temperature over power, at which point GPU Boost 2 can actually ignore TDP to a certain extent to focus on temperature. So if nothing else there’s quite a bit more flexibility with GPU Boost 2 than there was with GPU Boost 1.

Unfortunately, because GPU Boost 2 is only implemented in Titan, it’s hard to evaluate just how much “better” this is in any quantitative sense. We will be able to present specific Titan numbers on Thursday, but other than saying that our Titan maxed out at 992MHz at its highest boost bin of 1.162V, we can’t directly compare it to how the GTX 680 handled things.

157 Comments


AeroJoe - Wednesday, February 20, 2013 - link

Very good article - but now I'm confused. If I'm building an Adobe workstation to handle video and graphics, do I want a TITAN for $999 or the Quadro K5000 for $1700? Both are Kepler, but TITAN looks like more bang for the buck. What am I missing?

Rayb - Wednesday, February 20, 2013 - link

The extra money you are paying is for the driver support in commercial applications like Adobe CS6 with a Quadro card vs a non-certified card.

mdrejhon - Wednesday, February 20, 2013 - link

Excellent! GeForce Titan will make it much easier to overclock an HDTV set to 120 Hz (http://www.blurbusters.com/zero-motion-blur/hdtv-r...)

Some HDTVs such as the Vizio e3d420vx can be successfully “overclocked” to a 120 Hz native PC signal from a computer. This was difficult because an EDID override was necessary. However, the GeForce Titan should make this a piece of cake!

Blazorthon - Wednesday, February 20, 2013 - link

Purely as a gaming card, Titan is obviously way too overpriced to be worth considering. However, its compute performance is intriguing. It can't totally replace a Quadro or Tesla, but there are still many compute workloads that you don't need those extremely expensive extra features such as ECC and Quadro/Tesla drivers to excel in. Many of them may be better suited to a Tahiti card's far better value, but stuff like CUDA workloads may find Titan to be the first card to truly succeed GF100/GF110 based cards as a gaming and compute-oriented card, although like I said, I think that the price could still be at least somewhat lower. I understand it not being around $500 like GF100/110 launched at for various reasons, but come on, at most give us an around $700-750 price...

just4U - Thursday, February 21, 2013 - link

Someone here stated that AMD is at fault for pricing their 7x series so high last year. Perhaps many were disappointed with the $550 price range, but that's still somewhat lower than previously released Nvidia products through the years. Several of those cards (at various price points) handily beat the 580 (which btw never did get much of a price drop), and at the time that's what it was competing against.

So I can't quite connect the dots on why they are saying that it's AMD's fault for originally pricing the 7x series so high when in reality it was still lower than newly released Nvidia products over the past several years.

CeriseCogburn - Monday, March 4, 2013 - link

For the most part, correct.

The 7970 came out at $579 though, not $550. And it was nearly not present for many months, till just the prior day to the 680's $499 launch.

In any case, ALL these cards drop in price over the first six months or so, EXCEPT sometimes, if they are especially fast, like the 580, they hold at the launch price, which it did, until the 7970 was launched - the 580 was $499 till the day the 7970 launched.

So what we have here is the tampon express. The tampon express has not paid attention to any but fps/price vs their revised and memory holed history, so it will continue forever.

They have completely ignored capital factors like the extreme lack of production space in the node, ongoing prior to the 7970 release, and at emergency low levels prior to the months later 680 release, with the emergency board meeting, and multi-billion dollar borrowing buildout for die space production expansion, not to mention the huge change in wafer from dies payment which went from per good die to per wafer cost, thus placing the burden of failure on the GPU company side.

It's not like they could have missed that, it was all over the place for months on end, the amd fanboys were bragging amd got diespace early and constantly hammering away at nVidia and calling them stupid for not having reserved space and screaming they would be bankrupt from low yields they had to pay for from the "housefires" dies.

So what we have now is well trained (not potty trained) crybabies pooping their diapers over and over again, and let's face it, they do believe they have the power to lower the prices if they just whine loudly enough.

AMD has been losing billions, and nVidia profit ratio is 10% - but the crying babies screams mean to assist their own pocketbooks at any expense, including the demise of AMD even though they all preach competition and personal CEO capitalist understanding after they spew out 6th grader information or even make MASSIVE market lies and mistakes with illiterate interpretation of standard articles or completely blissful denial of things like diespace (mentioned above) or long standing standard industry tapeout times for producing the GPU's in question.

They want to be "critical reporters" but they fail miserably at it, and merely show crybaby ignorance with therefore false outrage. At least they consider themselves " the good hipster !"

clickonflick - Thursday, March 7, 2013 - link

I agree that the price of this GPU is really high; one could easily configure a complete mainstream laptop or desktop online with Dell at this price. But for gamers, for whom performance matters more than price, it is a boon.

For more pics check this out:

clickonflick/nvidia-geforce-gtx-titan