Calxeda's ARM server tested

by Johan De Gelas on March 12, 2013 7:14 PM EST- Posted in

- IT Computing

- Arm

- Xeon

- Boston

- Calxeda

- server

- Enterprise CPUs

It's a Cluster, Not a Server

When unpacking our Boston Viridis server, the first thing that stood out is the bright red front panel. That is Boston's way of telling us that we have the "Cloud Appliance" edition. The model with an orange bezel is intended to serve as a NAS appliance, purple stands for "web farm", and blue is more suited for a Hadoop cluster. Another observation is that the chassis looks similar to recent SuperMicro servers; it is indeed a bare bones system filled with Calxeda hardware.

Behind the front panel we find 24 2.5” drive bays, which can be fitted with SATA disks. If we take a look at the back, we can find a standard 750W 80 Plus Gold PSU, a serial port, and four SFP connectors. Those connectors are each capable of 10Gbit speeds, using copper and/or fiber SFP(+) transceivers.

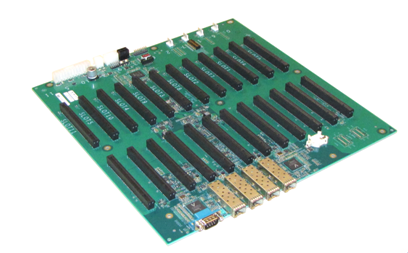

When we open up the chassis, we find somewhat less standard hardware. Mounted on the bottom is what you might call the motherboard, a large, mostly-empty PCB that contains the shared Ethernet components and a number of PCIe slots.

The 10Gb Ethernet Media Access Controller (MAC) is provided on the EnergyCore SoC, but in order to allow every node to communicate via the SFP ports, each node forwards its Ethernet traffic to one of the first four cards (the cards in slots 0-3). These nodes are connected via a XAUI interface to one of the two Vitesse VSC8488 XAUI-to-serial transceivers that in turn control two SFP modules each. Hidden behind an air duct is a Xilinx Spartan-6 FPGA, configured to act as chassis manager.

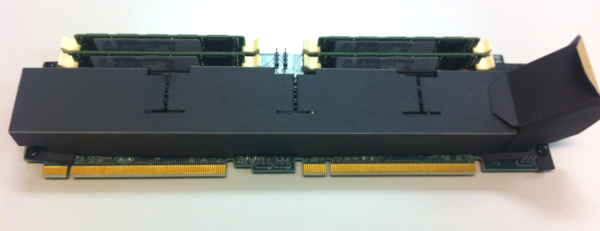

Each pair of PCIe slots contains what turns this chassis into a server cluster: an EnergyCard (EC). Each EnergyCard contains four SoCs, each with one DIMM slot. An EnergyCard thus contains four server nodes, with each node running on a quad-core ARM CPU.

The chassis can hold as many as 12 EnergyCards, so currently up to 48 server nodes. That limit is only imposed by physical space constraints, as the fabric supports up to 4096 nodes, leaving the potential for significant expansion if Calxeda maintains backwards compatibility with their existing ECs.

The system we received can hold only six ECs; one EnergyCard slot is lost because of the SATA cabling. That gives us six ECs with four server nodes each, or 24 server nodes in total. Some creative effort has been made to provide air baffles that direct the air through the heat sinks on the ARM chips.
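The node counts quoted above follow from simple multiplication. The sketch below only restates that arithmetic; the constant and function names are illustrative and do not come from Calxeda or Boston.

```python
# Figures taken from the text; the names are illustrative assumptions.
NODES_PER_CARD = 4           # four EnergyCore SoCs per EnergyCard
MAX_CARDS_PER_CHASSIS = 12   # physical limit of the chassis
CARDS_IN_REVIEW_UNIT = 6     # EnergyCards in the system as received
FABRIC_NODE_LIMIT = 4096     # node limit quoted for the fabric


def node_count(cards: int, nodes_per_card: int = NODES_PER_CARD) -> int:
    """Return the number of server nodes provided by `cards` EnergyCards."""
    if cards < 0:
        raise ValueError("cards must be non-negative")
    return cards * nodes_per_card


max_nodes = node_count(MAX_CARDS_PER_CHASSIS)     # fully populated chassis
review_nodes = node_count(CARDS_IN_REVIEW_UNIT)   # our review system

# How many fully populated chassis would fit under the fabric's node limit,
# assuming (hypothetically) that chassis could be joined into one fabric.
full_chassis_in_fabric = FABRIC_NODE_LIMIT // max_nodes

print(f"Nodes in a full chassis:  {max_nodes}")
print(f"Nodes in our review unit: {review_nodes}")
print(f"Full chassis per fabric:  {full_chassis_in_fabric}")
```

The last figure is only a rough indication of headroom; whether multiple chassis can actually share one fabric is not something we tested.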

The air baffles are made of a finicky plastic-coated paper, glued together and placed on the EC with plastic nails, making it difficult to remove them from an EC by hand. Each EC can be freely placed on the motherboard, with the exception of the Slot 0 card that needs a smaller baffle.

Every EnergyCard is thus fitted with four EnergyCore SoCs, each having access to one miniDIMM slot and four SATA connectors. In our configuration each miniDIMM slot was populated with a Netlist 4GB low-voltage (1.35V instead of 1.5V) ECC PC3L-10600W-9-10-ZZ DIMM. Every SoC provided was hooked up to a Samsung 256GB SSD (MZ7PC256HAFU, comparable to Samsung’s 830 Series consumer SSDs), filling up every disk slot in the chassis. We removed those SSDs and used our iSCSI SAN to boot the server nodes. This way it was easier to compare the system's power consumption with other servers.

Previous EC versions had a microSD slot per node at the back, but in our version it has been removed. The cards are topology-agnostic; each node is able to determine where it is placed. This enables you to address and manage nodes based on their position in the system.
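To make position-based addressing concrete, here is a minimal sketch of one way to map a flat node number to a card slot and an SoC position on that card. The numbering scheme, the `NodePosition` type, and both helper functions are hypothetical; the actual scheme used by Calxeda's management software is not described here.

```python
from typing import NamedTuple

NODES_PER_CARD = 4  # four SoCs per EnergyCard, as described in the text


class NodePosition(NamedTuple):
    """Hypothetical physical position of a node in the chassis."""
    slot: int  # EnergyCard slot on the motherboard (0-based)
    soc: int   # SoC position on that card (0-based)


def position_of(node_index: int, nodes_per_card: int = NODES_PER_CARD) -> NodePosition:
    """Map a flat node index to its (slot, soc) position."""
    if node_index < 0:
        raise ValueError("node_index must be non-negative")
    slot, soc = divmod(node_index, nodes_per_card)
    return NodePosition(slot, soc)


def index_of(position: NodePosition, nodes_per_card: int = NODES_PER_CARD) -> int:
    """Inverse of position_of: map a (slot, soc) position to a flat index."""
    if not 0 <= position.soc < nodes_per_card:
        raise ValueError("soc must be within the card")
    return position.slot * nodes_per_card + position.soc


# List the positions of all 24 nodes in a six-card system.
for node in range(6 * NODES_PER_CARD):
    pos = position_of(node)
    print(f"node {node:2d} -> slot {pos.slot}, SoC {pos.soc}")
```

Under this assumed scheme, moving a card to another slot changes the node numbers it answers to, which is the practical meaning of managing nodes by position rather than by card.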

99 Comments

JohanAnandtech - Wednesday, March 13, 2013 - link

Ok, good question. I'll look into it, as I am definitely considering a follow-up.

skyroski - Wednesday, March 13, 2013 - link

I make performance-oriented web apps for a living and I was looking forward to this performance test very much. However, I was quite disappointed at how you have done the "real world" test. If you're serving a single site you would never put a Xeon through the performance penalties of virtualisation, so I deem your real world results flawed/unusable.

Basically, if I was to consider buying a Calxeda server tomorrow, I want to know if I can serve a site faster/better by using the "cluster in a box" solution which ARM's partners are going for or if a single Xeon server with standardised dedicated hardware will serve me and my businesses better.

The other thing that I would have also tested is SSL request performance because Intel has AES-NI built in and I believe ARM has something similar? I would say the majority of requests today for a serious web app/site will be traffic using the SSL protocol, so that would also be one of those deciding factors I would look at.

If I was a cloud host provider your comparison may contain some truth as their business model would be to presumably let each ARM node out as a VPS alternative, but that isn't what you were testing, were you?

JohanAnandtech - Wednesday, March 13, 2013 - link

1. The single site: it is not meant to be an environment of one single site. The reason why we use the same site over and over again is that it makes it easier to interpret the results and more repeatable. Consider a hosting provider who hosts many similar - but not the same - LAMP sites.

The repeatable part is the part that most people don't understand very well: we don't just hit the same URL over and over again. We perform real user interactions and randomize them in real-world patterns (like logging in first and then several real actions) and then getting a repeatable benchmark gets very complex.

2. The SSL comment is definitely good feedback. We are currently writing the connection code for such SSL websites but also need to find one or more good examples. If your site is a good example, maybe we can use yours (even under NDA if necessary)?

3. Lastly, the virtualization overhead of ESXi 5 is very small.

Kurge - Wednesday, March 13, 2013 - link

You know, you can host multiple different LAMP sites on bare metal ;)

klmccaughey - Wednesday, March 13, 2013 - link

It won't be LAMP sites any more though - take a trawl through something like the Linode forums to get an idea of what people are building. You are talking higher concurrency and more likely nginx.

Someone made a valid comment about database sharding - for web apps this is much more likely as people try to make sure they have failover.

Whilst initially very disappointed, if you imagine the refresh on the ARM cores over the next 2 years (and considering the rate of change due to the phone market) you might actually be looking at a beast of a machine in two or three iterations. Imagine if you could buy these off the shelf for under $10k: That feels to me like mission critical failover systems in a box. I can see this taking off in a couple of years.

klmccaughey - Wednesday, March 13, 2013 - link

And kudos for the review - I look forward to the follow-up. This is a space that needs watching!

Silma - Thursday, March 14, 2013 - link

True but do you think Intel will stop product development for the next 3 years? In addition who will have the best fabs then? My guess is Intel.

Krysto - Monday, March 18, 2013 - link

I don't know how fast it actually is, but relative to the ARMv7 architecture, AES should be up to 10x faster on ARMv8.

kfreund - Wednesday, March 13, 2013 - link

Nice job, Johan. Can't wait to see your next one; we will be sure to get you an A15 based system as soon as we get it out! Let the debates begin!

kfreund - Wednesday, March 13, 2013 - link

Regarding Stream performance, this is a known limitation of A9; it just can't handle a lot of concurrent memory requests. A15 will nearly triple the memory bandwidth at same DDR rate.