Calxeda's ARM server tested

by Johan De Gelas on March 12, 2013 7:14 PM EST- Posted in

- IT Computing

- Arm

- Xeon

- Boston

- Calxeda

- server

- Enterprise CPUs

Pricing

So how much does this Boston Viridis server cost? The official price is $20,000 for one Boston Viridis with 24 nodes at 1.4GHz and 96GB of RAM. That is simply very expensive. A Dell R720 with dual 10 gigabit Ethernet, 96GB of RAM, and two Xeon E5-2650L CPUs is in the $8000 range; you could easily buy two Dell R720s and double your performance. The higher power bill of the Xeon E5 servers is in that case hardly an issue, unless you are very power constrained. However, these systems are targeted at larger deployments.

Buy a whole rack of them and the price comes down to $352 per server node, or about $8500 per 24-node server. We have some experience with medium quantity sales, and our best guess is that you typically get a 10 to 20% discount when you buy 20 of them. So that would mean that the Xeon E5 server would be around $6500-$7200 and the Boston Viridis around $8500. Considering that you get an integrated (5x 10Gbit) switch and a lower power bill with the Boston Viridis, the difference is not that large anymore.
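The pricing arithmetic above can be reproduced with a short sketch. It uses only the figures quoted in the text; the function names are hypothetical, and the Xeon list price is taken as a flat $8000 even though the article only says "in the $8000 range", so the low end of the discounted range comes out at $6400 rather than the $6500 quoted.

```python
# Figures quoted in the article. XEON_LIST_PRICE is an assumption: the text
# only says the Dell R720 is "in the $8000 range".
VIRIDIS_NODE_PRICE = 352   # USD per server node at rack quantities
VIRIDIS_NODES = 24         # server nodes per Boston Viridis chassis
XEON_LIST_PRICE = 8000     # USD, approximate


def viridis_chassis_price(node_price=VIRIDIS_NODE_PRICE, nodes=VIRIDIS_NODES):
    """Price of one chassis when bought at the per-node rack price."""
    return node_price * nodes


def discounted_price(list_price, discount_percent):
    """List price after a whole-number percentage discount (integer USD)."""
    return list_price * (100 - discount_percent) // 100


viridis = viridis_chassis_price()
xeon_low = discounted_price(XEON_LIST_PRICE, 20)   # best-case 20% discount
xeon_high = discounted_price(XEON_LIST_PRICE, 10)  # worst-case 10% discount

print(f"Boston Viridis chassis: ${viridis}")
print(f"Xeon E5 server: ${xeon_low}-${xeon_high}")
print(f"Viridis premium: ${viridis - xeon_high} to ${viridis - xeon_low}")
```

Under these assumptions the Viridis chassis costs roughly $1250 to $2050 more than the discounted Xeon server, before counting the integrated switch and the power savings.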

Calxeda's Roadmap and Our Opinion

Let's be clear: most applications still run better on the Xeon E5. Our CPU benchmarks clearly indicate that any application that accesses the memory frequently or that needs high per-thread integer processing power will run better on the Xeon E5. Compiling and installing software simply feels so much faster on the Xeon E5 that there is no need to benchmark it.

There's more: if your performance requirements are higher than what a quad-core Cortex-A9 can deliver, the Xeon E5 is a lot more flexible and a better choice in most cases. Scaling up is, after all, a lot easier than using load balancers and other complex software or hardware to scale out. Also, the management software of the Boston Viridis does the job, but Dell's DRAC, HP's iLO, and Supermicro's IM are more user friendly.

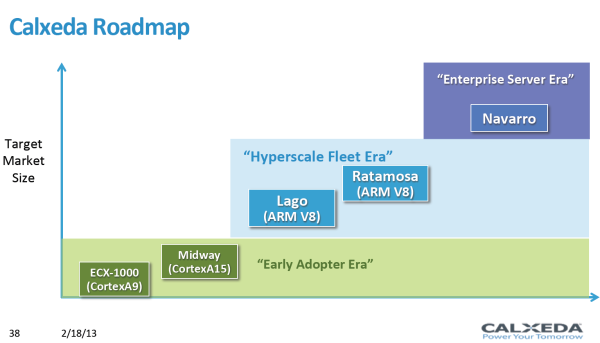

Calxeda is aware of all this, as they label their first "highbank" server architecture with the ECX-1000 SoC as targeted at the "early adopter". That is why we deliberately tested a scenario that would be relevant to the potential early adopters: a cluster of web servers that is relatively network intensive as it serves a lot of media files. This is one of the better scenarios for Calxeda, but not the best: we could imagine that a streaming server or storage server would be an even better fit. The latter in particular is catching on, and the storage version of the Boston Viridis sells well.

So on the one hand, no, the current Calxeda servers are no Intel Xeon killers (yet). However, we feel that Calxeda's ECX-1000 server node is revolutionary technology. When we ran 16 VMs (instead of 24), the dual low power Xeon was capable of achieving the same performance per VM as the Calxeda server nodes. That this 24 node system could offer 50% more throughput at 10% lower power than one of the best Xeon machines available was honestly surprising to us. 8W at the wall per server node, exactly what Calxeda claimed, is nothing short of remarkable, because it means that the 48 server node machine, which is also available, is even more efficient.
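To make the efficiency claim concrete, here is a minimal sketch that turns "50% more throughput at 10% lower power" into a throughput-per-watt ratio, and the 8W per node into a chassis total. The function names are hypothetical, and the chassis total assumes the 8W figure is simply wall power divided evenly over the 24 nodes.

```python
from fractions import Fraction

NODES = 24           # server nodes in the tested Boston Viridis
WATTS_PER_NODE = 8   # wall power per node, as quoted in the article


def relative_perf_per_watt(relative_throughput, relative_power):
    """Throughput-per-watt ratio of system A to system B,
    given A's throughput and power relative to B (B = 1)."""
    return relative_throughput / relative_power


def chassis_power(nodes=NODES, watts_per_node=WATTS_PER_NODE):
    """Total wall power, assuming it divides evenly over the nodes."""
    return nodes * watts_per_node


# 50% more throughput (1.5x) at 10% lower power (0.9x) than the Xeon system.
gain = relative_perf_per_watt(Fraction(150, 100), Fraction(90, 100))

print(f"Throughput per watt vs. the Xeon: {float(gain):.2f}x")
print(f"Implied wall power for {NODES} nodes: {chassis_power()} W")
```

Under these assumptions the Viridis delivers about 1.67 times the throughput per watt of the Xeon system in this particular web-serving test, at roughly 192W for the whole chassis.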

To put that 8W number in perspective, the current Intel Atoms that offer similar performance need that kind of power for the SoC alone and are baked with Intel's superior 32nm process technology. The next generation ARM servers are already on the way and will probably hit the market in the third quarter of this year. The "Midway" SoC is based on a 28nm (TSMC) Cortex-A15 chip. A 28nm Cortex-A15 offers 50% higher single-threaded integer performance at slightly higher power levels and can address up to 16GB of RAM. With that it's safe to conclude that the next Calxeda server will be a good match for a much larger range of applications: memcached, larger web, and midrange database servers, for example. By then, virtualization will be available with KVM and Xen, but we think virtualization on ARM will only take off when the ARM Cortex-A57 core with its 64-bit ARMv8 ISA hits the market in 2014.

Right now, the limited performance of the individual server nodes makes the Boston Viridis attractive for web applications with lower CPU demands in a power constrained data center. But the extremely low energy consumption and the rapidly increasing performance of the ARM cores show great potential for Calxeda's technology. Short term, this is a niche market, but in another year or two this approach could easily encroach on Intel's higher end markets.

99 Comments

JohanAnandtech - Wednesday, March 13, 2013 - link

Hmmm ... There is almost no info on how that hypervisor works. It is hard to imagine that kind of system would scale very well. How does it keep Cache coherent? Do you have info on that?

timbuktu - Wednesday, March 13, 2013 - link

I can't speak directly to ScaleMP, but it looks similar to NUMALink.

http://en.wikipedia.org/wiki/NUMAlink

Reading through this article about Calxeda, great job BTW, I couldn't help but think about the old SGI hardware that seemed pretty similar, with MIPS (and later Itanium) processors connected through a switch with NUMALink. I haven't played with NUMALink directly in almost a decade, but back then cheaper Altix slabs were ring topology while higher end hardware was switched. In the end though, you could put a bunch of 1U racks together and have a single system image. Like you mentioned though, cache coherency was exceptionally important. Since we have a UV here, I can point you to the documentation for that box.

http://techpubs.sgi.com/library/tpl/cgi-bin/getdoc...

Everything old is new again, I suppose. Well, except NUMAlink never went away. =D

Tunrip - Wednesday, March 13, 2013 - link

I'd be interested in knowing how the Xeon compared if you did the same test without the virtual machines.

JohanAnandtech - Wednesday, March 13, 2013 - link

The website won't scale to 32 logical cores I am afraid... but we can try to see how far we can get

Colin1497 - Wednesday, March 13, 2013 - link

A better question might be "is 24 VM's a logical number to use?" Would more or fewer VM's work better? The appearance is that you have 24VM's because you have 24 ARM nodes?

duploxxx - Wednesday, March 13, 2013 - link

Very interesting, loved reading it. But although it is early in the ball game, I do think there are other, way better solutions in the pipeline from the big OEMs: HP Moonshot

http://h17007.www1.hp.com/us/en/iss/110111.aspx

JohanAnandtech - Wednesday, March 13, 2013 - link

Isn't it remarkable how PR people manage to fill so many pages with "extreme" and "the future" without telling anything. Frustration became even higher when I clicked the "get the facts" page. That is more like "You are not getting any facts at all".

DuckieHo - Wednesday, March 13, 2013 - link

Since these are set up as webservers, what's the power consumption at say 20-40% load? Usually there is some load instead of completely idle.

JohanAnandtech - Wednesday, March 13, 2013 - link

Good suggestion... you'd like to see a step by step power measurement like SpecPower, right? Let me try that.

DanNeely - Wednesday, March 13, 2013 - link

I'd be interested in seeing where, and what happens when you start pushing single chips to and slightly beyond their limits. Calxeda's hardware's proved competitive on a very friendly workload (which I didn't really expect would happen until their A15 product); but in the real world a set of small websites are unlikely to all have equal load levels. Virtual servers on larger CPUs should give more headroom for load spikes; so knowing what the limits on Calxeda's hardware are strikes me as fairly important.