Calxeda's ARM server tested

by Johan De Gelas on March 12, 2013 7:14 PM EST

Posted in: IT Computing, Arm, Xeon, Boston, Calxeda, server, Enterprise CPUs

It's a Cluster, Not a Server

When unpacking our Boston Viridis server, the first thing that stood out was the bright red front panel. That is Boston's way of telling us that we have the "Cloud Appliance" edition. The model with an orange bezel is intended to serve as a NAS appliance, purple stands for "web farm", and blue is more suited for a Hadoop cluster. Another observation is that the chassis looks similar to recent SuperMicro servers; it is indeed a barebones system filled with Calxeda hardware.

Behind the front panel we find 24 2.5” drive bays, which can be fitted with SATA disks. If we take a look at the back, we can find a standard 750W 80 Plus Gold PSU, a serial port, and four SFP connectors. Those connectors are each capable of 10Gbit speeds, using copper and/or fiber SFP(+) transceivers.

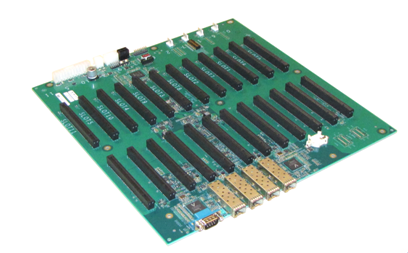

When we open up the chassis, we find somewhat less standard hardware. Mounted on the bottom is what you might call the motherboard, a large, mostly-empty PCB that contains the shared Ethernet components and a number of PCIe slots.

The 10Gb Ethernet Media Access Controller (MAC) is provided on the EnergyCore SoC, but in order to allow every node to communicate via the SFP ports, each node forwards its Ethernet traffic to one of the first four cards (the cards in slots 0-3). These nodes are connected via a XAUI interface to one of the two Vitesse VSC8488 XAUI-to-serial transceivers that in turn control two SFP modules each. Hidden behind an air duct is a Xilinx Spartan-6 FPGA, configured to act as chassis manager.

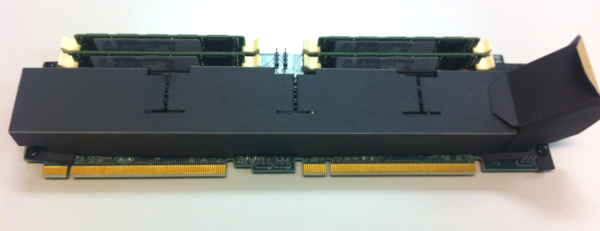

Each pair of PCIe slots contains what turns this chassis into a server cluster: an EnergyCard (EC). Each EnergyCard contains four SoCs, each with one DIMM slot. An EnergyCard thus contains four server nodes, with each node running on a quad-core ARM CPU.

The chassis can hold as many as 12 EnergyCards, so currently up to 48 server nodes. That limit is only imposed by physical space constraints, as the fabric supports up to 4096 nodes, leaving the potential for significant expansion if Calxeda maintains backwards compatibility with their existing ECs.

The system we received can only hold 6 ECs; one EnergyCard slot is lost because of the SATA cabling, giving us six ECs with four server nodes each, or 24 server nodes in total. Some creative effort has been made to provide air baffles that direct the air through the heat sinks on the ARM chips.
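The node counts quoted above follow from simple multiplication. As a minimal sketch (the constant and function names below are our own, purely illustrative, and not part of any Calxeda tooling), the arithmetic can be written out as follows:

```python
# Illustrative arithmetic only; names are hypothetical, figures come from the article.
NODES_PER_CARD = 4        # four EnergyCore SoCs (server nodes) per EnergyCard
FABRIC_NODE_LIMIT = 4096  # maximum number of nodes the fabric supports


def node_count(cards: int) -> int:
    """Return the number of server nodes provided by `cards` EnergyCards."""
    if cards < 0:
        raise ValueError("card count cannot be negative")
    return cards * NODES_PER_CARD


full_chassis = node_count(12)  # a chassis populated with 12 ECs
review_unit = node_count(6)    # the six ECs in the system we received
# How many four-node cards it would take to reach the fabric limit.
cards_at_fabric_limit = FABRIC_NODE_LIMIT // NODES_PER_CARD

print(full_chassis, review_unit, cards_at_fabric_limit)
```

The last figure only illustrates how far the fabric limit sits above what a single chassis can physically hold; it says nothing about how such a system would be built.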

The air baffles are made of a finicky plastic-coated paper, glued together and placed on the EC with plastic nails, making it difficult to remove them from an EC by hand. Each EC can be freely placed on the motherboard, with the exception of the Slot 0 card that needs a smaller baffle.

Every EnergyCard is thus fitted with four EnergyCore SoCs, each having access to one miniDIMM slot and four SATA connectors. In our configuration each miniDIMM slot was populated with a Netlist 4GB low-voltage (1.35V instead of 1.5V) ECC PC3L-10600W-9-10-ZZ DIMM. Every SoC provided was hooked up to a Samsung 256GB SSD (MZ7PC256HAFU, comparable to Samsung’s 310 Series consumer SSDs), filling up every disk slot in the chassis. We removed those SSDs and used our iSCSI SAN to boot the server nodes. This way it was easier to compare the system's power consumption with other servers.

Previous EC versions had a microSD slot per node at the back, but in our version it has been removed. The cards are topology-agnostic; each node is able to determine where it is placed. This enables you to address and manage nodes based on their position in the system.
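To make the idea of position-based addressing concrete, here is a minimal sketch. The numbering scheme is an assumption made for illustration (slot number times four, plus the SoC's index on its card); we do not know how Calxeda's management software actually numbers nodes, and `NodePosition`, `node_index`, and `node_position` are hypothetical names.

```python
from typing import NamedTuple

NODES_PER_CARD = 4  # four SoCs per EnergyCard (from the article)


class NodePosition(NamedTuple):
    """Physical location of a node: EnergyCard slot and SoC index on that card."""
    slot: int
    soc: int


def node_index(pos: NodePosition) -> int:
    """Map a physical position to a flat node number (hypothetical scheme)."""
    if pos.slot < 0 or not 0 <= pos.soc < NODES_PER_CARD:
        raise ValueError(f"invalid position: {pos}")
    return pos.slot * NODES_PER_CARD + pos.soc


def node_position(index: int) -> NodePosition:
    """Inverse of node_index: recover slot and SoC index from a node number."""
    if index < 0:
        raise ValueError(f"invalid node index: {index}")
    slot, soc = divmod(index, NODES_PER_CARD)
    return NodePosition(slot, soc)


# Example: the third SoC (index 2) on the card in slot 5.
example = node_index(NodePosition(slot=5, soc=2))
print(example, node_position(example))
```

Under this assumed scheme, moving a card to a different slot changes the node numbers it reports, which is the behavior you would want if management is organized by position rather than by card identity.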

99 Comments


Kurge - Wednesday, March 13, 2013

Yeah, should have had two teams, each with the goal to optimize on each platform. The Xeon team would not (lol) load up 24 VMs to serve the same web app. It's silly. Go bare metal in that use case.

There will be different needs for different cases. The "let's load up a bunch of VMs" approach is useful to cloud providers and in other cases, but not for "I want to feed this app to as many users as possible".

dig23 - Tuesday, March 12, 2013

Interesting article and a great first effort, but it felt a bit outdated on both the Atom and the ARM front. I am not blaming you, just saying.

JarredWalton - Tuesday, March 12, 2013

Outdated in what sense? No one else has really made a serious attempt to review the Calxeda stuff, and while there are better Atom options out there, as Johan notes we were unable to get any in-house in time for testing. Or do you mean Calxeda's use of Cortex-A9 is outdated? If so, that's more a case of laying the groundwork, I think. Assuming they have their A15 option be backwards compatible with the current system (e.g. just get a new set of cards with the updated SoCs), that would be very cool.

JohanAnandtech - Wednesday, March 13, 2013

I can only agree with Jarred. There are no A15 server chips AFAIK, and unless I have missed a launch, I think the Atom N2800 is not outdated at all (Dec 2011).

aryonoco - Wednesday, March 13, 2013

This was a fabulous and most informative write up. You answered so many of my questions with this article. Excellent job covering an area that no one else is, and also kudos for running such great benchmarks.

This really is tech journalism at its best. Thank you Johan, and thank you Anand for employing such high-quality writers.

We all know how memory constrained the ARM A9 is. Even something like Krait would solve a lot of A9's traditional weak areas. And yet, it looks like Calxeda makes sense in enough niches to sustain their R&D and development efforts. Low-to-medium traffic web hosting, media streaming and storage. Each one of those areas is a sizeable market and the Calxeda solution offers enough to be seriously considered in these markets.

And when one thinks about how many years of x86 optimisation has gone into the toolchain in things like the gcc, one realises the potential that lies ahead for ARM in this market. ARM's future roadmap is well known, next is Cortex A15 and then Cortex A57. Meanwhile there will be more software optimisation, and the management/deployment side will also improve. With all these in mind, I think it's more than conceivable that ARM will grab up to 20% marketshare in the server market by 2015.

JohanAnandtech - Wednesday, March 13, 2013

Thanks! Good summary... and indeed 20% marketshare is not impossible. The real question is whether Intel will give the Atom its long overdue architecture update, or whether Haswell will put some pressure on from above. Exciting times.

beginner99 - Wednesday, March 13, 2013

Isn't it much easier to administer 24 virtual servers than 24 physical ones (cost of personnel)? When all servers have the same workload it looks good for ARM, but the virtualized Intel environment easily wins if some servers get a lot more requests than others, meaning too much for one ARM SoC to handle. The tested scenario is basically the best one could ever hope for the ARM server and pretty unrealistic (same load for all servers). That's fine, but then also post worst-case scenarios... The Intel server is a lot more flexible.

hardwaremister - Wednesday, March 13, 2013

I completely agree with the other readers that this writing is just absolutely superb. Fantastic novel job Johan.

However, I also agree with the above commenter: a big part of the benefit of virtualizing a "fat" core system is being able to properly utilize the resources of the machine across VMs. By equally loading "tiny tiles", the obvious advantage of the inherent load balancing of a virtualized infrastructure completely disappears.

Under the current "fat" VM infrastructure you can accommodate individual VMs with heterogeneous loading levels, with extra provisioning in the resource pool.

That is simply not the case for these tests, which pit an army of individual machines against many VMs virtualized on a few "fat" CPUs.

I don't mean to be overcritical, but this is a proper apples vs oranges comparison.

bobbozzo - Wednesday, March 13, 2013

A lot of shared hosting ISPs use lightweight virtualization with Linux or BSD "Containers". I would like to see you re-benchmark with those on both servers instead of using VMs.

You should see higher performance vs full virtualization. I'm not sure how it would affect the ARM performance, but it shouldn't hurt much, and there is more potential for better load sharing if some sites are busier than others.

Jambe - Wednesday, March 13, 2013

Surprising, indeed! Thoroughgoing as usual, and excellently written.