Calxeda's ARM server tested

by Johan De Gelas on March 12, 2013 7:14 PM EST

Posted in: IT Computing, Arm, Xeon, Boston, Calxeda, server, Enterprise CPUs

Our Real World Test

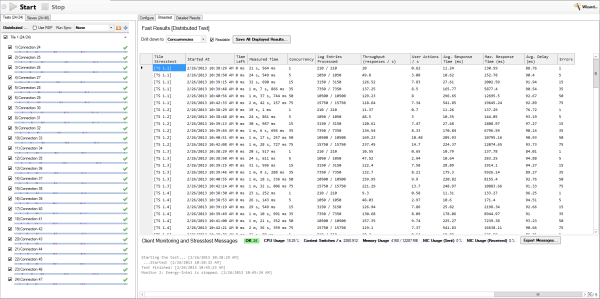

We set up two systems. The Xeon system was tested with two different pairs of Xeon CPUs, 128GB of RAM, and ESXi 5.1. We created 24 virtual machines on top of the Xeon server. Each one runs a phpBB (Apache2, MySQL) website with four virtual CPUs and 4GB of RAM. The website uses about 8GB of disk space. We simulate up to 75 concurrent users that send new requests every 0.6 to 2.4 seconds.

The Boston Viridis server gets the same workload, but instead of using virtual machines, we used the 24 physical server nodes.

Since the redesign in late 2010, our vApus stress testing framework has been well suited to hitting clusters (virtualized or not) with lots of workloads in parallel. A quad 7400 server is able to spawn 24 testing clients. The server is connected to our Dell PowerConnect 8024F switch (10Gbit Ethernet), which is connected to the test servers.

vApus hitting 24 web servers in parallel

This way we can simulate a web hosting environment where tens of websites are hit by a few thousand visitors per second. That might not sound very impressive, but those few thousand requests per second result in a web environment with 100 million hits per day.
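The relationship between a per-second request rate and a daily hit count is simple arithmetic, and a minimal sketch makes it easy to check. The function names and the sample rates below are hypothetical and chosen only for illustration, and the sketch assumes the rate is sustained evenly over a full 24 hours, which the article does not claim.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def hits_per_day(requests_per_second: float) -> float:
    """Daily hit count if the given rate were sustained for a full day (assumption)."""
    return requests_per_second * SECONDS_PER_DAY


def rate_for_daily_hits(daily_hits: float) -> float:
    """Sustained requests per second needed to reach a given daily hit count."""
    return daily_hits / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical sustained rates, not measurements from the article.
    for rate in (1_000, 2_000, 3_000):
        print(f"{rate:>5} req/s -> {hits_per_day(rate) / 1e6:.1f} million hits/day")
    print(f"100 million hits/day ~ {rate_for_daily_hits(100e6):.0f} req/s sustained")
```

Under this constant-rate assumption, 100 million hits per day corresponds to roughly 1,160 requests per second. Real traffic is unlikely to be spread evenly over the day, so peak rates would presumably be higher than that average.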

Since we made sure that our web server serves up some nice pictures (png), there is some significant network traffic going on. We measured peaks of up to 8Gbit/s, with typical network traffic being about 4 to 6Gbit/s.

99 Comments


Gigaplex - Tuesday, March 12, 2013

I wouldn't call that a spectacular performance per watt ratio. It's a bit faster than the Xeon under a cherry-picked benchmark (much slower under others), and is only marginally lower power. Best case it's an 80% improvement over Sandy Bridge with regards to performance per watt, and Atom wasn't represented. Considering all the hype, I was expecting something a little more... exciting. Ignoring Ivy Bridge improvements, Haswell isn't far off.

spronkey - Tuesday, March 12, 2013

Yeah... I agree. It also only seems to really come into its own in high concurrency. The Xeons idle quite similarly in terms of power - what happens if you compare it to more Xeon cores? It seems like on a per core basis, Intel still has the advantage on both fronts?

spronkey - Tuesday, March 12, 2013

I would also point out that the A15 has already been compared against Sandy and Ivy cores and come up short in performance per watt, so I'm very interested to see what the next step for these ARM node servers is.

JohanAnandtech - Wednesday, March 13, 2013

I warned against the hype in the first sentences. :-) ARM CPUs are still rather weak and not a good match for most applications. However, the fact that we could actually find a case where they do a lot better than the current Xeon systems was surprising to me.

wsw1982 - Wednesday, April 3, 2013

No, it should not surprise anyone, given how picky the use case is. I mean, I do think you can find a use case where the ARM11 outperforms the Xeon, e.g. serving 1 web request per hour :)

LogOver - Tuesday, March 12, 2013

24 servers ran inside 24 VMs on the Xeon server, while for the ARM server you used the 24 physical server nodes... Hmm... That does not seem like an apples-to-apples comparison to me. Why not compare, for example, 16 physical nodes on both the Xeon and ARM servers?

haplo602 - Wednesday, March 13, 2013

And how do you slice the Xeon server into 16 physical nodes? It does not support any kind of HW partitioning that I am aware of. On the other hand the Calxeda machine is a cluster by design. If you try 16 Xeon nodes you'll go through the roof with power.

Colin1497 - Wednesday, March 13, 2013

I think the question is this: Was 24 VMs optimal for the Xeon? Since we're virtualizing the Xeon, why 24? Just because you had 24 ARM nodes? Would the Xeon have done better with 4 VMs? Or 16? Or 1000? 24 seems arbitrary.

JohanAnandtech - Wednesday, March 13, 2013

We tested with 16, as I briefly mentioned in the conclusion. The 2650L did 170 responses/s per VM, or about 40% better. Total throughput was 2.7k/s, while with 24 it was 2.9k/s. The flexibility that the Xeon has to reduce the number of VMs if higher throughput is necessary is definitely an advantage, but the performance numbers are not that different with different VM configs.

Kurge - Wednesday, March 13, 2013

How about with 0 VMs? Just run it on the metal.