A Month with Apple's Fusion Drive

by Anand Lal Shimpi on January 18, 2013 9:30 AM EST - Posted in

- Storage

- Mac

- SSDs

- Apple

- SSD Caching

- Fusion Drive

Putting Fusion Drive’s Performance in Perspective

Benchmarking Fusion Drive is a bit of a challenge since it prioritizes the SSD for all incoming writes. If you don’t fill the Fusion Drive up, you can write tons of data to the drive and it’ll all hit the SSD. If you do fill the drive up and test with a dataset smaller than the SSD's 4GB write buffer, you’ll once again just measure SSD performance.
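To make the write-placement behavior concrete, here is a minimal Python sketch of an SSD-first write policy. It is a simplified model, not Apple's implementation: it assumes the SSD is otherwise full, so only the 4GB write buffer can absorb new data, treats 4GB as 4 × 1024³ bytes, and ignores any background migration during the run. The function name `place_writes` and the uniform 14MB file size in the usage note are hypothetical.

```python
GB = 1024 ** 3
MB = 1024 ** 2


def place_writes(file_sizes, ssd_buffer_bytes=4 * GB):
    """Return 'ssd' or 'hdd' for each write under a simplified SSD-first policy.

    Hypothetical model: the SSD is otherwise full, so only the reserved
    write buffer can take new data, and no migration frees space mid-run.
    """
    remaining = ssd_buffer_bytes
    placements = []
    for size in file_sizes:
        if size <= remaining:
            # Still room in the SSD write buffer: the write lands on the SSD.
            remaining -= size
            placements.append("ssd")
        else:
            # Buffer exhausted: this and every later write goes to the HDD.
            remaining = 0
            placements.append("hdd")
    return placements


placements = place_writes([14 * MB] * 703)
first_hdd = placements.index("hdd") + 1  # 1-based index of the first spill
```

With 703 hypothetical 14MB files, the first 292 land on the SSD and file 293 is the first to spill to the HDD. The real export's spill point (photo 297) depends on the actual TIFF sizes.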

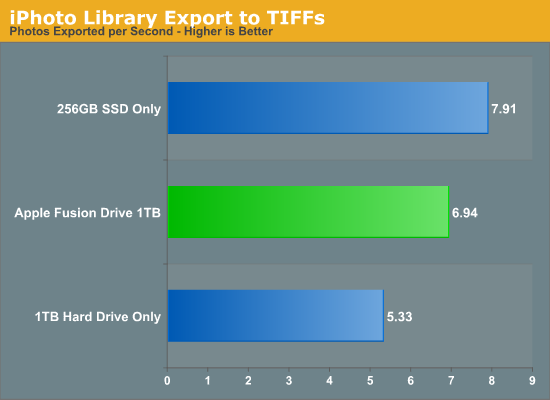

In trying to come up with a use case that spanned both drives, I stumbled upon a relatively simple one. By now my Fusion Drive was over 70% full, which meant the SSD was running as close to capacity as possible (save its 4GB buffer). I took my iPhoto library of 703 photos and simply exported all of them as TIFFs. The resulting files were big enough that by the time I hit photo 297, the 4GB write buffer on the SSD was full and all subsequent exported photos were directed to the HDD instead. I timed the process, then compared it to results from an HDD partition on the iMac as well as from a Samsung PM830 SSD connected via USB 3.0 to simulate a pure SSD configuration. The results are a bit biased in favor of the HDD-only configuration since the writes are mostly sequential:

The breakdown accurately sums up my Fusion Drive experience: nearly halfway between a hard drive and a pure SSD configuration. In this particular test the gains don't appear all that dramatic, but again that's mostly because we're looking at relatively low queue depth sequential transfers. The FD/HDD gap would grow for less sequential workloads. Unfortunately, I couldn't find a good application use case that generates 4GB+ of pseudo-random data in a repeatable enough fashion to benchmark.

If I hammered on the Fusion Drive enough with constant, very large sequential writes (up to 260GB for a single file), I could back the drive into a corner where it would no longer migrate data to the SSD without a reboot (woohoo, I sort of broke it!). I suspect this is a bug that isn't triggered by normal automated testing (for obvious reasons), but it did create an interesting situation that I could exploit for testing purposes.

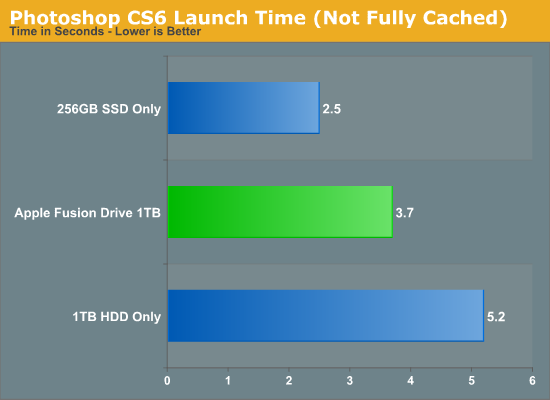

Although launching the pre-installed iMac applications I used frequently showed that they were still located on the SSD, this wasn't true for some of the latecomers. In particular, Photoshop CS6 remained partially on the SSD and partially on the HDD. It ended up being a good benchmark for pseudo-random read performance on Fusion Drive where the workload is too big (or in this case, artificially divided) to fit on the SSD partition alone. I measured Photoshop launch time on the Fusion Drive, on an HDD-only partition and on a PM830 connected via USB 3.0. The results, once again, mirrored my experience with the setup:

Fusion Drive delivers a noticeable improvement over the HDD-only configuration, speeding up launch time by around 40%. An SSD-only configuration, however, cuts launch time by more than half. Note that if Photoshop were among my most frequently used applications, it would get moved over to the SSD exclusively and deliver performance indistinguishable from a pure SSD configuration. In this case it hadn't been, because my 1.1TB Fusion Drive was nearly 80% full, which brings me to a point I made earlier:

The Practical Limits of Fusion Drive

Apple's Fusion Drive is very aggressive about writing to the SSD; however, the more data you have, the more conservative the algorithm seems to become. This isn't really shocking, but it's worth pointing out. At lower total drive utilization the SSD became home to virtually everything I needed, but as soon as my application needs outgrew what FD could easily accommodate, the platform became a lot pickier about what would get moved onto the SSD. This is very important to keep in mind. If 128GB of solid state storage isn’t enough for all of your frequently used applications, data and OS to begin with, you’re going to have a distinctly more HDD-like experience with Fusion Drive. To simulate this I took my 200GB+ MacBook Pro image and moved it over to the iMac. Note that most of this 200GB was applications and data that I actually used regularly.

By the end of my testing, I was firmly in the category of users who need more solid state storage. Spotlight searches took longer than on a pure SSD configuration, not all application launches were instant, adding photos to iPhoto from Safari took longer, and so on. Fusion Drive may be good, but it's not magic. If you realistically need more than 128GB of solid state storage, Fusion Drive isn't for you.
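One way to picture why a working set larger than the SSD leads to a more HDD-like experience is a greedy, frequency-ranked tiering sketch. This is an illustrative assumption, not Apple's algorithm: items are ranked by a hypothetical access count and kept on a 128GB SSD, minus the 4GB write buffer, until the space runs out. Fusion Drive itself works at a finer granularity than whole applications (which is how Photoshop ended up split across both drives); the `Item` type, its fields, `choose_ssd_residents` and the sample sizes below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Item:
    name: str
    size_gb: float
    accesses: int  # hypothetical recent-access count used for ranking


def choose_ssd_residents(items, ssd_capacity_gb=128, reserve_gb=4):
    """Greedy frequency-ranked tiering sketch (not Apple's published algorithm).

    Visits items from most to least accessed and keeps each one on the SSD
    if it still fits in the usable capacity (capacity minus write buffer).
    Items that don't fit are left on the HDD, even if they are used often.
    """
    budget = ssd_capacity_gb - reserve_gb
    residents = []
    for item in sorted(items, key=lambda i: i.accesses, reverse=True):
        if item.size_gb <= budget:
            residents.append(item.name)
            budget -= item.size_gb
    return residents


items = [
    Item("OS", 20, 1000),
    Item("Mail", 15, 800),
    Item("iPhoto library", 60, 400),
    Item("VMs", 50, 200),
    Item("Photoshop", 5, 100),
]
residents = choose_ssd_residents(items)
```

With these sample numbers, the OS, Mail, the iPhoto library and Photoshop fit, but the 50GB of VMs stay on the HDD despite regular use, because only 29GB of budget remains when their turn comes. Once the regularly used data exceeds the SSD, something you care about inevitably lives on the slow tier.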

127 Comments

guidryp - Friday, January 18, 2013

These claims about the effort of setting up an SSD/HD combo are getting quite silly. There is essentially ZERO time difference between setting up a partitioned SSD/HD combo and Fusion. Your payback would be on Day 1.

The only effort is simply deciding which partition to load new material on. That decision takes what? Microseconds.

It is as simple as install OS/Apps on SSD, Media HD. Vs Install OS/Apps/Media on Fusion. The effort is essentially the same.

But that simple manual partition will perform better, create less system thrashing and less wear on all your drives.

Zink - Friday, January 18, 2013

But then you end up with an SSD filled with no-longer-relevant data, and you need to figure out how to free up space again. A combo drive takes care of that for you and keeps the SSD filled to the brim with most of the data that gets used. You can download any games, start any big video editing project, and know that you are getting 50%-100% of the benefit of the SSD without worrying about managing segregated data. With a segregated setup you end up playing games from the HDD or editing video files that are on the HDD, and sometimes see 0% of the benefit of the SSD. Fusion seems like the future.

KitsuneKnight - Friday, January 18, 2013

If you can divide your data up as OS, Apps, and Media, and OS + Apps fits on the SSD, then sure, it's not too bad. Unfortunately, my Steam library is approximately 250GB... That alone would fill up most SSDs out there. And that's not even counting all my non-Steam games, which would help push almost any SSD towards being totally full. If I'd bought too many recent games, it'd likely be quite a bit larger than that (AAA games seem to range from 10-30GB these days).

Unless you sprang for a 500GB SSD (which aren't exactly cheap, even today), you'd have quite a pickle on your hands, likely having to move most of the library manually to the HDD (which is a bit of a pain with Steam). That means it's suddenly much more complicated than OS/Apps on the SSD and Media on the HDD, especially since SSDs massively improve the load times of large games (unlike the impact they have on media).

And then there's the other examples I've already given: the artist I know that works on absurdly massive PSDs, and has many terabytes of them (what's the point of a SSD if it doesn't benefit your primary usage of a computer?), as well as my situation with VMs on my non-gaming machine (which actually has a SSD + HDD setup right now). A lot of people could probably do the divide you're talking about, but likely even more people could fit all their data in either a 128 or 256 GB SSD.

name99 - Friday, January 18, 2013

Then WTF are you complaining about? You can still buy an HD-only Mac mini and add your own USB 3 SSD as a boot disk.

Or you can buy a Fusion Mac mini and split the two drives apart.

It's not enough that things can be done your way; you ALSO want everyone else, who wants a simple solution, to have to suffer?

Mr Perfect - Friday, January 18, 2013

Intel SRT is useful for everyone; there's no reason to look down on it. Could I sit there and manually move files back and forth between the SSD and HD? Sure. But why? Seriously, I have better things to do with my time than move the program of the week around between storage media. Last week I was using Metro 2033, this week it's World of Tanks. Next week I might finish one of those playthroughs of D:HR or Portal 2 that I left hanging. SRT takes care of all of that. This is 2013; an enthusiast-class workstation should damn well be able to handle something as simple as caching, and it can. Enterprise-class servers have been doing it for some time, so why isn't it good enough for a gaming rig?

My one complaint with RST is the cache size limit. Why would Intel even impose a limit?

EnzoFX - Saturday, January 19, 2013

You're framing it in your own way so that only your solution works. Fail. Unnecessary stressing of the SSD? The better argument for most people would be putting that SSD to good use, not trying NOT to use it. It further isn't simply about putting the files where they go and then being done with it. Files are changed, updated, and, if you're on multiple drives, copied back and forth. Some people don't want to deal with that. Actually, no one should want to deal with that. There are only barriers, with every person having their own threshold for good solutions. Is it that hard to understand?

lyeoh - Saturday, January 19, 2013

Do you manually control the data in your CPU's 1st and 2nd level caches too? There are plenty of decent caching algorithms created by very smart people. If the algorithms were that bad, your CPU would be running very slowly.

There should be no need for you to WASTE TIME moving crap around from drive to drive. The OS can know how often you use stuff, and whether the accesses are sequential, random, or slow.

If Windows 8's Storage Spaces were more like Fusion Drive out of the box (or even better), we geeks would be more impressed by Windows 8.

Feldur - Monday, June 29, 2015

Having designed and built both computers and operating systems, I qualify as not naive. I'm interested, therefore, in your assertion that because I prefer letting the Fusion Drive do the work, I must be lazy. You're making a judgement about how I should spend my time: that the best investment of my time is to shuffle files about (non-trivial if I want the level of granularity a Fusion Drive can offer, too) rather than developing software or playing with my dog. It's interesting that you think you know me that well, regardless of the fact that you're dead wrong.

How do you reconcile that?

StormyParis - Friday, January 18, 2013

The device is technically nice; however, the price is wayyy too high:

Apple's 128GB SSD + 2TB HDD "Fusion Drive" is about $450 ($400 as an upgrade)

A regular 256GB SSD is $170

A regular 3TB HD is $150

Regular equivalent for Apple's price: 256GB SSD + 2x 3TB HDD = $470

You can get twice the SSD storage, and 3 times the HDD storage, for about the Apple price. This will take up more physical space, but also offer you way more storage space, both on the SSD side (plenty of space for your OS, apps, and live data files) and HDD space (3TB + 3TB backup, or 6TB JBOD for your archives and media)

jeffkibuule - Friday, January 18, 2013

Hence the DIY route.