The Tegra 4 GPU, NVIDIA Claims Better Performance Than iPad 4

by Anand Lal Shimpi on January 14, 2013 6:13 PM EST

At CES last week, NVIDIA announced its Tegra 4 SoC featuring four ARM Cortex A15s running at up to 1.9GHz and a fifth Cortex A15 running at between 700 and 800MHz for lighter workloads. Although much of CEO Jen-Hsun Huang's presentation focused on the improvements in CPU and camera performance, GPU performance should see a significant boost over Tegra 3.

The big disappointment for many was that NVIDIA maintained the non-unified architecture of Tegra 3, and won't fully support OpenGL ES 3.0 with the T4's GPU. NVIDIA claims the architecture is better suited for the type of content that will be available on devices during the Tegra 4's reign.

Despite the similarities to Tegra 3, components of the Tegra 4 GPU have been improved. While we're still a bit away from a good GPU deep-dive on the architecture, we do have more details than were originally announced at the press event.

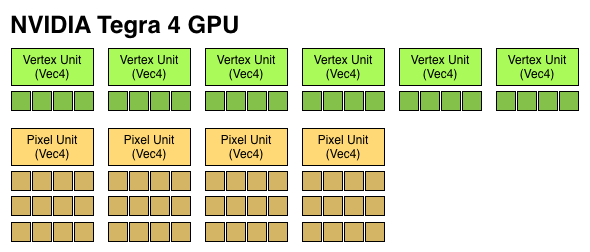

Tegra 4 features 72 GPU "cores", which are really individual components of Vec4 ALUs that can work on both scalar and vector operations. Tegra 2 featured a single Vec4 vertex shader unit (4 cores), and a single Vec4 pixel shader unit (4 cores). Tegra 3 doubled up on the pixel shader units (4 + 8 cores). Tegra 4 features six Vec4 vertex units (FP32, 24 cores) and four 3-deep Vec4 pixel units (FP20, 48 cores). The result is 6x the number of ALUs as Tegra 3, all running at a max clock speed that's higher than the 520MHz NVIDIA ran the T3 GPU at. NVIDIA did hint that the pixel shader design was somehow more efficient than what was used in Tegra 3.
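The core counts above follow from simple arithmetic, sketched below. This is only an illustration: the helper name `gpu_cores` and the `pixel_depth` parameter are made up for this example, and it assumes that Tegra 2 and Tegra 3 pixel units are one Vec4 ALU deep, which the 4 and 8 core figures imply but NVIDIA has not spelled out.

```python
# Each Vec4 ALU has four lanes, and NVIDIA's marketing counts every lane as a "core".
LANES_PER_VEC4 = 4


def gpu_cores(vertex_units, pixel_units, pixel_depth=1):
    """Return (vertex_cores, pixel_cores, total) for a non-unified GeForce ULP layout.

    pixel_depth is the number of Vec4 ALUs stacked in each pixel unit
    (3 for Tegra 4's "3-deep" units; assumed to be 1 for Tegra 2 and Tegra 3).
    """
    vertex_cores = vertex_units * LANES_PER_VEC4
    pixel_cores = pixel_units * pixel_depth * LANES_PER_VEC4
    return vertex_cores, pixel_cores, vertex_cores + pixel_cores


# Unit counts as described in the paragraph above.
configs = {
    "Tegra 2": gpu_cores(vertex_units=1, pixel_units=1),
    "Tegra 3": gpu_cores(vertex_units=1, pixel_units=2),
    "Tegra 4": gpu_cores(vertex_units=6, pixel_units=4, pixel_depth=3),
}

for name, (vertex, pixel, total) in configs.items():
    print(f"{name}: {vertex} vertex + {pixel} pixel = {total} cores")

# Ratio of total ALU lanes, Tegra 4 relative to Tegra 3.
ratio = configs["Tegra 4"][2] / configs["Tegra 3"][2]
print(f"Tegra 4 vs Tegra 3 ALU ratio: {ratio:.0f}x")
```

Under these assumptions the totals come out to 8, 12 and 72 cores, which matches the 6x ALU increase quoted for Tegra 4 over Tegra 3.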

If we assume a 520MHz max frequency (where Tegra 3 topped out), a fully featured Tegra 4 GPU can offer more theoretical compute than the PowerVR SGX 554MP4 in Apple's A6X. The advantage comes as a result of a higher clock speed rather than larger die area. This won't necessarily translate into better performance, particularly given Tegra 4's non-unified architecture. NVIDIA claims that at final clocks, it will be faster than the A6X both in 3D games and in GLBenchmark. The leaked GLBenchmark results are apparently from a much older silicon revision running nowhere near final GPU clocks.

Mobile SoC GPU Comparison

| | GeForce ULP (2012) | PowerVR SGX 543MP2 | PowerVR SGX 543MP4 | PowerVR SGX 544MP3 | PowerVR SGX 554MP4 | GeForce ULP (2013) |
|---|---|---|---|---|---|---|
| Used In | Tegra 3 | A5 | A5X | Exynos 5 Octa | A6X | Tegra 4 |
| SIMD Name | core | USSE2 | USSE2 | USSE2 | USSE2 | core |
| # of SIMDs | 3 | 8 | 16 | 12 | 32 | 18 |
| MADs per SIMD | 4 | 4 | 4 | 4 | 4 | 4 |
| Total MADs | 12 | 32 | 64 | 48 | 128 | 72 |
| GFLOPS @ Shipping Frequency | 12.4 GFLOPS | 16.0 GFLOPS | 32.0 GFLOPS | 51.1 GFLOPS | 71.6 GFLOPS | 74.8 GFLOPS |
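The GFLOPS row can be reproduced, at least approximately, with a short calculation. The sketch below assumes the usual convention that one multiply-add (MAD) counts as two floating-point operations per clock. The function names are illustrative only, and the 520MHz figure for Tegra 4 is the assumption stated in the text, not a confirmed shipping clock.

```python
FLOPS_PER_MAD = 2  # assumption: one multiply-add counts as two floating-point ops


def peak_gflops(total_mads, clock_mhz):
    """Theoretical peak throughput in GFLOPS for a given MAD count and clock."""
    return total_mads * FLOPS_PER_MAD * clock_mhz / 1000.0


def implied_clock_mhz(total_mads, gflops):
    """Back out the clock (MHz) that a table entry implies under the same convention."""
    return gflops * 1000.0 / (total_mads * FLOPS_PER_MAD)


# (total MADs, GFLOPS) copied from the comparison table above.
table = {
    "Tegra 3": (12, 12.4),
    "A5": (32, 16.0),
    "A5X": (64, 32.0),
    "Exynos 5 Octa": (48, 51.1),
    "A6X": (128, 71.6),
    "Tegra 4": (72, 74.8),
}

for soc, (mads, gflops) in table.items():
    clock = implied_clock_mhz(mads, gflops)
    print(f"{soc}: {mads} MADs, {gflops} GFLOPS, implied clock ~{clock:.0f} MHz")

# Forward calculation at the 520MHz clock assumed in the text.
tegra3 = peak_gflops(12, 520)
tegra4 = peak_gflops(72, 520)
print(f"Tegra 3 @ 520MHz: {tegra3:.2f} GFLOPS")
print(f"Tegra 4 @ 520MHz: {tegra4:.2f} GFLOPS")
```

At 520MHz the forward calculation gives 12.48 and 74.88 GFLOPS, so the table's 12.4 and 74.8 appear to be truncated rather than rounded. The same arithmetic shows why Tegra 4 can edge out the A6X on paper despite having fewer MADs: the difference is entirely in the clock.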

Tegra 4 does offer some additional enhancements over Tegra 3 in the GPU department. Real multisampling AA is finally supported as well as frame buffer compression (color and z). There's now support for 24-bit z and stencil (up from 16 bits per pixel). Max texture resolution is now 4K x 4K, up from 2K x 2K in Tegra 3. Percentage-closer filtering is supported for shadows. Finally, FP16 filter and blend is supported in hardware. ASTC isn't supported.

If you're missing details on Tegra 4's CPU, be sure to check out our initial coverage.

59 Comments


alexvoda - Monday, January 14, 2013 - link

Adding the Apple A6 and the Samsung Exynos 5 Dual would be nice for comparison.

StormyParis - Monday, January 14, 2013 - link

Do we know what percentage of tablet users actually play demanding 3D games? Out of 5-6 people off the top of my head, no one does.

BugblatterIII - Monday, January 14, 2013 - link

Well I do. Android is all about choice; if you want killer 3D you should be able to have it. If you don't need it then you can buy a device more to your taste.

CeriseCogburn - Sunday, January 20, 2013 - link

Worse yet, the Apple fanboys don't play 3D, they might play a 1932xx oh sorry 1999 Syndicate clone that ran on a clamshell... Or they bootcamp and have suckage hardware to deal with for their dollars spent, usually something the rabid AMD fanboy base would scream about - where are they? Oh that's right, if it's nVidia getting bashed that's them doing it - so no using their 1,000% always overstated talking point price/perf for now....

What the appleheads do is bask in the glory, true or not, that "they could" play some 3D if they "wanted to". LOL

This site did a tegra3 vs the then current Apple gaming comparison and apple did not win - it lost in fact.

Apple looked worse, didn't perform any better, had fewer features active -

Apple lost to tegra3 in gaming, right here at this site.. I know the article is still up as usual.

gbanfalvi - Wednesday, January 23, 2013 - link

You're not talking about this one then, are you :D

http://www.anandtech.com/show/5163/asus-eee-pad-tr...

Quizzical - Monday, January 14, 2013 - link

There are a variety of claims around the Internet that Tegra 4 supports Direct3D 11 and OpenGL 4. Those have 6 and 5 programmable pipeline stages, respectively. (The sixth, compute shaders, was added by OpenGL in 4.3.) Direct3D has the whole feature_level business that lets companies claim Direct3D 11 compliance while not supporting anything not already in Direct3D 9.0c, but OpenGL doesn't do that.

If it doesn't have unified shaders, then where do the other pipeline stages get executed? Does everything except fragment shaders run on vertex shaders, since any position computations will desperately need the 32-bit precision to avoid massive graphical artifacting? Or are the more recent pipeline stages simply not supported by Tegra 4 at all, and the claims of OpenGL 4 compliance simply wrong?

And now you're claiming that Tegra 4 doesn't even fully support OpenGL ES 3.0, let alone the full OpenGL? OpenGL ES 3.0 still only has two programmable stages, too.

dgschrei - Tuesday, January 15, 2013 - link

The Direct3D practice you describe has actually been gone since DirectX 10.

On DX9 you could indeed claim your card was DX9-capable even though you only supported a single one of the DX9 features.

Since DX10 MS -thankfully- changed this and you are only allowed to put the DX10/11 stamp onto your hardware if you support the full DX10/11 feature set.

Krysto - Wednesday, January 16, 2013 - link

Are you sure about that? I've seen mobile GPUs say they support DirectX 11.1 but only the Direct3D 9.3 feature set. So they might support other stuff in DirectX up to 11.1, but as far as graphics and gaming go, they'll only support the feature set up to 9.3.

I mean, do you actually expect these chips to support tessellation? Even if they did, it would be useless for mobile devices, as they would use too much power. That's why the OpenGL ES standard exists for mobile, separated from the full OpenGL. You can't use the full features yet without consuming a lot of power, and the same is true for DirectX.

Quizzical - Wednesday, January 16, 2013 - link

Tessellation done right is actually a huge performance optimization.

One classical problem in 3D graphics is how many vertices to use in your models for objects that are supposed to appear curved. Use few vertices and they appear horribly blocky up close. Use a ton of vertices and it's a huge performance hit when you have to process a ton of vertices for objects that are far enough away that a large number of vertices is complete overkill.

Ideally, you'd like to use few vertices for objects that are far away (and most of the time, most objects are far away), many vertices for the few objects that are up close, and interpolate smoothly between them to use not much more than the minimum number of vertices needed to make everything look smooth, regardless of how far away they are. That's exactly what tessellation does.

There is a minimum performance level needed for using tessellation to make sense, though. If you're forced to turn tessellation down far enough that there are obvious jumps in the model whenever the tessellation level changes, it looks terrible. The quad core version of AMD Temash will have plenty of GPU performance for heavy use of tessellation to make sense, and the dual core version might, too. Nvidia Tegra 4 might well have had that level of GPU performance, too, if it supported tessellation.

-----

But you can live without tessellation, and just accept that 3D models will be blocky up close. I'm actually more concerned about missing geometry shaders.

Geometry shaders let you see an entire primitive at once in a programmable shader stage, rather than only one vertex or pixel at a time. They also let you create new primitives or discard old ones on the GPU rather than having to process them all on the CPU and then send them to the GPU. Both of those give you a lot more versatility, and allow you to do a lot of work on the GPU that would otherwise have to be done on the CPU.

And that, I think, should be a huge deal for tablets--more so than for desktops, even. Taking GPU-friendly work and insisting that it has to be done on the CPU instead is not a recipe for good energy efficiency. In a desktop, you may have enough brute force CPU power to make things work even without geometry shaders, albeit inefficiently, but tablets and phones tend not to have a ton of brute force CPU power available.

Ryan Smith - Tuesday, January 15, 2013 - link

Any claims that T4 supports D3D11 or OpenGL 4 would be incorrect. As you correctly note, it has no way to support those missing pipeline stages.