The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST. Posted in Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

Final Words

Whereas I didn't really have anything new to conclude in the original article (Atom Z2760 is faster and more power efficient than Tegra 3), there's a lot to talk about here. We already know that Atom is faster than Krait, but from a power standpoint the two SoCs are extremely competitive. At the platform level Intel (at least in the Acer W510) generally leads in power efficiency. Note that this advantage could just as easily be due to display and other power advantages in the W510 itself and not necessarily indicative of an SoC advantage.

Looking at the CPU cores themselves, Qualcomm takes the lead. It's unclear how things would change if we could include L2 cache power consumption for Qualcomm as we do for Intel (see page 2 for an explanation). I suspect that Qualcomm does maintain the power advantage here though, even with the L2 cache included.

On the GPU side, Intel/Imagination win on power, although the roles reverse on performance, where Adreno 225 holds the advantage. For modern UI performance, the PowerVR SGX 545 is good enough but Adreno 225 is clearly the faster 3D GPU. Intel has underspecced its ultra mobile GPUs for a while, so a lot of the power advantage is due to the lower performing GPU. In 2D/modern UI tests however, Adreno's performance advantage isn't realized and thus Intel's power advantage is still valid.
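To make the power vs. performance distinction concrete, here's a minimal sketch of the arithmetic involved: energy is simply average power multiplied by time. The wattages and durations below are made-up placeholders chosen for illustration, not measurements from this article, and the `energy_joules` helper is a hypothetical name.

```python
def energy_joules(avg_power_w: float, duration_s: float) -> float:
    """Energy consumed = average power (W) x time (s)."""
    return avg_power_w * duration_s


# Hypothetical numbers for illustration only; not measured data.

# Fixed-work 3D benchmark: the slower GPU draws less power but runs longer,
# so its lower power draw does not automatically mean less energy used.
slow_gpu_3d = energy_joules(avg_power_w=1.0, duration_s=60.0)
fast_gpu_3d = energy_joules(avg_power_w=1.5, duration_s=40.0)

# Fixed-duration UI workload: both GPUs keep up with the display, so they
# run for the same time and the lower power draw is a real energy saving.
slow_gpu_ui = energy_joules(avg_power_w=1.0, duration_s=30.0)
fast_gpu_ui = energy_joules(avg_power_w=1.5, duration_s=30.0)

print(f"3D, fixed work:     slow={slow_gpu_3d} J, fast={fast_gpu_3d} J")
print(f"UI, fixed duration: slow={slow_gpu_ui} J, fast={fast_gpu_ui} J")
```

With these placeholder values the two GPUs use the same energy in the fixed-work case, while the lower power part comes out ahead in the fixed-duration case. That's the sense in which a power advantage only holds up when the performance gap isn't being exercised.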

Qualcomm is able to generally push to lower idle power levels, indicating that even Intel's 32nm SoC process is getting a little long in the tooth. TSMC's 28nm LP and Samsung's 32nm LP processes both help silicon built in those fabs drive down to insanely low idle power levels. That being said, it is still surprising to me that a 5-year-old Atom architecture paired with a low power version of a 3-year-old process technology can be this competitive. In the next 9 - 12 months we'll finally get an updated, out-of-order Atom core built on a brand new 22nm low power/SoC process from Intel. This is one area where we should see real improvement. Intel's chances to do well in this space are good if it can manage to execute well and get its parts into designs people care about.

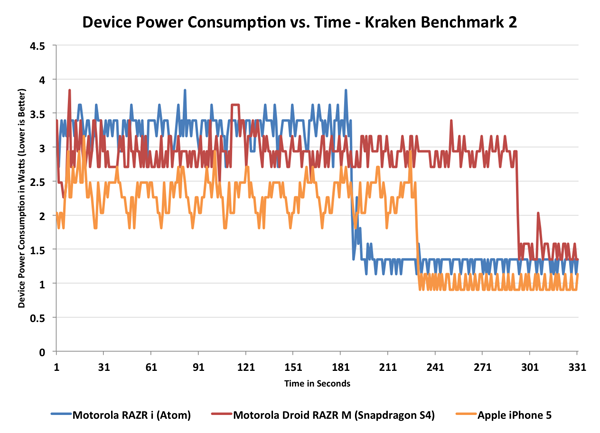

Device level power consumption, from our iPhone 5 review. Look familiar?

If the previous article was about busting the x86 power myth, one key takeaway here is that Intel's low power SoC designs are headed in the right direction. Atom's power curve looks a lot like Qualcomm's, and I suspect a lot like Apple's. There are performance/power tradeoffs that all three make, but they're all being designed the way they should.

The Cortex A15 data is honestly the most intriguing. I'm not sure how the first A15 based smartphone SoCs will compare to Exynos 5 Dual in terms of power consumption, but at least based on the data here it looks like Cortex A15 is really in a league of its own when it comes to power consumption. Depending on the task that may not be an issue, but you still need a chassis that's capable of dissipating 1 - 4x the power of a present day smartphone SoC made by Qualcomm or Intel. Obviously for tablets the Cortex A15 can work just fine, but I am curious to see what will happen in a smartphone form factor. With lower voltage/clocks and a well architected turbo mode it may be possible to deliver reasonable battery life, but simply tossing the Exynos 5 Dual from the Nexus 10 into a smartphone isn't going to work well. It's very obvious to me why ARM proposed big.LITTLE with Cortex A15 and why Apple designed Swift.

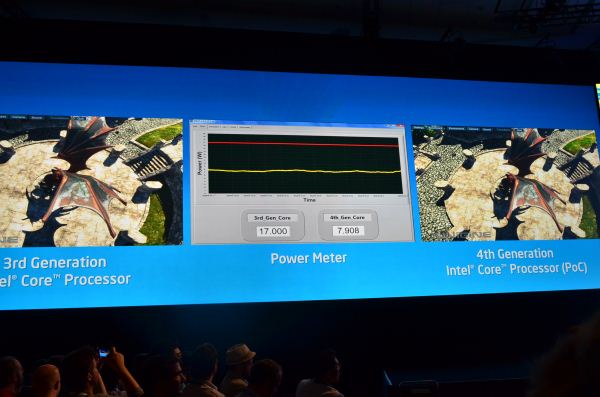

I'd always heard about Haswell as the solution to the ARM problem, particularly in reference to the Cortex A15. The data here, particularly on the previous page, helped me understand exactly what that meant. Under a CPU or GPU heavy workload, the Exynos 5 Dual will draw around 4W. Peak TDP however is closer to 8W. If you remember back to IDF, Intel specifically called out 8W as a potential design target for Haswell. In reality, I expect that we'll see Haswell parts even lower power than that. While it may still be a stretch to bring Haswell down to 4W, it's very clear to me that Intel sees this as a possibility in the near term. Perhaps not at 22nm, but definitely at 14nm. We already know Core can hit below 8W at 22nm. If it can get down to around 4W, then that opens up a whole new class of form factors to a traditionally high-end architecture.

Ultimately I feel like that's how all of this is going to play out. Intel's Core architectures will likely service the 4W and above space, while Atom will take care of everything else below it. The really crazy part is that it's not too absurd to think about being able to get a Core based SoC into a large smartphone as early as 14nm, and definitely by 10nm (~2017) should the need arise. We've often talked about smartphones being used as mainstream computing devices in the future, but this is how we're going to get there. By the time Intel moves to 10nm ultramobile SoCs, you'll be able to get somewhere around Sandy/Ivy Bridge class performance in a phone.

At the end of the day, I'd say that Intel's chances for long term success in the tablet space are pretty good - at least architecturally. Intel still needs a Nexus, iPad or other similarly important design win, but it should have the right technology to get there by 2014. It's up to Paul or his replacement to ensure that everything works on the business side.

As far as smartphones go, the problem is a lot more complicated. Intel needs a good high-end baseband strategy which, as of late, the Infineon acquisition hasn't been able to produce. I've heard promising things in this regard but the baseband side of Intel remains embarrassingly quiet. This is an area where Qualcomm is really the undisputed leader, and Intel has a lot of work ahead of it here. As for the rest of the smartphone SoC, Intel is on the right track. Its existing architecture remains performance and power competitive with the best Qualcomm has to offer today. Both Intel and Qualcomm have architecture updates planned in the not too distant future (with Qualcomm out of the gate first), so this will be one interesting battle to watch. If ARM is the new AMD, then Krait is the new Athlon 64. The difference is, this time, Intel isn't shipping a Pentium 4.

140 Comments

A5 - Friday, January 4, 2013 - link

Even if you just look at the Sunspider (which draws nothing on the screen) power draw, it's pretty clear that the A15 draws more power. There have been a ton of OEMs complaining about A15's power draw, too.

madmilk - Friday, January 4, 2013 - link

Since when did screen resolution matter for CPU power consumption on CPU benchmarks? Platform power might change, yes, but this doesn't invalidate many facts like Cortex-A15 using twice as much power on average compared to Krait, Atom or Cortex-A9.

Wolfpup - Friday, January 4, 2013 - link

Good lord. Do you have some evidence for any of this? If neither Windows nor Android is the "right platform" for ARM, then...are you waiting for Blackberry benchmarks? That's a whole lot of spin you're doing, presumably to fit the data to your preconceived "ARM IS BETTER!" faith.

Veteranv2 - Friday, January 4, 2013 - link

Hahaha, the Nexus 10 has almost 4 times the pixels of the Atom. And the conclusion is it draws more power in benchmarks? Of course, those pixels aren't going to fill itself. Way to make conclusion.

How big was that Intel PR cheque?

iwod - Saturday, January 5, 2013 - link

While i wouldn't say it was a Intel PR, I think they should definitely have left the system level power usage out of the questions. There is no point telling me that a 100" Screen with ARM is using X amount of power compared to 1" Screen with Haswell. It is confusing.

But they did include CPU and GPU benchmarks. So saying it is Intel PR is just trolling.

AlB80 - Friday, January 4, 2013 - link

Architectures with variable length of instruction are doomed. Actually there is only one remains. x86. Intel made the step into a happy past when CISC has an advantage over RISC, when superscalarity was just a theory.

Cortex A57 is coming. ARM cores will easily outperform Atom by effective instruction rate with minimum overhead.

Wolfpup - Friday, January 4, 2013 - link

How is x86 doomed when it has an absolute stranglehold on real PCs, and is now competitive on ultramobile platforms? The only disadvantage it holds is the need for a larger decoder on the front end, which has been proportionally shrinking since 1995.

djgandy - Friday, January 4, 2013 - link

plus effing one! I think some people heard their uni lecturers say something once in 1999 and just keep repeating it as if it is still true!

AlB80 - Friday, January 4, 2013 - link

Shrinking decoder... nice myth. Of course complicated scheduler and ALU dozen impact on performance, but do not forget how decoded instruction queues are filled. Decoder is only one real difference.

1. There is fundamental limits how many variable instructions can be decoded per clock. CISC has an instruction cross-interference at the decoder stage. One logical block should determine a total length of decoded instructions.

2. There is a trick when CISC decoder is disintegrated into 2-3 parts with dedicated inputs, so its looks like a few independent decoders, but each part can not decode any instruction.

Now compare it with RISC.

And as I said, what happens when Cortex can decode 4,5,6,7,8 instructions?

Kogies - Friday, January 4, 2013 - link

Don't be so quick to prophesy the death of a' that. What happens when a Cortex decodes 8 instructions... I don't know, it uses 8W? Also, didn't Apple choose CISC (Intel) chips over RISC (PowerPC)? Interestingly, I believe Apple made the switch to Intel because the PowerPC chips had too high a power premium for mobile computers.