The x86 Power Myth Busted: In-Depth Clover Trail Power Analysis

by Anand Lal Shimpi on December 24, 2012 5:00 PM EST

Wireless Web Browsing Battery Life Test

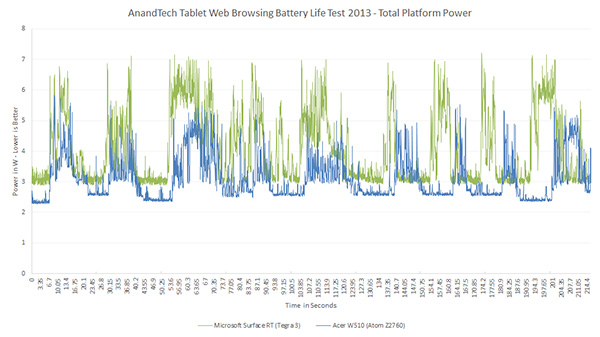

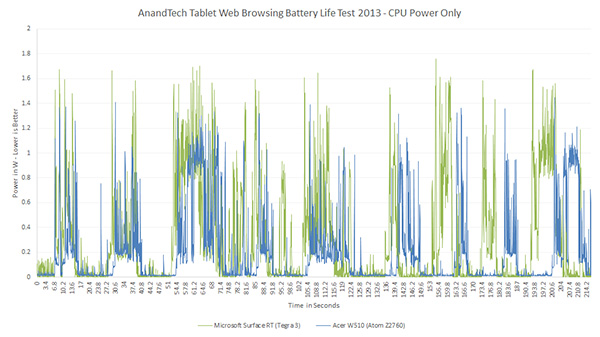

For our final test I wanted to provide a snippet of our 2013 web browsing battery life test to show what its power profile looks like. Remember that the point of this test is to simulate periods of increased CPU and network activity, which could correspond not just to browsing the web but to interacting with your device in general.

Those bursts of power consumption are the direct result of our battery life test doing its job. The tasks should take roughly the same amount of time to complete on both devices, which makes this a good battery life test: a faster SoC isn't penalized by being handed more work.
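To make the "fixed work per interval" idea concrete, here is a minimal sketch assuming a simple two-state (active/idle) power model. The `SoC` class, the `interval_energy` function and every number below are hypothetical illustrations, not measurements from the W510 or Surface RT. Because each interval carries the same amount of work, a faster chip simply finishes sooner and spends the rest of the interval idling.

```python
from dataclasses import dataclass


@dataclass
class SoC:
    """Hypothetical SoC power model; all numbers are illustrative, not measured."""
    name: str
    ops_per_second: float  # throughput while active
    active_watts: float    # power while executing the burst
    idle_watts: float      # power while waiting for the next burst


def interval_energy(soc: SoC, work_ops: float, interval_s: float) -> float:
    """Energy in joules for one test interval that carries a fixed amount of work.

    The SoC runs until the work is done, then idles for the rest of the interval.
    A faster SoC finishes sooner but is never handed extra work.
    """
    busy_s = work_ops / soc.ops_per_second
    if busy_s > interval_s:
        raise ValueError(f"{soc.name} cannot finish the work within the interval")
    idle_s = interval_s - busy_s
    return soc.active_watts * busy_s + soc.idle_watts * idle_s


# Hypothetical chips: the fast one draws more power, but only for half as long.
fast = SoC("fast", ops_per_second=2e9, active_watts=2.0, idle_watts=0.05)
slow = SoC("slow", ops_per_second=1e9, active_watts=1.5, idle_watts=0.05)
for soc in (fast, slow):
    print(soc.name, round(interval_energy(soc, work_ops=1e9, interval_s=10.0), 3))
```

With these made-up numbers the faster chip uses less energy per interval despite its higher peak power, which is the kind of outcome a fixed-workload test is able to reveal.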

Note that the W510's curve ends up lagging behind Surface RT's curve a bit by the end of the chart. This is purely because of the W510's garbage WiFi implementation. I understand that a fix from Acer is on the way, but it's neat to see something as simple as poorly implemented WiFi showing up in these power consumption graphs.

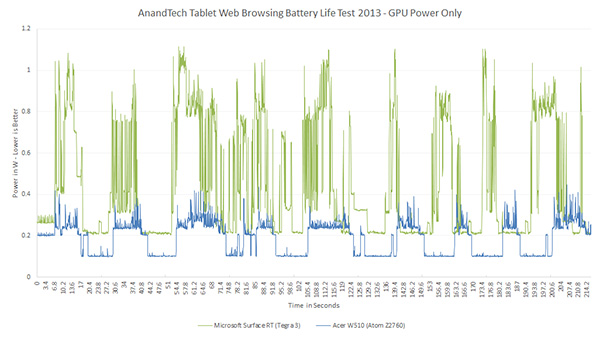

I always think about GPU power consumption while playing a game, but going through this experiment gave me a newfound appreciation for non-gaming GPU power efficiency. Simply changing what's displayed on screen does burn an appreciable amount of power.

163 Comments


snoozemode - Monday, December 24, 2012

The difference this time, compared to the competitors Intel has faced in its history, is huge. It's not just about specs anymore, whether performance or performance per watt; it's a lot more about economics and margins. Anyone with an ARM license can build an SoC, and a lot of new players are entering the game, especially from China. And with players like Samsung producing their own SoCs for their own devices, soon LG and possibly Apple as well, Intel is just not going to get to dictate the terms and dominate the industry this time.

KitsuneKnight - Monday, December 24, 2012

You mean it's much closer to Intel's early days, when it faced dozens of competing companies, instead of just a couple of tiny ones.

snoozemode - Tuesday, December 25, 2012

Still very different, because of ARM's business model, which is nothing like the past with Intel, AMD, Cyrix, IBM etc. Intel definitely didn't become the giant it is because of products with good value. They are what they are because they continuously raised the bar above everyone else from a performance standpoint, at a time when performance was everything and energy efficiency counted for close to nothing. And when they have failed to compete on performance (e.g. against AMD around 2000), they have resorted to some serious foul play.

ay@m - Thursday, December 27, 2012

Well, what if Intel is now treating energy efficiency as the new performance benchmark? That's exactly what this article is pointing out: Intel's Atom chip is starting to focus on energy efficiency and not on performance anymore...right?

So I think Intel now understands what it takes to enter the mobile market, and what the end user will perceive as the new performance benchmark.

Kidster3001 - Friday, January 4, 2013

You make Intel's point! Intel will continue to have the performance advantage. They are now going after the power side of the equation. When they are best at both (or at least significantly better at one and equal at the other), what do you think the result will be?

Kevin G - Tuesday, December 25, 2012

The problem for Intel is that they'll design the SoC that they want to manufacture, not necessarily what the OEM wants. Intel could overspec an SoC to cover a broader market, but at the cost of increased die size and power consumption. Intel's process advantage mitigates those factors, but they'll still be at a disadvantage compared to another SoC designer that builds a product with only the functional blocks that are strictly necessary. For Intel to become a major mobile player, they'll have to start listening to OEMs and designing chips specifically for them.

yyrkoon - Tuesday, December 25, 2012

Intel already is a major player in the mobile market, and has been for far longer than anyone producing ARM-based processors. Granted, according to an article I read a few months back, tablet/smartphone sales are supposedly eclipsing the sales of laptops and desktops combined. Whether true or not, I can see it being possible soon, if not already.

p3ngwin1 - Tuesday, December 25, 2012

Yep, it's also about PRICE. If you've seen the prices of cheap Android tablets and devices, you know Intel will either have to convince us to pay a premium for the extra battery life and performance, or lower their prices to compete aggressively.

You can buy decent SoCs from Allwinner, Rockchip, Nvidia, Qualcomm, etc. that deliver "good enough" specs at a decent price, while Intel charges a premium for its processors.

Those Chinese SoCs like Rockchip and Allwinner are ridiculously cheap: you can get Android 4.1 tablets with 1.6 GHz dual-core ARM Cortex-A9 processors, Mali-400 GPUs and 7" 1200x720 screens for less than $150.

Intel charges way more for their processors compared to the ARM equivalents.

name99 - Tuesday, December 25, 2012

The price issue is a good point. A second point is to ask WHY Intel does better in this comparison. I'd love to see a more detailed exploration of this, but maybe the tools don't exist. However, here is what we do know:

(a) SMT on Atom is substantially more effective than on Nehalem/SB/IB. Whereas on the desktop processors it gets you maybe a 25% boost (on IB; worse on older models), on Atom it gets you about 50%. (This isn't surprising, because Atom's in-order design means there are that many more execution slots going vacant.)

(b) SMT on Atom (and IB and SB, not on Nehalem) is extremely power efficient.
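Why SMT pays off more on an in-order core can be seen in a toy simulation. This is a minimal sketch under heavy simplifying assumptions: a single-issue in-order pipeline (real Atom is dual-issue), and a made-up workload in which every fourth instruction misses and stalls its thread for four cycles. The `simulate` function and the workload are hypothetical; the point is only that a second hardware thread can issue into cycles the first thread would leave empty, not to reproduce the 25% or 50% figures above.

```python
from collections import deque


def simulate(threads: list[list[int]]) -> int:
    """Return the cycles needed to issue every instruction in `threads`.

    Toy model: single-issue, in-order core. Each list entry is the number of
    extra cycles that instruction leaves its own thread stalled (e.g. a cache
    miss). Each cycle the core issues one instruction from the next ready
    thread in round-robin order, so a stalled thread's slot goes to another.
    """
    queues = [deque(t) for t in threads]
    ready_at = [0] * len(threads)  # cycle at which each thread may issue again
    cycle = 0
    next_thread = 0
    while any(queues):
        for offset in range(len(queues)):
            tid = (next_thread + offset) % len(queues)
            if queues[tid] and ready_at[tid] <= cycle:
                stall = queues[tid].popleft()
                ready_at[tid] = cycle + 1 + stall
                next_thread = (tid + 1) % len(queues)
                break
        cycle += 1  # an issue slot is consumed whether or not anything issued
    return cycle


# Hypothetical workload: every 4th instruction stalls its thread for 4 cycles.
thread = [0, 0, 0, 4] * 100

one_at_a_time = 2 * simulate([thread])  # run the two threads back to back
smt = simulate([thread, thread])        # interleave both threads on one core
print(f"SMT speedup: {one_at_a_time / smt:.2f}x")
```

On an out-of-order core, the hardware already fills many of those empty slots from the same thread, so a second thread finds fewer of them left over, which is consistent with the smaller gain the comment cites for the desktop parts.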

So one way to run an Atom and get better energy efficiency than CURRENT ARM devices is to be careful to use only one core, dual-threaded, for as long as you can (which is probably almost all the time: there is very little code that makes use of four cores, or even of two cores both at max speed).
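The trade-off described here can be put into rough arithmetic. The sketch below compares finishing two threads' worth of work on two cores against running both threads on one SMT core while the second core sleeps. The function names and every parameter value (a 50% SMT throughput gain, a 10% SMT power overhead, a small sleep power) are hypothetical assumptions for illustration, not measured Atom or ARM figures.

```python
def two_cores(work: float, rate: float, p_active: float) -> tuple[float, float]:
    """Two cores each run one thread's `work` at single-thread `rate`.

    Returns (energy, time) in whatever consistent units the inputs use.
    """
    seconds = work / rate
    return 2 * p_active * seconds, seconds


def one_core_smt(work: float, rate: float, p_active: float, smt_gain: float,
                 smt_power_overhead: float, p_sleep: float) -> tuple[float, float]:
    """Both threads share one SMT core while the second core sleeps at `p_sleep`.

    smt_gain: combined throughput boost from SMT (0.5 means +50%).
    smt_power_overhead: extra core power with both threads active (0.1 means +10%).
    Returns (energy, time). Only the energy to finish the work is counted;
    uncore power and any idle time afterwards are ignored.
    """
    seconds = 2 * work / (rate * (1 + smt_gain))
    watts = p_active * (1 + smt_power_overhead) + p_sleep
    return watts * seconds, seconds


# Hypothetical parameters, chosen only to illustrate the shape of the trade-off.
e2, t2 = two_cores(work=1.0, rate=1.0, p_active=1.0)
e1, t1 = one_core_smt(work=1.0, rate=1.0, p_active=1.0,
                      smt_gain=0.5, smt_power_overhead=0.1, p_sleep=0.02)
print(f"two cores: energy={e2:.3f}, time={t2:.3f}")
print(f"one SMT core: energy={e1:.3f}, time={t1:.3f}")
```

Under these assumptions the single SMT core uses less energy but takes longer, which is why the strategy only holds "for as long as you can": once the work is latency-critical, waking the second core wins.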

I bring this up because this sort of Intel advantage is easily copied. (I'm actually surprised it hasn't already been done --- in my eyes it's a toss-up whether Apple's A7 will be a 64-bit Swift or a Swift with SMT. There are valid arguments either way --- it's in Apple's interests to get to 64-bit and a clean switchover as fast as possible, but the power advantages in SMT are substantial, since you can keep your second core asleep so much more often.)

Once you accept the value of companion cores, it becomes even more interesting. One could imagine (especially for Apple, with so much control over the OS and CPU) a three-way graduated SoC, with dual-core OoO high-performance cores (to give a snappiness to the UI), a single in-order SMT core (for work most of the time), and a low-end in-order core (for those long periods of idle punctuated by waking up to examine the world before going back to sleep). The area costs would be low (because each core could be a quarter or less of the area of its larger sibling); the real pain would be in writing and refining the OS smarts to use such a device well. But the payoff would be immense...
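The "OS smarts" such a three-way SoC would need start with a policy for which tier should run the current load. The following is a minimal, hypothetical sketch of such a heuristic: the `Tier` names, the `pick_tier` function and both utilization thresholds are invented for illustration and do not correspond to any real scheduler.

```python
from enum import Enum


class Tier(Enum):
    BIG_OOO = "dual-core out-of-order"
    SMT_IN_ORDER = "single in-order SMT core"
    TINY_IN_ORDER = "low-end in-order core"


# Hypothetical thresholds, chosen only for illustration.
IDLE_UTILIZATION = 0.10  # below this, treat the system as essentially idle
BUSY_UTILIZATION = 0.90  # above this, the SMT core is saturated
SMT_MAX_THREADS = 2      # hardware threads on the SMT core


def pick_tier(runnable_threads: int, utilization: float, interactive: bool) -> Tier:
    """Choose which tier of the hypothetical three-way SoC should run the load.

    runnable_threads: threads ready to run right now.
    utilization: recent busy fraction of the currently active core (0.0 to 1.0).
    interactive: True while the user is touching the UI.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    # UI snappiness, more threads than the SMT core holds, or a saturated SMT core.
    if (interactive or runnable_threads > SMT_MAX_THREADS
            or utilization > BUSY_UTILIZATION):
        return Tier.BIG_OOO
    # Mostly asleep: wake up, check the world, go back to sleep.
    if runnable_threads <= 1 and utilization < IDLE_UTILIZATION:
        return Tier.TINY_IN_ORDER
    # The everyday case.
    return Tier.SMT_IN_ORDER


for load in [(0, 0.02, False), (2, 0.50, False), (1, 0.30, True), (4, 0.60, False)]:
    print(load, "->", pick_tier(*load).value)
```

A real implementation would also need hysteresis so the load doesn't bounce between tiers, plus the cost of migrating state between cores, which is where the comment expects the real pain to be.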

Point is --- I wouldn't write off ARM yet. Intel has process advantages, and some µArch advantages. But the µArch advantages can be copied; and is the process advantage enough to offset the extra cost (in dollars) that it imposes?

Kidster3001 - Friday, January 4, 2013

big.LITTLE is a waste of time. It is a stop-gap ARM manufacturers are using to try to keep power down. As they increase performance, they are not able to keep power within the envelopes they thought they could. It is far more efficient to have cores that can fill all the roles you suggest by dynamically changing how they operate. This is where Intel will succeed; just look at what Haswell can do.
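The comment doesn't say which mechanism it has in mind. Dynamic voltage and frequency scaling (DVFS) is one well-known way a single core changes how it operates, and the standard CMOS switching-power relation P = activity × C × V² × f shows why it helps: dropping frequency also allows a lower voltage, and energy per cycle falls with V². The sketch below is illustrative only; the operating points and capacitance are hypothetical, and leakage (static) power is ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    """One voltage/frequency pair a core can switch to at runtime."""
    volts: float
    hertz: float


def dynamic_power(op: OperatingPoint, capacitance: float, activity: float = 1.0) -> float:
    """CMOS switching power in watts: activity * C * V^2 * f (leakage ignored)."""
    return activity * capacitance * op.volts ** 2 * op.hertz


def energy_per_cycle(op: OperatingPoint, capacitance: float, activity: float = 1.0) -> float:
    """Joules per clock cycle; frequency cancels, leaving activity * C * V^2."""
    return dynamic_power(op, capacitance, activity) / op.hertz


# Hypothetical operating points and switched capacitance, for illustration only.
FAST = OperatingPoint(volts=1.1, hertz=2.0e9)
SLOW = OperatingPoint(volts=0.8, hertz=1.0e9)
C = 1e-9  # farads

for name, op in (("fast", FAST), ("slow", SLOW)):
    print(name, f"{dynamic_power(op, C):.2f} W",
          f"{energy_per_cycle(op, C) * 1e9:.2f} nJ/cycle")
```

Whether scaling one core across a wide voltage range beats switching between differently designed cores depends on how much leakage and per-core design overhead the real silicon has, which this model leaves out.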