The x86 Power Myth Busted: In-Depth Clover Trail Power Analysis

by Anand Lal Shimpi on December 24, 2012 5:00 PM EST

The untold story of Intel's desktop (and notebook) CPU dominance after 2006 has nothing to do with novel approaches to chip design or with spending billions to keep its army of fabs up to date. While both of those are critical components of the formula, it's Intel's internal performance modeling team that plays a major role in providing targets for both the architects and the fab engineers to hit. After losing face (and sales) to AMD's Athlon 64 in the early 2000s, Intel adopted a "no more surprises" policy: it would never again be caught off guard by a performance upset.

Over the past few years, however, the focus of meaningful performance has shifted. Power consumption is now just as important as absolute performance. Intel has been slowly waking up to this shift as it adapts to the new ultra mobile world. One of the first things to change was the scope and focus of its internal performance modeling. User experience (quantified by using high speed cameras to map frame rates to user survey data) and power efficiency are now incorporated into all architecture targets going forward. Building its next-generation CPU cores no longer means picking a SPECCPU performance target and working toward it; it also means delivering a certain user experience.

Intel's role in the industry has started to change. It worked very closely with Acer on bringing the W510, W700 and S7 to market. With Haswell, Intel will work even more closely with its partners, going as far as specifying other, non-Intel components on the motherboard in pursuit of ultimate battery life. The pieces are beginning to fall into place, and if all goes according to Intel's plan we should start to see the fruits of its labor next year. The goal is to bring Core down to very low power levels, and to take Atom even lower. Don't underestimate the significance of Intel's 10W Ivy Bridge announcement. Although desktop and mobile Haswell will appear in mid to late Q2 2013, the exciting ultra mobile parts won't arrive until Q3. Intel's 10W Ivy Bridge will be responsible for bringing at least some more exciting form factors to market between now and then. While we're not exactly at Core-in-an-iPad level of integration, we are getting very close.

To kick off what is bound to be an exciting year, Intel made a couple of stops around the country to show that even its existing architectures are quite power efficient. Intel carried around a pair of Windows tablets, wired up to measure power consumption at both the device and component level, to demonstrate what many of you will find obvious at this point: that Intel's 32nm Clover Trail is more power efficient than NVIDIA's Tegra 3.

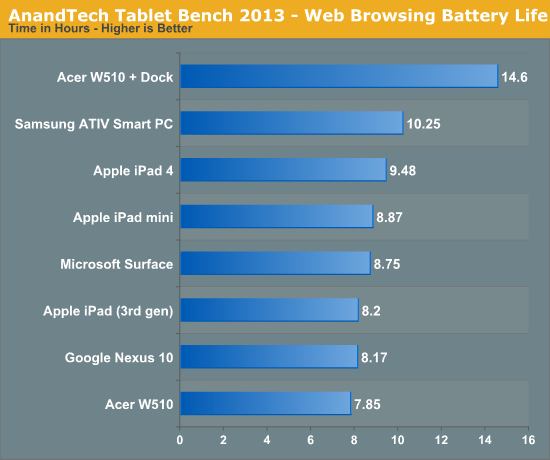

We've demonstrated this in our battery life tests already. Samsung's ATIV Smart PC uses an Atom Z2760 and features a 30Wh battery with an 11.6-inch 1366x768 display. Microsoft's Surface RT uses NVIDIA's Tegra 3 and a 31Wh battery with a 10.6-inch 1366x768 display. In our 2013 wireless web browsing battery life test, the Samsung showed a 17% battery life advantage despite its 3% smaller battery. Our video playback battery life test showed a smaller advantage of 3%.
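The battery-size caveat can be made explicit with a little arithmetic. If runtime is roughly battery energy divided by average platform power, a longer runtime from a smaller battery implies a proportionally lower average power draw. The sketch below assumes exactly that simple model (it ignores battery aging and discharge-curve effects), and the function name is illustrative only.

```python
def relative_average_power(energy_a_wh, energy_b_wh, runtime_ratio_a_over_b):
    """Return P_a / P_b, assuming runtime = battery energy / average power.

    runtime_ratio_a_over_b is T_a / T_b (e.g. 1.17 for a 17% longer runtime).
    """
    energy_ratio = energy_a_wh / energy_b_wh
    # T = E / P  =>  P_a / P_b = (E_a / E_b) / (T_a / T_b)
    return energy_ratio / runtime_ratio_a_over_b


# Samsung ATIV Smart PC (30 Wh) vs. Microsoft Surface RT (31 Wh)
web = relative_average_power(30.0, 31.0, 1.17)    # wireless web browsing: +17%
video = relative_average_power(30.0, 31.0, 1.03)  # video playback: +3%
print(f"web browsing: {1 - web:.1%} lower average platform power")
print(f"video playback: {1 - video:.1%} lower average platform power")
```

Under this model, the 17% browsing advantage on a 30Wh vs. 31Wh battery works out to roughly 17% lower average platform power, and the 3% video advantage to roughly 6%.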

For us, the power advantage made a lot of sense. We've already proven that Intel's Atom core is faster than ARM's Cortex A9 (even four of them under Windows RT). Combine that with the fact that NVIDIA's Tegra 3 features four Cortex A9s on TSMC's 40nm G process and you get a recipe for worse battery life, all else being equal.

Intel's method of hammering this point home isn't all that unique in the industry. Rather than measuring power consumption at the application level, Intel chose to do so at the component level. This is commonly done by taking the device apart and either replacing the battery with an external power supply that you can measure, or measuring the current delivered by the battery itself: clip the voltage input leads coming from the battery to the PCB, put a resistor inline and measure the voltage drop across it. Ohm's law (good ol' I = V/R) gives you the current, and multiplying that by the supply voltage gives you power.
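The arithmetic behind the battery-lead measurement is short: Ohm's law turns the voltage drop across the inline resistor into current, and current times supply voltage gives power. A minimal sketch follows; the 10 mΩ shunt and the readings are hypothetical values chosen for illustration, not Intel's numbers.

```python
def shunt_power(v_drop, r_shunt, v_supply):
    """Power delivered through an inline (shunt) resistor.

    v_drop   -- voltage measured across the shunt, in volts
    r_shunt  -- shunt resistance, in ohms
    v_supply -- voltage on the load side of the shunt, in volts
    """
    current = v_drop / r_shunt      # Ohm's law: I = V / R
    return v_supply * current       # P = V * I


# Hypothetical reading: 3.0 mV across a 10 mOhm shunt on a 3.7 V battery line
p = shunt_power(v_drop=0.003, r_shunt=0.010, v_supply=3.7)
print(f"{p:.2f} W")
```

Here 3 mV across 10 mΩ means 0.3 A, which at 3.7 V is about 1.1 W.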

Where Intel's power modeling gets a little more aggressive is what happens next. Measuring power at the battery gives you an idea of total platform power consumption including display, SoC, memory, network stack and everything else on the motherboard. This approach is useful for understanding how long a device will last on a single charge, but if you're a component vendor you typically care a little more about the specific power consumption of your competitors' components.

What follows is a good mixture of art and science. Intel's power engineers will take apart a competing device and probe whatever looks to be a power delivery or filtering circuit while running various workloads on the device itself. By correlating the type of workload to spikes in voltage in these circuits, you can figure out what components on a smartphone or tablet motherboard are likely responsible for delivering power to individual blocks of an SoC. Despite the high level of integration in modern mobile SoCs, the major players on the chip (e.g. CPU and GPU) tend to operate on their own independent voltage planes.
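The rail-identification step is essentially a correlation exercise: switch a workload on and off, record each candidate circuit, and see which one tracks the workload. The sketch below is our own illustration of that idea, not Intel's actual procedure. The rail names and readings are made up, and it uses a plain Pearson correlation between a 0/1 workload indicator and each rail's samples.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# 1 while a CPU-heavy workload is running, 0 while the device idles
cpu_load = [0, 0, 1, 1, 1, 0, 0, 1, 1, 0]

# Hypothetical readings from three unidentified rails (arbitrary units)
rails = {
    "rail_A": [0.20, 0.21, 0.95, 0.90, 0.97, 0.22, 0.19, 0.93, 0.96, 0.20],
    "rail_B": [0.50, 0.52, 0.51, 0.49, 0.50, 0.51, 0.50, 0.52, 0.49, 0.50],
    "rail_C": [0.10, 0.30, 0.12, 0.28, 0.11, 0.29, 0.10, 0.31, 0.12, 0.30],
}

# Score every rail against the workload and pick the best match
scores = {name: pearson(cpu_load, samples) for name, samples in rails.items()}
best = max(scores, key=scores.get)
print(best, round(scores[best], 3))
```

A high correlation only says a rail responds to the workload; it doesn't prove the rail feeds that block exclusively, since several blocks can share one rail.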

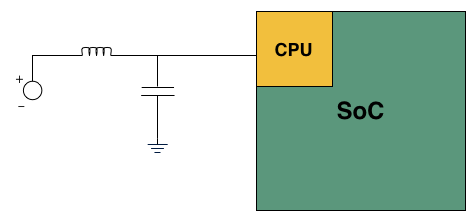

A basic LC filter

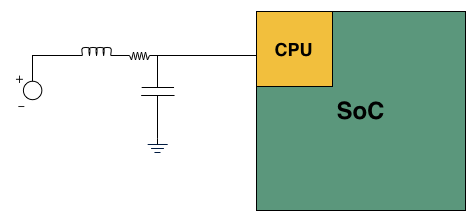

Typically you'll find a standard LC filter (inductor + capacitor) supplying power to a block on the SoC. Once the right LC filter has been identified, all you need to do is lift the inductor, insert a very small resistor (2 - 20 mΩ) inline and measure the voltage drop across the resistor. With voltage and resistance known, you can determine current and power. With good external instruments you can then plot power over time and get a good idea of the power consumption of individual IP blocks within an SoC.
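Once a rail has a shunt in it, the data acquisition box simply records the voltage drop over time, and turning that trace into peak power, energy and average power is a few lines of arithmetic. This sketch assumes a fixed 1 kHz sample rate, a constant 1.0 V rail and a 10 mΩ shunt (all hypothetical values), and uses trapezoidal integration for energy. A real setup would usually sample the rail voltage too rather than assume it.

```python
def rail_power_trace(v_drops, r_shunt, v_rail):
    """Convert sampled shunt voltage drops (V) into instantaneous power (W)."""
    return [v_rail * (v / r_shunt) for v in v_drops]


def energy_joules(power_w, sample_rate_hz):
    """Integrate evenly spaced power samples with the trapezoidal rule."""
    dt = 1.0 / sample_rate_hz
    return sum((a + b) * dt / 2 for a, b in zip(power_w, power_w[1:]))


# Hypothetical 1 kHz capture of a CPU rail: idle, a short burst, then idle again
samples = [0.0005] * 100 + [0.0040] * 200 + [0.0005] * 100   # volts across shunt
power = rail_power_trace(samples, r_shunt=0.010, v_rail=1.0)

duration_s = (len(power) - 1) / 1000
avg_w = energy_joules(power, 1000) / duration_s
print(f"peak {max(power):.2f} W, average {avg_w:.3f} W over {duration_s:.3f} s")
```

The tiny resistance is the point of the design: at the 0.4 A burst in this example, a 10 mΩ shunt drops only 4 mV and dissipates 1.6 mW, so it barely disturbs the rail it is measuring.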

Basic LC filter modified with an inline resistor

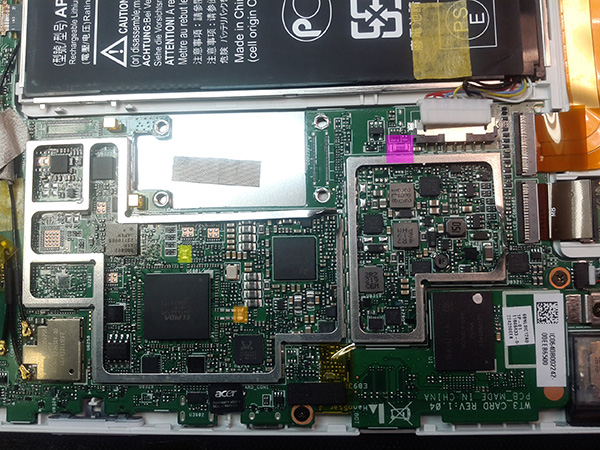

Intel brought one of its best power engineers, a couple of tablets and a National Instruments USB-6289 data acquisition box to demonstrate its findings. The tablets were Microsoft's Surface RT using NVIDIA's Tegra 3, and Acer's W510 using Intel's own Atom Z2760 (Clover Trail). Both were retail samples running the latest software/drivers available as of 12/21/12. The Acer unit in particular had the latest driver update from Acer (version 1.01, released on 12/18/12), which improves battery life on the tablet. Remember me pointing out that the W510 seemed to have a problem that caused it to underperform in battery life compared to Samsung's ATIV Smart PC? This driver update seems to fix that problem.

I personally calibrated both displays to our usual 200 nits setting and ensured the software and configurations were as close to equal as possible. Both tablets were purchased by Intel, but I verified their performance against my own review samples and noticed no meaningful deviation. I've also attached diagrams of where Intel is measuring CPU and GPU power on the two tablets:

Microsoft Surface RT: The yellow block is where Intel measures GPU power, the orange block is where it measures CPU power

Acer's W510: The purple block is a resistor from Intel's reference design used for measuring power at the battery. Yellow and orange are inductors for GPU and CPU power delivery, respectively.

The complete setup is surprisingly mobile, even relying on a notebook to run SignalExpress for recording output from the NI data acquisition box:

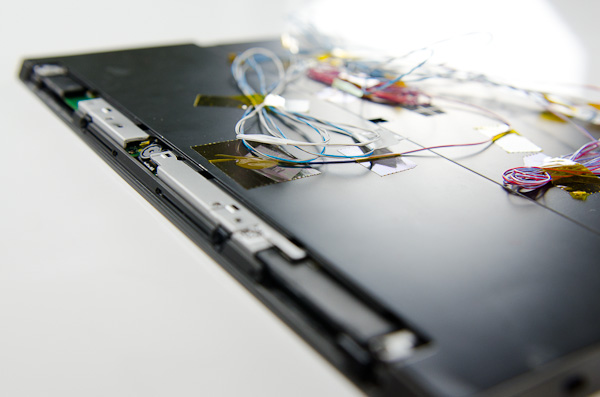

Wiring up the tablets is a bit of a mess. Intel wired up far more than just the CPU and GPU; depending on the device and what was easily exposed, you could also get power readings for the memory subsystem and things like NAND.

Intel only supplied the test setup; for everything you're about to see, I picked and ran whatever I wanted, however I wanted. Comparing Clover Trail to Tegra 3 is nothing new, but the data I gathered is at least interesting to look at. We typically don't get to break out CPU and GPU power consumption in our tests, making this experiment a bit more illuminating.

Keep in mind that we are looking at power delivery on voltage rails that spike with CPU or GPU activity. It's not uncommon to run multiple things off of the same voltage rail. In particular, I'm not super confident in what's going on with Tegra 3's GPU rail, although the CPU rails are likely fairly comparable. One last note: unlike under Android, NVIDIA doesn't use its 5th (companion) core under Windows RT. Microsoft still doesn't support heterogeneous computing environments, so NVIDIA had to disable the companion core under Windows RT.

163 Comments


dangerjaison - Tuesday, December 25, 2012

The only reason why arm could make this progress is bcoz of android and ios. They are built mainly to run on arm architecture. There are lots of issues, mainly with hardware acceleration, on intel's architecture coz the developers build their games n apps to perform well on arm. The recently launched Intel device with atom running android had good backup and performance but couldn't succeed bcoz of compatibility. Intel still can make a big comeback to take over arm in the mobile market.

SilentLennie - Tuesday, December 25, 2012

Euh... actually, everyone knew the NVidia product sucked on the power efficiency front. There is still a lot of work to do for Intel.

Blaster1618 - Tuesday, December 25, 2012

One would think that Nvidia would have spent a couple of dollars to work on their GPU efficiency. lol

ULP GeForce at 520 MHz on a 40 nm process easily beat a PowerVR SGX545 (65 nm).

Even when Nvidia moves to 28 nm technology next year, it will move from a pig to a Pig-lite.

Another thought: it is so Microsoft to make an ARM-specific OS that does not support the 5th core on the Tegra 3.

CeriseCogburn - Friday, January 25, 2013

Tegra4 is looking mighty fine, so whatever. Tegra3 was great when it came to gaming - it kept making Apple's best look just equal.

Microsoft may actually be the bloated pig syndrome company. I find it likely that the LP 5th tegra core wasn't enough to keep the fat msft pig OS running.

Of course it could just be their anti-competitive practice in full swing.

GillyBillyDilly - Tuesday, December 25, 2012

But when I watch the power eaters on a Nexus 7, up to 90 percent is used up by the display alone, which makes CPU power efficiency seem somewhat irrelevant. Isn't it time to talk about display efficiency?

CeriseCogburn - Friday, January 25, 2013

Yep. Good point. No, great point, although it looks to be more like 40% for display power use on the new large screen mobile phones and phablets.

shadi_h - Tuesday, December 25, 2012

I really believe Microsoft missed a great opportunity to go forward with an Intel-only CPU strategy (they already have the best development kits for x86). An Intel powered cellphone is what I really want! Maybe the RT version should have been Clover Trail w/ 32-bit and Pro w/ 64-bit. Their decision makes me believe they put too much emphasis on getting easy app conversions from the iOS/Android communities rather than on creating the best hardware.

jeffkibuule - Tuesday, December 25, 2012

It's not such a great idea to hitch all of your hopes on Intel, they seem to only do their best work when they have a strong competitor.

shadi_h - Tuesday, December 25, 2012

True, but that's no one's fault but AMD's. It seems they have no clue how to even enter this space. That's puzzling, since it can be argued they could potentially have the best overall SoC tech (I thought that was the whole reason they bought ATI in the first place).

Powerlurker - Wednesday, January 2, 2013

AMD dumped their mobile lineup in 2008 and sold it to Qualcomm (now known as Adreno) and sold their STB lineup (Xilleon) to Broadcom. Anything they could have used is gone at this point.