The Xeon Phi at work at TACC

by Johan De Gelas on November 14, 2012 1:44 PM EST - Posted in

- Cloud Computing

- CPUs

- IT Computing

- Intel

- Larrabee

- Xeon

- Xeon Phi

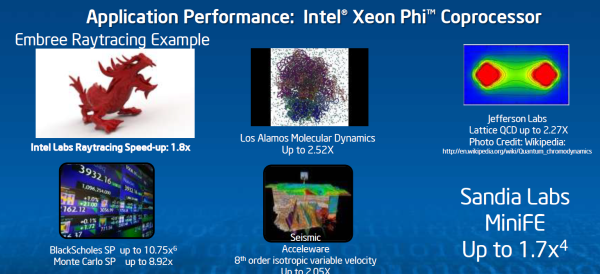

One of the big selling points of the Xeon Phi is that you can simply run multi-threaded Xeon code on it. If you want decent performance out of the Xeon Phi, however, that code should be compiled with the Intel C or Fortran compiler and linked against the Intel MKL math libraries. In that case, Intel claims that many "typical applications" run about 2 to 2.5 times faster with the Xeon Phi. A few exceptions gain more.

That is an impressive performance boost, but not an earth-shattering one. These numbers are much more realistic than the typical 100x benchmarks thrown around by the GPU folks. Those benchmarks typically compare single-threaded, non-SIMD binaries running on a CPU to a fully threaded, carefully tuned application running on a GPU.
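Much of the gap between the two kinds of claims is simple arithmetic. As a rough, idealized sketch (assuming perfect scaling, equal clocks and no memory bottlenecks, none of which hold in practice), a baseline that uses one core and no SIMD leaves a factor of cores × SIMD lanes on the table. The 8-core, 8-lane figures below are illustrative assumptions for an AVX-capable Xeon E5 running single-precision code, not measurements, and the function name is hypothetical.

```python
def tuned_cpu_equivalent(claimed_speedup: float, cores: int, simd_lanes: int) -> float:
    """Re-express a speedup measured against a single-threaded, scalar CPU
    baseline as a speedup against an idealized multi-threaded, vectorized
    version of the same CPU code.

    Idealized assumption: the tuned CPU version scales perfectly with both
    core count and SIMD width.
    """
    ideal_cpu_gain = cores * simd_lanes  # best case from threading + SIMD
    return claimed_speedup / ideal_cpu_gain


# Illustrative assumptions: a "100x" GPU claim versus an 8-core CPU with
# 256-bit AVX, i.e. 8 single-precision lanes per core.
print(tuned_cpu_equivalent(100.0, cores=8, simd_lanes=8))  # 1.5625
```

Under these assumptions a "100x" headline shrinks to roughly 1.6x, the same ballpark as the 2 to 2.5x Intel quotes. Real applications rarely scale perfectly, so the true gap can land on either side of this estimate.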

The question remains in which applications a cheaper quad-CPU solution is more effective. Before the Xeon E5 (Sandy Bridge EP) came out, AMD was quite successful in the HPC world with its less expensive quad-CPU platforms. It will be interesting to compare the performance per dollar and performance per watt of such quad-CPU platforms with a CPU + Phi solution. There are certainly applications where CPU + Phi wins hands down, but we are willing to bet that there are lots of HPC applications where it is a close call (e.g. highly threaded code that is harder to vectorize).

The point, of course, is that the time investment needed to get there is a lot lower than with CUDA on NVIDIA's Tesla K20. We have heard from several companies that debugging CUDA code is still a pretty daunting experience. One good example can be found here. The maturity of Intel's compilers and high performance software is a big plus for the Xeon Phi. The numerous papers and OpenMP-to-CUDA frameworks and translators clearly indicate that porting OpenMP applications to CUDA is not necessarily straightforward. That is in contrast with the Xeon Phi, where existing OpenMP applications run faster with little more than a recompile. OpenMP is simply the ecosystem where the Xeon Phi thrives, and Intel has an excellent track record when it comes to supporting OpenMP in its compilers.

The Xeon Phi might also prove to be a bit more flexible and forgiving. At a high level, the Xeon Phi architecture still resembles a general purpose Xeon core. We're talking about 60 in-order x86 cores with wider (512-bit) SIMD units, each core running 4 threads and fed by its own 512KB L2 cache.
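Those figures translate directly into thread counts, SIMD lanes and a theoretical peak. The sketch below takes the 60 cores, 4 threads per core and 512-bit vectors from the text; the clock speed of about 1.05 GHz and the assumption of one fused multiply-add (counted as two floating point operations) per lane per cycle are our own assumptions, not figures from this article.

```python
SIMD_BITS = 512          # width of a Xeon Phi vector register
CORES = 60               # in-order x86 cores
THREADS_PER_CORE = 4     # hardware threads per core
CLOCK_GHZ = 1.053        # assumed clock speed, not taken from the article


def lanes(element_bits: int) -> int:
    """Number of elements of the given width that fit in one vector register."""
    return SIMD_BITS // element_bits


def peak_gflops(element_bits: int, flops_per_lane_per_cycle: int = 2) -> float:
    """Theoretical peak; the default assumes one FMA (2 flops) per lane per cycle."""
    return CORES * CLOCK_GHZ * lanes(element_bits) * flops_per_lane_per_cycle


hardware_threads = CORES * THREADS_PER_CORE  # logical processors seen by the OS
print(hardware_threads, lanes(64), lanes(32))  # 240 8 16
print(round(peak_gflops(64)))                  # double precision, about 1011
```

The same arithmetic shows why vectorization matters so much on this chip: code that keeps only one scalar lane busy per core leaves 7 of the 8 double-precision lanes (or 15 of the 16 single-precision lanes) idle.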

GPUs, on the other hand, are built for more "extreme" parallelism: hundreds of stream processors with small shared L1 caches and one relatively small L2 cache.

We'll have to hold final judgement until we get a Xeon Phi-equipped system in house, but our first impression is that the Xeon Phi looks like a more cost-effective, and potentially easier to use, alternative to high-end GPUs for HPC.

46 Comments


SodaAnt - Wednesday, November 14, 2012

It does support the x86 instruction set though, so it shouldn't be too hard to port.

MrSpadge - Wednesday, November 14, 2012

But you have to use the custom vector format to stampede anything.

Kevin G - Saturday, November 17, 2012

In theory it should run the current Linux version of F@H without modification. The catch is that the current version would be horribly suboptimal, as it doesn't natively support the 512-bit wide vector format used by the Xeon Phi. This would leave only the x87 FPU for calculations. That would allow the 60 scalar FPUs to be used, but limit performance to a mere 60 GFLOPS across all the cores. There may also be some weird scheduling oddities with Linux and/or F@H due to the chip's ability to expose 240 logical processors to the host OS (the result would be better performance from running multiple instances in parallel instead of one large instance using 240 threads).

An OpenCL version of F@H might be coaxed into working, and that would utilize the 512-bit vector units. Intel would need to have OpenCL drivers available for this to even have a chance of working. This would allow the full ~1 TFLOPS of performance to be utilized.

SydneyBlue120d - Wednesday, November 14, 2012

Why did Intel choose a custom SIMD format? Why not AVX?

Jaybus - Thursday, November 15, 2012

Because they needed heavier-duty vector units. Each Phi core has 32 512-bit registers, whereas Core i7 has 16 256-bit registers. They just didn't implement backward compatibility, probably to reduce complexity. It is certainly possible to do, and we may indeed see AVX, SSE, etc. added in a future revision.

Kevin G - Saturday, November 17, 2012

The 512-bit vector instructions change how exceptions and register masking are handled in comparison to AVX. Outside of that, the vector instructions are formatted similarly to AVX instructions, and the output complies with IEEE floating point standards. So while there is a distinct break in ISA capabilities, it does appear possible to bridge the two in future designs. Still, it is odd that Intel has forked its ISA.

coder543 - Wednesday, November 14, 2012

I just want to know how much it will cost. Why is Intel keeping this such a ridiculous secret? Knowing Intel, these will easily be $2,000+ apiece, if not much higher, but I still want to *know.*

LogOver - Wednesday, November 14, 2012

Did you read the article at all? Check the second page again.

Comdrpopnfresh - Wednesday, November 14, 2012

How could PCIe 3.0 result in more overhead?

nutgirdle - Wednesday, November 14, 2012

I concur. A major disadvantage of co-processor computing is the time it takes to move data on and off the card. The PCIe 2.0 bus is already a bottleneck in our workflow involving a Tesla card. This was a very short-sighted omission.