The Xeon Phi at work at TACC

by Johan De Gelas on November 14, 2012 1:44 PM EST- Posted in

- Cloud Computing

- CPUs

- IT Computing

- Intel

- Larrabee

- Xeon

- Xeon Phi

The Xeon Phi card comes on a PCIe card, much like a GPU. Given the architecture's origins as a GPU, the form factor should't come as a surprise. Like modern HPC GPUs however, the Xeon Phi card has no display output - its role is strictly for compute.

The Xeon Phi acts as a multi-core system on chip running its own operating system, a modified Linux kernel. Each Xeon Phi card has its own IP address however, the Xeon Phi can not operate on its own. A "normal" Xeon will be be the host CPU, the Xeon Phi card is a coprocessor, similar to the way your CPU and GPU work together.

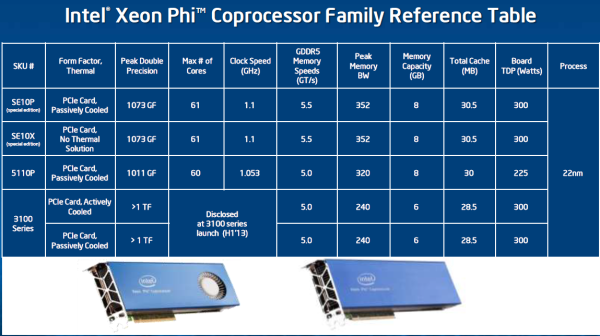

Below you can see the SKUs that Intel will offer.

The Xeon Phi inside the Stampede are special edition Xeon Phis.These special editions get 61 cores and run at a slightly higher clockspeed (1.1 GHz).

The commercially avialable 5110P has one core and 50 MHz less than the special edition Phi but comes with 8 GB of ECC memory. The P-suffix indicates that it's passively cooled, relying on the host server for airflow. The 5110P is not cheap at $2699, but it's still more affordable than NVIDIA's Tesla K20 ($3199). The Xeon Phi 5100 series is really intended for more memory bandwidth bound applications thanks to the use of 5GHz GDDR5 and a fully populated 512-bit memory interface.

For compute bound applications however, Intel will offer the Xeon Phi 3100 series in the first half of next year for less than $2000. The Xeon Phi 3100 will come with 6GB of GDDR5 (5GHz data rate) and only a 384-bit memory interface. Core clock should be higher, delivering over 1TFLOP of DP FP performance.

The Xeon Phi cards use a 7GHz PCIe 2.0 interface, as Intel found moving to PCIe 3.0 resulted in slightly higher overhead.

46 Comments

View All Comments

SodaAnt - Wednesday, November 14, 2012 - link

It does support the x86 instruction set though, so it shouldn't be too hard to port.MrSpadge - Wednesday, November 14, 2012 - link

But you have to use the custom vector format to stampede anything.Kevin G - Saturday, November 17, 2012 - link

In theory it should run the current the Linux version of F@H without modification. That catch is that the current version is going to be horribly suboptimal as it doesn't natively support the 512 bit wide vector format used by the Xeon Phi. This would leave only the x87 FPU for calculations. This would allow the 60 scalar FPU's to be used but limit performance to a mere 60 GFLOP across all the cores. There maybe some weird scheduling oddities with Linux and/or F@H due to the chips ability to expose 240 logical processors to the host OS (the result would be better performance from running multiple instances in parallel instead of one large instance using 240 threads).An OpenCL version of F@H might be coaxed to working and it that would utilize the 512 bit vector units. Intel would have to have OpenCL drivers available for this to even have a chance of working. This would allow the full ~1 TFLOP performance to be utilized.

SydneyBlue120d - Wednesday, November 14, 2012 - link

Why did Intel choose a custom SIMD format? Why not AVX?Jaybus - Thursday, November 15, 2012 - link

Because they needed heavier duty vector units. Each Phi core has 32 512-bit registers, where Core i7 has 16 256-bit registers. They just didn't implement the backward compatibility, probably to reduce complexity. It is certainly possible to do, and we may indeed see AVX, SSE, etc. added in a future revision.Kevin G - Saturday, November 17, 2012 - link

The 512 bit vector instructions change how exceptions and the register masking are handled in comparison to AVX. Outside of that, the vector instructions are similar to how AVX instructions are formatted and the output complies with IEEE floating point standards. So while there is a distinct break in ISA capabilities, it does appear that it is possible to bridge the two together in future designs. Still it is odd that Intel has forked their ISA.coder543 - Wednesday, November 14, 2012 - link

I just want to know how much it will cost.Why is Intel keeping this such a ridiculous secret? Knowing Intel, these will easily be $2,000+ a piece, if not much higher, but I still want to *know.*

LogOver - Wednesday, November 14, 2012 - link

Did you read the article at all? Check the second page again.Comdrpopnfresh - Wednesday, November 14, 2012 - link

How could PCIe 3.0 result in more overhead?nutgirdle - Wednesday, November 14, 2012 - link

I concur. A major dis-advantage to co-processor computing is the time it takes to move data on and off the card. The PCIe 2.0 bus is already a bottleneck in our workflow involving a Tesla card. This was a very short-sighted omission.