NVIDIA Launches Tesla K20 & K20X: GK110 Arrives At Last

by Ryan Smith on November 12, 2012 9:00 AM EST

NVIDIA Launches Tesla K20, Cont

To put the Tesla K20's performance in perspective, this is a very significant increase in the level of compute performance NVIDIA can offer with the Tesla lineup. The Fermi based M2090 offered 665 GFLOPS of performance with FP64 workloads, while the K20X will nearly double that with 1.31 TFLOPS. Meanwhile in the 225W envelope the 1.17 TFLOPS K20 will be replacing the 515 GFLOPS M2075, more than doubling NVIDIA’s FP64 performance there. As for FP32 workloads the gains are even greater due to the fact that NVIDIA’s FP64 rate has fallen from 1/2 of the FP32 rate on GF100/GF110 Fermi to 1/3 on GK110 Kepler; the 1.33 TFLOPS M2090 for example is being replaced by the 3.95 TFLOPS K20X.
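For readers who want to check the generational math, the ratios above can be reproduced with a few lines of Python. This is only a back-of-the-envelope sketch using the peak figures quoted in this article; the variable and function names are our own, and theoretical peaks say nothing about real world throughput.

```python
# Peak throughput figures quoted in the article, in GFLOPS.
# These are theoretical peaks, not measured results.
K20_FP64 = 1170.0    # Tesla K20, 225W part
M2075_FP64 = 515.0   # Fermi part the K20 replaces
K20X_FP64 = 1310.0   # Tesla K20X
K20X_FP32 = 3950.0   # Tesla K20X
M2090_FP32 = 1330.0  # Fermi part the K20X replaces


def speedup(new_gflops, old_gflops):
    """Return how many times faster the new part is at peak."""
    return new_gflops / old_gflops


# FP64 gain in the 225W envelope (K20 replacing M2075).
fp64_gain_225w = speedup(K20_FP64, M2075_FP64)

# FP32 gain at the top of the lineup (K20X replacing M2090).
fp32_gain_flagship = speedup(K20X_FP32, M2090_FP32)

# FP64 throughput as a fraction of FP32 throughput on GK110.
k20x_fp64_rate = K20X_FP64 / K20X_FP32

print(f"K20 vs M2075, FP64:  {fp64_gain_225w:.2f}x")
print(f"K20X vs M2090, FP32: {fp32_gain_flagship:.2f}x")
print(f"K20X FP64:FP32 rate: {k20x_fp64_rate:.2f}")
```

The FP64 gain in the 225W envelope works out to a bit over 2x, the FP32 gain at the top end to just under 3x, and the K20X's FP64 rate lands at roughly 1/3 of its FP32 rate, matching the figures in the text.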

Speaking of FP32 performance, when asked about the K10 NVIDIA told us that K20 would not be replacing K10; rather, the two will exist side-by-side. K10 actually has better FP32 performance at 4.5 TFLOPS (albeit split across two GPUs), but as it’s based on the GK104 GPU it lacks some Tesla features like on-die (SRAM) ECC protection and HyperQ/Dynamic Parallelism. K10 will continue to exist for the users it already serves well, while for FP64 users and users who need ECC and other Tesla features K20 will now step up to the plate as NVIDIA’s other FP32 compute powerhouse.

The Tesla K20 family will be going up against a number of competitors, both traditional and new. On a macro level the K20 family and supercomputers based on it like Titan will go up against more traditional supercomputers like those based on IBM’s BlueGene/Q hardware, which Titan is just now dethroning in the Top500 list.

A Titan compute board: 4 AMD Opteron (16-core CPUs) + 4 NVIDIA Tesla K20 GPUs

Meanwhile on a micro/individual level the K20 family will be going up against products like AMD’s FirePro S9000 and FirePro S10000, along with Intel’s Xeon Phi, their first product based on their GPU-like MIC architecture. Both the Xeon Phi and FirePro S series can exceed 1 TFLOPS FP64 performance, making them potentially strong competition for the K20. Ultimately these products aren’t going to be separated by their theoretical performance but rather their real world performance, so while NVIDIA has a significant 30%+ lead in theoretical performance over their most similar competition (FirePro S9000 and Xeon Phi) it's too early to tell whether the real world performance difference will be quite that large, or conversely whether it will be even larger. Tool chains will also play a huge part here, with K20 relying predominantly on CUDA, the FirePro S on OpenCL, and the Xeon Phi on x86 coupled with Phi-specific tools.

Finally, let’s talk about pricing and availability. NVIDIA’s previous projection for K20 family availability was December, but they have now moved ahead by a couple of weeks. K20 products are already shipping to NVIDIA’s server partners, with those partners and NVIDIA both getting ready to ship to buyers soon after that. NVIDIA’s general guidance is November-December, so some customers should have K20 cards in their hands before the end of the month.

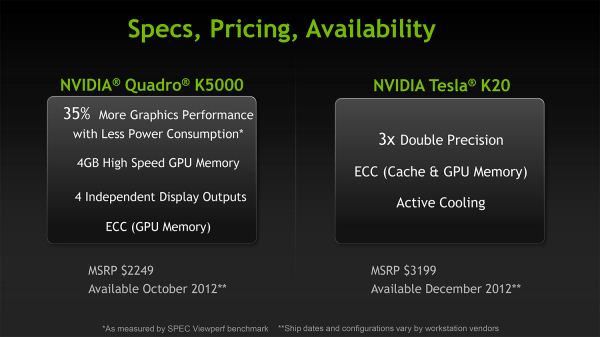

Meanwhile pricing will fall somewhere in the $3000 to $5000 range. The spread is wide mostly because NVIDIA’s list prices rarely line up with the retail price of their cards, or with what their server partners charge customers for specific cards. Back at the Quadro K5000 launch NVIDIA announced an MSRP of $3199 for the K20, and we’d expect the shipping K20 to trend close to that. Meanwhile we expect the K20X to trend closer to $4000-$5000, again depending on various markup factors.

K20 Pricing As Announced During Quadro K5000 Launch

As for the total number of cards they’re looking at shipping and the breakdown of K20/K20X, NVIDIA’s professional solutions group is as mum as usual, but whatever it is we’re being told it won’t initially be enough. NVIDIA is already taking pre-orders through their server partners, with a very large number of pre-orders outstripping the supply of cards and creating a backlog.

Interestingly NVIDIA tells us that their yields are terrific – a statement backed up in their latest financial statement – so the problem NVIDIA is facing appears to be demand and allocation rather than manufacturing. This isn’t necessarily a good problem to have as either situation involves NVIDIA selling fewer Teslas than they’d like, but it’s the better of the two scenarios. Similarly, for the last month NVIDIA has been offering time on a K20 cluster to customers, only for it to end up being oversubscribed due to the high demand from customers. So NVIDIA has no shortage of customers at the moment.

Ultimately the Tesla K20 launch appears to be shaping up very well for NVIDIA. Fermi was NVIDIA’s first “modern” compute architecture, and while it didn’t drive the kind of exponential growth that NVIDIA had once predicted it was very well received regardless. Though there’s no guarantee that Tesla K20 will finally hit that billion dollar mark, the K20 enthusiasm coming out of NVIDIA is significant, legitimate, and infectious. Powering the #1 computer in the Top500 list is a critical milestone for the company’s Tesla business and is just about the most positive press the company could ever hope for. With Titan behind them, Tesla K20 may be just what the company needs to finally vault themselves into a position as a premier supplier of HPC processors.

73 Comments

DanNeely - Monday, November 12, 2012 - link

The Tesla (and quadro) cards have always been much more expensive than their consumer equivalents. The Fermi generation M2090 and M2070Q were priced at the same several thousand dollar pricepoint as K20 family; but the gaming oriented 570/580 were at the normal several hundred dollar prices you'd expect for a high end GPU.

wiyosaya - Tuesday, November 13, 2012 - link

Yes, I understand that; however, IMHO, the performance differences are not significant enough to justify the huge price difference unless you work in very high end modeling or simulation.

To me, with this generation of chips, this changes. I paid close attention to 680 reviews, and DP performance on 680 based cards is below that of the 580 - not, of course, that it matters to the average gamer. However, I highly doubt that the chips in these Teslas would not easily adapt to use as graphics cards.

While it is nVidia's right to sell these into any market they want, as I see it, the only market for these cards is the HPC market, and that is my point. It will be interesting to see if nVidia continues to be able to make a profit on these cards now that they are targeted only at the high-end market. With the extreme margins on these cards, I would be surprised if they are unable to make a good profit on them.

In other words, do they sell X amount at consumer prices, or do they sell Y amount at professional prices, and which target market would be the better market for them in terms of profits? IMHO, X is likely the market where they will sell many times as many chips as they do in the Y market, but, for example, they can only charge 5X for the Y card. If they sell ten times the chips in X market, they will have lost profits by targeting the Y market with these chips.

Also, nVidia is writing their own ticket on these. They are making the market. They know that they have a product that every supercomputing center will have on its must buy list. I doubt that they are dumb.

What I am saying here is that nVidia could sell these for almost any price they choose to any market. If nVidia wanted to, they could sell this into the home market at any price. It is nVidia that is making the choice of the price point. By selling the 680 at high-end enthusiast prices, they artificially push the price points of the market.

Each time a new card comes out, we expect it to be more expensive than the last generation, and, therefore, consumers perceive that as good reason to pay more for the card. This happens in the gaming market, too. It does not matter to the average gamer that the 580 outperforms the 680 in DP operations; what matters is that games run faster. Thus, the 680 becomes worth it to the gamer and the price of the hardware gets artificially pushed higher - as I see it.

IMHO, the problem with this is that nVidia may paint themselves into an elite market. Many companies have tried this, notably Compaq and currently Apple. Compaq failed, and Apple, depending on what analysts you listen to, is losing its creative edge - and with that may come the loss of its ability to charge high prices for its products. While nVidia may not fall into the "niche" market trap, as I see it, it is a pattern that looms on the horizon, and nVidia may fall into that trap if they are not careful.

CeriseCogburn - Thursday, November 29, 2012 - link

Yep, amd is dying, rumors are it's going to be bought up after a chapter bankruptcy, restructured, saved from permadeath, and of course, it's nVidia that is in danger of killing itself... LOL

Boinc is that insane sound in your head.

NVidia professionals do not hear that sound, they are not insane.

shompa - Monday, November 12, 2012 - link

These are not "home computer" cards. These are cards for high performance calculations "super computers". And the prices are low for this market.

The unique thing about this year's launch is that Nvidia always before sold consumer cards first and supercomputer cards later. This time it's the other way.

Nvidia uses the supercomputer cards for more or less subsidising its "home PC" graphic cards. Usually its the same card but with different drivers.

Home 500 dollars

Workstation 1000-1500 dollars

Supercomputing 3000+ dollars

Three different prices for the same card.

But 7 billion transistors on 28nm will be expensive for home computing. It costs more than 100% more to manufacture these GPUs than Nvidia 680.

7 BILLION. Remember that the first Pentium was the first with 1 MILLION transistors. This is 7000 times more.

kwrzesien - Monday, November 12, 2012 - link

All true.

But I think what has people complaining is that this time around Nvidia isn't going to release this "big" chip to the Home market at all. They signaled this pretty clearly by putting their "middle" chip into the 680. Unless they add a new top-level part name like a 695 or something they have excluded this part from the home graphics naming scheme. Plus since it is heavily FP64 biased it may not perform well for a card that would have to be sold for ~$1000. (Remember they are already getting $500 for their middle-size chip!)

Record profits - that pretty much sums it up.

DanNeely - Monday, November 12, 2012 - link

AFAIK that was necessity speaking. The GK100 had some (unspecified) problems, forcing them to put the GK104 in both the mid and upper range of their product line. When the rest of the GK11x series chips show up and nVidia launches the 7xx series I expect to see GK110's in the top as usual. Having seen nVidia's midrange chip trade blows with their top end one, AMD is unlikely to be resting on its laurels for their 8xxx series.

RussianSensation - Monday, November 12, 2012 - link

Great to see someone who understood the situation NV was in. Also, people think NV is a charity or something. When they were selling 2x 294mm^2 GTX690 for $1000, we can approximate that on a per wafer cost, it would have been too expensive to launch a 550-600mm^2 GK100/110 early in the year and maintain NV's expected profit margins. They also faced wafer shortages which explains why they re-allocated mobile Kepler GPUs and had to delay under $300 desktop Kepler allocation by 6+ months to fulfill 300+ notebook design wins. Sure, it's still Kepler's mid-range chip in the Kepler family, but NV had to use GK104 as flagship.

CeriseCogburn - Thursday, November 29, 2012 - link

kwrsezien, another amd fanboy idiot loser with a tinfoil brain and rumor mongered brainwashed gourd

Everything you said is exactly wrong.

Perhaps an OWS gathering will help your emotional turmoil, maybe you can protest in front of the nVidia campus.

Good luck, wear red.

bebimbap - Monday, November 12, 2012 - link

Each "part" being made with the "same" chip is more expensive for a reason.

For example Hard drives made by the same manufacturer have different price points for enterprise, small business, and home user. I remember an Intel server rep said to use parts that are designed for their workload so enterprise "should" use an enterprise drive and so forth because of costs. And he added further that with extensive testing the bearings used in home user drives will force out their lubricant fluid causing the drive to spin slower and give read/write errors if used in certain enterprise scenarios, but if you let the drive sit on a shelf after it has "failed" it starts working perfectly again because the fluids returned to where they need to be. Enterprise drives also tend to have 1 or 2 orders of magnitude better bit read error rate than consumer drives too.

In the same way I'm sure the tesla, quadro, and gtx all have different firmwares, different accepted error rates, different loads they are tested for, and different binning. So though you say "the same card" they are different.

And home computing has changed and has gone in a different direction. No longer are we gaming in a room that needs a separate AC unit because of the 1500w of heat coming from the computer. We have moved from using 130w CPUs to only 78w. Single gpu cards are no longer using 350w but only 170w. So we went from using +600-1500w systems using ~80% efficient PSUs to using only about ~<300-600w with +90% efficient PSUs, and that is just under high loads. If we were to compare idle power, instead of only using 1/2 we are only using 1/10. We no longer need a GK110 based GPU, and it might be said that it will not make economic sense for the home user.

GK104 is good enough.

EJ257 - Monday, November 12, 2012 - link

The consumer model of this with the fully operational die will be in the $1000 range. 7 billion transistors is a really big chip even for 28nm process.