OCZ Vector (256GB) Review

by Anand Lal Shimpi on November 27, 2012 9:10 PM EST

Times are changing at OCZ. There's a new CEO at the helm, and the company is now focused on releasing fewer products, each of which has gone through more validation and testing than in years past. The hallmark aggressive nature that gave OCZ tremendous marketshare in the channel overstayed its welcome. The new OCZ is supposed to sincerely prioritize compatibility, reliability and general validation testing. Only time will tell if things have changed, but right off the bat there's a different aura surrounding my first encounter with OCZ's Vector SSD.

Gone are the handwritten notes that accompanied OCZ SSD samples in years past, replaced by a much more official looking letter:

The drive itself sees a brand new 7mm chassis. The aluminum colored enclosure features a new label. Only the bottom of the SSD looks familiar as the name, part number and other details are laid out in traditional OCZ fashion.

Under the hood the drive is all new. Vector uses the first home-grown SSD controller by OCZ. Although the Octane and Vertex 4 SSDs both used OCZ Indilinx branded silicon, they were both based on Marvell IP - the controller architecture was licensed, not designed in house. Vector on the other hand uses OCZ's brand new Barefoot 3 controller, designed entirely in-house.

Barefoot 3 is the result of three different teams all working together. OCZ's UK design team, staffed with engineers from the PLX acquisition, the Korea design team inherited after the Indilinx acquisition, and folks at OCZ proper in California all came together to bring Barefoot 3 and Vector to life.

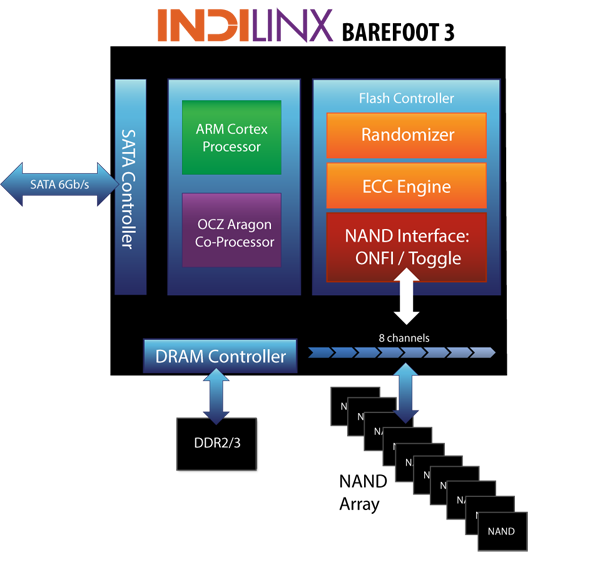

The Barefoot 3 controller integrates an unnamed ARM Cortex core as well as an OCZ Aragon co-processor. OCZ isn't going into a lot of detail as to how these two cores interact or what they handle, but multi-core SoCs aren't anything new in the SSD space. A branded co-processor is a bit unusual, and I suspect that whatever is responsible for Vector's distinct performance has to do with this part of the SoC.

Architecturally, Barefoot 3 can talk to NAND across 8 parallel channels. The controller is paired with two DDR3L-1600 DRAMs, although there's a pad for a third DRAM for use in the case where parity is needed for ECC.

Hardware encryption is not presently supported, although OCZ tells us Barefoot 3 is more than fast enough to handle it should a customer demand the feature. Hardware encryption remains mostly unused and poorly executed on client drives, so its absence isn't too big of a deal in my opinion.

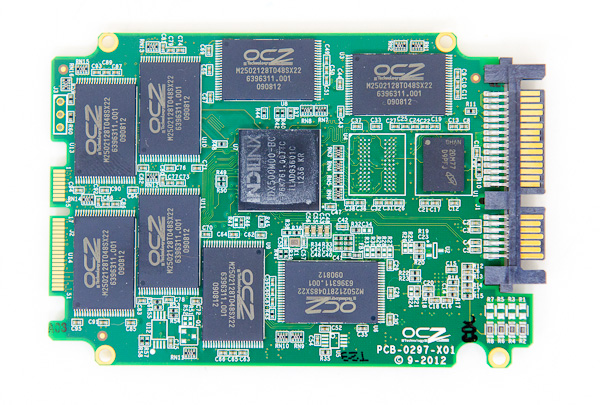

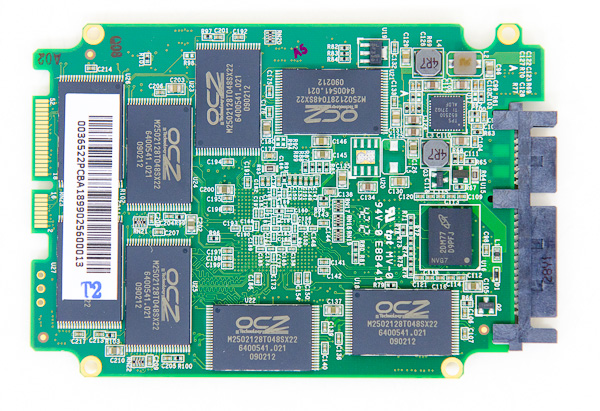

OCZ does its own NAND packaging, and as a result Vector is home to a sea of OCZ branded NAND devices. In reality you're looking at 25nm IMFT synchronous 2-bit-per-cell MLC NAND, just with an OCZ silkscreen on it. There's no NAND redundancy built in to the drive as OCZ is fairly comfortable with the error and failure rates at 25nm. The only spare area set aside is the same 6.8% we see on most client drives (e.g. a 256GB Vector offers 238GB usable space in Windows).
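The spare area figure and the capacity Windows reports both come from the gap between binary and decimal gigabytes. A minimal sketch of that arithmetic follows, assuming (the review does not spell this out) that a 256GB Vector carries 256 GiB of physical NAND and exposes 256 decimal GB to the host. The variable names are illustrative only.

```python
GIB = 2**30   # binary gigabyte, the unit Windows labels "GB"
GB = 10**9    # decimal gigabyte, the unit printed on the drive label

raw_nand_bytes = 256 * GIB   # assumption: physical NAND on the drive
user_bytes = 256 * GB        # assumption: capacity exposed to the host

# Spare area is the share of physical NAND the host can never address.
spare_fraction = (raw_nand_bytes - user_bytes) / raw_nand_bytes

# Windows divides the byte count by 2**30 but still prints "GB".
windows_capacity = user_bytes / GIB

print(f"spare area: {spare_fraction:.2%}")
print(f"capacity shown by Windows: {windows_capacity:.1f} GB")
```

Under those assumptions the result lands just under 7% spare area and a little over 238GB in Windows, in line with the figures quoted above.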

| OCZ Vector | 128GB | 256GB | 512GB |
| --- | --- | --- | --- |
| Sequential Read | 550 MB/s | 550 MB/s | 550 MB/s |
| Sequential Write | 400 MB/s | 530 MB/s | 530 MB/s |
| Random Read | 90K IOPS | 100K IOPS | 100K IOPS |
| Random Write | 95K IOPS | 95K IOPS | 95K IOPS |
| Active Power Use | 2.25W | 2.25W | 2.25W |
| Idle Power Use | 0.9W | 0.9W | 0.9W |

Regardless of capacity, OCZ is guaranteeing the Vector for up to 20GB of host writes per day for 5 years. The warranty on the Vector expires after 5 years or 36.5TB of writes, whichever comes first. As with most similar claims, the 20GB value is pretty conservative and based on a 4KB random write workload. With more realistic client workloads you can expect even more life out of the NAND.
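The 36.5TB limit is simply the daily allowance multiplied out over the warranty period. A quick sketch of that calculation follows. The per-capacity "drive fills" figure is an illustrative extra, not an OCZ specification, and it ignores write amplification.

```python
host_writes_per_day_gb = 20
warranty_years = 5
days_per_year = 365  # leap days ignored

total_writes_gb = host_writes_per_day_gb * days_per_year * warranty_years
total_writes_tb = total_writes_gb / 1000  # decimal TB, as in the 36.5TB figure

print(f"warranty write budget: {total_writes_tb} TB")

# Illustrative only: how many times the budget fills each capacity with host data.
drive_fills = {cap: total_writes_gb / cap for cap in (128, 256, 512)}
for cap, fills in drive_fills.items():
    print(f"{cap}GB drive: about {fills:.0f} full drive writes")
```

Because the budget is the same at every capacity, the larger drives see proportionally fewer full drive writes over the warranty period.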

Despite being built on a brand new SoC, there's a lot of firmware carryover from Vertex 4. Indeed, if you look at the behavior of Vector, it is a lot like a much faster Vertex 4. OCZ does continue to use its performance mode, which enables faster performance if less than 50% of the drive's capacity is used. In practice, however, OCZ seems to rely on it less than in the Vertex 4.

The design cycle for Vector is the longest OCZ has ever endured. It took OCZ 18 months to bring the Vector SSD to market, compared to less than 12 months for previous designs. The additional time was used not only to coordinate teams across the globe, but also to put Vector through more testing and validation than any previous OCZ SSD. It's impossible to guarantee a flawless drive, but doing considerably more testing can't hurt.

The Vector is available starting today in 128GB, 256GB and 512GB capacities. Pricing is directly comparable to Samsung's 840 Pro:

| OCZ Vector Pricing (MSRP) | 64GB | 128GB | 256GB | 512GB |
| --- | --- | --- | --- | --- |
| OCZ Vector | - | $149.99 | $269.99 | $559.99 |
| Samsung SSD 840 Pro | $99.99 | $149.99 | $269.99 | $599.99 |

OCZ is a bit more aggressive on its 512GB MSRP, otherwise it's very clear what OCZ views as Vector's immediate competition.

151 Comments


dj christian - Thursday, November 29, 2012 - link

What is SZ80/100 in the graphs, what do they stand for?

Anand Lal Shimpi - Wednesday, November 28, 2012 - link

You are correct, I ran a 100% span of the 4KB/QD32 random write test. The right way to do this test is actually to gather all IO latency data until you hit steady state, which you can usually do on most consumer drives after just a couple of hours of testing. The problem is the resulting dataset ends up being a pain to process and present.

There is definitely a correlation between spare area and IO consistency, particularly on drives that delay their defragmentation routines quite a bit. If you look at the Intel SSD 710 results you'll notice that despite having much more spare area than the S3700, consistency is clearly worse.

As your results show though, for an emptier drive IO consistency isn't as big of a problem (although if you continued to write to it you'd eventually see the same issues as all of that spare area would get used up). I think there's definitely value in looking at exactly what you're presenting here. The interesting aspect to me is this tells us quite a bit about how well drives make use of empty LBA ranges.

I tend to focus on the worst case here simply because that ends up being what people notice the most. Given that consumers are often forced into a smaller capacity drive than they'd like, I'd love to encourage manufacturers to pursue architectures that can deliver consistent IO even with limited spare area available.

Take care,

Anand

jwilliams4200 - Wednesday, November 28, 2012 - link

Anand wrote:

"As your results show though, for an emptier drive IO consistency isn't as big of a problem (although if you continued to write to it you'd eventually see the same issues as all of that spare area would get used up)."

Actually, all of my tests did use up all the spare area, and had reached steady state during the graph shown. Perhaps you have misunderstood how I did my tests. I just overprovisioned it so that it had almost as much spare area as the Intel S3700. Otherwise, I was doing the same thing as you did in your tests.

The conclusion to be drawn is that the Intel S3700 is not all that special. You can approach the same performance as the S3700 with a consumer SSD, at least with a Samsung 840 Pro, just by overprovisioning enough.

Look at this one again:

http://i.imgur.com/Vvo1H.png

It reaches steady state somewhere between 80 and 120GB. The spare area is used up at about 62GB and the speed drops precipitously, but then there is a span where the speed actually increases slightly, and then levels out somewhere around 80-120GB.

Note that steady state is about 110MB/sec. That is about 28K IOPS. Not as good as the Intel S3700, but certainly approaching it.
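The conversion from throughput to IOPS depends on whether the graph's "MB" means 10^6 or 2^20 bytes, which the comment does not say. A quick sketch of both readings follows, assuming each 4KB write is 4,096 bytes. The function name `iops` is just for illustration.

```python
def iops(throughput_bytes_per_s: float, io_size_bytes: int = 4096) -> float:
    """Convert a sustained throughput into IO operations per second."""
    return throughput_bytes_per_s / io_size_bytes

decimal_reading = iops(110 * 10**6)  # 110 MB/s with MB = 10**6 bytes
binary_reading = iops(110 * 2**20)   # 110 MiB/s with MiB = 2**20 bytes

print(f"decimal MB/s: {decimal_reading:,.0f} IOPS")
print(f"binary MiB/s: {binary_reading:,.0f} IOPS")
```

The binary reading gives 28,160 IOPS, which matches the "about 28K" figure. The decimal reading comes out a little under 27K.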

Ictus - Wednesday, November 28, 2012 - link

Hey J, thanks for taking the time to reply to me in the other comment.

I think my question is even more noobish than you have assumed.

"I just overprovisioned it so that it had almost as much spare area as the Intel S3700. Otherwise, I was doing the same thing as you did in your tests."

I am confused because I thought the only way to "over-provision" was to create a partition that didn't use all the available space??? If you are merely writing raw data up to the 80% full level, what exactly does over provisioning mean? Does the term "over provisioning" just mean you didn't fill the entire drive, or you did something to the drive?

jwilliams4200 - Wednesday, November 28, 2012 - link

No, overprovisioning generally just means that you avoid writing to a certain range of LBAs (aka sectors) on the SSD. Certainly one way to do that is to create a partition smaller than the capacity of the SSD. But that is completely equivalent to writing to the raw device but NOT writing to a certain range of LBAs. The key is that if you don't write to certain LBAs, however that is accomplished, then the SSD's flash translation table (FTL) will not have any mapping for those LBAs, and some or all SSDs will be smart enough to use those unmapped-LBAs as spare area to improve performance and wear-leveling.

So no, I did not "do something to the drive". All I did was make sure that fio did not write to any LBAs past the 80% mark.
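To make the "80% mark" concrete, here is a rough sketch of how such a write limit and the resulting effective spare area could be computed. It is not the commenter's script. The 256GB capacity, the 256 GiB of physical NAND, the 4,096-byte block size, and both helper names are assumptions chosen for illustration.

```python
GIB = 2**30
GB = 10**9
BLOCK_BYTES = 4096  # assumption: 4KB writes, each 4,096 bytes

def writable_blocks(capacity_bytes: int, fill_percent: int) -> int:
    """Number of 4KB blocks, counted from LBA 0, that the workload may touch."""
    return capacity_bytes * fill_percent // 100 // BLOCK_BYTES

def effective_spare_fraction(raw_nand_bytes: int, written_bytes: int) -> float:
    """Share of physical NAND that never holds mapped user data."""
    return (raw_nand_bytes - written_bytes) / raw_nand_bytes

capacity = 256 * GB   # assumption: advertised capacity of the drive
raw_nand = 256 * GIB  # assumption: physical NAND behind that capacity

blocks = writable_blocks(capacity, fill_percent=80)
spare = effective_spare_fraction(raw_nand, blocks * BLOCK_BYTES)

print(f"blocks the test may write: {blocks:,}")
print(f"effective spare area: {spare:.1%}")
```

Under these assumptions, staying below the 80% mark leaves roughly a quarter of the physical NAND unmapped, compared with just under 7% when the whole LBA range is written.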

gattacaDNA - Sunday, December 2, 2012 - link

"The conclusion to be drawn is that the Intel S3700 is not all that special. You can approach the same performance as the S3700 with a consumer SSD, at least with a Samsung 840 Pro, just by overprovisioning enough."

WOW - this is an interesting discussion which concludes that by simply over-provisioning a consumer SSD by 20-30% those units can approach the vetted S3700! I had to re-read those posts 2x to be sure I read that correctly.

It seems some later posts state that if the workload is not sustained (drive can recover) and the drive is not full, that the OP has little to no benefit.

So is the best bang really just to not fill the drives past 75% of the available area and call it a day?

jwilliams4200 - Sunday, December 2, 2012 - link

The conclusion I draw from the data is that if you have a Samsung 840 Pro (or similar SSD, I believe several consumer SSDs behave similarly with respect to OP), and the big one -- IF you have a very heavy, continuous write workload, then you can achieve large improvements in throughput and huge improvements in maximum latency if you overprovision at 80% (i.e., leave 20% unwritten or unpartitioned).

Note that such OP is not needed for most desktop users, for two reasons. First, most desktop users will not fill the drive 100% and as long as they have TRIM working, and if the drive is only filled to 80% (even if the filesystem covers all 100%), then it should behave as if it were actually overprovisioned at 80%. Second, most desktop users do not continuously write tens of Gigabytes of data without pause.

gattacaDNA - Sunday, December 2, 2012 - link

Thank You. That's what my take-away is as well.

jwilliams4200 - Wednesday, November 28, 2012 - link

By the way, I am not sure why you say the data sets are "a pain to process and present". I have written some test scripts to take the data automatically and to produce the graphs automatically. I just hot-swap the SSD in, run the script, and then come back when it is done to look at the graphs.

Also, the best way to present latency data is in a cumulative distribution function (CDF) plot with a normal probability scale on the y-axis, like this:

http://i.imgur.com/RcWmn.png

http://i.imgur.com/arAwR.png
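For readers unfamiliar with that kind of plot, the sketch below shows one way the points could be computed. It is not the commenter's script. The function name, the `(rank - 0.5) / n` plotting position, and the latency values are all assumptions made for illustration.

```python
from statistics import NormalDist

def normal_probability_points(latencies_ms):
    """Return (latency, cumulative probability, z) triples for a CDF plot.

    z is the standard normal quantile of the cumulative probability, which is
    the y-coordinate on a normal probability scale.
    """
    ordered = sorted(latencies_ms)
    n = len(ordered)
    standard_normal = NormalDist()
    points = []
    for rank, value in enumerate(ordered, start=1):
        p = (rank - 0.5) / n            # keeps p strictly between 0 and 1
        z = standard_normal.inv_cdf(p)  # position on the normal probability axis
        points.append((value, p, z))
    return points

# Made-up latency samples in milliseconds, including two slow outliers.
sample = [0.21, 0.19, 0.25, 0.22, 3.8, 0.20, 0.23, 0.24, 12.5, 0.22]
points = normal_probability_points(sample)
for latency, p, z in points:
    print(f"{latency:6.2f} ms  p={p:.2f}  z={z:+.2f}")
```

Plotting latency against z stretches both tails of the distribution, so rare worst-case latencies stay visible instead of being squeezed against the top of a linear axis.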

One other tip is that it does not take hours to reach steady state if you use a random map. This means that you do a random write to all the LBAs, but instead of sampling with replacement, you keep a map of the LBAs you have already written to and don't randomly select the same ones again. In other words, write each 4K-aligned LBA on a tile, put all the tiles in a bag, and randomly draw the tiles out but do not put the drawn tile back in before you select the next tile. I use the 'fio' program to do this. With an SSD like the Samsung 840 Pro (or any SSD that can do 300+ MB/s 4KQD32 random writes), you only have to write a little more than the capacity of the SSD (e.g., 256GB + 7% of 256GB) to reach steady state. This can be done in 10 or 20 minutes on fast SSDs.
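The tiles-in-a-bag idea amounts to a shuffled permutation of block indices. The toy sketch below contrasts it with sampling with replacement. It does not reproduce how fio implements its random map, and the function names and the tiny block count are hypothetical.

```python
import random

def random_map_order(num_blocks: int, seed: int = 0) -> list[int]:
    """Visit every block exactly once, in random order (tiles drawn from a bag)."""
    order = list(range(num_blocks))
    random.Random(seed).shuffle(order)
    return order

def with_replacement_order(num_blocks: int, seed: int = 0) -> list[int]:
    """Pick a random block for each write; some blocks repeat, others are missed."""
    rng = random.Random(seed)
    return [rng.randrange(num_blocks) for _ in range(num_blocks)]

num_blocks = 1000  # toy size; a real drive has tens of millions of 4KB blocks
covered_by_map = len(set(random_map_order(num_blocks)))
covered_by_replacement = len(set(with_replacement_order(num_blocks)))

print(f"random map touched {covered_by_map} of {num_blocks} blocks")
print(f"with replacement touched {covered_by_replacement} of {num_blocks} blocks")
```

After one drive's worth of writes, the random map has touched every block, while sampling with replacement still leaves a sizable share untouched. That is why the former needs only a little more than one pass over the capacity.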

Brahmzy - Wednesday, November 28, 2012 - link

I consistently over-provision every single SSD I use by at least 20%. I have had stellar performance doing this with 50-60+ SSDs over the years.

I do this on friend's/family's builds and tell anybody I know to do this with theirs. So, with my tiny sample here, OP'ing SSDs is a big deal, and it works. I know many others do this as well. I base my purchase decisions with OP in mind. If I need 60GB of space, I'll buy a 120GB. If I need 120GB of usable space, I'll buy a 250GB drive, etc.

I think it would be a valuable addition to the Anand suite of tests to account for this option that many of us use. Maybe a 90% OP write test and maybe an 80% OP write test, assuming there's a consistent difference between the two.