OCZ Vector (256GB) Review

by Anand Lal Shimpi on November 27, 2012 9:10 PM EST

TRIM Functionality

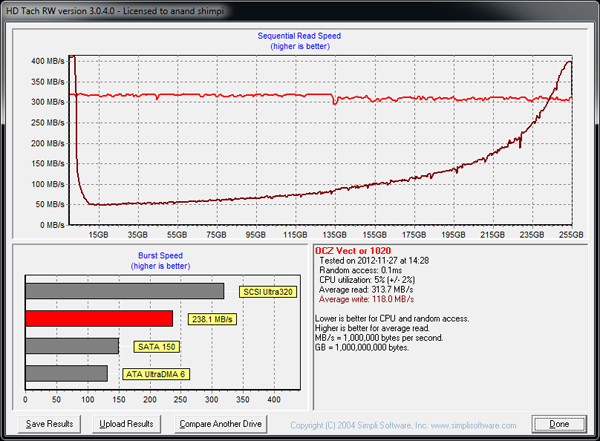

Over time SSDs can get into a fairly fragmented state, with pages distributed randomly all over the LBA range. TRIM and the naturally sequential nature of much client IO can help clean this up by forcing blocks to be recycled and as a result become less fragmented. Leaving as much free space as possible on your drive helps keep performance high (20% is a good number to shoot for), but it's always good to see how bad things can get before the GC/TRIM routines have a chance to operate. As always, I filled all user-addressable LBAs with data, wrote enough random data to the drive to fill the spare area and then some, then ran a single HD Tach pass to visualize how slow things got:

As we showed in our enterprise results, Vector's steady state 4KB random write performance is around 33MB/s. The worst case sequential performance here is around 50MB/s, which is in line with what you'd expect. Sequential writes do improve performance, but as with most SSDs you're best off operating the Vector with a bit of spare area left on the drive (in addition to what's already set aside by firmware).

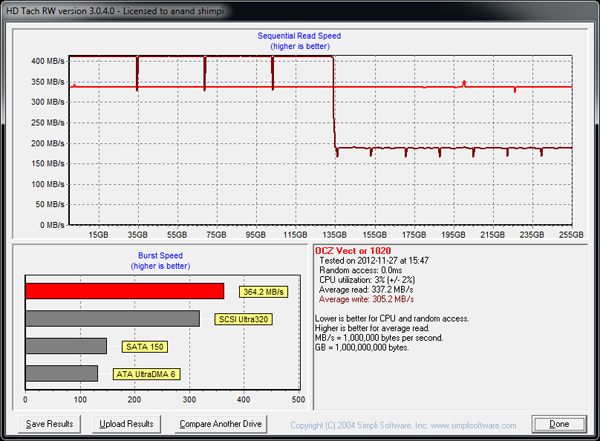

TRIM and another sequential pass restore performance to normal, but the pass also triggers the Vector's performance mode penalty:

At 50% capacity there's an internal reorganization routine that's triggered on Vector, similar to what happens on the Vertex 4. During this time, all performance is impacted, which is why you see a sharp drop in performance just before the 135GB mark. The re-org routine only takes a few minutes. I went back and measured sequential write performance after this test and came back with 380MB/s in Iometer. In other words, don't be startled by the graph above - it's expected behavior. It just looks bad because the drive doesn't get a chance to run its background operations in peace.

151 Comments

dj christian - Thursday, November 29, 2012 - link

"Now, according to Anandtech, a 256GB-labelled SSD actually *HAS* the full 256GiB (275GB) of flash memory. But you lose 8% of flash for provisioning, so you end up with around 238GiB (255GB) anyway. It displays as 238GB in Windows. If the SSDs really had 256GB (238GiB) of space as labelled, you'd subtract your 8% and get 235GB (219GiB) which displays as 219GB in Windows."

Uuh what?
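
As a rough check of the arithmetic in the quoted comment, here is a minimal Python sketch that converts between decimal gigabytes (GB) and binary gibibytes (GiB). It assumes 256GiB of raw NAND and 256GB of user-visible capacity, as the quote describes; the helper and variable names are made up for illustration.

```python
GIB = 2 ** 30   # bytes in one gibibyte (binary)
GB = 10 ** 9    # bytes in one gigabyte (decimal)


def gib_to_gb(gib):
    """Convert binary gibibytes to decimal gigabytes."""
    return gib * GIB / GB


def gb_to_gib(gb):
    """Convert decimal gigabytes to binary gibibytes."""
    return gb * GB / GIB


# Assumption taken from the quoted comment: 256GiB of raw flash on the drive.
raw_nand_gib = 256
# Assumption: the drive exposes 256GB (decimal) to the user.
user_capacity_gb = 256

raw_nand_gb = gib_to_gb(raw_nand_gib)            # raw flash in decimal GB
user_capacity_gib = gb_to_gib(user_capacity_gb)  # what Windows labels as "GB"

# Fraction of the raw flash that is not user addressable (spare area).
spare_fraction = 1 - user_capacity_gib / raw_nand_gib

print(f"Raw NAND:      {raw_nand_gb:.1f} GB")
print(f"User capacity: {user_capacity_gib:.1f} GiB")
print(f"Spare area:    {spare_fraction:.1%}")
```

Under those assumptions the spare area works out to a little under 7% of the NAND, and the user capacity shows up as roughly 238GB in Windows, which reports binary units but labels them GB.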

sully213 - Wednesday, November 28, 2012 - link

I'm pretty sure he's referring to the amount of NAND on the drive minus the 6.8% set aside as spare area, not the old mechanical meaning where you "lost" disk space when a drive was formatted because of base 10 to base 2 conversion.

JellyRoll - Tuesday, November 27, 2012 - link

How long does the heavy test take? The longest recorded busy time was 967 seconds from the Crucial M4. This is only 16 minutes of activity. Does the trace replay in real time, or does it run compressed? 16 minutes surely doesn't seem to be that much of a long test.

DerPuppy - Tuesday, November 27, 2012 - link

Quote from text "Note that disk busy time excludes any and all idles, this is just how long the SSD was busy doing something:"

JellyRoll - Tuesday, November 27, 2012 - link

Yes, I took note of that :). That is the reason for the question though: if we had an idea of how long the idle periods were, we could take into account how long the GC on each drive gets to run, and how well it works.

Anand Lal Shimpi - Wednesday, November 28, 2012 - link

I truncate idles longer than 25 seconds during playback. The total runtime on the fastest drives ends up being around 1.5 hours.

Take care,

Anand
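
The idle truncation described in the reply above can be sketched in a few lines of Python. This is only an illustration, not the actual playback tool: the trace is assumed to be a sorted list of IO start times in seconds, `truncate_idles` is a hypothetical helper, and the gap between consecutive start times is used as a stand-in for idle time (a real trace would measure from the previous IO's completion).

```python
def truncate_idles(timestamps, max_idle_s=25.0):
    """Return new timestamps with every gap capped at max_idle_s seconds.

    timestamps: sorted IO start times in seconds (hypothetical trace format).
    """
    if not timestamps:
        return []
    replay = [timestamps[0]]
    for prev, cur in zip(timestamps, timestamps[1:]):
        gap = cur - prev
        # Gaps at or below the cap are kept as recorded; longer ones are cut.
        replay.append(replay[-1] + min(gap, max_idle_s))
    return replay


# Hypothetical trace: a 90 second idle between the second and third IO.
recorded = [0, 10, 100, 105]
print(truncate_idles(recorded))
```

Short gaps are replayed as recorded, so only the long idle stretches shrink and the total runtime drops without changing how closely spaced the busy IOs are.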

Kristian Vättö - Wednesday, November 28, 2012 - link

And on Crucial v4 it took 7 hours...

JellyRoll - Wednesday, November 28, 2012 - link

Wouldn't this compress the QD during the test period? If the SSD's recorded activity is QD2 for an hour, and the trace is then replayed quickly, this creates a high QD situation. QD2 for an hour compressed to 5 minutes is going to play back at a much higher QD.

dj christian - Thursday, November 29, 2012 - link

What is QD?

doylecc - Tuesday, December 4, 2012 - link

Queue Depth
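
The concern raised earlier in the thread, that squeezing an hour of QD2 activity into 5 minutes raises the queue depth, can be illustrated with Little's law (average outstanding IOs = arrival rate x average latency). The sketch below is a simplification with made-up numbers: it assumes the whole trace is uniformly time-compressed and that per-IO latency stays constant, neither of which is confirmed for the actual test. Truncating only the long idle periods, as described in the reply above, would not raise queue depth during the busy periods in the same way.

```python
def average_queue_depth(io_count, mean_latency_s, duration_s):
    """Little's law: average outstanding IOs = arrival rate * mean latency."""
    arrival_rate = io_count / duration_s  # IOs per second
    return arrival_rate * mean_latency_s


# Hypothetical workload chosen so the recorded hour averages QD2.
io_count = 7_200_000
mean_latency_s = 0.001  # assumed constant 1 ms per IO

recorded_qd = average_queue_depth(io_count, mean_latency_s, 3600.0)
compressed_qd = average_queue_depth(io_count, mean_latency_s, 300.0)

print(f"Recorded over 1 hour:    QD {recorded_qd:.0f}")
print(f"Replayed over 5 minutes: QD {compressed_qd:.0f}")
```

In practice a drive would saturate and latency would climb well before a 12x increase in arrival rate could be sustained, so this is best read as an upper-bound intuition, not a prediction.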