OCZ Vector (256GB) Review

by Anand Lal Shimpi on November 27, 2012 9:10 PM EST

AnandTech Storage Bench 2011

Two years ago we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
| --- | --- |
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
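To make the two reported metrics concrete, here is a minimal Python sketch of how average MB/s and disk busy time could be derived from a list of trace records, broken out into reads, writes and combined. The `IoRecord` type, its field names and the `summarize` function are hypothetical and for illustration only; the actual Storage Bench tooling is not described here. The sketch assumes that average data rate is bytes transferred divided by busy time, and that per-IO busy times can simply be summed, which ignores overlap between IOs at queue depths above 1.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class IoRecord:
    """One IO from a trace (hypothetical layout, not the real trace format)."""
    is_write: bool
    size_bytes: int
    busy_seconds: float  # time the drive spent servicing this IO, idle time excluded


def summarize(records: Iterable[IoRecord]) -> dict:
    """Return average MB/s and busy time for reads, writes and combined."""
    # Per-category accumulators: [total bytes, total busy seconds]
    totals = {"read": [0, 0.0], "write": [0, 0.0]}
    for rec in records:
        key = "write" if rec.is_write else "read"
        totals[key][0] += rec.size_bytes
        totals[key][1] += rec.busy_seconds

    # Combined is the sum of both categories (assumes no overlapping IOs).
    totals["combined"] = [
        totals["read"][0] + totals["write"][0],
        totals["read"][1] + totals["write"][1],
    ]

    summary = {}
    for name, (nbytes, busy) in totals.items():
        # Assumed definition: decimal megabytes moved per second of busy time.
        rate = (nbytes / 1e6) / busy if busy > 0 else 0.0
        summary[name] = {"avg_mb_per_s": rate, "busy_seconds": busy}
    return summary


if __name__ == "__main__":
    sample = [
        IoRecord(is_write=False, size_bytes=4_000_000, busy_seconds=0.02),
        IoRecord(is_write=True, size_bytes=8_000_000, busy_seconds=0.08),
    ]
    for name, stats in summarize(sample).items():
        print(name, stats)
```

Under these assumptions a faster drive shows up twice: as a higher average MB/s and as a shorter busy time for the same trace.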

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running in 2010.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011 - Heavy Workload

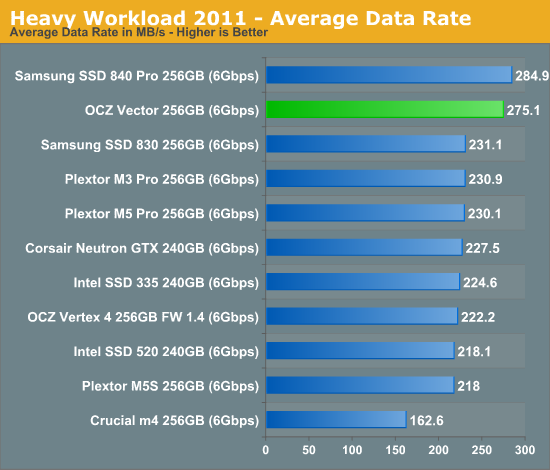

We'll start out by looking at average data rate throughout our new heavy workload test:

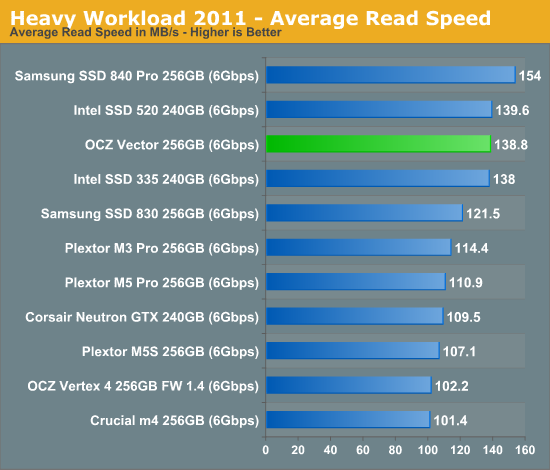

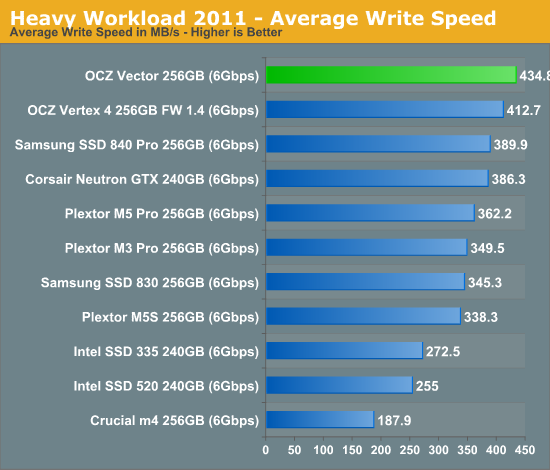

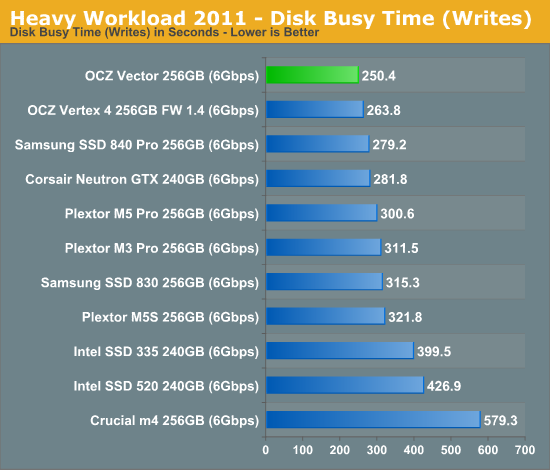

Here it is. OCZ's Vector comes within 4% of Samsung's SSD 840 Pro and manages a 22% increase in performance compared to the Vertex 4. The breakdown shows that the Vector's strong write performance is really what pushes it over the edge. At the same time, OCZ has finally addressed whatever poor read performance issues plagued the Vertex 4 in our test - the Vector is a different beast here.

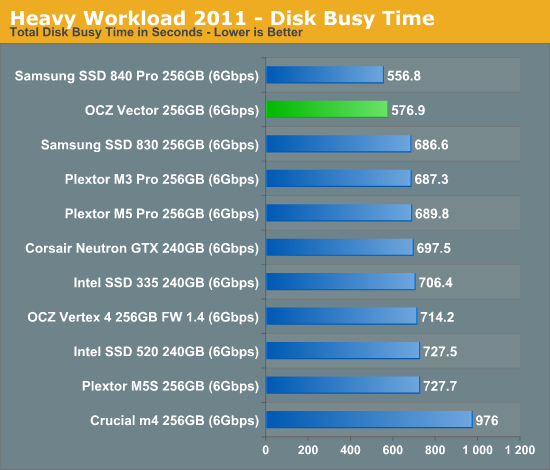

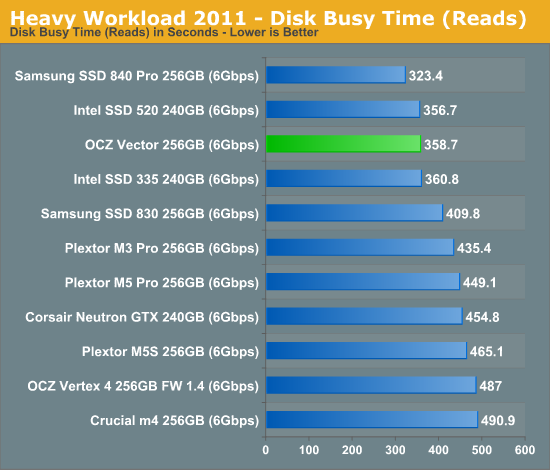

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

151 Comments


kmmatney - Tuesday, November 27, 2012 - link

I don't see anything wrong with stating that. My 256 Samsung 830 also appears as a 238GB drive in windows...

jwilliams4200 - Tuesday, November 27, 2012 - link

The problem is that "formatting" a drive does not change the capacity. Windows is displaying the capacity in GiB, not GB. It is just a Windows bug that they label their units incorrectly.

Gigaplex - Tuesday, November 27, 2012 - link

Yes and no. There is some overhead in formatting which reduces usable capacity, but the GiB/GB distinction is a much larger factor in the discrepancy.

jwilliams4200 - Wednesday, November 28, 2012 - link

The GiB/GB bug in Windows accounts for almost all of the difference. It is not worth mentioning that partitioning usually leaves 1MiB of space at the beginning of the drive. 256GB = 238.4186GiB. If you subtract 1MiB from that, it is 238.4176GiB. So why bother to split hairs?

Anand Lal Shimpi - Wednesday, November 28, 2012 - link

This is correct. I changed the wording to usable vs. formatted space, I was using the two interchangeably. The GiB/GB conversion is what gives us the spare area.

Take care,

Anand

suprem1ty - Thursday, November 29, 2012 - link

It's not a bug. Just a different way of looking at digital capacity.

suprem1ty - Thursday, November 29, 2012 - link

Oh wait sorry I see what you mean now. Disregard previous post

flyingpants1 - Wednesday, November 28, 2012 - link

I think I might know what his problem is. When people see their 1TB-labelled drive displays only 931GB in Windows, they assume it's because formatting a drive with NTFS magically causes it to lose 8% of space, which is totally false. Here's a short explanation for newbie readers. A gigabyte (GB) as displayed in Windows is actually a gibibyte (GiB).

1 gibibyte = 1073741824 bytes = 1024 mebibytes

1 gigabyte = 1000000000 bytes = 1000 megabytes = 0.931 gibibytes

1000 gigabytes = 931 gibibytes

Windows says GB but actually means GiB.

SSDs and HDDs are labelled differently in terms of space. Let's say they made a spinning hard disk with exactly 256GB (238GiB) of space. It would appear as 238GB in Windows, even after formatting. You didn't lose anything, because the other 18 gigs was never there in the first place.

Now, according to Anandtech, a 256GB-labelled SSD actually *HAS* the full 256GiB (275GB) of flash memory. But you lose 8% of flash for provisioning, so you end up with around 238GiB (255GB) anyway. It displays as 238GB in Windows.

If the SSDs really had 256GB (238GiB) of space as labelled, you'd subtract your 8% and get 235GB (219GiB) which displays as 219GB in Windows.
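For anyone who wants to check the unit arithmetic in this thread, here is a short Python sketch. The helper names `gb_to_gib` and `gib_to_gb` are made up for illustration. The last calculation assumes, as described above, that a 256GB-labelled SSD carries 256GiB of raw flash and exposes 256GB to the user; real drives may differ.

```python
GB = 10**9    # gigabyte: decimal, what drive labels use
GIB = 2**30   # gibibyte: binary, what Windows reports while labelling it "GB"


def gb_to_gib(gigabytes: float) -> float:
    """Convert decimal gigabytes to binary gibibytes."""
    return gigabytes * GB / GIB


def gib_to_gb(gibibytes: float) -> float:
    """Convert binary gibibytes to decimal gigabytes."""
    return gibibytes * GIB / GB


print(round(gb_to_gib(256), 4))   # 238.4186 -> shown as "238GB" in Windows
print(round(gb_to_gib(1000), 1))  # 931.3    -> a 1TB drive shown as "931GB"
print(round(gib_to_gb(256), 1))   # 274.9    -> 256GiB of raw flash in decimal GB

# Assumption: 256GiB of raw flash with 256GB exposed to the user.
# The share of raw flash left over as spare area is then:
spare_fraction = 1 - (256 * GB) / (256 * GIB)
print(round(spare_fraction * 100, 2))  # 6.87 (percent of raw flash)
```

Measured against raw flash, the gap works out to a little under 7%, in the same ballpark as the roughly 8% figure quoted in this thread.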

flyingpants1 - Wednesday, November 28, 2012 - link

IMO drive manufacturers should stop messing around and put 256GiB of USABLE space on each 256GiB drive, and start marking them as such.

Holly - Wednesday, November 28, 2012 - link

Tbh imho using base 10 units in binary environment is just asking for a facepalm. Everything underneath runs on 2^n anyway and this new "GB" vs "GiB" is just a commercial bullshit so storage devices can be sold with flashier stickers. Your average raid controller bios will show 1TB drive as 931GB one as well (at least few ICHxR and one server Adaptec I have access to right now all do).