Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

Decoupled L3 Cache

With Nehalem, Intel introduced an on-die L3 cache behind a smaller, low-latency private L2 cache. At the time, Intel maintained two separate clock domains for the CPU (core + uncore) and a third for what was then an off-die integrated graphics core. The core clock referred to the CPU cores, while the uncore clock controlled the speed of the L3 cache. Intel believed that its L3 cache wasn't incredibly latency sensitive and could run at a lower frequency and burn less power. Core CPU performance typically mattered more to most workloads than L3 cache performance, so Intel was OK with the tradeoff.

In Sandy Bridge, Intel revised its beliefs and moved to a single clock domain for the core and uncore, while keeping a separate clock for the now on-die processor graphics core. Intel now felt that race to sleep was a better philosophy for dealing with the L3 cache and it would rather keep things simple by running everything at the same frequency. Obviously there are performance benefits, but there was one major downside: with the CPU cores and L3 cache running in lockstep, there was concern over what would happen if the GPU ever needed to access the L3 cache while the CPU (and thus L3 cache) was in a low frequency state. The options were either to force the CPU and L3 cache into a higher frequency state together, or to keep the L3 cache at a low frequency even when it was in demand to prevent waking up the CPU cores. Ivy Bridge saw the addition of a small graphics L3 cache to mitigate this situation, but ultimately giving the on-die GPU independent access to the big, primary L3 cache without worrying about power concerns was a big issue for the design team.

When it came time to define Haswell, the engineers went back to Nehalem's three clock domains. Ronak (Nehalem & Haswell architect, insanely smart guy) tells me that the switching between designs is simply a product of the team learning more about the architecture and understanding the best balance. I think it tells me that these guys are still human and don't always have the right answer for the long term without some trial and error.

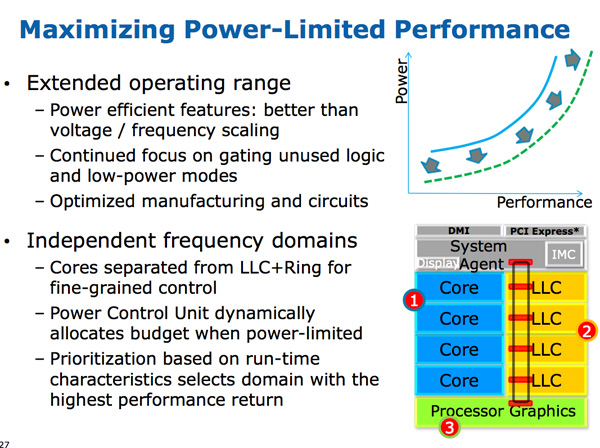

The three clock domains in Haswell are roughly the same as what they were in Nehalem; they just all happen to be on the same die. The CPU cores all run at the same frequency, the on-die GPU runs at a separate frequency, and the L3 + ring bus now sit in their own independent frequency domain.

Now that CPU requests to L3 cache have to cross a frequency boundary, there will be a latency impact to L3 cache accesses. Sandy Bridge had an amazingly fast L3 cache; Haswell's L3 accesses will be slower.
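To put a rough shape on that latency cost, here is a back-of-the-envelope sketch in Python. Everything numeric in it is an assumption made up for illustration: the 30-cycle L3 access, the 4 extra cycles for crossing between clock domains, and the 3.0 GHz clock are placeholders, not Intel figures. The only real relationship used is that time equals cycles divided by frequency.

```python
def access_time_ns(cycles: int, clock_ghz: float) -> float:
    """Convert a cycle count at a given clock (GHz) into nanoseconds."""
    return cycles / clock_ghz


# Hypothetical values, chosen only to illustrate the arithmetic.
L3_CYCLES = 30         # assumed L3 access cost in cycles
CROSSING_CYCLES = 4    # assumed cost of crossing a clock-domain boundary
CLOCK_GHZ = 3.0        # assumed clock for both domains

# Core and L3 in one clock domain: no boundary to cross.
same_domain_ns = access_time_ns(L3_CYCLES, CLOCK_GHZ)

# Core and L3 in separate domains: the request pays extra crossing cycles.
decoupled_ns = access_time_ns(L3_CYCLES + CROSSING_CYCLES, CLOCK_GHZ)

print(f"same domain: {same_domain_ns:.2f} ns")
print(f"decoupled:   {decoupled_ns:.2f} ns")
```

Even with both domains at the same nominal frequency, the decoupled case comes out slower in this toy model, and the gap would widen if the L3 domain were clocked below the cores.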

The benefit is obviously power. If the GPU needs to fire up the ring bus to give/get data, it no longer has to drive up the CPU core frequency as well. Furthermore, Haswell's power control unit can dynamically allocate budget between all areas of the chip when power limited.
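The difference between the shared and decoupled arrangements can be sketched as a toy model in Python. The `Clocks` class, the `gpu_needs_l3` function and all frequencies are hypothetical names and values invented for this illustration; the shared-domain branch models only the "raise both together" option and says nothing about how Intel's power control unit is actually implemented.

```python
from dataclasses import dataclass


@dataclass
class Clocks:
    core_mhz: int
    ring_l3_mhz: int
    gpu_mhz: int


# Hypothetical low and high operating points, for illustration only.
LOW_MHZ, HIGH_MHZ = 800, 3000


def gpu_needs_l3(clocks: Clocks, coupled: bool) -> Clocks:
    """Return the clocks after the GPU demands fast L3 access.

    coupled=True  models a shared core/L3 domain: raising the L3 clock
                  drags the CPU cores up with it.
    coupled=False models an independent L3 + ring domain: only the
                  L3/ring clock rises and the cores stay where they were.
    """
    core = HIGH_MHZ if coupled else clocks.core_mhz
    return Clocks(core_mhz=core, ring_l3_mhz=HIGH_MHZ, gpu_mhz=clocks.gpu_mhz)


idle = Clocks(core_mhz=LOW_MHZ, ring_l3_mhz=LOW_MHZ, gpu_mhz=1000)
shared = gpu_needs_l3(idle, coupled=True)
split = gpu_needs_l3(idle, coupled=False)

print("shared domain:   ", shared)
print("decoupled domain:", split)
```

In the decoupled case the cores remain at their low operating point while the ring and L3 speed up, which is the power saving described above.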

Although L3 latency is up in Haswell, there's more access bandwidth offered to each slice of the L3 cache. There are now dedicated pipes for data and non-data accesses to the last level cache.

Haswell's memory controller is also improved, with better write throughput to DRAM. Intel has been quietly telling the memory makers to push for even higher DDR3 frequencies in anticipation of Haswell.

245 Comments

Magik_Breezy - Sunday, October 14, 2012 - link

Anything delivers "solid performance" on Facebook & iWork

Why pay $2,000 for that?

random2 - Friday, October 5, 2012 - link

I agree. Admittedly I am not an Apple fan and view them as people who have undergone a degree of brainwashing compounded by the need for some to keep up with the Joneses. A certain degree of mind control must be necessary to stick with a company that has had some questionable business practices as far as customer relations, dealing with product issues and denying said issues. Not to mention the whole hypocritical stance by Apple in regards to copyright infringement, which has also left a bad taste in my mouth.

hasseb64 - Saturday, October 6, 2012 - link

Disagree, not that much new from already published IDF reports almost 1 month ago. What is interesting is the claimed 40 EU GT3, other sources say lower amounts.

JKflipflop98 - Saturday, October 6, 2012 - link

I totally agree. It's articles like this that have kept me coming back for years. Keep up the good work Anand!

tipoo - Sunday, October 7, 2012 - link

"You can expect CPU performance to increase by around 5 - 15% at the same clock speed as Ivy Bridge."

That seems terribly disappointing for a tock, even IVB as a Tick managed 10% in most cases.

medi01 - Tuesday, October 9, 2012 - link

One can't be biased !@# !@#@ and a good journalist at the same time.

One needs to be blind not to see how glass is always half empty for AMD, and half full for nVidia/Intel. F**!@#'s were shameless enough, to test 45W APU with 1000W PSU and such crap is all over the place.

Paulman - Friday, October 5, 2012 - link

As I was reading this article, about part way into the low platform power sections I suddenly had this thought: "Oh man, AMD is gonna die...!"

I don't know if that's true for the entire microprocessor side of AMD, since they look like they're already starting to transition out of the desktop space, but I don't know if they're going to stand much of a chance if they're planning on entering the same TDP range as Haswell.

Do you think there's a chance AMD will start focussing on designing ARM ISA cores? Or will expanding on their x86 Bobcat-type cores be enough for them?

sean.crees - Friday, October 5, 2012 - link

I also worry about AMD. AMD has been 1-2 steps behind Intel for a while now, and now it seems Intel is at least 1 or 2 steps behind ARM and the future. Is that going to mean AMD is just too far behind to stay relevant now? If nothing else, I suppose AMD can fall back on graphics cards with its ATI acquisition.

Da W - Friday, October 5, 2012 - link

If Haswell keeps x86 relevant in the tablet space and thus Windows 8 has the upper edge over Windows RT and Windows tablets can grab +-50% market share from the iPad, then it can be good for AMD, provided they survive that long.

RedemptionAD - Friday, October 5, 2012 - link

If AMD can create a team to focus on increasing IPC with a goal to one up Intel and have the ATI graphics people keep doing what they do with a time goal of say 2 years, (Note: Portables/Notebooks/Desktops should all be x64 by then), then I think that AMD will be able to return to their Athlon 64 glory days or better.