Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM ESTCPU Architecture Improvements: Background

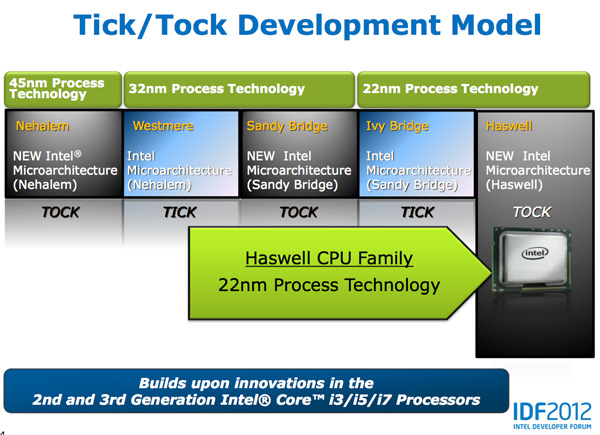

Despite all of this platform discussion, we must not forget that Haswell is the fourth tock since Intel instituted its tick-tock cadence. If you're not familiar with the terminology by now a tock is a "new" microprocessor architecture on an existing manufacturing process. In this case we're talking about Intel's 22nm 3D transistors, that first debuted with Ivy Bridge. Although Haswell is clearly SoC focused, the designs we're talking about today all use Intel's 22nm CPU process - not the 22nm SoC process that has yet to debut for Atom. It's important to not give Intel too much credit on the manufacturing front. While it has a full node advantage over the competition in the PC space, it's currently only shipping a 32nm low power SoC process. Intel may still have a more power efficient process at 32nm than its other competitors in the SoC space, but the full node advantage simply doesn't exist there yet.

Although Haswell is labeled as a new micro-architecture, it borrows heavily from those that came before it. Without going into the full details on how CPUs work I feel like we need a bit of a recap to really appreciate the changes Intel made to Haswell.

At a high level the goal of a CPU is to grab instructions from memory and execute those instructions. All of the tricks and improvements we see from one generation to the next just help to accomplish that goal faster.

The assembly line analogy for a pipelined microprocessor is over used but that's because it is quite accurate. Rather than seeing one instruction worked on at a time, modern processors feature an assembly line of steps that breaks up the grab/execute process to allow for higher throughput.

The basic pipeline is as follows: fetch, decode, execute, commit to memory. You first fetch the next instruction from memory (there's a counter and pointer that tells the CPU where to find the next instruction). You then decode that instruction into an internally understood format (this is key to enabling backwards compatibility). Next you execute the instruction (this stage, like most here, is split up into fetching data needed by the instruction among other things). Finally you commit the results of that instruction to memory and start the process over again.

Modern CPU pipelines feature many more stages than what I've outlined here. Conroe featured a 14 stage integer pipeline, Nehalem increased that to 16 stages, while Sandy Bridge saw a shift to a 14 - 19 stage pipeline (depending on hit/miss in the decoded uop cache).

The front end is responsible for fetching and decoding instructions, while the back end deals with executing them. The division between the two halves of the CPU pipeline also separates the part of the pipeline that must execute in order from the part that can execute out of order. Instructions have to be fetched and completed in program order (can't click Print until you click File first), but they can be executed in any order possible so long as the result is correct.

Why would you want to execute instructions out of order? It turns out that many instructions are either dependent on one another (e.g. C=A+B followed by E=C+D) or they need data that's not immediately available and has to be fetched from main memory (a process that can take hundreds of cycles, or an eternity in the eyes of the processor). Being able to reorder instructions before they're executed allows the processor to keep doing work rather than just sitting around waiting.

Sidebar on Performance Modeling

Microprocessor design is one giant balancing act. You model application performance and build the best architecture you can in a given die area for those applications. Tradeoffs are inevitably made as designers are bound by power, area and schedule constraints. You do the best you can this generation and try to get the low hanging fruit next time.

Performance modeling includes current applications of value, future algorithms that you expect to matter when the chip ships as well as insight from key software developers (if Apple and Microsoft tell you that they'll be doing a lot of realistic fur rendering in 4 years, you better make sure your chip is good at what they plan on doing). Obviously you can't predict everything that will happen, so you continue to model and test as new applications and workloads emerge. You feed that data back into the design loop and it continues to influence architectures down the road.

During all of this modeling, even once a design is done, you begin to notice bottlenecks in your design in various workloads. Perhaps you notice that your L1 cache is too small for some newer workloads, or that for a bunch of popular games you're seeing a memory access pattern that your prefetchers don't do a good job of predicting. More fundamentally, maybe you notice that you're decode bound more often than you'd like - or alternatively that you need more integer ALUs or FP hardware. You take this data and feed it back to the team(s) working on future architectures.

The folks working on future architectures then prioritize the wish list and work on including what they can.

245 Comments

View All Comments

tipoo - Sunday, October 7, 2012 - link

I don't think so, doesn't the HD4000 have more bandwidth to work with than AMDs APUs yet offers worse performance? They still had headroom there. I think it's just for TDP, they limit how much power the GPUs can use since the architecture is oriented at mobile.magnimus1 - Friday, October 5, 2012 - link

Would love to hear your take on how Intel's latest and greatest fares against Qualcomm's latest and greatest!cosmotic - Friday, October 5, 2012 - link

Ah, an MPEG2 encoder. Just in time!jamyryals - Friday, October 5, 2012 - link

This made me :)name99 - Friday, October 5, 2012 - link

We laugh but one possibility is that Intel hopes to sell Haswell's inside US broadcast equipment.There isn't much broadcast equipment sold, but the costs are massive, and there's no obvious reason not to replace much of that custom hardware with intel chips.

And much of the existing broadcast hardware (at least the MPEG2-encoding part) is obviously garbage --- the artifacts I see on broadcast TV are bad even for the prime-time networks, and are truly awful for the budget independent operators.

Much like they have written a cell-tower stack to run on i7's to replace the similarly grossly over-priced custom hardware that lives in cell towers, and are currently deploying in China. Anand wrote about this about two weeks ago.

vt1hun - Friday, October 5, 2012 - link

Do you have an idea when Intel will move to DDR4 ? Not with Haswell according to this article.Thank you

tipoo - Friday, October 5, 2012 - link

Haswell EX for servers will support DDR4, but even Broadwell on desktops is only DDR3, we won't see DDR4 in desktops until 2015.jwcalla - Friday, October 5, 2012 - link

We'll probably see DDR4 in the ARM space before we have it on Intel.Maybe this should be AMD's focus of attack: if they can't compete on performance, at least try on chipset features.

Perhaps Intel's biggest concern would be if somebody comes along with a super-efficient x86 emulator for ARM. Going forward, "legacy applications" is going to be an increasingly important selling point to prevent ARM inroads on the low end.

Microsoft keeping their Windows ARM version locked-down is a key to that too, and likely a deference to their relationship with Intel. But Apple is less likely to similarly constrain themselves.

meloz - Saturday, October 6, 2012 - link

>We'll probably see DDR4 in the ARM space before we have it on Intel.>Maybe this should be AMD's focus of attack: if they can't compete on performance, at least try on chipset features.

The problem with DDR4 is likely going to be the price. We all know how the memory industry likes to jack up the prices whenever a new spec comes out. Remember how expensive DDr3 was when it started to replace DDR2?

Some people joke that this transition is the only time they make any money in the RAM business, and considering the low prices of DDR3 you have to wonder.

DDR4 might offer some performance and power advantage on release, but it will likely be more expensive and take time (12-18 months?) to offer a compelling performance / $ advantage over cheap DDR3 variants.

If AMD is trying to position itself as 'value' brand, chaining themselves to DDR4 (before Intel's volume brings down the prices for everyone) could spell their doom.

Kevin G - Friday, October 5, 2012 - link

Intel is set to launch Ivy Bridge EX on a new socket late in 2013 on a new socket. The on-die controller will likely use memory buffering similar to what Nehalem-EX and Westmere-EX use. The buffer chips may initially use DDR3 but this would allow for a trivial migration to DDR4 since the on-die controller doesn't communicate directly with the memory chips.Come to think of it, Intel could migration Nehalem-EX/Westmere-EX to DDR4 with a chipset upgrade. Vendors like HP put the buffer chips and memory slots on a daughter card so only that part would need replacement.