Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

Haswell's GPU

Although Intel provided a good amount of detail on the CPU enhancements to Haswell, the graphics discussion at IDF was fairly limited. That being said, there's still some detail to talk about here.

Haswell builds on the same fundamental GPU architecture we saw in Ivy Bridge. We won't see a dramatic redesign/re-plumbing of the graphics hardware until Broadwell in 2014 (that one is going to be a big one).

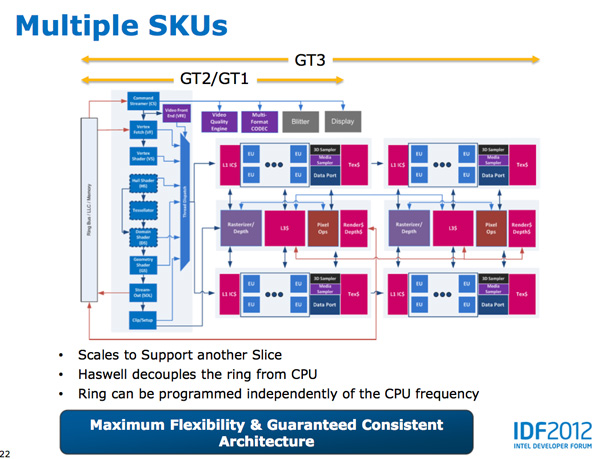

Haswell's GPU will be available in three physical configurations: GT1, GT2 and GT3. Although Intel mentioned that the Haswell GT3 config would have twice the shader count of Haswell GT2, it was careful not to disclose the total number of EUs in any of the versions. Based on the information we have at this point, GT3 should be a 40 EU configuration while GT2 should feature 20 EUs. Intel will also be including up to one redundant EU to deal with the case where there's a defect in an EU in the array. This isn't an uncommon practice, but it does indicate just how much of the die will be dedicated to graphics in Haswell. The larger the area the GPU covers, the greater the likelihood that you'll see unrecoverable defects in the GPU. Redundancy at the EU level is one way of mitigating that problem.
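To get a feel for why a single spare EU matters, here is a rough back-of-the-envelope sketch. It is not Intel's yield model: it assumes a simple Poisson defect model, treats EU defects as independent, and ignores defects in shared logic outside the EU array. The defect density and per-EU area are made-up placeholder values, and the function names are hypothetical.

```python
import math


def eu_good_probability(defect_density_per_mm2: float, eu_area_mm2: float) -> float:
    """Poisson model: probability that a single EU contains zero defects."""
    return math.exp(-defect_density_per_mm2 * eu_area_mm2)


def array_yield(required_eus: int, spare_eus: int, p_good: float) -> float:
    """Probability that at least `required_eus` of the built EUs are defect-free.

    The array is built with required_eus + spare_eus units; EU defects are
    assumed independent, so the count of good EUs is binomially distributed.
    """
    total = required_eus + spare_eus
    return sum(
        math.comb(total, good) * p_good**good * (1.0 - p_good) ** (total - good)
        for good in range(required_eus, total + 1)
    )


if __name__ == "__main__":
    # Placeholder numbers, chosen only for illustration (assumptions).
    p = eu_good_probability(defect_density_per_mm2=0.002, eu_area_mm2=0.5)
    for required in (20, 40):  # the GT2 and GT3 EU counts estimated above
        no_spare = array_yield(required, 0, p)
        one_spare = array_yield(required, 1, p)
        print(f"{required} EUs: no spare {no_spare:.4f}, one spare {one_spare:.4f}")
```

Under these assumptions the array with no spare loses yield roughly in proportion to the number of EUs, while a single spare recovers most of that loss, which is the intuition behind EU-level redundancy.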

Haswell's processor graphics extends API support to DirectX 11.1, OpenCL 1.2 and OpenGL 4.0.

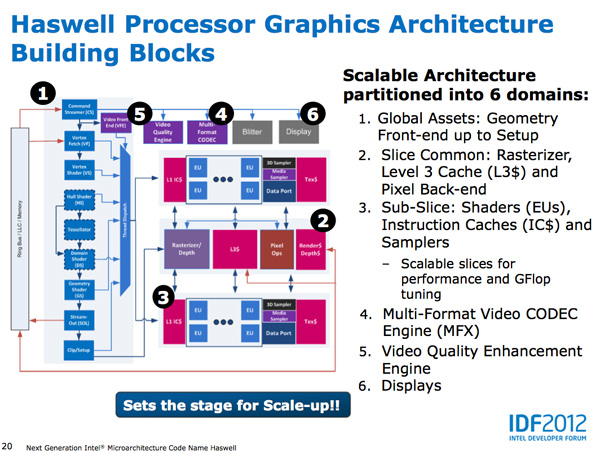

At the front of the graphics pipeline is a new resource streamer. The RS offloads some driver work that the CPU would normally handle and moves it to GPU hardware instead. Both AMD and NVIDIA have significant command processors, so this doesn't appear to be an Intel advantage, although the devil is in the (unshared) details. The point from Intel's perspective is that any amount of processing it can shift away from general purpose CPU hardware and onto the GPU can save power (CPU cores go to sleep while the RS/CS do their job).

Beyond the resource streamer, most of the fixed function graphics hardware sees a doubling of performance in Haswell.

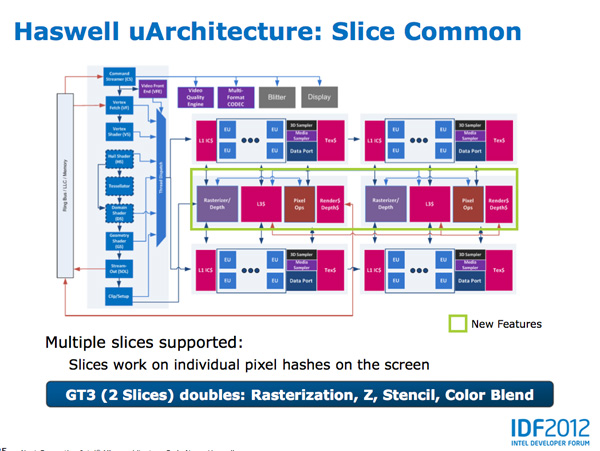

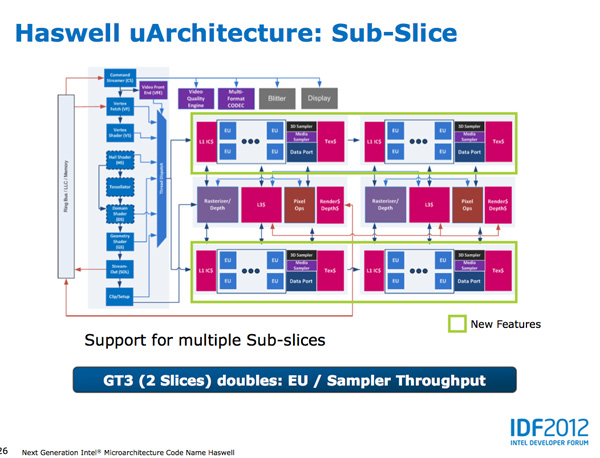

At the shader core level, Intel separates the GPU design into two sections: slice common and sub-slice. Slice common includes the rasterizer, pixel back end and GPU L3 cache. The sub-slice includes all of the EUs, instruction caches and texture samplers.

In Haswell GT1 and GT2 there's a single slice common, while GT3 sees a doubling of slice common. GT3 similarly has two sub-slices, although once again Intel isn't talking specifics about EU counts or clock speeds between GT1/2/3.

The final bit of detail Intel gave out about Haswell's GPU is that the texture sampler sees up to a 4x improvement in throughput over Ivy Bridge in some modes.

Now to the things that Intel didn't let loose at IDF. Originally an option for Ivy Bridge, before higher ups at Intel killed plans for it, was a GT3 part with some form of embedded DRAM. Rumor has it that Apple was the only customer who really demanded it at the time, and Intel wasn't willing to build a SKU just for Apple.

Haswell will do what Ivy Bridge didn't. You'll see a version of Haswell with up to 128MB of embedded DRAM, with a lot of bandwidth available between it and the core. Both the CPU and GPU will be able to access this embedded DRAM, although there are obvious implications for graphics.

Overall performance gains should be about 2x for GT3 (presumably with eDRAM) over HD 4000 in a high TDP part. In Ultrabooks those gains will be limited to around 30% max given the strict power limits.

As for why Intel isn't talking about embedded DRAM on Haswell, your guess is as good as mine. The likely release timeframe for Haswell is close to June 2013, so there's still tons of time between now and then. It looks like Intel still has a desire to remain quiet on some fronts.

245 Comments

dishayu - Friday, October 5, 2012 - link

Woah! I did not even think of that. That is VERY compelling but i can't do without unlocked multiplier, so there is no perfect processor for me still :(

StevoLincolnite - Friday, October 5, 2012 - link

Or just go with a Socket 2011 Core i7 3930K like I have and do a little bit of undervolting; it has no IGP.

I think the reason why the Desktop space has seen decreasing/stagnant sales is simply because a lot of people see no need to upgrade.

A Core 2 Quad Q6600 @ 3.6ghz, with a decent chunk of Ram and a decent graphics card is actually fairly capable of running almost every game at maximum settings.

Heck I know people who are perfectly happy sitting with a Pentium 4 for basic web use.

I think a change needs to happen where software catches up with hardware to give people a reason to upgrade and drive sales which might reinvigorate Intel and AMD to innovate.

Windows 8 and the next generation consoles might actually help in that regard.

De_Com - Friday, October 5, 2012 - link

Well said Steve. Couldn't agree with you more.

I'm running a Core 2 Extreme QX6850 at 3.4ghz, 1066Mhz DDR2 Ram and a GTX295 and it still rocks all the newest games at or close to max settings.

Will have this system 4 years this November (all except the GTX295, which was upgraded from a 9800 GX2), even now I'm thinking that was a waste of cash.

I've gone to upgrade at least twice each year, but can't justify it.

The only place I'd see returns is in the power costs, but hey, whats a few extra cents.....

The system meets my needs, and forking out for a similar system today would cost around the €1800 mark.

Until the software can better utilize the components I'm holding out until Summer 2013, that'll be over 4 years I've gotten out of this system. Up until 2008 I slavishly upgraded every year or 2.

lukarak - Saturday, October 6, 2012 - link

This (late) December, i will have had my i7 for 4 years, and i have not seen a single reason to upgrade. The GPU is 2.5 years old (GTX480, was 280 before that).

A x58 motherboard has 6 memory slots, and now houses 24 GB of ram for virtual machines, which can go 48 GB for a reasonable price.

I just don't see the need to do anything more, and this will probably fail from old age before i would need a drastically faster machine.

xaml - Thursday, May 23, 2013 - link

"but hey, whats a few extra cents....."

Sure, it's probably not your generation to take the hit, having to deal with the consequences of energy excesses.

DanNeely - Friday, October 5, 2012 - link

Is that actually an IGPless chip, or just a standard LGA1155 quadcore chip with a disabled IGP?

csroc - Friday, October 5, 2012 - link

I don't mind power savings, the few times my system is idle it could certainly benefit but overall it would mean reduced consumption even under load. My system just doesn't spend enough time in idle with my Q9450.

Ultimately it does seem as though the software demand for faster CPU hardware has slowed and between that and the lack of real competition, so has the development.

If it weren't for the fact that I need more RAM or wanted faster photo processing (and may start doing some video) I'd probably keep what I've got a bit longer. My Q9450 hasn't held me back from playing any games yet. The 20% OC I've been running doesn't hurt but ultimately a lot of things just aren't CPU limited anymore.

Kidster3001 - Monday, October 15, 2012 - link

If you're playing 3D games then your CPU is likely "idle" 50%-75% of the time. Idle time does not just mean when the display is off.

IanCutress - Friday, October 5, 2012 - link

You may think this as a result of all the low power talk, but Haswell is doing something rather important on the peak performance side. The increase in the size of the execution engine is important - adding in another integer ALU and another load/store means that in workloads that share INT and FPU performance (think loop counters which store an INT for loop iteration then perform some FP calcs) will improve. By increasing the bandwidth available and being able to keep the two FPU fed with info means a greater throughput as long as the bandwidth and thread switching can hide any additional L3 latency. Personally I'm thinking this may be a subtle move towards more threads per core in future architectures. Some of the non x86 are abusing 8 threads/core with improvement gains, so I wonder if that would be possible here. Ideally we would like every port on the execution engine to do everything, with a single pipeline feeding it and excellent branch prediction to help with single thread speed. Smaller nodes help with that silicon real estate, or someone will stumble on a better/smaller way to actually physically create these things.

Ian

DanNeely - Friday, October 5, 2012 - link

I'm curious what IBM/Oracle's high SMT designs look like on the execution port side. As long as it's business as usual I doubt Intel will ever make all the ports do everything because it would just be hogging a huge amount of die area when the odds of each thread doing all of the same instruction type constantly are very low. Smaller bursts of one type can be spread out using OOOE.