Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

Haswell's GPU

Although Intel provided a good amount of detail on the CPU enhancements in Haswell, the graphics discussion at IDF was fairly limited. That said, there's still a bit to talk about here.

Haswell builds on the same fundamental GPU architecture we saw in Ivy Bridge. We won't see a dramatic redesign/re-plumbing of the graphics hardware until Broadwell in 2014 (that one is going to be a big one).

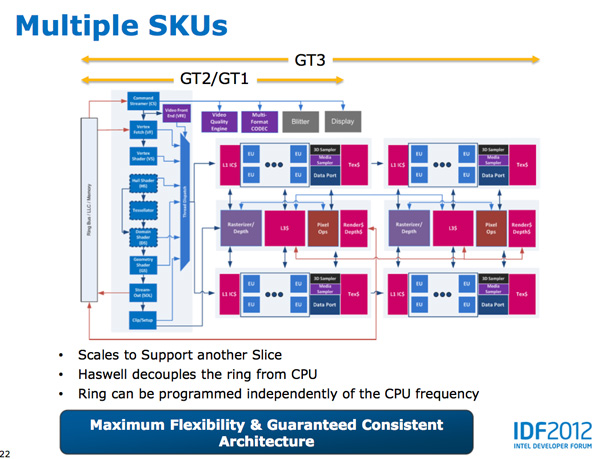

Haswell's GPU will be available in three physical configurations: GT1, GT2 and GT3. Intel mentioned that the Haswell GT3 config would have twice the shader count of Haswell GT2, but it was careful not to disclose the total number of EUs in any of the versions. Based on the information we have at this point, GT3 should be a 40 EU configuration while GT2 should feature 20 EUs. Intel will also include up to one redundant EU to deal with the case where there's a defect in an EU in the array. This isn't an uncommon practice, but it does indicate just how much of the die will be dedicated to graphics in Haswell. The larger the area the GPU covers, the greater the likelihood that you'll see unrecoverable defects in the GPU. Redundancy at the EU level is one way of mitigating that problem.
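To make the yield argument concrete, here is a minimal Python sketch of a textbook binomial yield model. It is not Intel's model. It assumes that each EU fails independently with the same probability. The 1% per-EU defect rate is a hypothetical figure chosen only for illustration, and `array_yield` is a hypothetical helper name. The sketch also ignores defects outside the EU array.

```python
from math import comb


def array_yield(active_eus: int, spare_eus: int, p_defect: float) -> float:
    """Probability that at least `active_eus` of the fabricated EUs work.

    Hypothetical model: every EU fails independently with probability
    p_defect, and `spare_eus` redundant EUs can replace failed ones.
    """
    total = active_eus + spare_eus
    # The array is usable as long as no more EUs fail than there are spares.
    return sum(
        comb(total, k) * p_defect**k * (1 - p_defect) ** (total - k)
        for k in range(spare_eus + 1)
    )


# Hypothetical per-EU defect probability, for illustration only.
P = 0.01

for eus in (20, 40):
    no_spare = array_yield(eus, 0, P)
    one_spare = array_yield(eus, 1, P)
    print(f"{eus} EUs: no spare {no_spare:.3f}, one spare {one_spare:.3f}")
```

With the illustrative 1% rate, the model gives roughly 82% usable arrays for 20 EUs versus 67% for 40 EUs when there is no spare. With a single redundant EU, those figures rise to about 98% and 94% respectively. This matches the intuition in the text: a larger GPU is hit harder by defects, and one spare EU recovers most of the loss.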

Haswell's processor graphics extends API support to DirectX 11.1, OpenCL 1.2 and OpenGL 4.0.

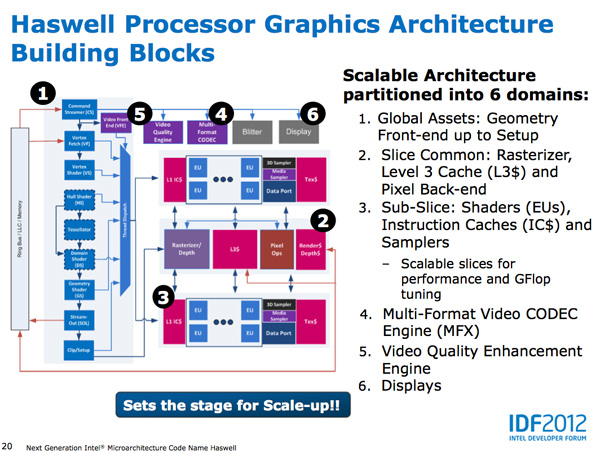

At the front of the graphics pipeline is a new resource streamer (RS). The RS takes some driver work that the CPU would normally handle and moves it to GPU hardware instead. Both AMD and NVIDIA have significant command processors, so this doesn't appear to be an Intel advantage, although the devil is in the (unshared) details. The point from Intel's perspective is that any processing it can shift away from general purpose CPU hardware and onto the GPU can save power: the CPU cores can go to sleep while the resource streamer and command streamer (RS/CS) do their job.

Beyond the resource streamer, most of the fixed function graphics hardware sees a doubling of performance in Haswell.

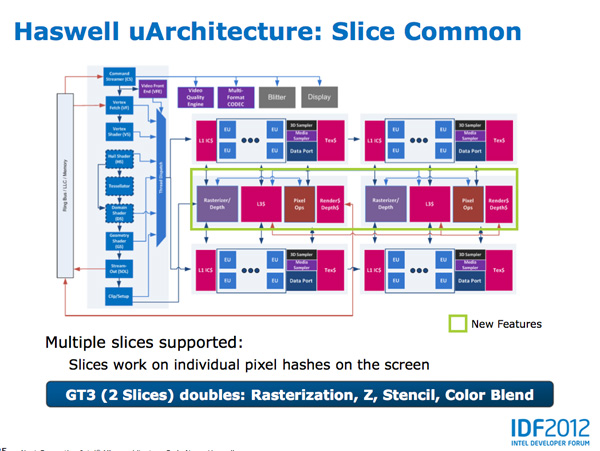

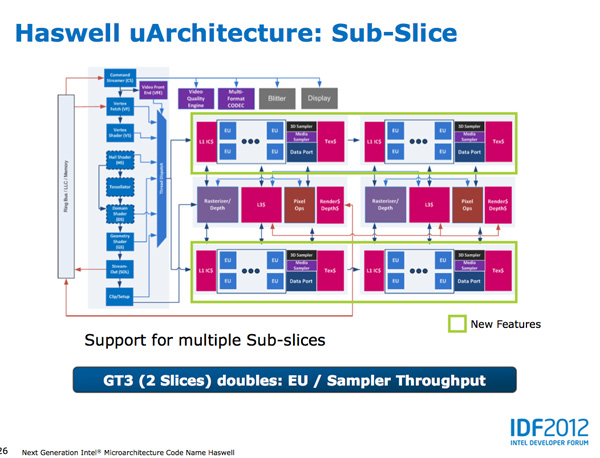

At the shader core level, Intel separates the GPU design into two sections: slice common and sub-slice. Slice common includes the rasterizer, pixel back end and GPU L3 cache. The sub-slice includes all of the EUs and their instruction caches.

In Haswell GT1 and GT2 there's a single slice common, while GT3 sees a doubling of slice common. GT3 similarly has two sub-slices, although once again Intel isn't talking specifics about EU counts or clock speeds between GT1/2/3.

The final bit of detail Intel gave out about Haswell's GPU is the texture sampler sees up to a 4x improvement in throughput over Ivy Bridge in some modes.

Now to the things that Intel didn't let loose at IDF. A GT3 part with some form of embedded DRAM was originally an option for Ivy Bridge, but higher-ups at Intel killed the plans for it. Rumor has it that Apple was the only customer who really demanded it at the time, and Intel wasn't willing to build a SKU just for Apple.

Haswell will do what Ivy Bridge didn't. You'll see a version of Haswell with up to 128MB of embedded DRAM, with a lot of bandwidth available between it and the core. Both the CPU and GPU will be able to access this embedded DRAM, although there are obvious implications for graphics.

Overall performance gains should be about 2x for GT3 (presumably with eDRAM) over HD 4000 in a high-TDP part. In Ultrabooks, those gains will be limited to around 30% at most given the strict power limits.

As for why Intel isn't talking about embedded DRAM on Haswell, your guess is as good as mine. The likely release timeframe for Haswell is close to June 2013, so there's still plenty of time between now and then. It looks like Intel still wants to remain quiet on some fronts.

245 Comments

jigglywiggly - Friday, October 5, 2012

Wish the onboard GPU was better =/ Would have been nice for a laptop.

tipoo - Friday, October 5, 2012

2x the HD4000 is pretty decent for integrated. I wonder if that's 2x with or without the eDRAM cache though.

ElvenLemming - Friday, October 5, 2012

It's been known for a while that Haswell was only going to have a moderate improvement in the iGPU and the next big overhaul would be coming with Broadwell.

csroc - Friday, October 5, 2012

This is impressive; it might convince me it's time for a new laptop. On the other hand, I also need to build a new desktop workstation, and Haswell so far hasn't impressed me in that space.

mayankleoboy1 - Friday, October 5, 2012

Is Intel sacrificing desktop CPU performance to make an architecture that is geared to the mobile space?

csroc - Friday, October 5, 2012

It feels that way to me. Mobile performance seems to be their big concern now, that and improving the GPU. Those are two things I generally can't be bothered to care about when I'm looking to build a new workstation. I suspect I'll build an Ivy Bridge system because I could use it now, and I see nothing worth getting excited about.

dishayu - Friday, October 5, 2012

I fully share your sentiment. To be very crude, I don't mind at all paying for power improvements, because they will pay for themselves in the long term (by consuming less power AND needing less cooling). But I DO mind very much paying for 40 EUs of GPU on my desktop build, which I will not use even for a second. Me, you and many others do not care about on-die graphics, and Intel should realize that.

I don't know why Intel can't offer us both GPU and GPU-less options, the way they did with motherboards back in the day. P965 had no graphics, G965 did. Pretty sure it's technologically not an issue.

DanNeely - Friday, October 5, 2012

If it makes you feel any better, reports elsewhere are that GT3 will be mobile only, because desktops don't have the power/size constraints driving the need for premium IGPs.

Intel's non-IGP CPUs are the E-series parts; unfortunately they've failed to execute on the enthusiast side in terms of price/launch date, leaving them as mostly server parts.

There just aren't enough of us to justify Intel adding another die design for their mass market socket that doesn't have an IGP at all instead of just letting us turn it off and use the extra TDP headroom for more time at boost speeds.

Omoronovo - Friday, October 5, 2012

I'm somewhat in disagreement with you both.

Whilst I share a concern that Intel is no longer focusing on raw performance improvements in the purely desktop space, they are still delivering incremental updates to the architecture that will benefit all current software (even if only marginally). However, processor performance has been running into diminishing returns in recent years. Software is simply not able to take advantage of multiple cores and improved performance, primarily because of locks and the complexity of creating multi-threaded applications.

As such, Intel has been focusing on that area - to make it easier for software and software developers to take advantage of the performance that exists *now* rather than brute forcing the issue by simply delivering more raw performance (much of which will be wasted/remain idle due to current software constraints).

With this, Intel has been able to focus on keeping performance high whilst dropping power usage substantially. The fact that the iGPU often goes unused in a desktop environment does not invalidate its utility. QuickSync is a prime example of where the GPU can accelerate certain types of processing, and if more software takes advantage of this we should see even more gains in future.

For the last 6 years or so, Intel has shown that it knows what demands will be placed on future computing hardware, and they seem convinced that this is the way to go. We might not be there yet, but technologies like C++ AMP, OpenCL and the like make me hopeful that this will change in a few years.

cmrx64 - Friday, October 5, 2012

I solved this problem by buying an Ivy Bridge Xeon (specifically, an E3-1230v2). It has no GPU, lower power consumption than the equivalent i5/i7, and Hyper-Threading. It performs really well and is a lot cheaper than an i7.

If you don't care about the GPU, look to the Xeon line.