AMD A10-5800K & A8-5600K Review: Trinity on the Desktop, Part 2

by Anand Lal Shimpi on October 2, 2012 1:45 AM EST

Video Transcoding Performance

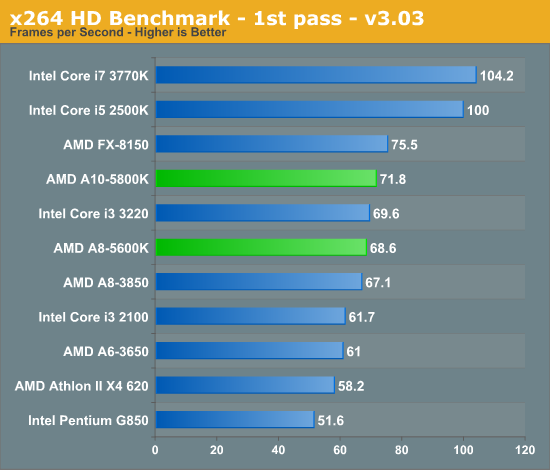

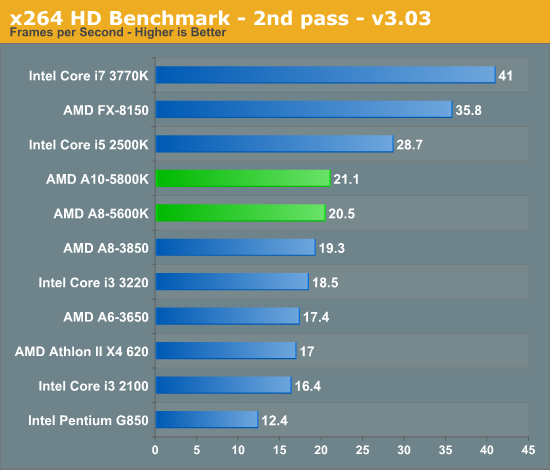

x264 HD 3.03 Benchmark

Graysky's x264 HD test uses x264 to encode a 4Mbps 720p MPEG-2 source. The focus here is on quality rather than speed, so the benchmark uses a 2-pass encode and reports the average frame rate in each pass.

CPU-based video transcode performance is as good as it can get from AMD here given the two-module, four-core configuration of these Trinity APUs. Intel's Core i3 3220 is a bit slower than the A10-5800K. We're switching to a much newer version of the x264 HD benchmark (5.0.1) for our new test suite. Some early results are below if you want to see how things change under the new test:

x264 HD 5.0.1 Benchmark

| Processor | 1st Pass | 2nd Pass |
|---|---|---|
| AMD A10-5800K (3.8GHz) | 33.5 fps | 7.41 fps |
| AMD A8-5600K (3.6GHz) | 32.2 fps | 7.12 fps |
| Intel Core i3 3220 (3.3GHz) | 35.2 fps | 6.61 fps |
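To put the x264 HD 5.0.1 figures in relative terms, here is a minimal Python sketch that expresses each AMD result as a percentage difference against the Core i3 3220. The frame rates are copied from the results above; the `RESULTS` dictionary and the `percent_vs_baseline` helper are illustrative names, not part of the benchmark itself.

```python
# x264 HD 5.0.1 results copied from the table above, in frames per second.
RESULTS = {
    "AMD A10-5800K": {"1st pass": 33.5, "2nd pass": 7.41},
    "AMD A8-5600K": {"1st pass": 32.2, "2nd pass": 7.12},
    "Intel Core i3 3220": {"1st pass": 35.2, "2nd pass": 6.61},
}


def percent_vs_baseline(results, baseline):
    """Return each chip's per-pass frame rate delta, in percent, against the baseline chip."""
    base = results[baseline]
    deltas = {}
    for cpu, passes in results.items():
        if cpu == baseline:
            continue  # the baseline is not compared against itself
        deltas[cpu] = {
            name: (fps / base[name] - 1.0) * 100.0 for name, fps in passes.items()
        }
    return deltas


if __name__ == "__main__":
    for cpu, passes in percent_vs_baseline(RESULTS, "Intel Core i3 3220").items():
        for name, delta in passes.items():
            print(f"{cpu}, {name}: {delta:+.1f}% vs Core i3 3220")
```

On these numbers the A10-5800K trails the Core i3 3220 by roughly 5% in the first pass and leads it by roughly 12% in the second.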

178 Comments


Aone - Wednesday, October 3, 2012 - link

Who in his right mind buys a high-end discrete GPU for a cheap CPU or APU? Plus, those who buy cheap CPUs usually don't have money for a high-end discrete GPU.

Gaugamela - Wednesday, October 3, 2012 - link

Here are benchmarks that test the importance of faster RAM in these APUs. The difference in performance is astonishing. http://hexus.net/tech/reviews/cpu/46073-amd-a10-58...

By overclocking and using 2133MHz RAM, the A10-5800 can get approximately a 30% increase in 3DMark and some games.

These Trinity APUs seem to be really interesting to tinker with.

creed3020 - Wednesday, October 3, 2012 - link

Thanks so much for posting that. I've been looking for this exact testing of Trinity. AT did this previously with Llano but forgot this crucial test with Trinity.

It really helps system builders to set expectations for performance if a client doesn't want to pay for faster memory, or if they do want more performance we can quantify how much of an improvement faster memory will provide.

mikato - Wednesday, October 3, 2012 - link

Holy moly

vozmem - Wednesday, October 3, 2012 - link

Keep encouraging AMD, guys.

rarson - Wednesday, October 3, 2012 - link

Why in the world did you not mention which video card you were using on this page? I see that it's mentioned in the test bed, but why the heck do I have to go back and check that when you could have easily mentioned it on the discrete test page?

Also, why are you using a 5870 with this? Who the hell is going to pair a new A8 or A10 Trinity with a 5870? That's completely illogical. Couldn't you have tried something newer, perhaps something within the same architecture? Extremely puzzling.

etamin - Wednesday, October 3, 2012 - link

And why was an FX-8150 thrown into the DISCRETE PROCESSOR GRAPHICS benchmark?

Hardcore69 - Wednesday, October 3, 2012 - link

HA! Glad I went with an i3 3220 for this office box. Look at the power consumption at load, look at the single threaded benchmarks, even look at the multi threaded benchmarks. AMD is crap. It still hasn't caught up. And there are very few upgrade options compared to Intel. If you want to play games, a dedicated GPU is still vastly better. For other basic tasks, FAIL.

rarson - Wednesday, October 3, 2012 - link

You paid more money.

They're called trade-offs. That's reality.

Nil Einne - Friday, October 5, 2012 - link

Has anyone come across real world power consumption figures for either the A8-5500 vs A8-5600K or the A10-5700 vs A10-5800K? These have different TDPs, 65W vs 100W, and slightly different clocks. But I'm wondering whether the K ones are really that bad in general or it's partially that they wanted more headroom, since the K ones are to some extent designed to be overclocked. Of course, the different ratings mean that you may get unlucky and get a fairly high consumption K processor because of binning, but it still may be relevant. I'm somewhat out of date and not familiar with how turbo works, but I'm guessing the higher binning means it will stay at turbo for longer, so a proper test should also try limiting the K to be the same as the non-K just to see if that's the primary reason for any differences. (Ideally also limit the frequencies.)

Most reviews, including this one, seem to be of the Ks, I presume because that's what AMD sent out for testing.

Cheers