Samsung SSD 840 (250GB) Review

by Kristian Vättö on October 8, 2012 12:14 PM EST

Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

Lower Endurance—Why?

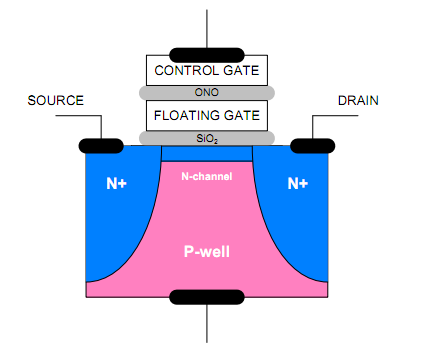

Below we have a diagram of a MOSFET (Metal Oxide Semiconductor Field Effect Transistor). When programming a cell, voltage is placed on the control gate, which forms an electric field that allows electrons to tunnel through the silicon oxide barrier to the floating gate. Once the tunneling process is complete, voltage to the control gate is dropped back to 0V and the silicon oxide acts as an insulator. Erasing a cell is done in a similar way but this time the voltage is placed on the silicon substrate (P-well in the picture), which again creates an electric field that allows the electrons to tunnel through the silicon oxide.

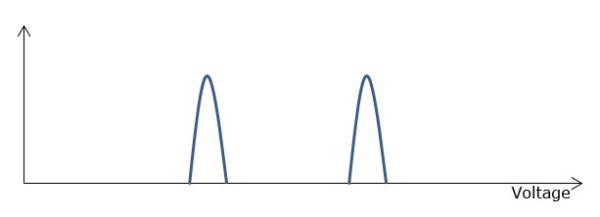

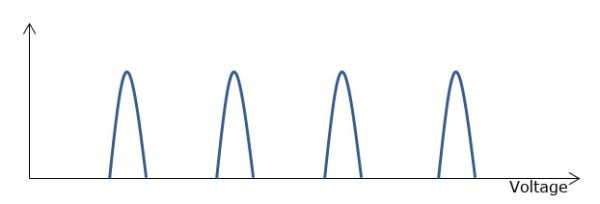

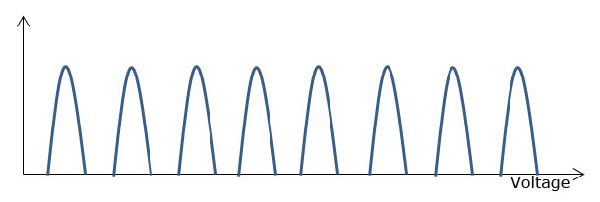

While the MOSFET is exactly the same for SLC, MLC and TLC, the difference lies in how the cell is programmed. With SLC, the cell is either programmed or it's not, because it can only be "0" or "1". As MLC stores two bits in one cell, its value can be "00", "01", "10" or "11", which means there are four different voltage states. TLC ups the voltage states to eight, as there are eight different combinations of "0" and "1" when three bits are stored per cell. Below are diagrams showing the graphical version of the voltage states:

SLC

MLC

TLC
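The state counts described above follow directly from the number of bits stored per cell. As a minimal sketch (the function name `voltage_states` is made up for illustration and is not anything from a real controller), the possible bit patterns can be enumerated like this:

```python
from itertools import product
from typing import List


def voltage_states(bits_per_cell: int) -> List[str]:
    """Return every bit pattern a cell storing `bits_per_cell` bits can hold.

    Each pattern needs its own distinguishable voltage state.
    """
    return ["".join(bits) for bits in product("01", repeat=bits_per_cell)]


# SLC, MLC and TLC store 1, 2 and 3 bits per cell respectively.
for name, bits in (("SLC", 1), ("MLC", 2), ("TLC", 3)):
    states = voltage_states(bits)
    print(f"{name}: {len(states)} states -> {states}")
```

Each extra bit doubles the number of states the cell has to distinguish, which is why TLC ends up with eight.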

The above diagrams show the voltages for brand new NAND: everything looks nice and neat, and the only difference is that TLC has more states. However, the tunneling process that happens every time the cell is programmed or erased wears the silicon oxide out. The oxide is only about 10nm thick and it gets thinner every time a smaller process node is introduced, which is why endurance gets worse as we move to smaller nodes. When the silicon oxide wears out, atomic bonds break and some electrons may get trapped inside the oxide during the tunneling process. That builds up negative charge in the silicon oxide, which in turn negates some of the control gate voltage when the cell is programmed.

The wear results in longer erase times because higher voltages need to be applied for longer before the right voltage is found. Remember, most controllers can't adapt to the changes in program and erase voltages that come from trapped electrons, cell leakage, and other sources (some can; more on this on the next page). If the voltage that's supposed to work doesn't, the controller essentially has to guess, trying different voltages until it finds one that works. That takes time and puts even more stress on the silicon oxide.
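The trial-and-error behavior described above can be illustrated with a toy model. This is only a sketch under loud assumptions: the function `erase_attempts`, the fixed step size, and every voltage value are hypothetical, and real controllers and NAND dies do not necessarily work this way.

```python
from typing import Optional


def erase_attempts(required_mv: int, start_mv: int, step_mv: int,
                   max_attempts: int) -> Optional[int]:
    """Count the tries needed before the applied voltage is high enough.

    `required_mv` stands in for the voltage the (possibly worn) block
    needs. Returns None if no try within `max_attempts` succeeds, i.e.
    the block would be a candidate for retirement.
    """
    applied_mv = start_mv
    for attempt in range(1, max_attempts + 1):
        if applied_mv >= required_mv:
            return attempt
        applied_mv += step_mv  # guess again with a higher voltage
    return None


# Hypothetical values in millivolts: trapped charge raises the
# voltage a block needs as it wears.
fresh = erase_attempts(required_mv=15_000, start_mv=15_000, step_mv=500, max_attempts=8)
worn = erase_attempts(required_mv=17_000, start_mv=15_000, step_mv=500, max_attempts=8)
dead = erase_attempts(required_mv=20_000, start_mv=15_000, step_mv=500, max_attempts=8)
print(fresh, worn, dead)
```

In this model a fresh block succeeds on the first try, a worn block needs several, and a badly worn block never succeeds within the allowed number of attempts. Every extra attempt costs time and, per the text above, adds stress to the oxide.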

The difference between SLC, MLC, and TLC is pretty simple: SLC has the fewest voltage states and hence can tolerate bigger shifts in voltage. With TLC, there are eight different states and hence a lot less voltage room to play with. While the exact voltages used are unknown, you basically have to divide the same voltage range into eight sections instead of the four or two shown in the graphs above, which means each state's voltage has less room to drift. A NAND block has to be retired when erasing it starts to take too long, which impacts performance; eventually the block simply becomes nonfunctional, e.g. when the voltage states for 010 and 011 begin to overlap.
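To put rough numbers on the "less voltage room" argument, here is a small sketch. Since the exact voltages are unknown, the total window (`WINDOW_MV`) and the drift (`SHIFT_MV`) below are purely hypothetical values chosen for illustration, and the even split into equal sections is a simplifying assumption.

```python
def state_width_mv(window_mv: int, bits_per_cell: int) -> float:
    """Split one voltage window evenly across all 2**bits states."""
    return window_mv / (2 ** bits_per_cell)


WINDOW_MV = 6000  # hypothetical total voltage window, in millivolts
SHIFT_MV = 500    # hypothetical voltage drift caused by wear

for name, bits in (("SLC", 1), ("MLC", 2), ("TLC", 3)):
    width = state_width_mv(WINDOW_MV, bits)
    share = SHIFT_MV / width
    print(f"{name}: {width:.0f} mV per state, "
          f"a {SHIFT_MV} mV shift uses {share:.0%} of it")
```

The same drift eats a much larger share of a TLC state than of an SLC state, which is the intuition behind TLC's lower endurance rating.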

There is also more and more ECC needed as the NAND wears out because the possibility for errors is greater. With TLC, that's once again a bigger problem because there are three bits to correct instead of one or two. While today's ECC engines are fairly powerful, at some point it will be easier to just retire the block than to keep correcting errors.

86 Comments

xdrol - Monday, October 8, 2012 - link

You sir need to learn how SSDs work. Static data is not static on the flash chip - the controller shuffles it around, exactly because of wear levelling.

name99 - Tuesday, October 9, 2012 - link

"I think Kristian should have made this all more clear because too many people don't bother to actually read stuff and just look at charts."

Kristian is not the problem.

There is a bizarre fraction of the world of tech "enthusiasts" who are convinced that every change in the world is a conspiracy to screw them over.

These people have been obsessing about the supposed fragility of flash memory from day one. We have YEARS of real world experience with these devices but it means nothing to them. We haven't been screwed yet, but with TLC it's coming, I tell you.

The same people spent years insisting that non-replaceable batteries were a disaster waiting to happen.

Fifteen years ago they were whining about the iMac not including a floppy drive, for the past few years they have been whining about recent computers not including an optical drive.

A few weeks ago we saw the exact same thing regarding Apple's new Lightning connector.

The thing you have to remember about these people is

- evidence means NOTHING. You can tell them all the figures you want, about .1% failure rates, or minuscule return rates or whatever. None of that counts against their gut feeling that this won't work, or even better an anecdote that some guy somewhere had a problem.

- they have NO sense of history. Even if they lived through these transitions before, they cannot see how changes in 2000 are relevant to changes in 2012.

- they will NEVER admit that they were wrong. The best you can possibly get out of them is a grudging acceptance that, yeah, Apple was right to get rid of floppy disks, but they did it too soon.

In other words these are fools that are best ignored. They have zero knowledge of history, zero knowledge of the market, zero knowledge of the technology --- and the grandiose opinions that come from not actually knowing any pesky details or facts.

piiman - Tuesday, February 19, 2013 - link

Then stick with Intel, not because they last longer but because they have a great warranty (5 years). My drive went bad at about 3.5 years and Intel replaced it no questions asked and did it very quickly. I sent it in and had a new one 2 days after they received my old one. Great service!

GTRagnarok - Monday, October 8, 2012 - link

This is assuming a very exaggerated amplification of 10x.

Kristian Vättö - Monday, October 8, 2012 - link

Keep in mind that it's an estimation based on the example numbers. 10x write amplification is fairly high for consumer workloads; most usually see something between 1x and 3x (though it gets a bit bigger when taking wear leveling efficiency into account). Either way, we played safe and used 10x.

Furthermore, the reported P/E cycle counts are the minimums. You have to be conservative when doing endurance ratings because every single die you sell must be able to achieve that. Hence it's completely possible (and even likely) that TLC can do more than 1,000 P/E cycles. It may be 1,500 or 3,000, I don't know; but 1,000 is the minimum. There is a Samsung 830 at XtremeSystems (had to remove the link as our system thought it was spam, LOL) that has lasted for more than 3,000TiB of writes, which would translate to over 10,000 P/E cycles (supposedly, that NAND is rated at 3,000 cycles).

Of course, as mentioned at the end of the review, the 840 is something you would recommend to a light user (think about your parents or grandparents for instance), whereas the 840 Pro is the drive for heavier users. Those users are not writing a lot (heck, they may not use their system for days!), hence the endurance is not an issue.

A5 - Monday, October 8, 2012 - link

Ah. I didn't know the 10x WA number was exceedingly conservative. Nevermind, then.

TheinsanegamerN - Friday, July 5, 2013 - link

3.5 years is considering you are writing 36.5 GB of data a day. If the computer it is sitting in is mostly used for online work or document editing, you'll get far more. The laptop would probably die long before the SSD did.

Also, this only applies to the TLC SSDs. MLC SSDs last 3 times longer, so the 840 Pro would be better for a computer kept longer than 3 years.

Vepsa - Monday, October 8, 2012 - link

Might just be able to convince the wife that this is the way to go for her computer and my computer.

CaedenV - Monday, October 8, 2012 - link

That is how I did it. My wife's old 80GB system drive died a bit over a year ago, and it was one of those issues of $75 for a decent HDD, or $100 for an SSD that would be 'big enough' for her as a system drive (60GB at the time). So I spent the extra $25, and it made her ~5 year old Core2Duo machine faster (for day-to-day workloads) than my brand new i7 monster that I had just built (but was still using a traditional HDD at the time).

I eventually got so frustrated by the performance difference that I ended up finally getting one for myself, and then after my birthday came I spent my fun money on a 2nd one for RAID0. It did not make a huge performance increase (I mean it was faster in benchmarks, but doubling the speed of instant is still instant lol), but it did allow me to have enough space to load all my programs on the SSD instead of being divided between the SSD and HDD.

AndersLund - Sunday, November 25, 2012 - link

Note that setting up a RAID with your SSDs might prevent the OS from recognizing them as SSDs, in which case it will not send TRIM commands to the disks. My first (and current) gamer system consists of two Intel 80 GB SSDs in a RAID0 setup, but the OS (and Intel's toolbox) does not recognize them as SSDs.